- The paper introduces a novel lightweight framework that standardizes and scales data pipelines for large action models.

- It implements advanced rule-based filtering and real-time verification to ensure high-quality processing of diverse agent trajectory data.

- Empirical results demonstrate significant performance boosts and robustness on benchmarks like NexusRaven and CRM Agent Bench.

ActionStudio: Efficient Data and Training Infrastructure for Large Action Models

Motivation and Challenges

The proliferation of large action models (LAMs) has accelerated the capabilities of autonomous agents across highly diverse environments—ranging from productivity tools to industrial automation. However, model development is impeded by complex, noisy, and heterogeneous agentic trajectory data, as well as a lack of standardized, scalable infrastructure for data processing and training. Existing frameworks are not suited to agent-specific requirements, often relying on rigid templates or non-generalizable schemas and lacking public pipelines for data conversion and quality control. Furthermore, popular LLM training tools require substantial customization to accommodate agentic datasets, hindering reproducibility and limiting cross-task transfer.

ActionStudio Framework Overview

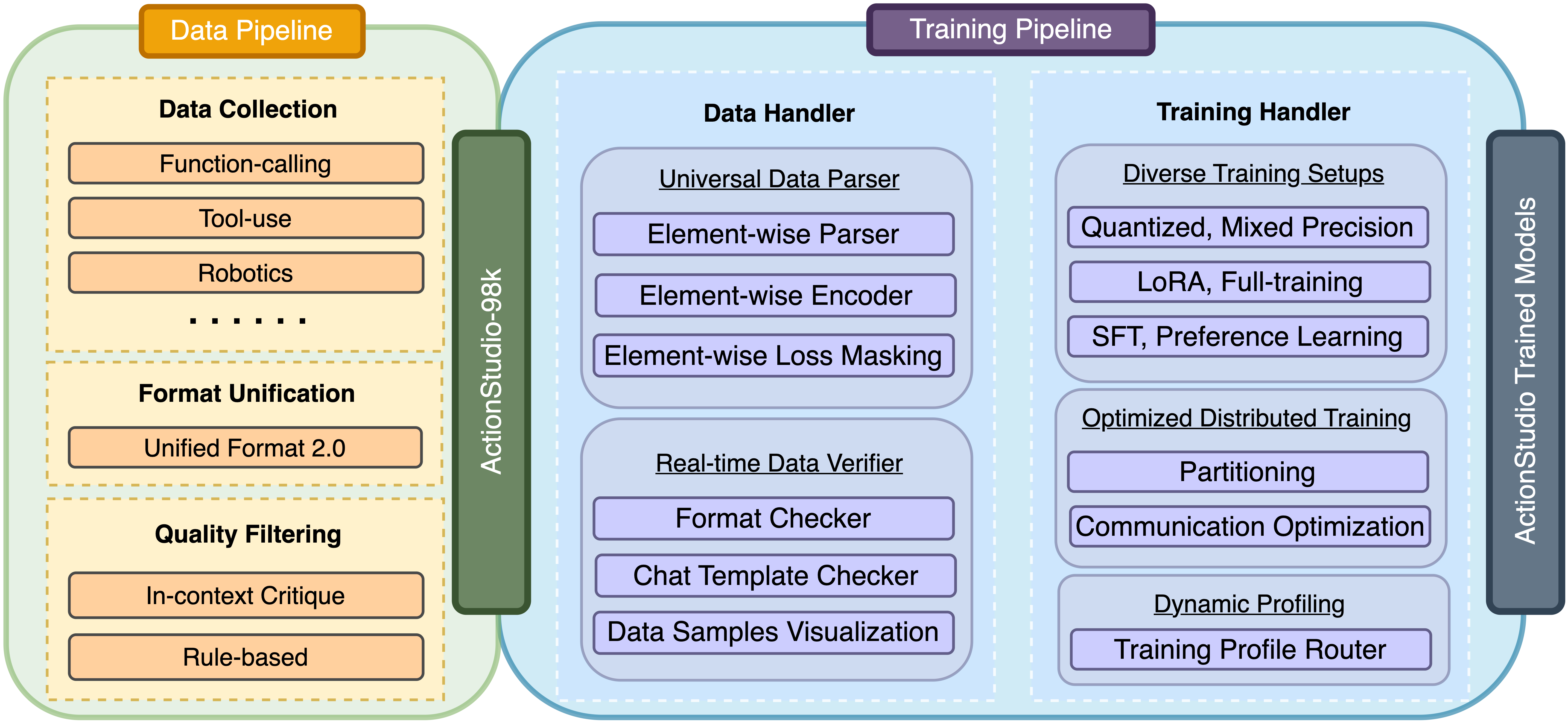

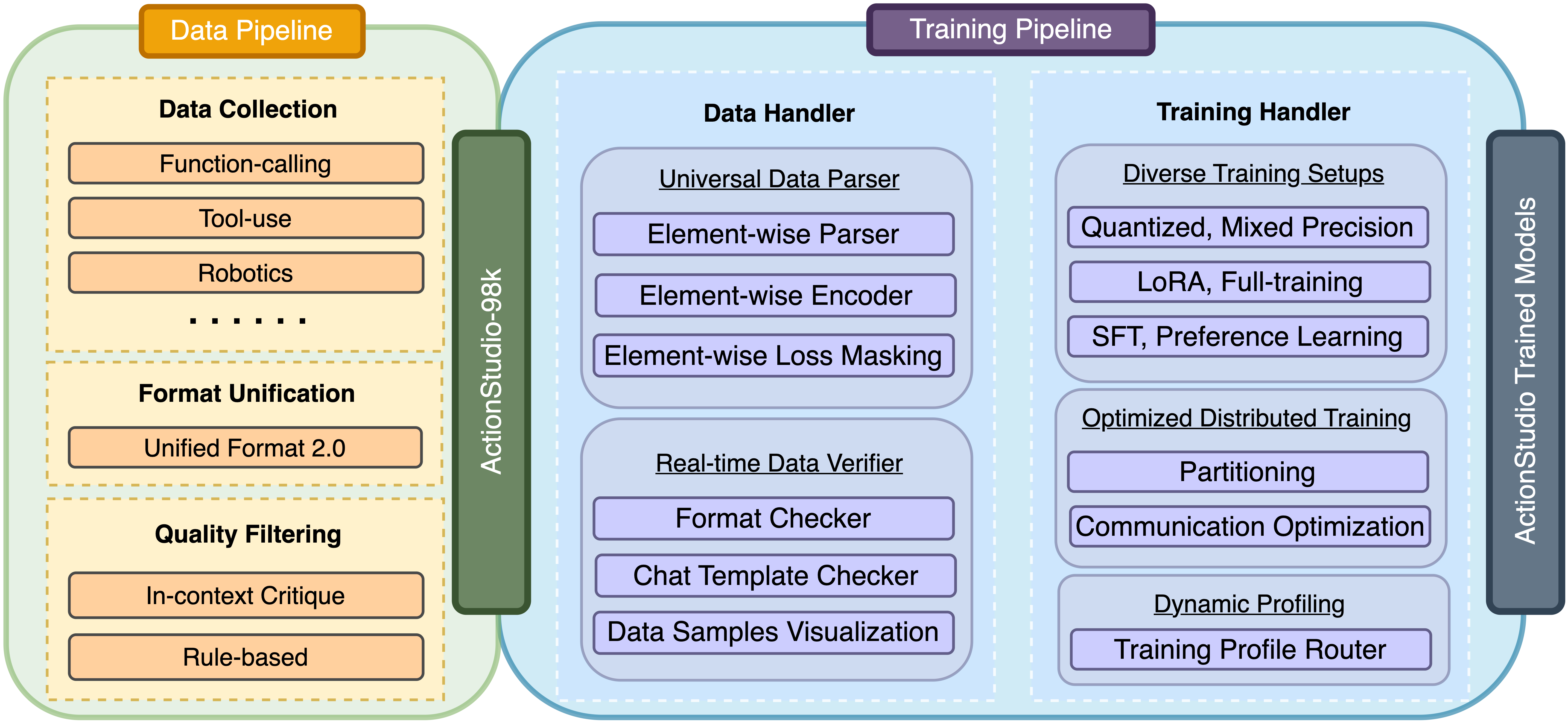

ActionStudio is presented as a lightweight, modular framework with end-to-end pipelines for standardized data processing and scalable training of large action models. The framework addresses both data and training challenges by introducing advanced filtering methodologies, real-time verification for data integrity, and extensible distributed training support.

Figure 1: Framework of ActionStudio outlining modular data and training pipelines, unified format processing, filtering, verification, and distributed training infrastructure.

Data Pipeline

- Collection: Aggregates high-quality trajectories across a broad range of domains, including function calling, tool use, conversational and robotic settings.

- Unified Format 2.0: Advances prior work by introducing a natively chat-based schema compatible with modern LLM APIs and HuggingFace-style templates. This structure modularizes agent trajectories, promotes semantic grounding of tasks and tool usage, and streamlines conversion to training-ready formats.

- Critique-and-Filter Pipeline: Incorporates in-context LLM critique augmented by human-aligned exemplars to achieve fine-grained filtering of trajectory quality. This mitigates common pitfalls of commercial evaluators such as overconfidence and median bias.

- Rule-Based Filtering and Real-Time Verification: Automated rule checks catch systematic errors (e.g., missed function calls, malformed arguments), while real-time verification ensures structural integrity and facilitates visualization at each preprocessing stage.
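To make the chat-based format and the rule checks concrete, here is a minimal sketch. The field names (`tools`, `messages`, `tool_calls`, `arguments`) and the function `check_trajectory` are assumptions chosen for illustration; they are not the actual Unified Format 2.0 schema or ActionStudio's filtering code.

```python
import json

# Hypothetical chat-style trajectory; the layout is illustrative only.
trajectory = {
    "tools": [
        {
            "name": "get_weather",
            "parameters": {"city": {"type": "string", "required": True}},
        }
    ],
    "messages": [
        {"role": "user", "content": "What is the weather in Paris?"},
        {
            "role": "assistant",
            "tool_calls": [{"name": "get_weather", "arguments": '{"city": "Paris"}'}],
        },
        {"role": "tool", "name": "get_weather", "content": '{"temp_c": 18}'},
        {"role": "assistant", "content": "It is 18 degrees Celsius in Paris."},
    ],
}


def check_trajectory(traj):
    """Return a list of rule violations; an empty list means the trajectory passes."""
    errors = []
    tools = {t["name"]: t["parameters"] for t in traj.get("tools", [])}
    messages = traj.get("messages", [])
    for i, msg in enumerate(messages):
        for call in msg.get("tool_calls", []):
            name = call.get("name")
            if name not in tools:
                errors.append(f"message {i}: unknown tool {name!r}")
                continue
            try:
                args = json.loads(call.get("arguments") or "")
            except (TypeError, json.JSONDecodeError):
                errors.append(f"message {i}: arguments are not valid JSON")
                continue
            if not isinstance(args, dict):
                errors.append(f"message {i}: arguments must be a JSON object")
                continue
            # Malformed arguments: required parameters missing or unknown ones present.
            for param, spec in tools[name].items():
                if spec.get("required") and param not in args:
                    errors.append(f"message {i}: missing required argument {param!r}")
            for param in args:
                if param not in tools[name]:
                    errors.append(f"message {i}: unexpected argument {param!r}")
        # Missed function call: a tool result must follow a call or another tool result.
        if msg.get("role") == "tool":
            prev = messages[i - 1] if i > 0 else {}
            if not prev.get("tool_calls") and prev.get("role") != "tool":
                errors.append(f"message {i}: tool result without a preceding call")
    return errors


print(check_trajectory(trajectory))
```

A trajectory would be kept only when the returned list is empty; in a real pipeline the violation messages could also feed the visualization step described above.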

Training Pipeline

- Universal Data Handler: Supports element-wise parsing and encoding of highly diverse conversation histories—single/multi-turn, variable roles, step-wise reasoning—facilitating granular loss masking and flexible training objectives.

- Distributed Training: Enables parameter-efficient fine-tuning (LoRA, quantization, mixed-precision) and full-model training with near-linear scaling across multi-node clusters. Optimization of inter-GPU communication, sharding, and parallelization strategies minimizes throughput bottlenecks.

- Dynamic Profiling: Auto-tunes training for varying architectures and model sizes, adapting resource allocation at runtime to maximize performance and efficiency.

- Real-Time Data Verifier: Enforces format and template compliance, dynamically flags errors, and provides before-after visualization to guarantee reliable preprocessing and seamless transition to model training.
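The element-wise parsing and loss masking described for the data handler can be sketched as follows. This is an illustration under stated assumptions, not ActionStudio's implementation: `toy_tokenize` is a stand-in for a real tokenizer, `encode_with_mask` is a hypothetical helper, and the choice to train only on assistant turns is one possible objective among several.

```python
IGNORE_INDEX = -100  # default ignore_index of PyTorch's CrossEntropyLoss


def toy_tokenize(text):
    """Stand-in tokenizer: one non-negative integer id per whitespace-separated word."""
    return [sum(ord(c) for c in word) % 1000 for word in text.split()]


def encode_with_mask(messages, train_roles=("assistant",)):
    """Encode each message separately and mask labels for roles outside train_roles."""
    input_ids, labels = [], []
    for msg in messages:
        # Each message is encoded on its own, prefixed by a simple role tag.
        ids = toy_tokenize(f"<{msg['role']}> {msg['content']}")
        input_ids.extend(ids)
        if msg["role"] in train_roles:
            labels.extend(ids)  # these tokens contribute to the loss
        else:
            labels.extend([IGNORE_INDEX] * len(ids))  # these tokens are skipped
    return input_ids, labels


msgs = [
    {"role": "user", "content": "book a flight"},
    {"role": "assistant", "content": "calling search_flights"},
]
ids, labels = encode_with_mask(msgs)
print(ids)
print(labels)
```

Because every message is encoded individually, changing `train_roles` (for example to include reasoning steps stored under a separate role) changes the training objective without touching the data itself.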

Experimental Results

Evaluations are performed on NexusRaven (function calling benchmark) and CRM Agent Bench (real-world enterprise scenarios), comparing ActionStudio-trained LAMs against commercial and open-source baselines. The results show strong quantitative improvements in both function-calling precision and practical CRM agent tasks.

- NexusRaven: ActionStudio-Mixtral-8x22b-inst-exp attains an F1-score of 0.969, outperforming prominent commercial offerings (GPT-4, GPT-4o) and exceeding the performance of large-scale open-source models (Llama-4, DeepSeek).

- CRM Agent Bench: ActionStudio-Llama-3.3-70b-inst-exp achieves an overall accuracy of 0.87, which is 0.03 above its instruct baseline and also surpasses strong agentic models (o1-preview, AgentOhana) and commercial leaders.

- Fine-tuning Gains: Models fine-tuned under ActionStudio consistently show large improvements over untuned or instruction-only baselines, demonstrating the impact of high-quality preprocessing and flexible training.
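For readers unfamiliar with how an F1-score applies to function calling, the sketch below computes a micro-averaged F1 over exact-match calls. It is an assumption about one reasonable scoring scheme; the benchmark's own scorer may match calls differently, and `call_f1` and the sample calls are hypothetical.

```python
def call_f1(predicted, gold):
    """Micro-averaged F1 over (function name, arguments) pairs with exact matching."""
    tp = fp = fn = 0
    for pred_calls, gold_calls in zip(predicted, gold):
        pred_set, gold_set = set(pred_calls), set(gold_calls)
        tp += len(pred_set & gold_set)  # calls that match exactly
        fp += len(pred_set - gold_set)  # predicted calls with no gold counterpart
        fn += len(gold_set - pred_set)  # gold calls the model did not produce
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical examples: one list of calls per test query.
gold = [
    [("get_weather", '{"city": "Paris"}')],
    [("send_email", '{"to": "a@b.c"}')],
]
pred = [
    [("get_weather", '{"city": "Paris"}')],
    [("send_email", '{"to": "x@y.z"}')],
]
print(call_f1(pred, gold))
```

In this toy case one of two predicted calls is correct and one of two gold calls is recovered, so precision and recall are both 0.5.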

Training Efficiency and Scalability

ActionStudio demonstrates up to 9x higher throughput versus competing frameworks (AgentOhana, Lumos), with robust support for LoRA, quantized, and full-model training across single and multi-node settings. Notably:

- Quantized LoRA: Up to 79k tokens/sec for Llama-3.1-8B, 2.8x faster than AgentOhana, 9.4x faster than Lumos.

- Full-Model Tuning: ActionStudio is the only framework that completes all LoRA configurations without out-of-memory (OOM) errors, and it supports full-model updates with linear speedup as nodes are added.

- Long-Context: Throughput remains constant or improves for extreme sequence lengths (Mixtral-8x22b, 32k tokens), validating ActionStudio's memory scheduling and scalability.

- Multi-Pod Scaling: Throughput rises proportionally with pod count, facilitating large-scale training on industrial hardware.
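The speedup figures above can be unpacked with a little arithmetic. The sketch assumes that "Nx faster" means a ratio of tokens/sec under the same setting, which the summary does not state explicitly; the implied baseline numbers are therefore derived estimates, not reported results. `scaling_efficiency` is a hypothetical helper showing how linear scaling could be checked.

```python
def implied_baseline(throughput, speedup):
    """Baseline throughput implied by a reported throughput and a speedup ratio."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return throughput / speedup


def scaling_efficiency(throughput_n, throughput_1, n):
    """Fraction of ideal linear scaling achieved on n nodes (1.0 means linear)."""
    return throughput_n / (n * throughput_1)


actionstudio_tps = 79_000  # reported peak in tokens/sec, quantized LoRA on Llama-3.1-8B
print(round(implied_baseline(actionstudio_tps, 2.8)))  # implied AgentOhana figure
print(round(implied_baseline(actionstudio_tps, 9.4)))  # implied Lumos figure
```

Under that assumption the competing frameworks would sit at roughly 28k and 8.4k tokens/sec. A scaling efficiency close to 1.0 when moving from one node to n nodes is what "linear speedup" amounts to.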

Data Quality and Ablation Analysis

The critique-and-filter pipeline is validated by independent human annotation, achieving 85% agreement with expert judgments (15% higher than LLM-based agent data baselines). Ablation studies show ActionStudio preprocessing lifts function-call accuracy by 10 points and free-text accuracy by 22 points relative to untuned/AgentOhana pipelines, highlighting the importance of robust quality control in agentic data preparation.
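An agreement figure like the one above is typically a simple match rate between the pipeline's keep/drop decisions and human labels. The sketch below shows that calculation on made-up labels; `agreement_rate` and the sample data are hypothetical, and the paper's annotation protocol may differ.

```python
def agreement_rate(model_labels, human_labels):
    """Share of items on which the automated critique and the human annotator agree."""
    if len(model_labels) != len(human_labels):
        raise ValueError("label lists must have the same length")
    if not model_labels:
        raise ValueError("at least one labeled item is required")
    matches = sum(m == h for m, h in zip(model_labels, human_labels))
    return matches / len(model_labels)


# Hypothetical decisions for 20 trajectories: True = keep, False = drop.
human = [True] * 12 + [False] * 8
model = [True] * 11 + [False] * 7 + [True] * 2  # disagrees on 3 of the 20 items
print(agreement_rate(model, human))
```

Raw agreement does not correct for chance; a chance-corrected statistic such as Cohen's kappa could be reported alongside it when the keep/drop classes are imbalanced.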

Implications and Future Directions

ActionStudio substantially reduces infrastructural and reproducibility barriers in agentic model research and deployment. The Unified Format 2.0 standard, open-source pipelines, and high-throughput distributed training facilitate plug-and-play integration across tasks and environments. The release of actionstudio-98k, covering 30k APIs across 300 domains, will accelerate agentic research, benchmarking, and cross-domain transfer. The framework's modularity paves the way for future expansion into multimodal and embodied domains, further broadening the applicability of LAMs in real-world settings.

From a theoretical perspective, the fine-grained critique-and-filter pipeline and modular format abstractions are likely to foster more robust alignment and generalization across agentic applications. Practically, ActionStudio's efficiency and scalability will enable rapid prototyping and evaluation for both academic and enterprise-grade autonomous agents.

Conclusion

ActionStudio provides a comprehensive infrastructure for the development and deployment of large action models, integrating advanced data pipelines with scalable training workflows. Strong empirical performance is demonstrated across both function-calling and realistic agent benchmarks, with pronounced gains attributed to robust preprocessing, critique-and-filter, unified formatting, and dynamic distributed training. The framework's extensibility and open-source approach lay a foundation for continued innovation and cross-domain generalization in agentic AI systems (arXiv:2503.22673).