RADIO-ViPE: Open-Vocabulary SLAM That Speaks Your Language

This lightning talk introduces RADIO-ViPE, a breakthrough system that performs real-time semantic mapping from unconstrained monocular video without requiring depth sensors or camera calibration. We explore how it tightly couples geometric, visual, and language representations in a unified optimization framework, handles dynamic environments through adaptive robust kernels, and achieves state-of-the-art open-vocabulary 3D segmentation while remaining deployable in the wild.Script

Most robots see geometry but not meaning. RADIO-ViPE changes that by building semantic maps from raw video that answer language queries in real time, no depth sensor or calibration required.

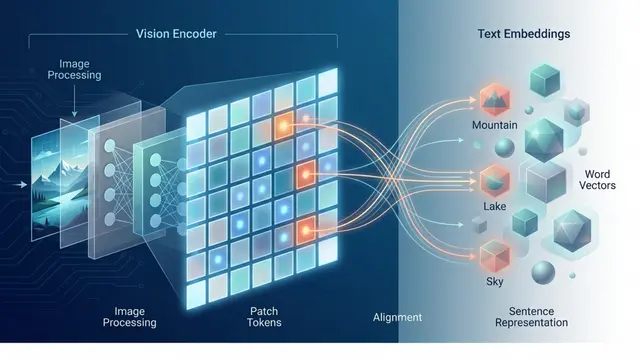

The core challenge is fusing three worlds: camera geometry, pixel appearance, and what foundation models understand about language. Previous systems treat these as separate stages, but RADIO-ViPE optimizes all three together in a single tightly coupled graph.

The system bootstraps camera parameters using GeoCalib, extracts 256-dimensional RADIO embeddings compressed via PCA, predicts monocular depth, then pulls everything into a factor graph where semantic similarity edges connect keyframes not just by overlap but by visual meaning.

Dynamic objects usually break SLAM, but RADIO-ViPE introduces temporally consistent adaptive robust kernels. By analyzing how stable embeddings are over time, the system automatically shifts from quadratic loss on static walls to Cauchy loss on moving people, suppressing outliers without hand-coded masks.

On Replica, RADIO-ViPE achieves top-3 mean intersection over union among all approaches while running online with zero calibration. When compared to offline methods that use ground truth depth and pose, it drops less than 2 percent, proving monocular self-supervision can rival privileged pipelines.

RADIO-ViPE makes language-driven robot perception deployable in the wild, turning any camera into a semantic sensor. To see the full system in action and create your own explainer videos, visit EmergentMind.com.