Vision-Language-Action Models Are Resistant to Forgetting in Continual Learning

This presentation examines research on how large-scale pretrained Vision-Language-Action (VLA) models handle sequential task learning in robotics. Smaller policies suffer catastrophic forgetting. These pretrained models, by contrast, are highly resistant to it, losing almost no performance on prior tasks with minimal experience replay. The talk covers the quantitative evidence from the LIBERO benchmarks, shows how pretraining changes the stability-plasticity trade-off, and examines the internal mechanisms that allow seemingly forgotten knowledge to be recovered quickly. These findings challenge conventional wisdom in continual learning and point toward a simpler, more efficient paradigm for lifelong robotic skill acquisition.

Script

Large language models are known to memorize their training data. Robotic AI faces the opposite risk: forgetting. When robots learn new tasks sequentially, they tend to catastrophically forget old ones, except when they don't. This paper shows that pretrained Vision-Language-Action models defy that expectation.

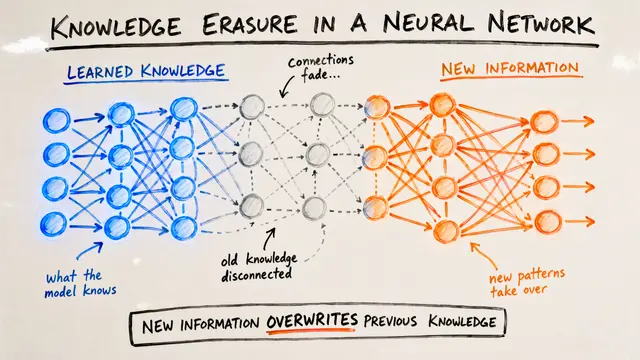

When robots learn manipulation tasks one after another, smaller models trained from scratch suffer severe forgetting. Even when 20 percent of the original data is replayed during training, they still forget half of what they learned. This instability has long been the signature challenge of continual learning.
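Experience replay, the mitigation mentioned here, can be illustrated with a minimal sketch. It assumes the simplest scheme: keep a random fraction of each past task's demonstrations and shuffle them into the current task's training data. The helper names `build_replay_buffer` and `mixed_training_set` are hypothetical, and the paper's actual sampling scheme may differ.

```python
import random
from typing import Dict, List, Sequence, TypeVar

T = TypeVar("T")


def build_replay_buffer(
    past_tasks: Dict[str, Sequence[T]], fraction: float, seed: int = 0
) -> List[T]:
    """Keep a random `fraction` of each past task's samples (hypothetical helper)."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be in [0, 1]")
    rng = random.Random(seed)
    buffer: List[T] = []
    for name in sorted(past_tasks):  # sorted for reproducible ordering
        samples = list(past_tasks[name])
        if not samples or fraction == 0.0:
            continue
        # Keep at least one sample per task whenever replay is enabled.
        k = max(1, round(fraction * len(samples)))
        buffer.extend(rng.sample(samples, k))
    return buffer


def mixed_training_set(current: Sequence[T], buffer: Sequence[T], seed: int = 0) -> List[T]:
    """Concatenate the current task's data with replayed data, then shuffle."""
    rng = random.Random(seed)
    data = list(current) + list(buffer)
    rng.shuffle(data)
    return data


# Two hypothetical past tasks with 100 samples each.
past = {"task_a": list(range(100)), "task_b": list(range(100, 200))}
small = build_replay_buffer(past, 0.02)  # 2 percent: 2 samples per task
large = build_replay_buffer(past, 0.20)  # 20 percent: 20 samples per task
print(len(small), len(large))
```

Under these assumptions a 2 percent buffer keeps 4 of the 200 past samples and a 20 percent buffer keeps 40. The only knob is `fraction`, which is the quantity the talk compares across small and pretrained models.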

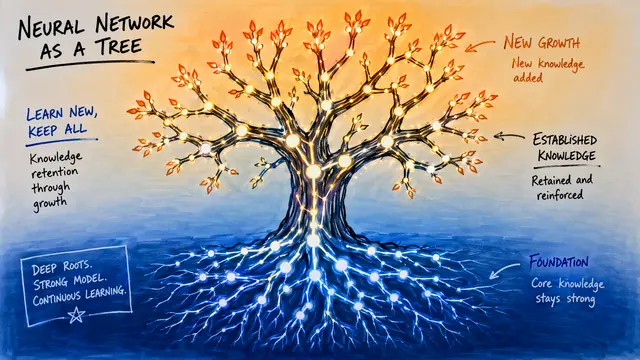

Pretrained Vision-Language-Action models operate in a fundamentally different regime.

On the LIBERO benchmarks, pretrained VLAs retain nearly perfect performance on earlier tasks using replay buffers as small as 2 percent of the dataset. In some cases, learning new tasks actually improved performance on old ones, a phenomenon known as positive backward transfer. The models no longer face the brutal choice between remembering the past and adapting to the future.
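Retention and "old tasks getting better" can be quantified with two metrics that are common in continual learning: backward transfer and average forgetting. Both are computed from a matrix `R`, where `R[i][j]` is the success rate on task `j` after training on task `i`. The sketch below assumes these standard definitions. The paper may report its own variant, and the example matrix is invented for illustration only.

```python
from typing import List

Matrix = List[List[float]]


def backward_transfer(R: Matrix) -> float:
    """Mean change on tasks 0..T-2 from just after learning them to the end.

    Negative values indicate forgetting; positive values mean old tasks improved.
    """
    T = len(R)
    if T < 2:
        return 0.0
    return sum(R[T - 1][j] - R[j][j] for j in range(T - 1)) / (T - 1)


def average_forgetting(R: Matrix) -> float:
    """Mean drop from each old task's best pre-final score to its final score."""
    T = len(R)
    if T < 2:
        return 0.0
    drops = []
    for j in range(T - 1):
        best_before_end = max(R[i][j] for i in range(j, T - 1))
        drops.append(best_before_end - R[T - 1][j])
    return sum(drops) / (T - 1)


# Illustrative (made-up) success-rate matrices for three sequential tasks.
forgetful = [[0.9, 0.0, 0.0], [0.4, 0.9, 0.0], [0.2, 0.5, 0.9]]
retentive = [[0.9, 0.0, 0.0], [0.95, 0.9, 0.0], [0.95, 0.92, 0.9]]
print(backward_transfer(forgetful), backward_transfer(retentive))
```

For the invented matrices, the forgetful run has a backward transfer of about -0.55, while the retentive run has a small positive value (about 0.035). A positive value is the signature of learning new tasks improving old ones.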

The researchers probed where forgetting actually occurs by swapping architectural components, the vision-language backbone and the action head, between trained models. The backbone accounts for most of the performance loss, yet it has not truly erased the knowledge: task representations remain latent and recoverable. The action head, by contrast, stays consistent across tasks by exploiting the compositional structure learned during pretraining.
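One way to picture the component-swapping analysis is as an operation on parameter dictionaries. The sketch below assumes checkpoints are flat name-to-tensor mappings, as returned by PyTorch's `model.state_dict()`, which can be loaded back with `model.load_state_dict(...)`. It also assumes the backbone and action head are distinguished by name prefix. The prefixes `backbone.` and `action_head.` and the helper `swap_component` are hypothetical, since real VLA checkpoints use their own module names. Plain Python values stand in for tensors so the sketch runs without extra dependencies.

```python
from typing import Dict, Mapping, TypeVar

V = TypeVar("V")


def swap_component(base: Mapping[str, V], donor: Mapping[str, V], prefix: str) -> Dict[str, V]:
    """Return a copy of `base` whose parameters under `prefix` come from `donor`.

    Hypothetical helper: both checkpoints must share parameter names under `prefix`.
    """
    donor_keys = {k for k in donor if k.startswith(prefix)}
    if not donor_keys:
        raise KeyError(f"donor has no parameters under prefix {prefix!r}")
    base_keys = {k for k in base if k.startswith(prefix)}
    if base_keys != donor_keys:
        raise KeyError(f"base and donor disagree on parameter names under {prefix!r}")
    swapped = dict(base)  # shallow copy; `base` itself is left unchanged
    for k in donor_keys:
        swapped[k] = donor[k]
    return swapped


# Toy checkpoints: after learning task A, and after learning later tasks.
after_task_a = {"backbone.w": 1, "backbone.b": 2, "action_head.w": 3}
after_later = {"backbone.w": 10, "backbone.b": 20, "action_head.w": 30}

# Final action head combined with the earlier backbone. If task-A success
# recovers with this hybrid, the drop is attributable to the backbone.
hybrid = swap_component(after_later, after_task_a, "backbone.")
print(hybrid)
```

The mirror experiment swaps `action_head.` instead. Comparing the two hybrids on earlier tasks is a simple way to localize where performance was lost.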

These findings rewrite the playbook for continual learning in robotics. The sophisticated regularization techniques developed for smaller models become secondary concerns. What matters is the richness of the pretrained representations and a modest replay strategy. Practitioners can deploy robots that learn new skills without elaborate infrastructure to protect old ones.

Pretrained Vision-Language-Action models don't just resist forgetting. They retain knowledge internally, ready to resurface with minimal retraining. The classical stability-plasticity dilemma dissolves when models begin with sufficiently rich multimodal understanding. To explore this research further and create your own video presentations, visit EmergentMind.com.