Masked Depth Modeling for Spatial Perception

An overview of a novel approach that transforms sensor failures—like holes in depth maps caused by transparent surfaces—into "natural masks" for training powerful geometry-aware models.Script

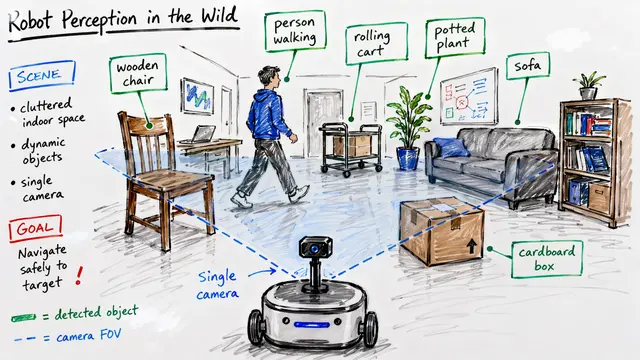

Imagine a robot navigating an office, trying to detect a glass door. To the human eye, it is a barrier, but to the robot's depth sensors, the glass is often an invisible void. This paper addresses that blindness by engaging with the very errors sensors traditionally discard.

Standard depth cameras struggle with shiny or see-through surfaces, leaving wide gaps in the data. Instead of treating these holes as confusing noise, the researchers reframe them as "natural masks"—valuable training signals that force the model to learn better geometry.

To capitalize on this idea, the method uses a joint architecture that combines the full RGB context with whatever partial depth is available. By processing aligned tokens from both modalities, the Vision Transformer learns to reconstruct precise, dense metric depth even where the physical sensor failed.

You can see the dramatic effect of this reconstruction in video depth completion. While the raw sensor input on the top row shows complete blackouts on mirrors and aquarium glass, the model successfully infers the missing geometry, creating a temporally consistent and dense depth map.

This isn't just about prettier images; it translates directly to physical utility for robotics. The authors demonstrate that this refined depth enables robotic arms to successfully grasp transparent cups that were previously invisible to the system, while also improving stability for 3D point tracking.

By embracing imperfection as a learning signal, this work bridges the gap between seeing an object and understanding its true shape. For the full code and datasets, check out the paper on ArXiv or visit EmergentMind.com.