Neural Thickets: Diverse Task Experts Are Dense Around Pretrained Weights

This presentation explores a fundamental shift in how large neural networks can be adapted after pretraining. The research reveals that sufficiently scaled pretrained models exist in 'neural thickets': dense local neighborhoods populated by diverse, task-specialized weight configurations that can be discovered through simple random perturbation rather than costly sequential optimization. The authors demonstrate that this density-diversity regime enables RandOpt, a highly parallel gradient-free method that matches traditional fine-tuning methods while dramatically reducing coordination overhead, challenging conventional assumptions about learning difficulty and post-training adaptation.

Script

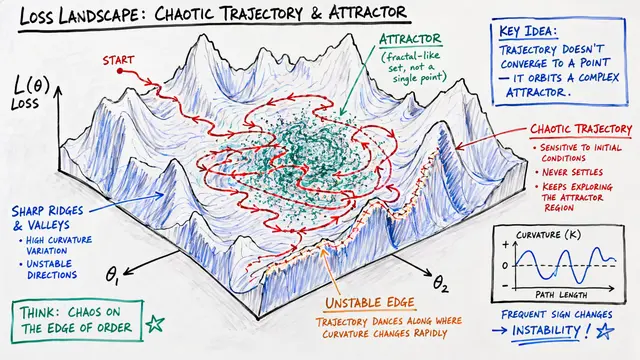

As neural networks grow larger, something remarkable happens in the space around their pretrained weights. What was once a barren landscape requiring careful sequential search transforms into a dense thicket of diverse, high-performing solutions discoverable by random exploration alone.

The researchers identify a phase transition in post-training weight space. For small models, finding effective adaptations is like searching for needles in a haystack, requiring structured optimization. But scale changes everything. Large pretrained models inhabit what the authors call neural thickets, where random perturbations routinely discover functional improvements. This isn't luck—solution density increases predictably with model scale, reaching remarkably high probabilities for current large language models.
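One way to make "solution density" concrete is as the fraction of random perturbations that score better than the unperturbed weights. The sketch below is only an illustration under stated assumptions: the Gaussian noise model, the "strictly better than base" criterion, and the names `solution_density` and `toy_score` are hypothetical stand-ins, not the authors' measurement protocol.

```python
import random


def toy_score(weights, target=(1.0, 2.0)):
    """Hypothetical stand-in for a task metric: higher is better, best at `target`."""
    return -sum((w - t) ** 2 for w, t in zip(weights, target))


def solution_density(base_weights, score_fn, n_samples=1000, sigma=0.1, seed=0):
    """Estimate the fraction of Gaussian perturbations that beat the base score.

    Assumption: an "improvement" means a strictly higher score than the base weights.
    """
    rng = random.Random(seed)
    base_score = score_fn(base_weights)
    hits = 0
    for _ in range(n_samples):
        # Each candidate is the base weights plus i.i.d. Gaussian noise.
        candidate = [w + sigma * rng.gauss(0.0, 1.0) for w in base_weights]
        if score_fn(candidate) > base_score:
            hits += 1
    return hits / n_samples
```

In this toy setting, a base point that already sits at the optimum has density zero, while a base point away from the optimum has some nonzero share of improving directions. The claim in the script is that, for real models, this fraction grows with scale.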

These solutions aren't just dense—they're functionally diverse in surprising ways.

When the researchers sampled random perturbations around pretrained weights, each seed exhibited a unique performance spectrum across tasks. The visualization reveals something striking: these perturbations organize into tight clusters, each representing specialists with different areas of expertise. One cluster excels at mathematical reasoning, another at code generation, yet another at creative writing. This structured diversity isn't noise—it's a signature of how large-scale pretraining organizes the local weight space into distinct functional niches.

The thicket regime enables a radical departure from standard fine-tuning. Traditional methods like proximal policy optimization require expensive sequential gradient updates with coordination between steps. RandOpt exploits thicket density differently: generate thousands of random weight perturbations in parallel, evaluate them independently, select the top performers, and ensemble their predictions through majority voting. Across mathematical reasoning, code generation, and other domains, this approach achieves parity with reinforcement learning methods while requiring only a single synchronization point. The efficiency gain is architectural—fundamentally parallel rather than sequential.
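The four steps just described (perturb, evaluate, select, vote) can be sketched on a toy problem. This is a minimal illustration, not the authors' implementation: the linear classifier standing in for a model, the Gaussian noise scale `sigma`, the candidate count, and every function name here are assumptions made for the example.

```python
import random
from collections import Counter


def predict(weights, x):
    """Toy stand-in for a model: a linear classifier that returns label 0 or 1."""
    score = sum(w * xi for w, xi in zip(weights, x))
    return 1 if score > 0 else 0


def accuracy(weights, data):
    """Fraction of (x, y) pairs that the weights classify correctly."""
    return sum(predict(weights, x) == y for x, y in data) / len(data)


def perturb(weights, sigma, seed):
    """One seed gives one candidate: base weights plus i.i.d. Gaussian noise."""
    rng = random.Random(seed)
    return [w + sigma * rng.gauss(0.0, 1.0) for w in weights]


def rand_opt(base_weights, data, n_candidates=200, top_k=5, sigma=0.3):
    """Perturb, evaluate, and keep the top_k candidates (best first)."""
    # Candidates do not depend on each other, so generating and scoring
    # them could be spread across workers; it is serial here for clarity.
    candidates = [perturb(base_weights, sigma, s) for s in range(n_candidates)]
    scores = [accuracy(c, data) for c in candidates]
    # The only step that needs all results at once: rank and select.
    ranked = sorted(zip(scores, candidates), key=lambda p: p[0], reverse=True)
    return [c for _, c in ranked[:top_k]]


def majority_vote(ensemble, x):
    """Ensemble prediction: the label chosen by most selected candidates."""
    votes = Counter(predict(w, x) for w in ensemble)
    return votes.most_common(1)[0][0]


def make_toy_data(n=100, seed=0):
    """Hypothetical dataset labeled by a fixed 'true' linear rule."""
    rng = random.Random(seed)
    target = [1.0, -2.0, 0.5]
    data = []
    for _ in range(n):
        x = [rng.uniform(-1.0, 1.0) for _ in range(3)]
        data.append((x, predict(target, x)))
    return data
```

A call such as `rand_opt([0.8, -1.0, 0.0], make_toy_data())` returns five perturbed weight vectors, and `majority_vote` combines their predictions. An odd `top_k` avoids tied votes on binary labels.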

A critical question: are these improvements real, or just superficial fixes? Decomposing the gains shows that both kinds contribute. On mathematical reasoning tasks, roughly half the gains come from correcting answer formatting issues, that is, teaching the model to express solutions in parseable form. But the other half represents substantive reasoning improvements, where perturbations genuinely solve problems the base model could not. The thicket phenomenon operates at multiple levels of abstraction simultaneously, from surface-level formatting conventions to deep problem-solving capabilities. This dual nature supports the view that the local weight space contains functionally meaningful structure, not just distributional shortcuts.
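A split between formatting gains and reasoning gains could be computed along the following lines. This is a hedged sketch: the script does not say how the decomposition was done, so the two-grader setup (a strict parser and a lenient matcher), the `Outcome` record, and `decompose_gains` are all assumptions introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    strict_correct: bool   # answer parsed by a strict grader and judged correct
    lenient_correct: bool  # correct answer found under lenient matching


def decompose_gains(base, perturbed):
    """Count newly solved problems by the assumed source of the gain.

    Assumption: if the base model already had the right answer under lenient
    matching, the gain is a formatting fix; otherwise it is a reasoning gain.
    """
    gains = {"format": 0, "reasoning": 0}
    for b, p in zip(base, perturbed):
        newly_solved = p.strict_correct and not b.strict_correct
        if not newly_solved:
            continue
        if b.lenient_correct:
            gains["format"] += 1
        else:
            gains["reasoning"] += 1
    return gains
```

With per-problem outcomes for the base and perturbed models, the ratio of the two counts gives the kind of split described above.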

Neural thickets reframe what pretraining accomplishes. Rather than simply locating a good solution, large-scale pretraining transforms the local geometry itself, populating weight space neighborhoods with diverse functional specialists. This explains the surprising effectiveness of parameter-efficient methods like LoRA and quality-diversity algorithms—they're navigating pre-structured landscapes. The fundamental question remains open: precisely how do overparameterization and pretraining objective diversity conspire to induce this phase transition? Understanding this could revolutionize how we think about model initialization, adaptation, and the very nature of learned representations in high-dimensional parameter spaces.

Large pretrained models don't just solve problems; they exist in rich neighborhoods of diverse solutions waiting to be discovered.