Learning to Discover at Test Time

A lightning talk on TTT-Discover, a method that enables large language models to update their weights during inference to solve hard scientific discovery problems.

Script

What if a scientist stopped learning the moment they faced their hardest problem? That is the paradox of modern large language models: they are frozen in time exactly when they need to adapt. This paper, titled "Learning to Discover at Test Time," proposes a method to unfreeze them.

To understand why this is necessary, we must realize that scientific discovery requires ideas that simply do not exist in the training data. Current approaches typically prompt a frozen model to search for answers, but a frozen model cannot internalize its failures: each failed attempt leaves the weights unchanged. The authors argue that to solve a truly hard problem, an AI agent must run reinforcement learning on that specific problem, in real time.

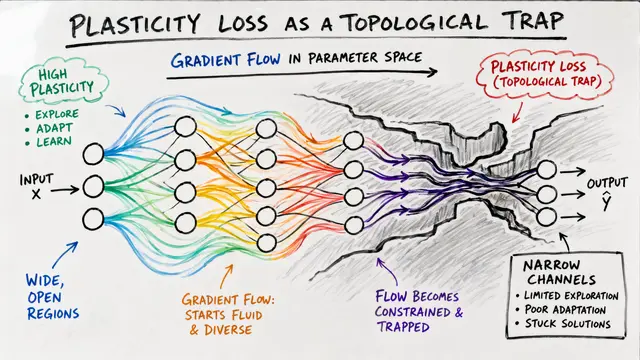

This approach changes the fundamental rules of reinforcement learning. While standard methods try to improve the average quality of an answer, scientific discovery only cares about the single best result. The researchers introduce an Entropic Objective that explicitly stops playing it safe: rather than protecting the average, it aggressively hunts for the peaks in the reward landscape, updating the model's actual parameters using gradients at test time.
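To make the contrast between "average" and "best" concrete, here is a minimal sketch. The talk does not spell out the formula, so this assumes the standard exponential-utility form of an entropic objective, (1/beta) * log(mean(exp(beta * reward))), with a hypothetical temperature parameter `beta`; the paper's exact objective may differ. As `beta` approaches zero the value approaches the mean reward, and as `beta` grows it approaches the maximum, so the per-sample gradient weights (proportional to a softmax over `beta * reward`) concentrate on the best attempts.

```python
import math

def entropic_objective(rewards, beta):
    """(1/beta) * log(mean(exp(beta * r))), computed stably via log-sum-exp.

    Assumed form of an entropic objective; `beta` is a hypothetical name.
    """
    m = max(rewards)
    mean_exp = sum(math.exp(beta * (r - m)) for r in rewards) / len(rewards)
    return m + math.log(mean_exp) / beta

def entropic_weights(rewards, beta):
    """Relative per-sample gradient weights: softmax(beta * r)."""
    m = max(rewards)
    exps = [math.exp(beta * (r - m)) for r in rewards]
    total = sum(exps)
    return [e / total for e in exps]

# Toy rewards for four sampled attempts: three mediocre, one excellent.
rewards = [0.1, 0.2, 0.15, 0.9]
mean_reward = sum(rewards) / len(rewards)

for beta in (0.1, 10.0, 100.0):
    value = entropic_objective(rewards, beta)
    weights = entropic_weights(rewards, beta)
    print(f"beta={beta}: objective={value:.4f}, weights={[round(w, 3) for w in weights]}")
```

With a small `beta` the four attempts are weighted almost equally, which mirrors ordinary average-reward training; with a large `beta` nearly all of the weight lands on the single best attempt, which is the behavior the talk describes.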

The impact of allowing open-weight models to learn at test time is immediate and measurable across diverse fields. The method generated GPU kernels that run nearly twice as fast as those written by human experts, and it broke longstanding records in mathematics that previous AI systems could not touch.

You can see the complexity gap clearly in this visualization of the Erdős Minimum Overlap Problem. While the previous state-of-the-art method, AlphaEvolve, found a simple 95-piece construction, this new method discovered a highly complex, asymmetric 600-piece function. This illustrates how updating weights allows the model to drift far deeper into the solution space than simple search ever could.

Ultimately, this paper argues that compute spent on learning is far more valuable than compute spent on simple repetition. To dive deeper into these discovery algorithms, visit EmergentMind.com.