Deriving Neural Scaling Laws from the Statistics of Natural Language

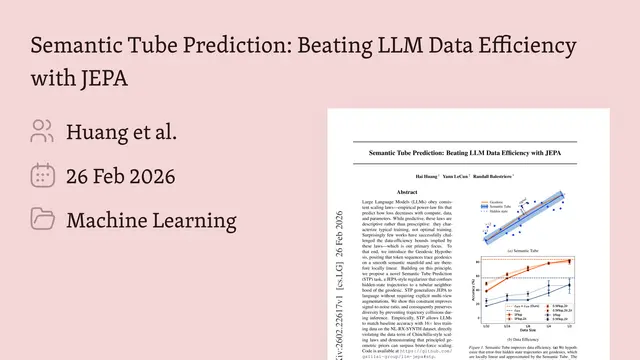

This presentation explores a breakthrough in understanding why large language models scale the way they do. The authors demonstrate that two measurable statistical properties of language data, namely how quickly conditional entropy decays with context and how token correlations fade with distance, directly determine the exponents governing neural scaling laws in the data-limited regime. Through rigorous theory and extensive experiments with autoregressive transformers on TinyStories and WikiText-103, they provide the first parameter-free framework for predicting scaling behavior from dataset statistics alone, revealing fundamental constraints on what models can learn from finite data.

Script

Why do language models follow precise power laws when we scale up training data? The answer, it turns out, is hidden in two simple statistics you can measure directly from text itself.

Building on that puzzle, let's examine what we've known and what has remained opaque.

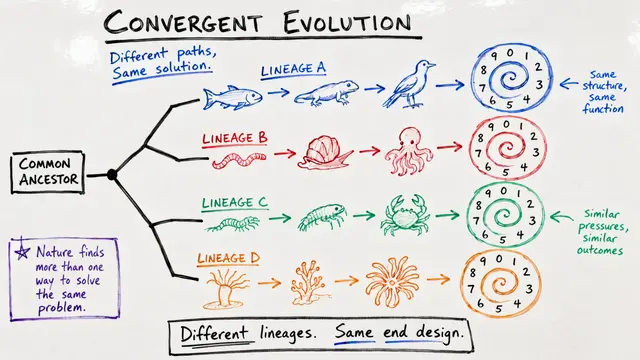

We've long observed that language model performance follows predictable power laws as training data grows. Yet the fundamental question has persisted: what determines these exponents? The authors set out to answer this by looking directly at language statistics.

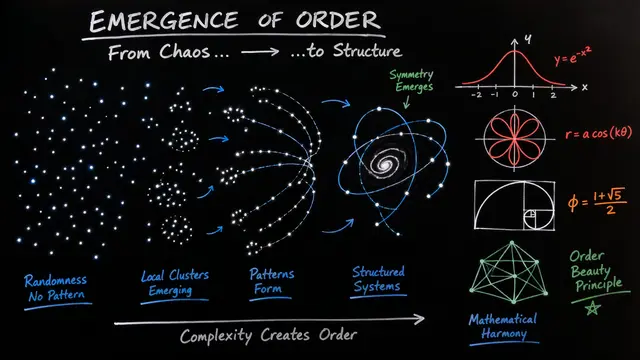

The breakthrough comes from identifying exactly two measurable properties of text.

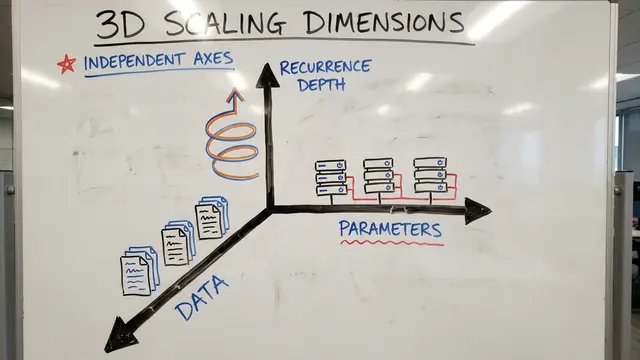

The theory identifies two exponents. Gamma measures how quickly uncertainty about the next token, expressed as conditional entropy, falls as the model sees more context. Beta measures how quickly correlations between tokens decay as the distance between them grows. Together, these two numbers define a dataset's learning signature.
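Both exponents are slopes on log-log axes. The sketch below assumes that the excess conditional entropy H(n) - H_inf at several context lengths n, and the correlation magnitude |C(t)| at several token distances t, have already been estimated from a corpus (that estimation step is not shown), and that both follow clean power laws over the fitted range. The function name `fit_power_law_exponent` and the synthetic curves are illustrative assumptions, not code or data from the paper.

```python
import math


def fit_power_law_exponent(xs, ys):
    """Estimate e in y ~ A * x**(-e) by least squares on log-log axes."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")
    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    mean_x = sum(log_x) / len(log_x)
    mean_y = sum(log_y) / len(log_y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(log_x, log_y))
    var = sum((a - mean_x) ** 2 for a in log_x)
    if var == 0:
        raise ValueError("xs must not all be equal")
    # The fitted log-log slope is -e, so flip its sign.
    return -cov / var


# Hypothetical synthetic curves (NOT data from the paper) that follow
# pure power laws, so the fit should recover the exponents exactly.
contexts = [2, 4, 8, 16, 32, 64, 128]
excess_entropy = [1.5 * n ** -0.34 for n in contexts]  # stands in for H(n) - H_inf
distances = [1, 2, 4, 8, 16, 32]
correlations = [0.2 * t ** -0.88 for t in distances]   # stands in for |C(t)|

gamma_hat = fit_power_law_exponent(contexts, excess_entropy)
beta_hat = fit_power_law_exponent(distances, correlations)
print(f"gamma ~ {gamma_hat:.2f}, beta ~ {beta_hat:.2f}")  # gamma ~ 0.34, beta ~ 0.88
```

On real measurements the curves bend at short contexts and flatten once noise dominates, so in practice the fit range has to be chosen by inspecting the log-log plot.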

The elegant result is that alpha_D, the data-scaling exponent, equals gamma divided by twice beta, with no free parameters. This formula predicts quantitatively how test loss decreases with dataset size from dataset statistics alone, and it further predicts that learning curves measured at different context horizons collapse onto a single master curve.
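A back-of-the-envelope version of where the formula comes from, sketched under the assumptions that both statistics follow pure power laws with order-one prefactors and that a correlation at distance t is only detectable from P training tokens once it exceeds sampling noise of order P^(-1/2). The notation (P for training tokens, n for context length, H(n) for conditional entropy with n tokens of context, H_inf for its limit, C(t) for the correlation at distance t, f for the master curve) is chosen here for illustration, and the paper's own derivation may differ in detail.

```latex
\begin{aligned}
H(n) - H_\infty &\sim n^{-\gamma}, \qquad C(t) \sim t^{-\beta} \\
C(t^*) &\sim P^{-1/2} \quad\Longrightarrow\quad t^*(P) \sim P^{1/(2\beta)} \\
L(P) - H_\infty &\sim H(t^*) - H_\infty \sim \bigl(P^{1/(2\beta)}\bigr)^{-\gamma} = P^{-\gamma/(2\beta)}
  \quad\Longrightarrow\quad \alpha_D = \frac{\gamma}{2\beta} \\
L(P, n) - H_\infty &\approx n^{-\gamma}\, f\!\left(P / n^{2\beta}\right)
\end{aligned}
```

In the last line, f(x) tends to a constant for large x (the context window n, not the data, is the bottleneck) and behaves like x^(-gamma/(2 beta)) for small x, which recovers P^(-gamma/(2 beta)) independently of n. Plotting (L - H_inf) n^gamma against P / n^(2 beta) is one way to check the predicted collapse onto a single master curve.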

Now let's see how theory meets reality across multiple architectures and datasets.

The authors validated their predictions rigorously. On TinyStories, they measured gamma at 0.34 and beta at 0.88, yielding a predicted exponent of 0.34 divided by 1.76, or about 0.19, which matched the observed exponent across multiple transformer variants. WikiText-103 showed similarly close agreement, with the predicted exponent of 0.14 confirmed experimentally.
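The prediction itself is one line of arithmetic. A minimal sketch using the TinyStories values quoted above; the helper names are hypothetical, and `excess_loss_ratio` assumes the reducible loss L(P) - L_inf is a pure power law in the number of training tokens P, which should only be expected within the data-limited regime the paper studies.

```python
def predicted_data_exponent(gamma: float, beta: float) -> float:
    """Parameter-free prediction alpha_D = gamma / (2 * beta)."""
    if beta <= 0:
        raise ValueError("beta must be positive")
    return gamma / (2.0 * beta)


def excess_loss_ratio(alpha_d: float, data_multiplier: float) -> float:
    """Factor by which the reducible loss shrinks when the training data
    grows by data_multiplier, assuming L(P) - L_inf ~ P**(-alpha_d)."""
    return data_multiplier ** (-alpha_d)


# TinyStories exponents quoted in the text.
alpha = predicted_data_exponent(gamma=0.34, beta=0.88)
print(f"alpha_D = {alpha:.2f}")  # alpha_D = 0.19
print(f"doubling data keeps {excess_loss_ratio(alpha, 2.0):.1%} of the reducible loss")
```

Under this assumption, doubling the TinyStories training set removes roughly an eighth of the remaining reducible loss, which illustrates how slowly a small exponent pays off.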

The theory reveals that deep transformers operate in a horizon-limited regime, where performance gains come primarily from unlocking longer contexts rather than from perfecting predictions based on shorter ones. Crucially, there is a statistical ceiling: for a finite amount of training data, no amount of architectural sophistication can overcome the limits imposed by the dataset's entropy and correlation structure.
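The horizon-limited picture can be made concrete by assuming the effective horizon grows as t*(P) ~ P^(1/(2 beta)), the scaling implied by requiring a correlation of size t^(-beta) to exceed sampling noise of order P^(-1/2). A minimal sketch with hypothetical helper names and a placeholder prefactor, using the TinyStories beta quoted above:

```python
def effective_horizon(num_tokens: float, beta: float, prefactor: float = 1.0) -> float:
    """Context length t*(P) ~ prefactor * P**(1 / (2 * beta)) whose correlations
    are resolvable from num_tokens training tokens. The prefactor is a
    hypothetical placeholder; only ratios of horizons are meaningful here."""
    return prefactor * num_tokens ** (1.0 / (2.0 * beta))


def data_multiplier_for_horizon(factor: float, beta: float) -> float:
    """How many times more training tokens are needed to stretch t* by `factor`."""
    return factor ** (2.0 * beta)


beta = 0.88  # TinyStories value quoted in the text
growth = effective_horizon(2e6, beta, prefactor=5.0) / effective_horizon(1e6, beta, prefactor=5.0)
print(f"doubling the data stretches the horizon by {growth:.2f}x")
print(f"doubling the horizon needs {data_multiplier_for_horizon(2.0, beta):.2f}x the data")
```

Because the unknown prefactor cancels in both ratios, these numbers depend only on beta: under this assumption, doubling the usable horizon on TinyStories takes roughly 3.4 times the data.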

This work transforms our understanding of what drives scaling behavior in language models. By providing a concrete, theory-grounded method to predict exponents from data alone, it offers both practical tools for pretraining decisions and deep theoretical insight, suggesting that scaling laws emerge from universal properties of language rather than architectural details.

The next time you see a scaling law, remember: those exponents are echoes of the statistical structure hidden in language itself. Visit EmergentMind.com to explore more cutting-edge research.