There Will Be a Scientific Theory of Deep Learning

This presentation examines the bold claim that deep learning is evolving from an empirical art into a rigorous, predictive science. Drawing parallels with physics, the authors argue that convergent evidence across analytically solvable systems, scaling limits, empirical laws, hyperparameter disentanglement, and universality points toward an emerging "learning mechanics": a first-principles framework capable of quantitatively predicting optimization dynamics, feature formation, and generalization in overparameterized neural networks.

Can the extraordinary empirical success of deep learning be explained by a rigorous, predictive theory, or will it remain a collection of clever tricks? This paper argues that learning mechanics, a physics-inspired framework for understanding gradient-based optimization, is already emerging.

The infinite width limit reveals a fundamental dichotomy. In the lazy regime, networks behave like fixed kernels, their features frozen during training. But modulate the output multiplier, and networks enter the rich regime, where features actively adapt and evolve.
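The lazy versus rich distinction can be illustrated with a toy experiment. The sketch below is not taken from the paper; it assumes a two-layer tanh network with a fixed readout, a centered output scaled by a hypothetical multiplier `alpha`, and a learning rate rescaled by `1 / alpha**2`. Under those assumptions, a large multiplier should leave the hidden weights nearly frozen, while a multiplier of order one lets them move.

```python
import numpy as np

def relative_feature_movement(alpha, width=256, steps=300, lr=0.1, seed=0):
    """Train a toy two-layer net; return ||W - W0|| / ||W0|| for the hidden weights.

    All names and sizes here are illustrative assumptions, not from the source.
    """
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(20, 5))                 # 20 synthetic inputs in 5 dimensions
    y = np.sin(X[:, 0])                          # synthetic regression target
    W0 = rng.normal(size=(5, width))             # hidden weights at initialization
    a = rng.choice([-1.0, 1.0], size=width) / np.sqrt(width)  # fixed readout
    W = W0.copy()
    h0 = np.tanh(X @ W0) @ a                     # output at init, subtracted to center f

    for _ in range(steps):
        pre = X @ W                              # pre-activations, shape (20, width)
        f = alpha * (np.tanh(pre) @ a - h0)      # output scaled by the multiplier
        r = f - y                                # residuals
        # Gradient of 0.5 * mean(r**2) with respect to W.
        back = (r[:, None] * (1.0 - np.tanh(pre) ** 2)) * a[None, :]
        grad = alpha * (X.T @ back) / len(y)
        W -= (lr / alpha**2) * grad              # step size rescaled by 1 / alpha^2

    return np.linalg.norm(W - W0) / np.linalg.norm(W0)

rel_rich = relative_feature_movement(alpha=1.0)    # multiplier of order one
rel_lazy = relative_feature_movement(alpha=100.0)  # large multiplier
print(f"relative weight movement: alpha=1 -> {rel_rich:.4f}, alpha=100 -> {rel_lazy:.6f}")
```

The rescaled learning rate keeps the change in the network output comparable across values of `alpha`, so any difference in weight movement reflects the multiplier rather than a slower or faster fit.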

Macroscopic observables follow surprisingly simple laws. Test loss obeys robust power-law relationships with parameter count, data size, and compute. These neural scaling laws are not just empirical curiosities; they are quantitative, falsifiable predictions that hold across architectures and tasks.
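To make the idea of a power law concrete: a relationship of the form loss = a * N^(-b) becomes a straight line in log-log coordinates, so the exponent can be read off with a linear fit. The sketch below uses made-up, noiseless numbers (the prefactor 50.0 and exponent 0.3 are hypothetical, not measurements from the paper) purely to show the fitting procedure.

```python
import numpy as np

def fit_power_law(n, loss):
    """Fit loss = a * n**(-b) by linear regression in log-log space."""
    slope, intercept = np.polyfit(np.log(n), np.log(loss), 1)
    return np.exp(intercept), -slope             # (a, b)

# Synthetic, noiseless data for illustration only; not real experimental results.
n = np.array([1e6, 1e7, 1e8, 1e9])               # hypothetical parameter counts
loss = 50.0 * n ** -0.3                          # hypothetical power law

a_hat, b_hat = fit_power_law(n, loss)
print(f"fitted prefactor a = {a_hat:.3f}, fitted exponent b = {b_hat:.3f}")
```

On real measurements the points would scatter around the line, and the fit is often extended with an additive irreducible-loss term; that refinement is left out here.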

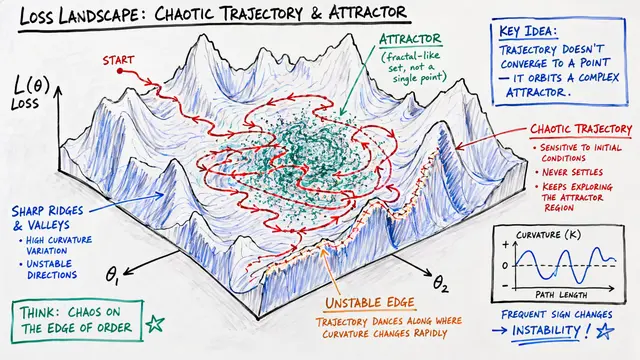

Training dynamics are poised at the edge of stability. Gradient descent drives the sharpness, the largest eigenvalue of the loss Hessian, up to roughly 2 over the learning rate, then hovers near that value. This quantitative phenomenon holds across architectures, suggesting that aggregate statistics are governed by discoverable laws, not accidents of design.
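Why 2 over the learning rate? On a one-dimensional quadratic loss with curvature `sharpness`, each gradient descent step multiplies the weight by (1 - lr * sharpness), which shrinks the weight only when sharpness is below 2 / lr. The sketch below checks that threshold numerically. It is a simplified illustration under that quadratic assumption and does not reproduce the self-stabilizing behavior seen in real networks; the function name and values are hypothetical.

```python
def gd_on_quadratic(sharpness, lr, steps=200, w0=1.0):
    """Run gradient descent on L(w) = 0.5 * sharpness * w**2; return final |w|."""
    w = w0
    for _ in range(steps):
        w -= lr * sharpness * w                  # gradient of L is sharpness * w
    return abs(w)

lr = 0.1                                         # stability threshold is 2 / lr = 20
below = gd_on_quadratic(sharpness=19.0, lr=lr)   # just below the threshold
above = gd_on_quadratic(sharpness=21.0, lr=lr)   # just above the threshold
print(f"|w| after training: sharpness 19 -> {below:.2e}, sharpness 21 -> {above:.2e}")
```

Below the threshold the iterate oscillates in sign but decays toward zero; above it the oscillation grows without bound.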

Universality emerges across systems and modalities. Despite differences in design, vision and language models converge toward similar representations as they scale. Diffusion architectures trained on disparate data learn analogous distributions. These patterns suggest that deep learning occupies a universality class, where macroscopic behavior is insensitive to microscopic details.

The convergence of tractable limits, scaling laws, and hyperparameter transfer signals that learning mechanics is not a distant dream but an active research frontier. To explore this emerging theory further and create your own videos on cutting-edge research, visit EmergentMind.com.