A Theory of Generalization in Deep Learning

This presentation introduces a comprehensive framework for understanding how deep neural networks generalize beyond their training data. The theory decomposes the output space into signal channels where learning happens and reservoirs where errors are trapped, explaining phenomena like benign overfitting, double descent, and grokking as natural consequences of this structure. The work delivers both theoretical insights and a practical training algorithm that accelerates generalization in real tasks.

Script

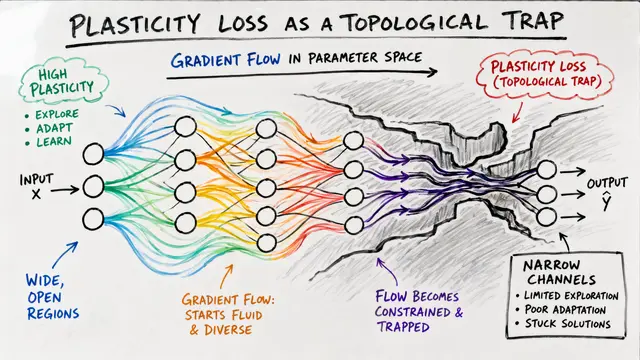

Deep networks can fit training data perfectly yet still generalize well. The authors explain this paradox through a geometric lens: the output space splits into signal channels where learning happens and reservoirs where errors are trapped and invisible at test time.

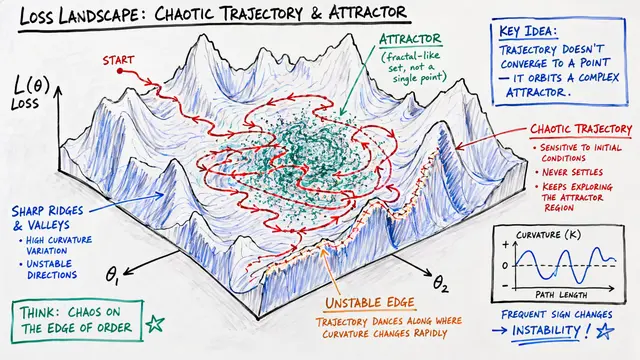

The empirical Neural Tangent Kernel and its trajectory-integrated dissipation Gramian determine which directions in output space gradient descent can actually influence. Large eigenvalues span the signal channel; near-zero eigenvalues define the reservoir.
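To make the eigenvalue split concrete, here is a minimal sketch that builds the empirical NTK of a tiny two-layer network and separates its eigenvectors into a signal channel and a reservoir. The network, the sizes, and the relative threshold `rel_tol` are illustrative assumptions, and the sketch uses the kernel at a single point in training rather than the trajectory-integrated Gramian described above.

```python
import numpy as np

def empirical_ntk(X, W1, w2):
    """Empirical NTK K = J J^T for the scalar model f(x) = tanh(x @ W1) @ w2."""
    H = np.tanh(X @ W1)                       # hidden activations, shape (n, h)
    dH = (1.0 - H**2) * w2                    # df/d(pre-activation), shape (n, h)
    J_W1 = X[:, :, None] * dH[:, None, :]     # df/dW1, shape (n, d, h)
    J = np.concatenate([J_W1.reshape(len(X), -1), H], axis=1)  # full Jacobian
    return J @ J.T

def split_channels(K, rel_tol=1e-8):
    """Split eigenvectors of K by eigenvalue size (rel_tol is an assumed cutoff)."""
    evals, evecs = np.linalg.eigh(K)
    is_signal = evals > rel_tol * evals.max()
    return evals, evecs[:, is_signal], evecs[:, ~is_signal]

rng = np.random.default_rng(0)
n, d, h = 12, 2, 2                  # 6 parameters < 12 samples, so K is rank-deficient
X = rng.normal(size=(n, d))
W1 = rng.normal(size=(d, h))
w2 = rng.normal(size=h)

K = empirical_ntk(X, W1, w2)
evals, U_signal, U_reservoir = split_channels(K)

# A residual lying in the reservoir produces (numerically) no output motion
# under one gradient step, since that motion is proportional to K @ residual.
reservoir_residual = U_reservoir[:, 0]
motion = K @ reservoir_residual
print("signal dims:", U_signal.shape[1], "reservoir dims:", U_reservoir.shape[1])
print("max output motion from a reservoir residual:", np.abs(motion).max())
```

The sample count is deliberately larger than the parameter count so that a reservoir is guaranteed to exist; in a heavily overparameterized network the near-zero eigenvalues would come from the kernel's spectrum decaying rather than from a hard rank limit.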

Training dynamics separate cleanly into drift and diffusion. The population gradient accumulates linearly in the number of steps along signal directions, while minibatch noise grows only as the square root of the number of steps, naturally suppressing memorization of label noise.
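The linear-versus-square-root scaling can be checked with a toy random walk. This is only an illustration under assumed values: `mu` stands in for the per-step population gradient along one direction and `sigma` for the per-step minibatch noise, with the noise taken to be independent across steps.

```python
import numpy as np

rng = np.random.default_rng(0)
mu, sigma = 0.05, 1.0          # hypothetical per-step drift and noise scale
T, runs = 10_000, 200          # steps per run, number of independent runs

# Each step is drift plus independent noise; paths are the accumulated updates.
steps = mu + sigma * rng.normal(size=(runs, T))
paths = np.cumsum(steps, axis=1)

def drift_and_spread(t):
    """Mean displacement (drift) and standard deviation (diffusion) after t steps."""
    snapshot = paths[:, t - 1]
    return snapshot.mean(), snapshot.std()

for t in (100, 1_000, 10_000):
    drift, spread = drift_and_spread(t)
    print(f"t={t:>6}  drift={drift:8.2f} (theory {mu * t:8.2f})  "
          f"spread={spread:7.2f} (theory {sigma * np.sqrt(t):7.2f})")
```

Under these assumptions the drift overtakes the diffusion once `mu * t > sigma * sqrt(t)`, that is, after roughly `(sigma / mu) ** 2 = 400` steps.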

Even as the network learns rich features and the kernel evolves substantially, test set motion is completely determined by training set motion projected onto the signal channel. The authors derive an exact, trajectory-dependent linear operator coupling train and test outputs.
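A simplified, single-step version of this coupling can be written down directly. The sketch below freezes the Jacobians for one gradient step (a first-order approximation, exact only for a model linear in its parameters) and checks that the test-output change equals a linear operator applied to the train-output change. The random Jacobians, the sizes, and the names `J_train`, `J_test`, and `coupling` are assumptions for illustration; the trajectory-dependent operator in the theory accumulates such effects over all of training.

```python
import numpy as np

def one_step_coupling(J_train, J_test, residual, eta=0.01):
    """One gradient step on squared loss with frozen Jacobians.

    Returns the train-output change, the test-output change, and the
    operator K_test,train @ pinv(K_train,train) that maps one to the other.
    """
    delta_theta = -eta * J_train.T @ residual      # parameter update
    d_train = J_train @ delta_theta                # induced train-output motion
    d_test = J_test @ delta_theta                  # induced test-output motion
    K_tt = J_train @ J_train.T                     # train-train kernel
    K_st = J_test @ J_train.T                      # test-train kernel
    coupling = K_st @ np.linalg.pinv(K_tt)
    return d_train, d_test, coupling

rng = np.random.default_rng(0)
n_train, n_test, n_params = 8, 5, 20
J_train = rng.normal(size=(n_train, n_params))
J_test = rng.normal(size=(n_test, n_params))
residual = rng.normal(size=n_train)                # f_train - y_train

d_train, d_test, coupling = one_step_coupling(J_train, J_test, residual)
print("max mismatch:", np.abs(coupling @ d_train - d_test).max())
```

The pseudo-inverse is what restricts the map to directions the train kernel can actually move, which is the single-step analogue of projecting onto the signal channel.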

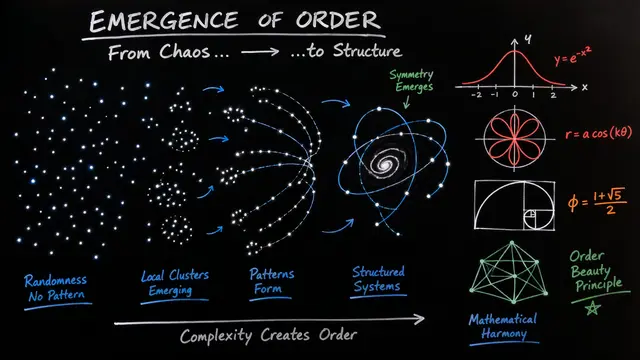

The signal-reservoir decomposition unifies four major puzzles in deep learning. Benign overfitting, double descent, implicit bias, and grokking all emerge as consequences of how signal and noise partition across the output space and evolve over training.

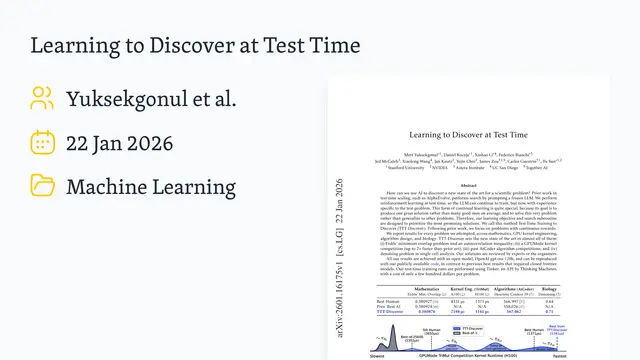

The theory yields a practical algorithm: update parameters only when their minibatch gradient signal exceeds noise variance. This population-risk-aware preconditioner accelerates grokking by 5 times, reduces convergence steps by 2.4 times in physics-informed networks, and keeps preference-tuned models 3 times closer to reference policies. Explore this framework and generate your own research videos at EmergentMind.com.
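As a rough illustration of the gating idea, the sketch below updates a parameter only when its squared mean minibatch gradient exceeds the estimated variance of that mean. The function name `snr_gated_step`, the choice of statistic, and the hard threshold are assumptions made for this example; the script does not specify the exact rule, so this should not be read as the authors' preconditioner.

```python
import numpy as np

def snr_gated_step(params, per_example_grads, lr=0.1):
    """Hypothetical signal-versus-noise gated SGD step.

    per_example_grads has shape (batch, n_params). A parameter is updated only
    if mean_grad**2 exceeds the variance of the mean gradient estimate.
    """
    g = np.asarray(per_example_grads, dtype=float)
    batch = g.shape[0]
    mean_grad = g.mean(axis=0)                     # signal estimate
    var_of_mean = g.var(axis=0, ddof=1) / batch    # noise in that estimate
    mask = mean_grad**2 > var_of_mean              # assumed gating rule
    return params - lr * mask * mean_grad, mask

# Parameter 0 sees a consistent gradient; parameter 1 sees sign-flipping noise.
grads = np.array([
    [1.0,  1.0],
    [1.1, -1.0],
    [0.9,  1.0],
    [1.0, -1.0],
])
params = np.zeros(2)
new_params, mask = snr_gated_step(params, grads)
print("updated mask:", mask)
print("new params:", new_params)
```

In this toy batch only the first parameter passes the gate, so the noisy coordinate is left untouched rather than being pushed around by minibatch fluctuations.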