The Geometry of Noise: Why Diffusion Models Don't Need Noise Conditioning

This lightning talk unveils a fundamental geometric explanation for why diffusion models can generate high-quality samples without explicit noise-level conditioning. We explore the singular marginal energy landscape that autonomous models implicitly optimize, reveal how Riemannian geometry stabilizes otherwise divergent dynamics, and demonstrate why parameterization choice, specifically velocity-based approaches, determines whether noise-agnostic models succeed or catastrophically fail.

Script

Diffusion models are generating stunning images without knowing how noisy their inputs are. This shouldn't work—traditional theory says you need explicit noise-level conditioning at every step. Yet autonomous models using a single, time-invariant field are matching the quality of their conditional cousins. The secret lies in a hidden geometric structure that has been there all along.

The paradox is striking. How does a neural network with no explicit awareness of noise levels navigate the complex journey from pure noise to clean data? The authors discovered the answer lies not in what the model knows, but in the geometry of what it optimizes.

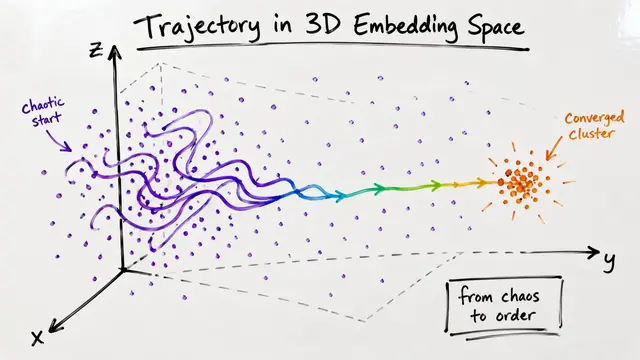

To understand autonomous models, we must first understand the energy landscape they're secretly navigating.

The marginal energy landscape integrates probability distributions across all possible noise strengths. Near clean data points, this landscape becomes singular—gradients explode toward infinity, creating potential wells so steep that naive optimization would fail instantly. This singularity explains why explicitly energy-based models struggle during training.
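To make the singularity concrete, here is a minimal numerical sketch under toy assumptions that are not from the talk: a single clean data point at the origin, isotropic Gaussian noise, and a noise level sigma drawn uniformly from [sigma_min, sigma_max]. The function names and default values are illustrative. In this toy case the marginal energy behaves like (d - 1) log r at distance r from the data point (for sigma_min much smaller than r, and r much smaller than sigma_max), so the gradient norm grows like (d - 1) / r as r shrinks.

```python
import numpy as np

def marginal_energy(r, d, sigma_min=1e-4, sigma_max=1.0, n_sigma=4000):
    """Toy marginal energy E(r) = -log of the integral over sigma of N(x; 0, sigma^2 I),
    for ||x|| = r in d dimensions, sigma uniform on [sigma_min, sigma_max].
    Additive constants are dropped. All settings here are assumptions."""
    sigmas = np.geomspace(sigma_min, sigma_max, n_sigma)
    log_sigma = np.log(sigmas)
    # Gaussian log-density is -d*log(sigma) - r^2/(2 sigma^2); integrating over
    # log(sigma) instead of sigma adds a +log(sigma) Jacobian term.
    log_integrand = (1 - d) * log_sigma - r**2 / (2.0 * sigmas**2)
    shift = log_integrand.max()  # log-sum-exp style shift for stability
    f = np.exp(log_integrand - shift)
    integral = np.sum(0.5 * (f[1:] + f[:-1]) * np.diff(log_sigma))  # trapezoid rule
    return -(shift + np.log(integral))

def energy_gradient_norm(r, d, rel_step=1e-3):
    """Central finite-difference estimate of |dE/dr| at radius r."""
    hi = marginal_energy(r * (1 + rel_step), d)
    lo = marginal_energy(r * (1 - rel_step), d)
    return abs(hi - lo) / (2 * r * rel_step)

if __name__ == "__main__":
    d = 8
    for r in (1e-1, 1e-2, 1e-3):
        g = energy_gradient_norm(r, d)
        print(f"r={r:.0e}  |dE/dr|={g:.1f}  r*|dE/dr|={r * g:.2f}")
```

With a finite sigma_min the growth levels off once r drops below roughly sigma_min; the divergence in the text corresponds to letting sigma_min go to zero.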

This visualization captures the core geometric challenge. The energy surface plunges sharply as we approach actual data points, with gradients that would overwhelm any bounded neural network. The deep blue regions represent these singular wells where traditional gradient-based methods would lose stability. Yet autonomous models navigate this treacherous geometry successfully.

The stability paradox resolves through an elegant geometric insight.

The learned vector field doesn't follow the raw energy gradient—it follows a preconditioned version where a local geometric metric counteracts the singularity. In high dimensions, concentration of measure phenomena kick in. The noise level becomes tightly encoded in the norm of the observation itself, allowing the model to implicitly infer what it was never explicitly told.
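The concentration effect can be checked with a small simulation. This is a sketch under assumptions that are not from the talk: clean data normalized so that the squared norm equals the dimension d, and additive noise x = x0 + sigma * eps. Under those assumptions the expected squared norm of x is d * (1 + sigma^2), so the norm alone yields an estimate of sigma whose spread shrinks as d grows. The helper names are hypothetical.

```python
import numpy as np

def sample_noisy(d, sigma, n, rng):
    """Draw n observations x = x0 + sigma * eps, with ||x0|| = sqrt(d) (assumed)."""
    x0 = rng.standard_normal((n, d))
    x0 *= np.sqrt(d) / np.linalg.norm(x0, axis=1, keepdims=True)
    return x0 + sigma * rng.standard_normal((n, d))

def sigma_from_norm(x):
    """Infer sigma from the norm alone, using E||x||^2 = d * (1 + sigma^2)."""
    d = x.shape[-1]
    excess = np.sum(x**2, axis=-1) / d - 1.0
    return np.sqrt(np.clip(excess, 0.0, None))  # clip guards against negative excess

def estimate_stats(d, sigma=0.5, n=500, seed=0):
    """Mean and standard deviation of the norm-based sigma estimate."""
    rng = np.random.default_rng(seed)
    est = sigma_from_norm(sample_noisy(d, sigma, n, rng))
    return est.mean(), est.std()

if __name__ == "__main__":
    for d in (16, 256, 4096):
        mean, std = estimate_stats(d)
        print(f"d={d:5d}  mean estimate={mean:.3f}  spread={std:.3f}")
```

In low dimension the estimate is too noisy to be useful; in high dimension it is tight enough that a network could, in principle, recover the noise level from the input alone.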

Parameterization choice is not a detail—it's fundamental to stability. The authors prove that noise-predicting models suffer from a structural instability: their gain diverges inversely with noise level. When autonomous models misestimate the implicit noise, these errors explode during sampling. Velocity-based parameterizations sidestep this entirely with bounded gains, providing mathematical stability guarantees that noise prediction cannot offer.
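One way to see where an inverse-noise gain can come from is the standard relation between noise prediction and the score of a Gaussian perturbation. The following is an illustrative calculation under assumed conventions (additive noise for the noise-prediction case, a linear interpolation path for the velocity case), not the authors' proof; the symbols are introduced here for illustration.

```latex
% Assumed conventions for illustration; not taken from the talk.
% Noise prediction with additive Gaussian noise:
x = x_0 + \sigma\,\varepsilon, \qquad
\nabla_x \log p(x \mid x_0) = -\frac{x - x_0}{\sigma^2} = -\frac{\varepsilon}{\sigma}.

% An error \delta in the predicted noise becomes an error in the implied score:
\hat{\varepsilon} = \varepsilon + \delta
\;\Longrightarrow\;
\Bigl\| -\tfrac{\hat{\varepsilon}}{\sigma} + \tfrac{\varepsilon}{\sigma} \Bigr\|
= \frac{\|\delta\|}{\sigma}
\;\xrightarrow[\sigma \to 0]{}\; \infty \quad (\delta \neq 0).

% Velocity prediction on a linear path:
x_t = (1 - t)\,x_0 + t\,\varepsilon, \qquad
v = \frac{dx_t}{dt} = \varepsilon - x_0, \qquad
\hat{v} = v + \delta
\;\Longrightarrow\;
\| \hat{v} - v \| = \|\delta\|.
```

In this reading, the same prediction error is amplified by 1/sigma when it passes through the noise-to-score conversion, while it enters a velocity-driven update with unit gain.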

The theoretical predictions manifest starkly in practice. Autonomous noise-predicting models generate the grainy, incoherent samples shown in the top rows—structural instability made visible. The velocity-based Flow Matching Blind architecture produces the crisp, detailed images below, indistinguishable from models with explicit conditioning. This is not incremental improvement; it's the difference between failure and success.

This work fundamentally reframes what we thought was necessary in diffusion modeling. Explicit conditioning was considered essential, but the geometry reveals it's merely one way to stabilize sampling. The real requirement is the right parameterization—one that respects the Riemannian structure implicit in the marginal energy landscape. This insight could simplify architectures and training protocols across generative modeling.

The deep potential wells that should have trapped these models became the very structure that guides them home. Visit EmergentMind.com to explore how geometry, not conditioning, is the true compass of diffusion.