Visual Grounding in Vision-Language Models

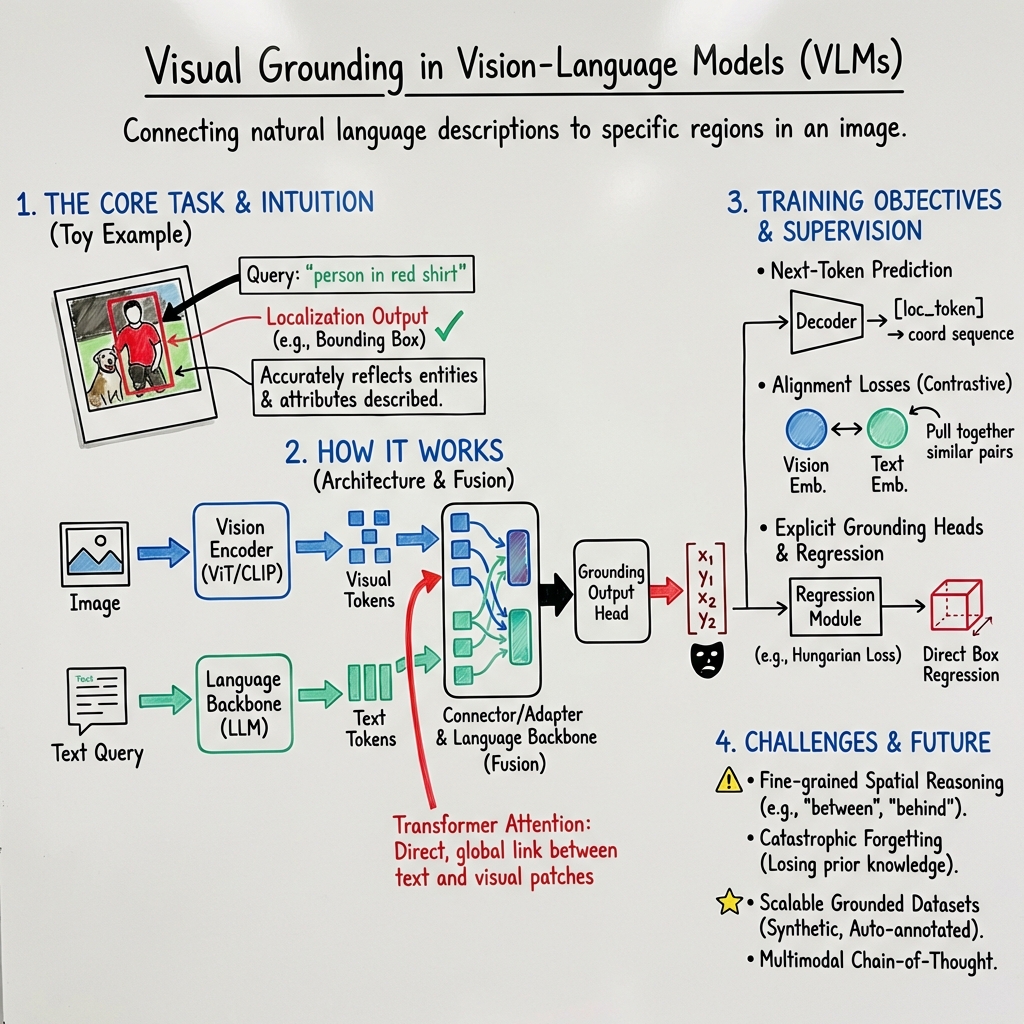

- Visual grounding in VLMs aligns textual cues with precise image regions, producing outputs such as bounding boxes or masks that are evaluated with metrics such as IoU.

- Model architectures leverage transformer-based vision encoders and large language backbones, employing early fusion or interleaved cross-attention for effective multimodal integration.

- Training combines next-token prediction, alignment losses, and reward modeling alongside diverse datasets to enhance precision, generalization, and interpretability.

Visual grounding in vision-language models (VLMs) refers to the capacity to localize the regions of an image or scene that correspond to a given natural language description. This lets a model anchor linguistic expressions to precise perceptual evidence, supporting tasks such as referring expression comprehension, phrase grounding, instruction following, and multimodal visual reasoning. Visual grounding is foundational to trustworthy and interpretable multimodal systems, with applications ranging from robotics and GUI interaction to medical report generation and remote sensing.

1. Core Definition and Formalization

Visual grounding requires that, given a visual input $I$ (typically an image or point cloud) and a free-form text query $q$, a VLM produces a localization output (commonly a bounding box, segmentation mask, point set, or sequence of geometric primitives) that accurately reflects all entities, attributes, and relations described by $q$.

Formally, for image $I$ and query $q$, the model outputs a set of predicted regions $\hat{R} = \{\hat{r}_1, \dots, \hat{r}_n\}$. These are compared against ground-truth regions $R^{*}$ via metrics such as Intersection-over-Union (IoU) (Pantazopoulos et al., 12 Sep 2025). In advanced settings, grounding may extend to oriented boxes, full-resolution segmentation masks, or even 3D traces and trajectories (Zhou et al., 2024, Xue et al., 30 Sep 2025).
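
As a concrete illustration of the IoU comparison described above, the following minimal sketch computes IoU for two axis-aligned boxes. The `(x_min, y_min, x_max, y_max)` box layout and the function name `box_iou` are assumptions made for this example; they are not taken from any of the cited works.

```python
from typing import Tuple

# Assumed box layout for this sketch: (x_min, y_min, x_max, y_max).
Box = Tuple[float, float, float, float]


def box_area(box: Box) -> float:
    """Area of an axis-aligned box; degenerate boxes have zero area."""
    return max(0.0, box[2] - box[0]) * max(0.0, box[3] - box[1])


def box_iou(pred: Box, gt: Box) -> float:
    """Intersection-over-Union between a predicted and a ground-truth box."""
    # Corners of the intersection rectangle (may be empty).
    ix_min = max(pred[0], gt[0])
    iy_min = max(pred[1], gt[1])
    ix_max = min(pred[2], gt[2])
    iy_max = min(pred[3], gt[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    union = box_area(pred) + box_area(gt) - inter
    # Two degenerate boxes have no union; report zero overlap.
    return inter / union if union > 0.0 else 0.0
```

For example, boxes `(0, 0, 2, 2)` and `(1, 1, 3, 3)` overlap in a unit square and cover a combined area of 7, giving an IoU of 1/7.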

Grounding objectives are typically cast as either sequence-level cross-entropy over region coordinates/tokens, or as region–language retrieval/alignment losses (contrastive or classification) (Pantazopoulos et al., 12 Sep 2025).
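
To make the "cross-entropy over region coordinates/tokens" formulation more tangible, here is one possible way to turn a box into discrete tokens that a decoder can predict one at a time. The `<loc_k>` token format, the choice of 1000 bins, and both function names are hypothetical choices for this sketch; individual models use their own vocabularies and coordinate conventions.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # assumed (x_min, y_min, x_max, y_max) in pixels


def box_to_tokens(box: Box, width: float, height: float, num_bins: int = 1000) -> List[str]:
    """Quantize a pixel-space box into four hypothetical location tokens."""
    x0, y0, x1, y1 = box
    normalized = (x0 / width, y0 / height, x1 / width, y1 / height)
    tokens = []
    for value in normalized:
        value = min(max(value, 0.0), 1.0)  # clamp to the image
        index = min(int(value * num_bins), num_bins - 1)  # bin index in [0, num_bins)
        tokens.append(f"<loc_{index}>")
    return tokens


def tokens_to_box(tokens: Sequence[str], width: float, height: float, num_bins: int = 1000) -> Box:
    """Decode four location tokens back to pixel coordinates (bin centres)."""
    # "<loc_" is five characters long and the token ends with ">".
    centres = [(int(tok[5:-1]) + 0.5) / num_bins for tok in tokens]
    return (centres[0] * width, centres[1] * height, centres[2] * width, centres[3] * height)
```

With this encoding, grounding reduces to ordinary next-token prediction over four extra vocabulary items per box, at the cost of a quantization error of at most one bin width per coordinate.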

2. Model Architectures and Fusion Strategies

Visual grounding models generally follow a modular design:

- Vision Encoder: Typically a transformer-based network (e.g., ViT, CLIP) encodes input images/patches into a sequence of visual tokens, often pretrained with contrastive losses (Pantazopoulos et al., 12 Sep 2025).

- Connector/Adapter: Projects visual token embeddings into the LLM’s hidden space, preserving either grid locality (CNN/MLP) or compressing via attention resamplers (Q-former, Perceiver) (Pantazopoulos et al., 12 Sep 2025, Guo et al., 23 Aug 2025, Zhou et al., 2024).

- Language Backbone: A large autoregressive transformer (e.g., LLaMA, Vicuna) processes the concatenated visual and text tokens (Pantazopoulos et al., 2024, Mahajan et al., 21 Nov 2025).

Fusion paradigms vary:

- Early fusion: Visual tokens are prepended to the text tokens at the input, so every LLM layer processes both modalities jointly [MiniGPT-v2].

- Interleaved cross-attention: Cross-attention modules are inserted at select layers, enabling information flow between visual and textual streams at multiple depths (Mahajan et al., 21 Nov 2025, Guo et al., 23 Aug 2025).
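
The difference between the two fusion paradigms can be sketched in a few lines. This is a deliberately simplified, single-head illustration in plain Python: it omits the learned query/key/value projections, multiple heads, and layer normalization that real models use, and the function names are assumptions for this example only.

```python
import math
from typing import List

Vector = List[float]


def early_fusion(visual_tokens: List[Vector], text_tokens: List[Vector]) -> List[Vector]:
    """Early fusion: one joint sequence, visual tokens first, fed to every LLM layer."""
    return list(visual_tokens) + list(text_tokens)


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(text_tokens: List[Vector], visual_tokens: List[Vector]) -> List[Vector]:
    """Cross-attention: each text token queries the visual tokens and adds the result."""
    dim = len(visual_tokens[0])
    outputs = []
    for query in text_tokens:
        # Scaled dot-product score between this text token and every visual token.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in visual_tokens]
        weights = softmax(scores)
        # Attention-weighted average of the visual tokens.
        attended = [sum(w * v[d] for w, v in zip(weights, visual_tokens)) for d in range(dim)]
        # Residual connection keeps the text stream intact.
        outputs.append([q + a for q, a in zip(query, attended)])
    return outputs
```

In the early-fusion case the sequence length grows by the number of visual tokens, whereas cross-attention keeps the text sequence length fixed and injects visual information only at the layers where the module is inserted.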

Recent work reports that transformer backbones systematically outperform structured state-space models (SSMs) on grounding tasks, attributing the gap to direct, global attention over all prior tokens (Pantazopoulos et al., 2024).

3. Training Objectives and Supervision

Grounding tasks require supervision that bridges both modalities:

- Next-token Prediction: Standard decoder objective for generating token sequences encoding region coordinates or masks (Luo et al., 2024, Toker et al., 9 Dec 2025).

- Alignment Losses: Contrastive and cross-modal alignment objectives (e.g., CLIP/SigLIP-style) that pull matching vision and text embeddings together (Pantazopoulos et al., 12 Sep 2025, Xu et al., 2023).

- Auxiliary Supervision: KL-based attention loss (KLAL) aligns internal attention maps with ground-truth region annotations, directly steering the model to attend to correct patches while generating answer tokens (Esmaeilkhani et al., 16 Nov 2025).

- Explicit Grounding Heads: Structured localization modules with control tokens (e.g., ⟨bb⟩, ⟨loc⟩) enable direct regression of box coordinates within the VLM, leveraging permutation-invariant matching (Hungarian loss) and geometry-aware objectives (Toker et al., 9 Dec 2025).

- Reward Modeling: Fine-grained human or automated predicates (e.g., object presence, attribute accuracy, relation consistency) are used to select or weight model outputs during supervised or RLHF-style training, enhancing factuality and faithfulness (Yan et al., 2024).
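
To illustrate the attention-supervision idea from the list above, the sketch below builds a target distribution over image patches from a ground-truth box and measures how far a model's attention map is from it using a KL divergence. This is not the formulation from the cited work: the uniform target over in-box patches, the direction KL(target || attention), the square image and grid, and both function names are assumptions made for the example.

```python
import math
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # assumed (x_min, y_min, x_max, y_max) in pixels


def box_to_patch_target(box: Box, grid_size: int, image_size: float) -> List[float]:
    """Uniform distribution over patches whose centre lies inside the box (row-major)."""
    x0, y0, x1, y1 = box
    patch = image_size / grid_size
    mask = []
    for row in range(grid_size):
        for col in range(grid_size):
            cx, cy = (col + 0.5) * patch, (row + 0.5) * patch
            mask.append(1.0 if (x0 <= cx <= x1 and y0 <= cy <= y1) else 0.0)
    total = sum(mask)
    if total == 0.0:
        # No patch centre falls in the box: fall back to a uniform target.
        return [1.0 / len(mask)] * len(mask)
    return [m / total for m in mask]


def kl_attention_loss(attention: List[float], target: List[float], eps: float = 1e-8) -> float:
    """KL(target || attention); zero when attention matches the target distribution."""
    return sum(
        t * math.log((t + eps) / (a + eps))
        for a, t in zip(attention, target)
        if t > 0.0
    )
```

On a 2x2 grid where only the top-left patch is inside the box, attention concentrated on that patch gives a loss near zero, while uniform attention gives a loss of about ln 4.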

Hierarchical designs (coarse-to-fine) further improve grounding by linking global context perception to local refinement and semantic validation, thus suppressing hallucinations (Guo et al., 23 Aug 2025).

4. Evaluation Protocols and Benchmarks

Grounding evaluation utilizes several standardized metrics across benchmarks:

- IoU Accuracy: Percentage of predicted boxes/masks with IoU ≥ 0.5 relative to ground truth [RefCOCO, RefCOCO+, RefCOCOg].

- Pointing Game: Checks whether the top activation or predicted point falls within the annotated ground-truth region (Rajabi et al., 2024).

- PG Uncertainty: Quantifies ambiguity when multiple top scoring regions straddle the box boundary (Rajabi et al., 2024).

- Soft IoU/Dice: Continuous overlap scores measuring GradCAM saliency against ground-truth masks (Rajabi et al., 2024).

- Generalized Precision, F1: One-to-one region matching at specified IoU thresholds; sample-level for multi-object tasks (Pantazopoulos et al., 12 Sep 2025, Zhou et al., 2024).

- Embodied Reasoning Metrics: Multi-stage protocols assess grounding, action-point selection, and trajectory/planning quality in robot or GUI contexts (Xue et al., 30 Sep 2025).

- Medical and Remote Sensing Applications: Specialized metrics such as RoDeO in medical imaging and rotated-IoU in remote sensing for oriented object grounding (Li et al., 5 Mar 2025, Zhou et al., 2024).
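
Three of the simpler metrics in the list above can be sketched as follows: accuracy at an IoU threshold, the pointing-game hit test, and a soft Dice score between a saliency map and a binary mask. The function names, the `(x_min, y_min, x_max, y_max)` box layout, and the flattened-list representation of masks are assumptions for this example, not a reference implementation of any benchmark's official scorer.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # assumed (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Axis-aligned IoU, repeated here so the block is self-contained."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0.0 else 0.0


def accuracy_at_iou(preds: Sequence[Box], gts: Sequence[Box], threshold: float = 0.5) -> float:
    """Fraction of predictions whose IoU with the paired ground truth meets the threshold."""
    if not preds:
        return 0.0
    hits = sum(1 for p, g in zip(preds, gts) if iou(p, g) >= threshold)
    return hits / len(preds)


def pointing_game_hit(point: Tuple[float, float], gt: Box) -> bool:
    """True if the predicted point (x, y) lies inside the ground-truth box."""
    x, y = point
    return gt[0] <= x <= gt[2] and gt[1] <= y <= gt[3]


def soft_dice(saliency: List[float], mask: List[float], eps: float = 1e-8) -> float:
    """Soft Dice between a saliency map in [0, 1] and a binary mask (both flattened)."""
    intersection = sum(s * m for s, m in zip(saliency, mask))
    return 2.0 * intersection / (sum(saliency) + sum(mask) + eps)
```

Note that `accuracy_at_iou` assumes one prediction per ground-truth region; multi-object settings need a one-to-one matching step before precision or F1 can be computed.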

Extensive ablation studies probe the contributions of fusion pattern, grounding loss, semantic supervision, and cross-modal attention regularization (Pantazopoulos et al., 2024, Guo et al., 23 Aug 2025).

5. Data Synthesis, Scalability, and Domain Transfer

Visual grounding research depends on high-quality, large-scale datasets covering diverse domains:

- Synthetic Chain-of-Thought Data: SCOUT produces multi-turn, stepwise reasoning traces with bounding box supervision, supporting data-efficient, forget-free fine-tuning (Bhowmik et al., 2024).

- Generative VLM Annotation: Recent approaches leverage generative VLMs to auto-annotate vast quantities of image–region–caption pairs, scaling up grounding datasets to hundreds of thousands of images and millions of referring expressions (Wang et al., 2024).

- Bootstrapped Medical Data: Phrase extraction via LLMs paired with open-source detectors and segmenters yields grounded report datasets robust to 2D/3D and structural variability (Luo et al., 2024, Zhang et al., 11 Jan 2026).

- Verification Pipelines: Multi-stage schema, geometry-prior, and visual model checks ensure annotation quality and semantic consistency, with explicit filtering for ambiguous or unsupported queries (Zhang et al., 11 Jan 2026).

- Hybrid Guidance and Region Ranking: Contrastive region guidance (CRG) enables zero-shot region selection and response guidance by contrasting model output under original and masked images, significantly improving accuracy without additional training (Wan et al., 2024).
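
The contrast between original and masked images that underlies region ranking can be sketched as below. Each candidate region is blacked out in turn, and regions are ranked by how much the model's log-probability for the answer drops. Everything here is illustrative: `answer_logprob` stands in for a real VLM scoring call, `toy_logprob` is a made-up scorer used only to make the example runnable, and the sketch captures only the contrast idea rather than the full guidance procedure of the cited method.

```python
import math
from typing import Callable, List, Sequence, Tuple

Image = List[List[float]]         # toy grayscale image as rows of pixel values
Box = Tuple[int, int, int, int]   # assumed (x0, y0, x1, y1), end-exclusive pixel indices


def mask_region(image: Image, box: Box) -> Image:
    """Return a copy of the image with the given region set to zero."""
    x0, y0, x1, y1 = box
    return [
        [0.0 if (x0 <= x < x1 and y0 <= y < y1) else value for x, value in enumerate(row)]
        for y, row in enumerate(image)
    ]


def rank_regions(
    image: Image,
    boxes: Sequence[Box],
    answer_logprob: Callable[[Image], float],
) -> List[Tuple[float, Box]]:
    """Rank boxes by the drop in answer log-probability when each one is masked."""
    base = answer_logprob(image)
    scored = [(base - answer_logprob(mask_region(image, box)), box) for box in boxes]
    return sorted(scored, key=lambda item: item[0], reverse=True)


def toy_logprob(image: Image) -> float:
    """Hypothetical scorer: the 'answer' is more likely when the image is brighter."""
    pixels = [value for row in image for value in row]
    return math.log(0.01 + sum(pixels) / len(pixels))


# Toy 4x4 image whose only evidence is a bright 2x2 patch in the top-left corner.
toy_image = [[1.0 if (x < 2 and y < 2) else 0.0 for x in range(4)] for y in range(4)]
ranking = rank_regions(toy_image, [(2, 2, 4, 4), (0, 0, 2, 2)], toy_logprob)
```

In this toy setup the top-left box ranks first, because masking it removes all the evidence the scorer relies on, while masking the bottom-right box changes nothing. Each candidate region costs one extra forward pass, which is the source of the additional inference cost associated with this family of methods.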

Models trained on hybrid and verified grounded corpora display notable improvements in multi-object semantic sensitivity, domain invariance, and localization precision (Zhang et al., 11 Jan 2026, Zhou et al., 2024).

6. Domain Specialization and Extensions

Visual grounding in specialized domains necessitates domain-aware adaptation:

- Medical VLMs: Grounding is extended to both segmentation and detection, with support for anatomical structures (segmentation) and focal abnormalities (bounding boxes), backed by knowledge decomposition strategies, prompt tuning, and dynamic patch embedding for volumetric (3D) data (Luo et al., 2024, Li et al., 5 Mar 2025).

- Remote Sensing: GeoGround and SATGround equip generalist VLMs with flexible output heads supporting horizontal boxes, oriented boxes, and segmentation masks. They rely on text-based mask encoding and control tokens, together with geometry-guided and prompt-assisted learning for consistent signal integration (Zhou et al., 2024, Toker et al., 9 Dec 2025).

- 3D Visual Grounding: Weakly supervised and zero-shot approaches bridge 3D point clouds and natural language via hybrid representations (rendered views + spatial descriptions) and fusion modules, with robust viewpoint adaptation and alignment scoring (Li et al., 28 May 2025, Xu et al., 2024, Xu et al., 2023).

- Embodied Agents: Hierarchical benchmarks evaluate models not only on pixel-level localization (S1), but also on action-driven task-pointing (S2) and multi-step trajectory prediction (S3), revealing a split between precise grounding and planning competence (Xue et al., 30 Sep 2025).
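
For the oriented boxes mentioned in the remote sensing item above, the relationship between an oriented box and its horizontal counterpart can be sketched as follows. The `(cx, cy, w, h, angle)` parameterization, the counter-clockwise angle in degrees, and the function names are assumptions for this example; datasets and models differ in their angle conventions.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def oriented_box_corners(cx: float, cy: float, w: float, h: float, angle_deg: float) -> List[Point]:
    """Four corners of a box of size (w, h) centred at (cx, cy), rotated by angle_deg."""
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    corners = []
    # Corner offsets in the box's own frame, rotated and then shifted to the centre.
    for dx, dy in ((-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)):
        corners.append((cx + dx * cos_t - dy * sin_t, cy + dx * sin_t + dy * cos_t))
    return corners


def enclosing_horizontal_box(corners: List[Point]) -> Tuple[float, float, float, float]:
    """Smallest axis-aligned box (x_min, y_min, x_max, y_max) containing all corners."""
    xs = [x for x, _ in corners]
    ys = [y for _, y in corners]
    return (min(xs), min(ys), max(xs), max(ys))
```

A 4x2 box rotated by 90 degrees has an enclosing horizontal box of size 2x4, which shows why horizontal boxes can overstate the extent of elongated, rotated objects and why rotated-IoU is preferred for them.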

7. Current Limitations and Research Directions

Despite recent progress, several challenges persist:

- Fine-grained Spatial Reasoning: Models struggle with complex relations (e.g., "between," occlusion, stacking) and out-of-distribution visual patterns (Li et al., 28 May 2025, Xu et al., 2024).

- Catastrophic Forgetting: Naive fine-tuning for grounding can erase upstream image-understanding skills; dual-expert and forget-free tuning architectures mitigate but do not eliminate this risk (Bhowmik et al., 2024).

- Attention Leakage: Without explicit guidance, LLM layers often default to language priors; direct attention supervision (KLAL) and knowledge distillation from mask models (SAM) provide substantial grounding gains (Esmaeilkhani et al., 16 Nov 2025, Mahajan et al., 21 Nov 2025).

- Dataset Noise and Domain Gap: Training on synthetic or auto-generated queries may propagate model biases; carefully designed verification and semantic diversity mechanisms are required (Zhang et al., 11 Jan 2026).

- Efficient Region Linking: CRG and contrastive approaches offer zero-shot region re-ranking but incur additional inference costs and depend on the quality of region proposals (Wan et al., 2024).

- Generalization to Unseen Classes and Modalities: Decomposed knowledge prompts, explicit spatial cues, and geometry-aware heads facilitate transfer but may need richer attribute ontologies and adaptive fusion (Li et al., 5 Mar 2025, Zhou et al., 2024).

Future directions include scalable grounded dataset creation, integration of multimodal chain-of-thought with grounding rewards, development of hybrid SSM–attention architectures for efficient long-sequence grounding, and systematic benchmarks spanning physical interaction, web environments, and specialized imaging domains (Pantazopoulos et al., 12 Sep 2025, Guo et al., 23 Aug 2025, Li et al., 28 May 2025).

Visual grounding in VLMs is now characterized by multimodal fusion strategies, explicit and implicit region alignment objectives, hierarchical reasoning pipelines, and an emerging ecosystem of scalable benchmarks, data augmentation protocols, and domain-specialized frameworks. Research continues to advance robust, data-efficient, and interpretable grounding across increasingly complex domains and tasks.