- The paper introduces AutoSkills, a framework that automatically extracts, refines, and expands a hierarchical skill knowledge base for LLM agents.

- It demonstrates significant performance improvements (~10-point task success boost) for weaker models through a multi-level skills representation.

- The iterative refinement and exploration strategies enable efficient API usage and robust knowledge transfer across diverse environments.

SkillX: Automated Construction of Transferable Skill Knowledge Bases for LLM Agents

Introduction

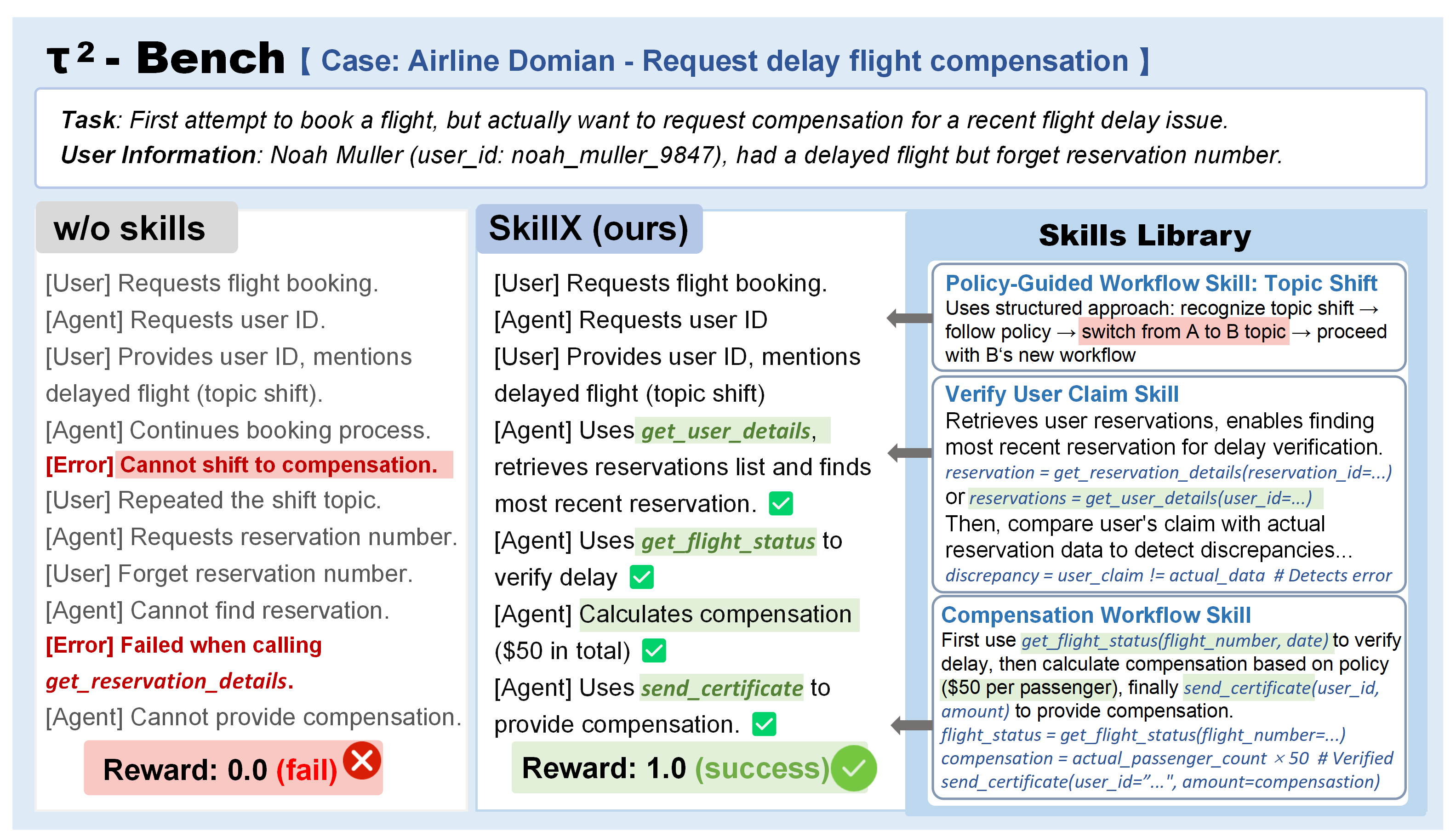

SkillX (2604.04804) systematically addresses the inefficiencies and limited generalization of prevailing experience-driven paradigms in LLM agent development. The paper introduces AutoSkills, a fully automated framework for extracting, refining, and expanding a reusable hierarchical skills knowledge base (SkillKB) that is applicable across diverse agents and environments. Unlike prior workflow- or trajectory-based experience representations, which often suffer from poor retrieval and brittle executability, AutoSkills leverages a multi-level skill hierarchy and iterative data-driven optimization strategies to mitigate redundant exploration and maximize knowledge transfer.

Multi-Level Skill Representation and Automated Pipeline

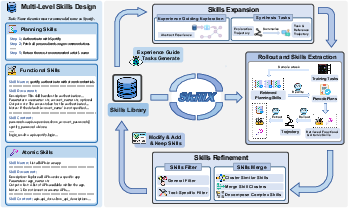

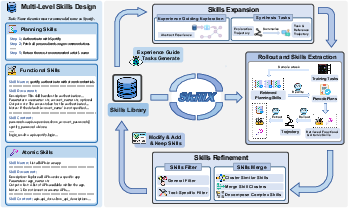

AutoSkills organizes the skills library into three distinct abstraction levels: planning skills, functional skills, and atomic skills. Planning skills represent high-level organizational knowledge, guiding functional skill composition for task decomposition; functional skills encapsulate reusable, tool-based subroutines for sub-task resolution; atomic skills encode fine-grained execution patterns, constraints, and API usage nuances.

Figure 1: AutoSkills provides an automated, iterative pipeline for constructing a skills library at three levels: planning, functional, and atomic skills.
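To make the three-level hierarchy concrete, the following is a minimal sketch of how such a library could be stored and traversed. It is not the paper's data model: the class names `Skill` and `SkillKB`, the `uses` field, and the example skill names are all assumptions introduced for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class SkillLevel(Enum):
    PLANNING = "planning"      # high-level task decomposition
    FUNCTIONAL = "functional"  # reusable, tool-based subroutines
    ATOMIC = "atomic"          # per-API usage patterns and constraints


@dataclass
class Skill:
    name: str
    level: SkillLevel
    description: str
    # Names of lower-level skills this skill composes (hypothetical field).
    uses: list[str] = field(default_factory=list)


@dataclass
class SkillKB:
    skills: dict[str, Skill] = field(default_factory=dict)

    def add(self, skill: Skill) -> None:
        self.skills[skill.name] = skill

    def by_level(self, level: SkillLevel) -> list[Skill]:
        return [s for s in self.skills.values() if s.level == level]

    def expand(self, name: str) -> list[str]:
        """Return the skill and everything it composes, depth-first."""
        seen: list[str] = []
        stack = [name]
        while stack:
            current = stack.pop()
            if current in seen or current not in self.skills:
                continue
            seen.append(current)
            # Reverse so that children are visited in their declared order.
            stack.extend(reversed(self.skills[current].uses))
        return seen


# Hypothetical example entries, one per level.
kb = SkillKB()
kb.add(Skill("paginate_results", SkillLevel.ATOMIC,
             "Request pages until an empty page is returned."))
kb.add(Skill("collect_playlist_songs", SkillLevel.FUNCTIONAL,
             "Gather all songs of a playlist.", uses=["paginate_results"]))
kb.add(Skill("manage_playlist", SkillLevel.PLANNING,
             "Decompose a playlist task into sub-tasks.",
             uses=["collect_playlist_songs"]))

plan = kb.expand("manage_playlist")
```

Storing composition links by name keeps each level independently retrievable while still letting a planning skill be unrolled into the functional and atomic skills it depends on.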

The pipeline comprises three tightly coupled phases:

- Skills Extraction: Successful task trajectories generated by a backbone agent are distilled into the structured, multi-level hierarchy.

- Skills Refinement: The extracted skills undergo iterative consolidation (merging functionally redundant skills and strictly filtering against tool schemas and abstraction standards), progressively enhancing actionability and transfer robustness.

- Skills Expansion: The system proactively explores under-utilized or failure-prone APIs, synthesizing new tasks and extracting novel skills to systematically extend the coverage of the initial library, transcending the limitations of seed training data.

This automated, modular extraction and refinement loop enhances both the compositional expressivity and generalization potential of the resulting SkillKB, directly facilitating plug-and-play extension of LLM agent competencies.
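The refinement phase can be illustrated with a small sketch of one merge-and-filter pass. This is a simplified stand-in under stated assumptions, not the paper's procedure: redundancy is approximated here by an identical API call sequence, and the names `FunctionalSkill`, `passes_schema`, `merge_redundant`, `refine_once`, and the `music.*` APIs are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FunctionalSkill:
    name: str
    description: str
    # Ordered (api_name, argument_names) pairs the skill invokes.
    calls: tuple


def passes_schema(skill, tool_schema):
    """Keep a skill only if every call uses a known API and known arguments."""
    for api, args in skill.calls:
        if api not in tool_schema:
            return False
        if not set(args) <= tool_schema[api]:
            return False
    return True


def merge_redundant(skills):
    """Collapse skills with the same API sequence, keeping the richest description.

    Assumption: an identical call sequence is used as a proxy for
    "functionally redundant"; the longest description is kept.
    """
    merged = {}
    for skill in skills:
        key = tuple(api for api, _ in skill.calls)
        kept = merged.get(key)
        if kept is None or len(skill.description) > len(kept.description):
            merged[key] = skill
    return list(merged.values())


def refine_once(skills, tool_schema):
    valid = [s for s in skills if passes_schema(s, tool_schema)]
    return merge_redundant(valid)


# Hypothetical tool schema: API name -> allowed argument names.
tool_schema = {
    "music.login": {"username", "password"},
    "music.show_playlist": {"playlist_id", "access_token"},
}
login_then_show = (
    ("music.login", ("username", "password")),
    ("music.show_playlist", ("playlist_id", "access_token")),
)
skills = [
    FunctionalSkill("get_playlist", "Log in, then fetch a playlist.",
                    login_then_show),
    FunctionalSkill("fetch_playlist_v2",
                    "Log in, then fetch a playlist with the returned token.",
                    login_then_show),
    # Uses an argument name that is not in the schema, so it is filtered out.
    FunctionalSkill("broken", "Fetch a playlist.",
                    (("music.show_playlist", ("id",)),)),
]

refined = refine_once(skills, tool_schema)
```

Filtering before merging ensures that a schema-violating skill can never be chosen as the surviving representative of a merged group.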

Empirical Evaluation and Analysis

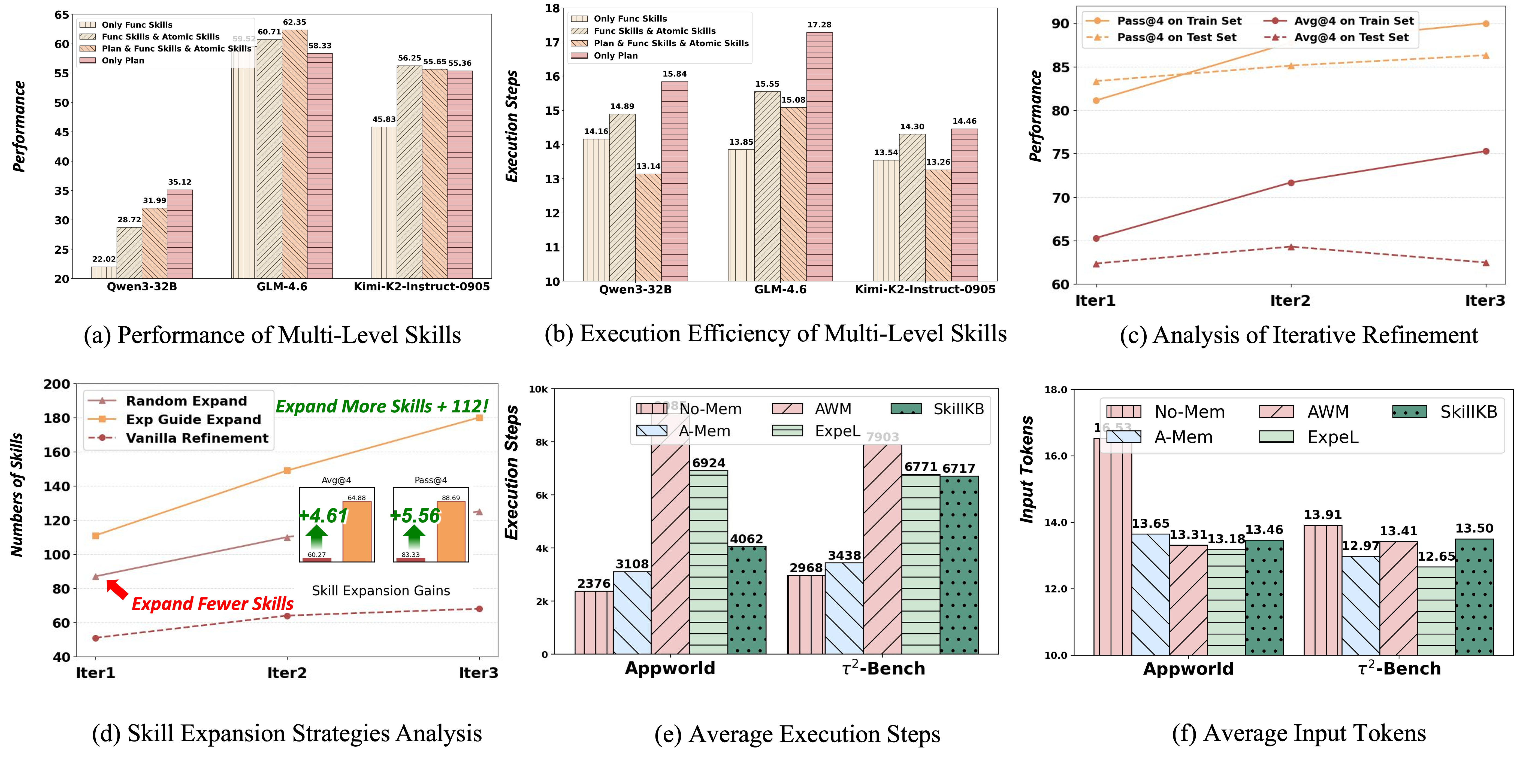

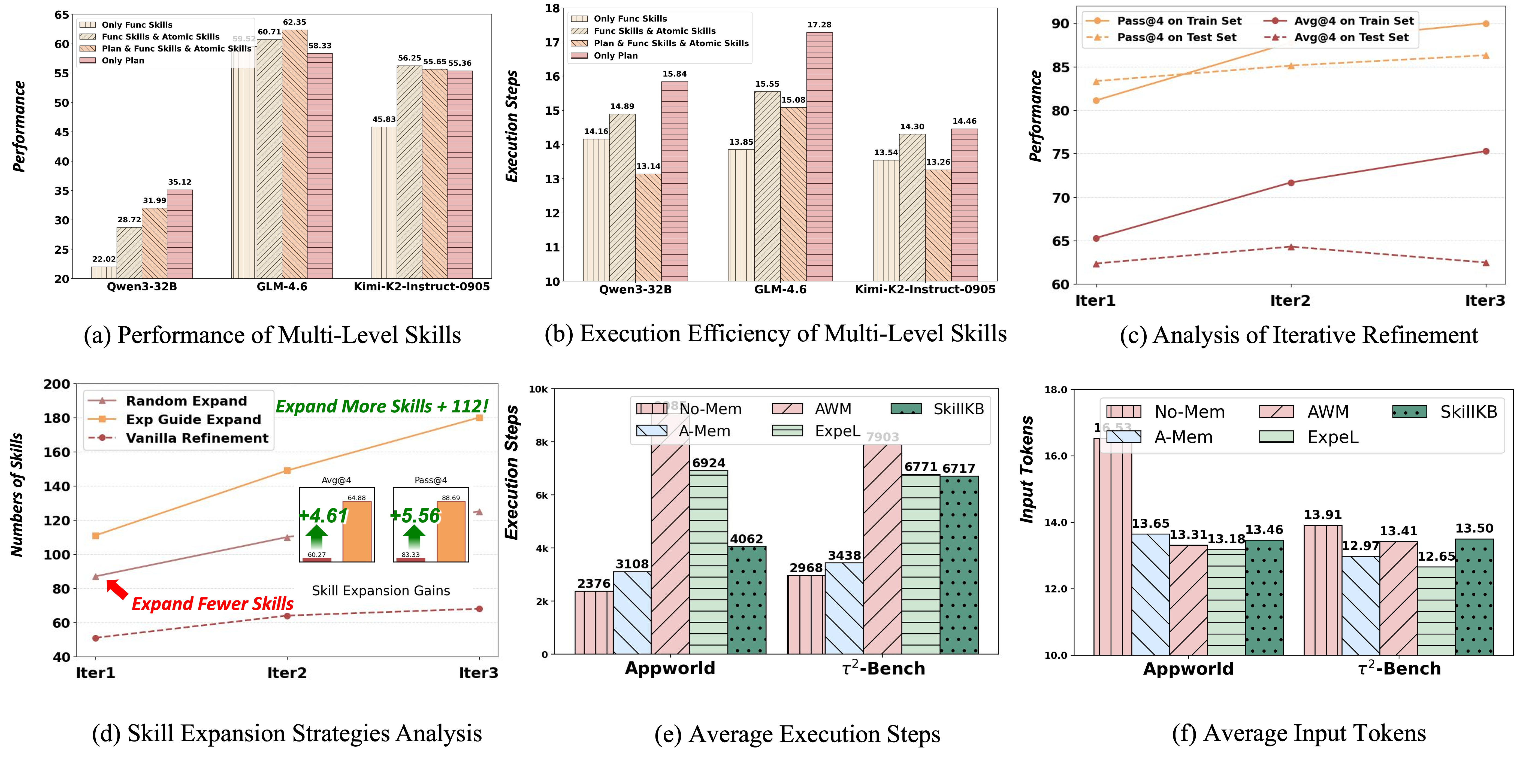

Comprehensive evaluation is performed across AppWorld, BFCL-v3, and τ2-Bench, each requiring long-horizon, user-interactive, and tool-centric reasoning. SkillKBs constructed with a strong GLM-4.6 agent significantly enhance the performance of weaker models (e.g., Qwen3-32B, Kimi-K2-Instruct-0905), yielding improvements of roughly 10 points in task success along with better execution efficiency. Gains are present regardless of whether skill extraction is aligned or cross-model, but optimal transfer requires the multi-level, hierarchical representation; trajectory-centered or workflow-centered baselines (e.g., ExpeL, AWM) consistently underperform relative to AutoSkills.

Figure 2: Detailed analysis of AutoSkills shows compositional skill usage and iterative expansion/refinement directly impact both success rate and execution efficiency across multiple axes.

The analysis demonstrates:

- Planning skills yield the most significant reduction in execution steps for weaker models, while functional skills deliver the largest direct performance boosts.

- Atomic skills play a critical role in clarifying API usage; omission degrades performance due to increased susceptibility to under-specified or failed action sequences.

- Iterative refinement (merging/filtering cycles) progressively improves both test and train success rates, although excessive rounds can induce overfitting, particularly in limited-data regimes.

- An experience-guided expansion strategy, as opposed to random exploration, produces more diverse and higher-quality skills, driving improved generalization and sample efficiency.

- Execution efficiency: SkillKB-augmented models perform more robustly with fewer tokens and fewer steps, crucial for scaling agent deployments under resource constraints.
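The contrast between experience-guided and random expansion can be sketched as a ranking over APIs based on past trajectories. The scoring rule below (rarity plus failure rate, with never-used APIs ranked first) is an assumption chosen for illustration, as are the function name `rank_apis_for_exploration` and the example API names; the source only states that under-utilized or failure-prone APIs are targeted.

```python
from collections import Counter


def rank_apis_for_exploration(all_apis, trajectories, top_k=2):
    """Return the top_k APIs to explore, rarely used or failing ones first.

    trajectories: iterable of lists of (api_name, succeeded) pairs.
    """
    calls = Counter()
    failures = Counter()
    for trajectory in trajectories:
        for api, succeeded in trajectory:
            calls[api] += 1
            if not succeeded:
                failures[api] += 1

    max_calls = max(calls.values(), default=0)

    def score(api):
        n = calls[api]
        if n == 0:
            # Never used: always outranks used APIs (hypothetical weighting).
            return 2.0
        rarity = 1.0 - n / max_calls   # 0 for the most-used API
        failure_rate = failures[api] / n
        return rarity + failure_rate

    # Sort by descending score; break ties alphabetically for determinism.
    ranked = sorted(all_apis, key=lambda api: (-score(api), api))
    return ranked[:top_k]


# Hypothetical usage history: "export" was never called, "refund" failed once.
all_apis = ["login", "search", "refund", "export"]
trajectories = [
    [("login", True), ("search", True)],
    [("login", True), ("search", True), ("refund", False)],
    [("login", True)],
]

targets = rank_apis_for_exploration(all_apis, trajectories, top_k=2)
```

New tasks would then be synthesized around the selected APIs, whereas a random strategy would sample from `all_apis` uniformly and spend much of its budget on APIs the library already covers well.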

Practical Case Studies

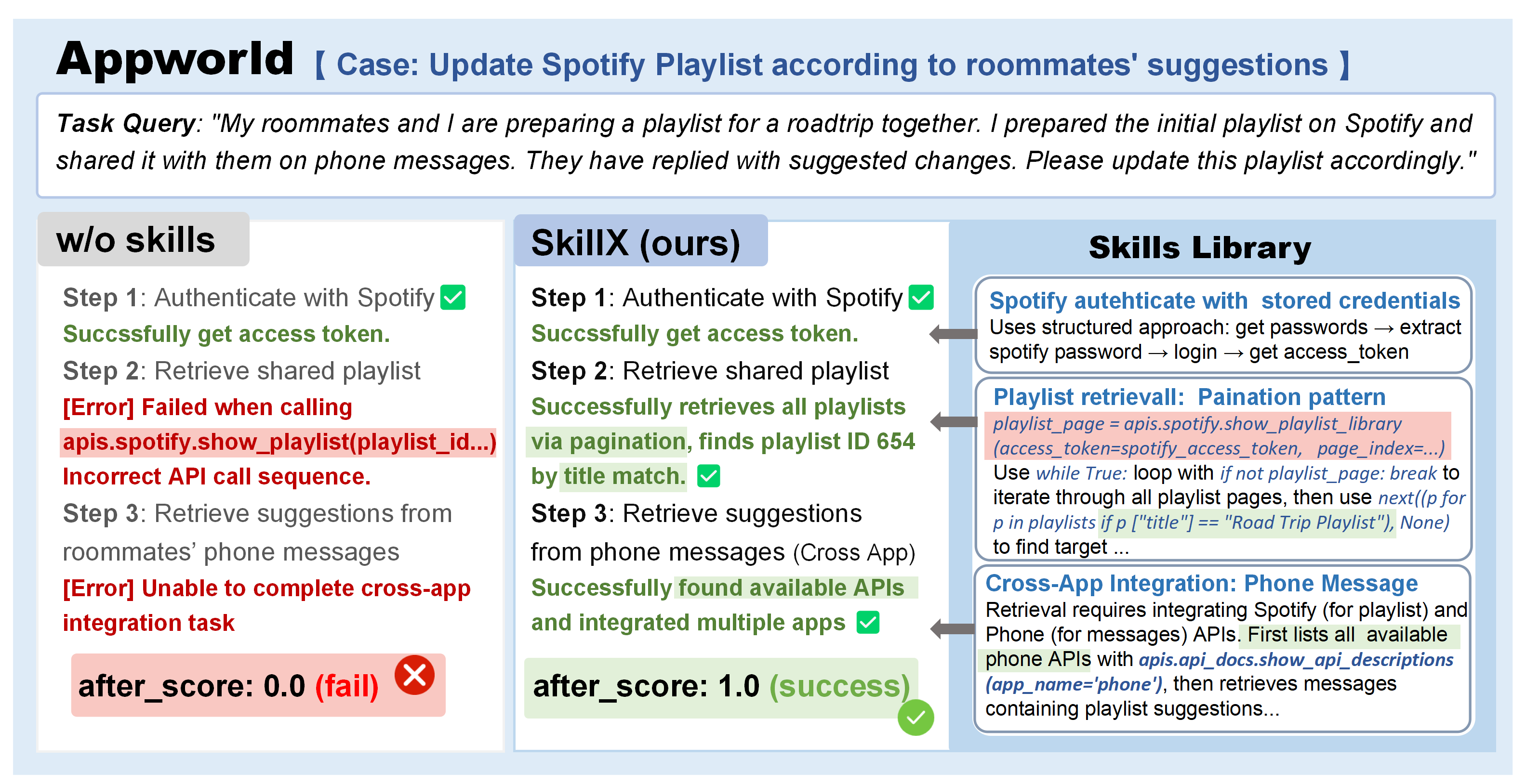

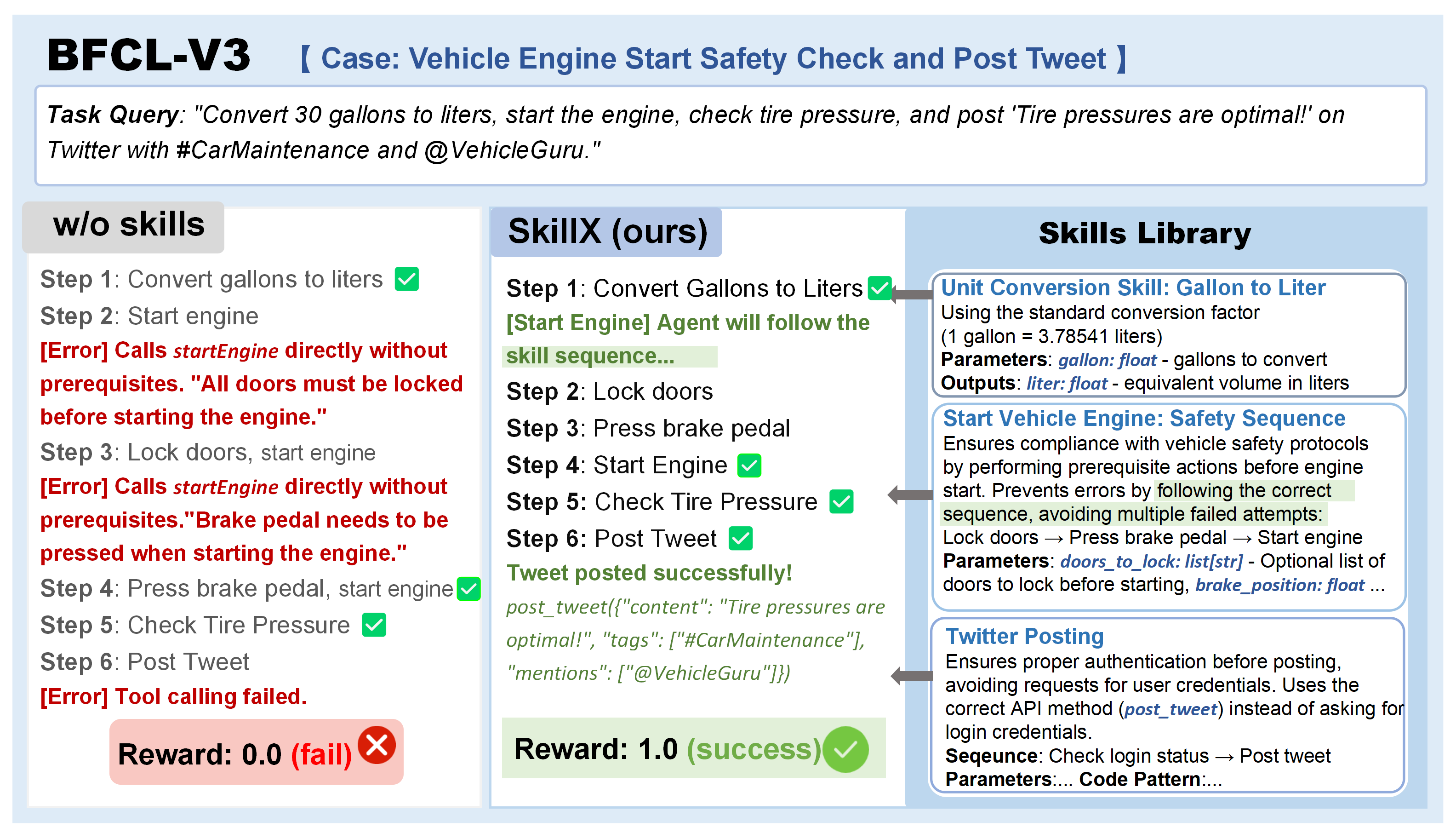

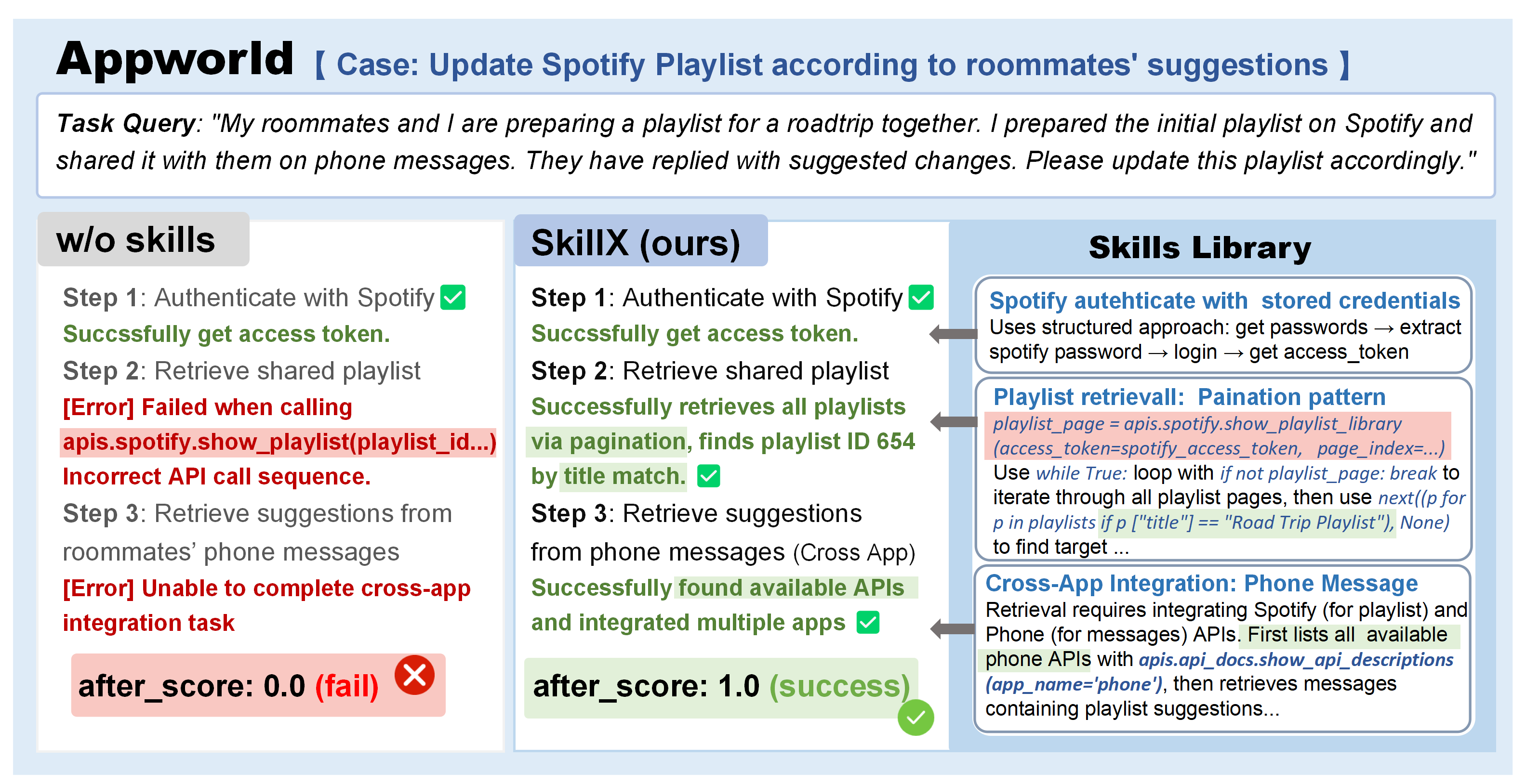

Case analyses on representative scenarios highlight AutoSkills’ operational impacts:

Figure 3: In AppWorld, AutoSkills enables correct multi-app API choreography (e.g., playlist management), resolving cross-application intent and handling pagination, both of which are failure points for non-skill-augmented agents.

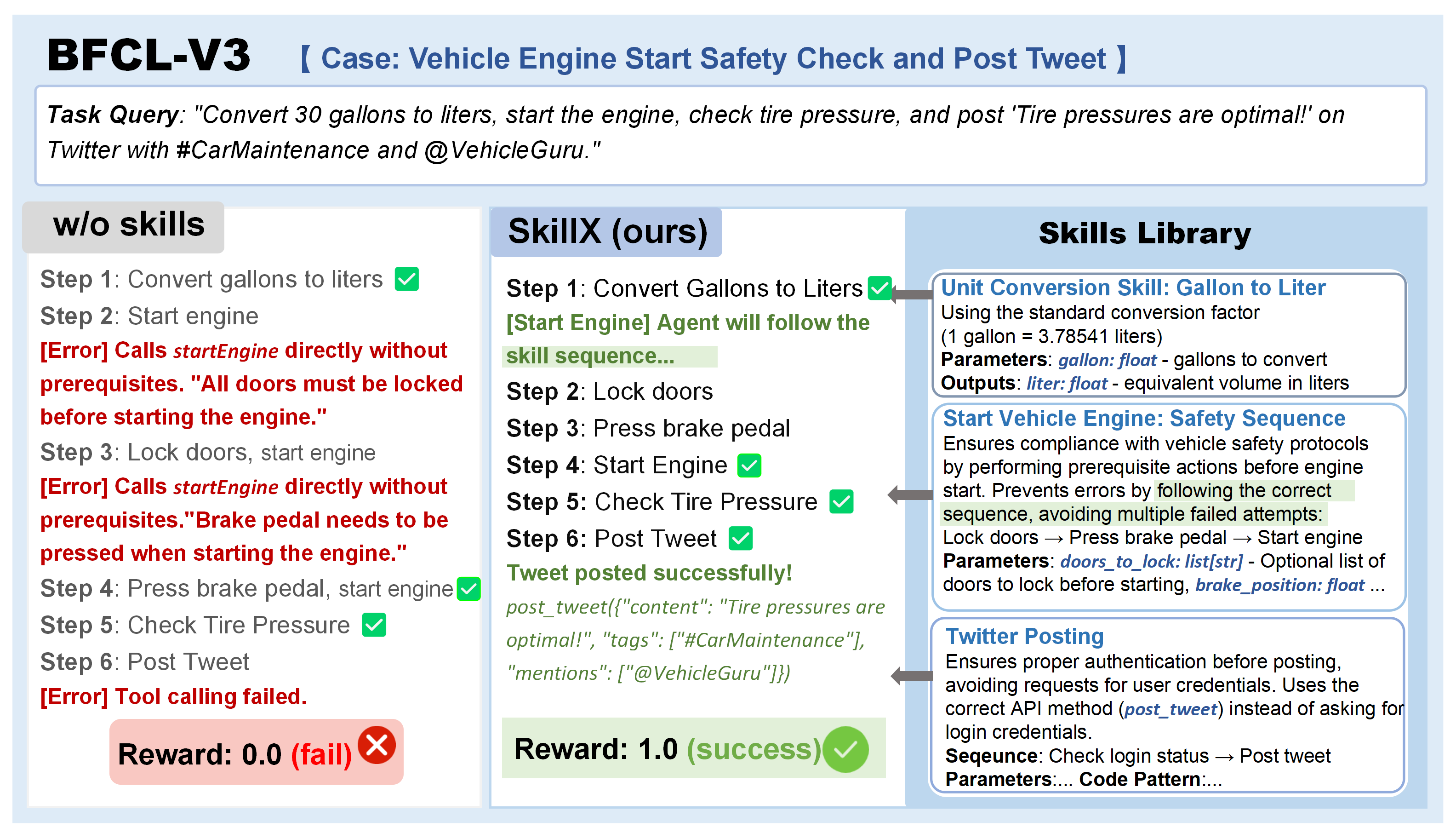

Figure 4: On BFCL-v3, prerequisite handling and robust authentication emerge from skill-guided orchestration, avoiding common tool invocation errors.

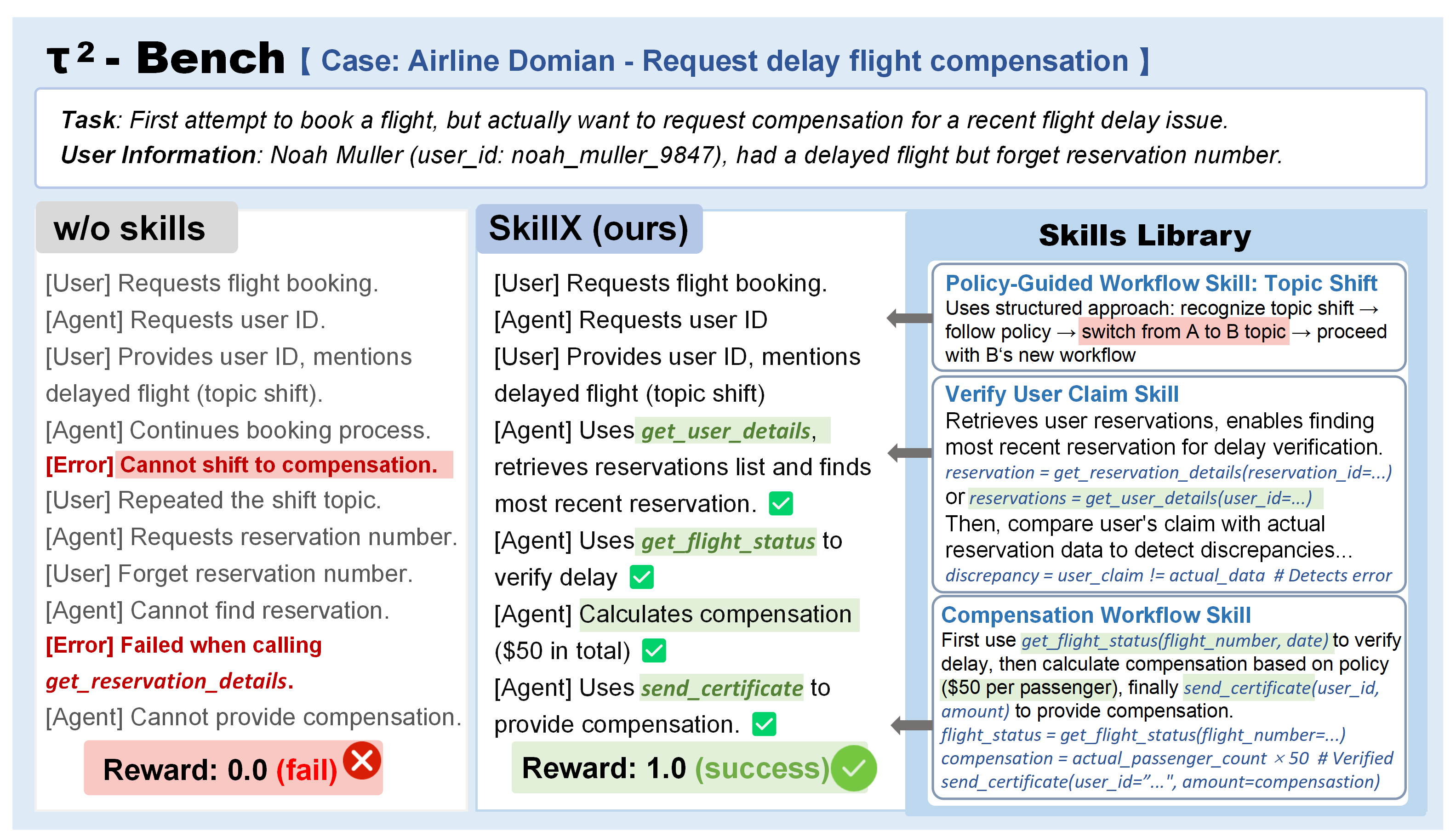

Figure 5: In the airline domain (τ2-Bench), AutoSkills-augmented agents exhibit topic-shift tracking and stateful retrieval absent in baseline systems, leading to substantially higher first-attempt success rates.

These qualitative studies underscore how structured skills substantially mitigate mode error and policy mismatch, conferring first-attempt task completion capability that surpasses non-hierarchical baselines.

Theoretical and Practical Implications

The results validate several critical design choices: hierarchical experience encoding is superior to workflow- or flat-trajectory abstraction for sustained cross-model transfer; automated, feedback-driven skills refinement and targeted exploration-based expansion substantially outperform static or randomly extended memory-based approaches; and the combination delivers sample-efficient, generalizable, modular agent learning.

This approach provides a path to scalable construction of emergent agent capabilities in API-centric environments and other settings where formal tool schemas are available. It also illuminates the limits of abstraction transfer: direct migration across heterogeneous domains or to user-only interaction regimes is non-trivial and warrants further investigation (see Limitations).

Outlook and Future Perspectives

SkillX sits at the intersection of meta-agentic memory system research and modular procedural memory design. Future directions may integrate reinforcement learning for meta-selection of expansion/exploration policies, dynamically adaptive skill abstraction levels, or memory compaction via continual cross-domain distillation. Open challenges remain in cross-domain SkillKB migration, robustness under adversarial environment shifts, and compositional transfer to pure natural language (function-free) settings. The imminent public release of curated skill libraries is poised to catalyze broad community adoption, benchmarking, and comparative evaluation.

Conclusion

AutoSkills delivers a scalable, automated methodology for constructing transferable, multi-level skill knowledge bases. Its demonstrated empirical superiority arises from the synergy of hierarchical skill representation, iterative refinement, and exploration-driven expansion. The framework advances both the theory and practice of experience-driven LLM agents, providing a robust foundation for future research into efficient, modular, and generalizable agent learning (2604.04804).