- The paper introduces ROSClaw, a unified hierarchical framework that merges high-level cognitive reasoning with low-level physical control for heterogeneous multi-agent systems.

- The paper details an innovative e-URDF-based validation mechanism that filters infeasible commands via digital twin simulation to ensure physical safety.

- The paper demonstrates robust real-world performance in smart home environments, achieving scalable, feedback-driven collaboration among diverse robotic agents.

ROSClaw: A Hierarchical Semantic-Physical Architecture for Heterogeneous Multi-Agent Collaboration

Introduction

ROSClaw introduces a unified hierarchical agent framework to bridge the longstanding gap between high-level cognitive reasoning, typically handled by large language models (LLMs) and vision-language models, and low-level real-time physical control in heterogeneous multi-agent robotic systems. The framework advances embodied intelligence by providing a tightly integrated architecture for robust, scalable, and continually improving collaboration among heterogeneous agents. Unlike modular pipelines with static and fragmented integration points, ROSClaw proposes an end-to-end system that integrates reasoning, physical constraints, and data accumulation into a closed feedback loop, emphasizing continuous system adaptation and skill reuse throughout the deployment lifecycle.

System Architecture

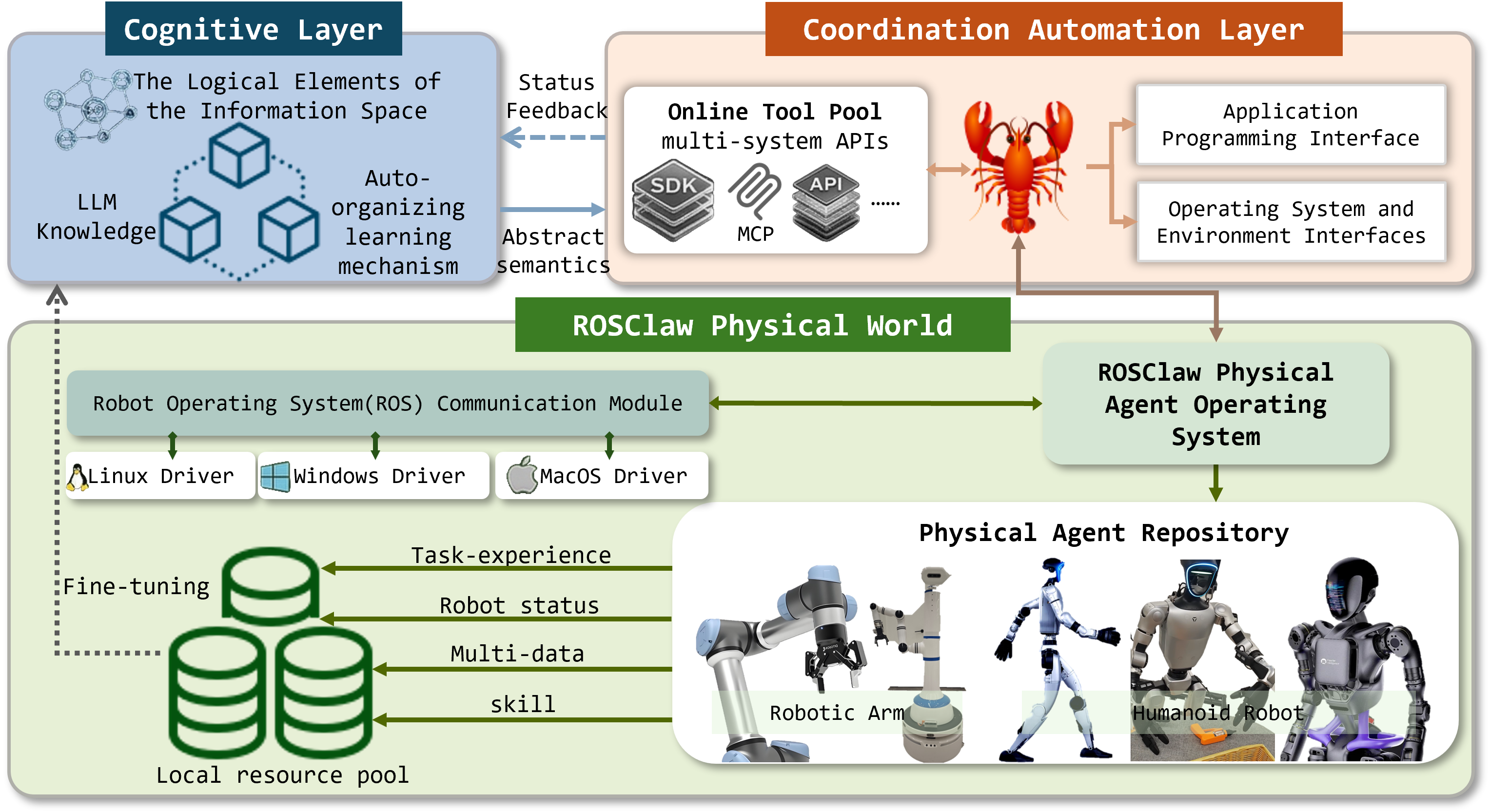

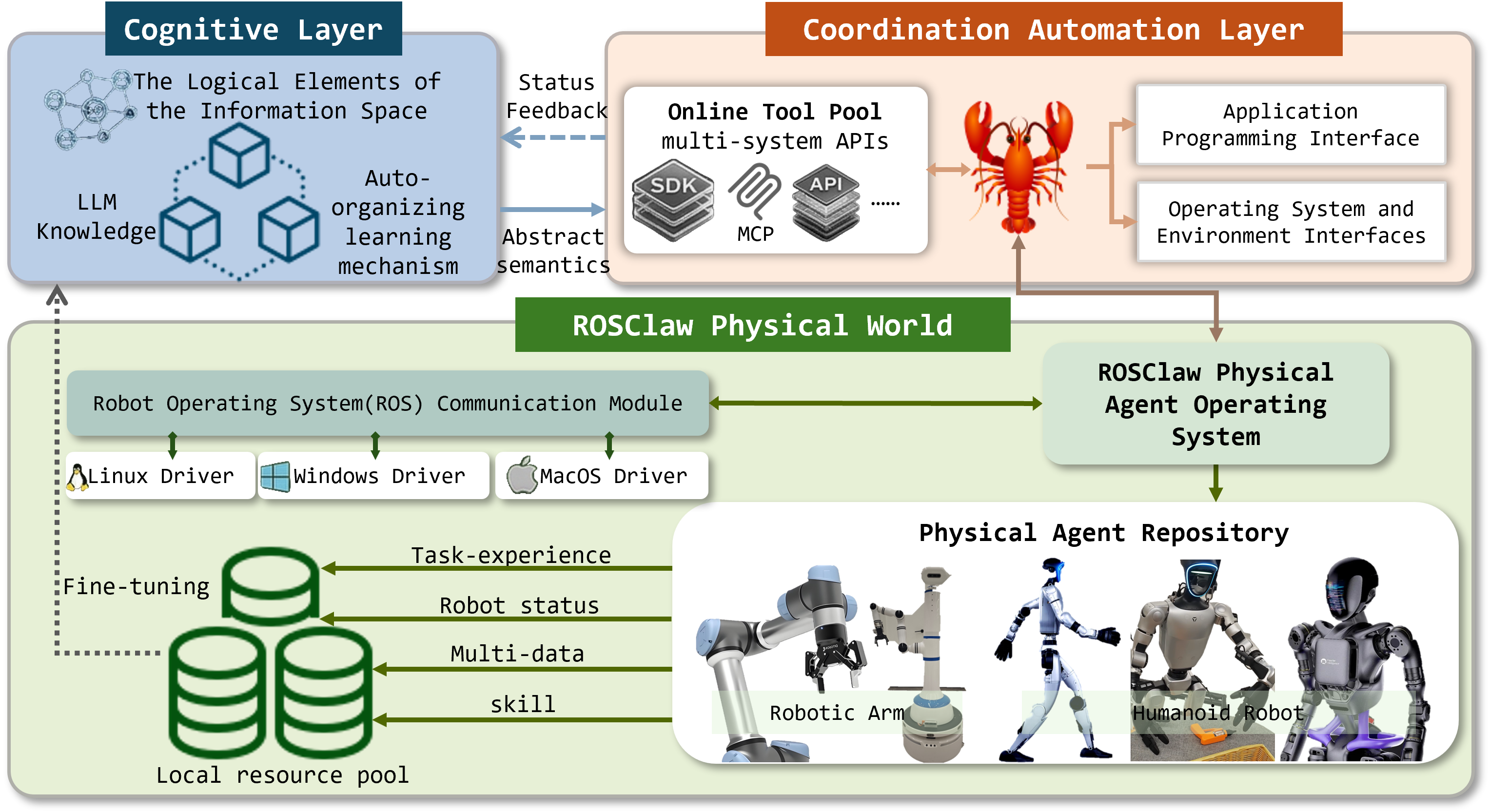

ROSClaw’s three-layer architecture spans the information space (cognitive reasoning), software systems (task automation and abstraction), and the physical world (hardware-level execution). Critical to this architecture is the explicit semantic-physical decoupling, which enables efficient cross-platform execution while keeping context consistent between planning and action.

The cognitive layer integrates LLMs and vision-language models to perform semantic-level reasoning and plan decomposition. It is supported by a bidirectional interaction channel: accumulated multimodal physical observations and execution feedback flow back into the reasoning loop, iteratively refining it and enabling robust adaptation on long-horizon tasks.
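The bidirectional channel described above can be illustrated with a minimal sketch. The `Feedback` record, the `run_with_feedback` function, and the toy planner and executor are all hypothetical stand-ins introduced here for illustration; in the actual system the planner role would be played by the LLM-based cognitive layer, whose interface the source does not specify.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Feedback:
    """Execution feedback from the physical side (hypothetical schema)."""
    step: str
    success: bool
    note: str = ""


def run_with_feedback(
    goal: str,
    planner: Callable[[str, List[Feedback]], List[str]],
    executor: Callable[[str], Feedback],
    max_rounds: int = 3,
) -> Tuple[bool, List[Feedback]]:
    """Plan, execute, and replan with the accumulated feedback in context."""
    history: List[Feedback] = []
    for _ in range(max_rounds):
        steps = planner(goal, history)  # stand-in for the LLM/VLM planner
        all_ok = True
        for step in steps:
            fb = executor(step)
            history.append(fb)
            if not fb.success:
                all_ok = False
                break  # stop this round and replan with the failure recorded
        if all_ok:
            return True, history
    return False, history


def toy_planner(goal: str, history: List[Feedback]) -> List[str]:
    # Replans only if an earlier grasp attempt is recorded as failed.
    failed = {fb.step for fb in history if not fb.success}
    if "grasp apple" in failed:
        return ["move closer", "grasp apple (retry)"]
    return ["grasp apple"]


def toy_executor(step: str) -> Feedback:
    # The first grasp always fails, to exercise the replanning path.
    if step == "grasp apple":
        return Feedback(step, False, "object out of reach")
    return Feedback(step, True)


ok, trace = run_with_feedback("pick the apple", toy_planner, toy_executor)
```

The design point is that the planner receives the full feedback history on every round, so a failure observed in the physical world changes the next plan rather than being silently retried.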

Figure 1: The ROSClaw three-layer architecture bridges high-level reasoning and low-level physical execution, emphasizing continual system evolution and cross-task knowledge reuse.

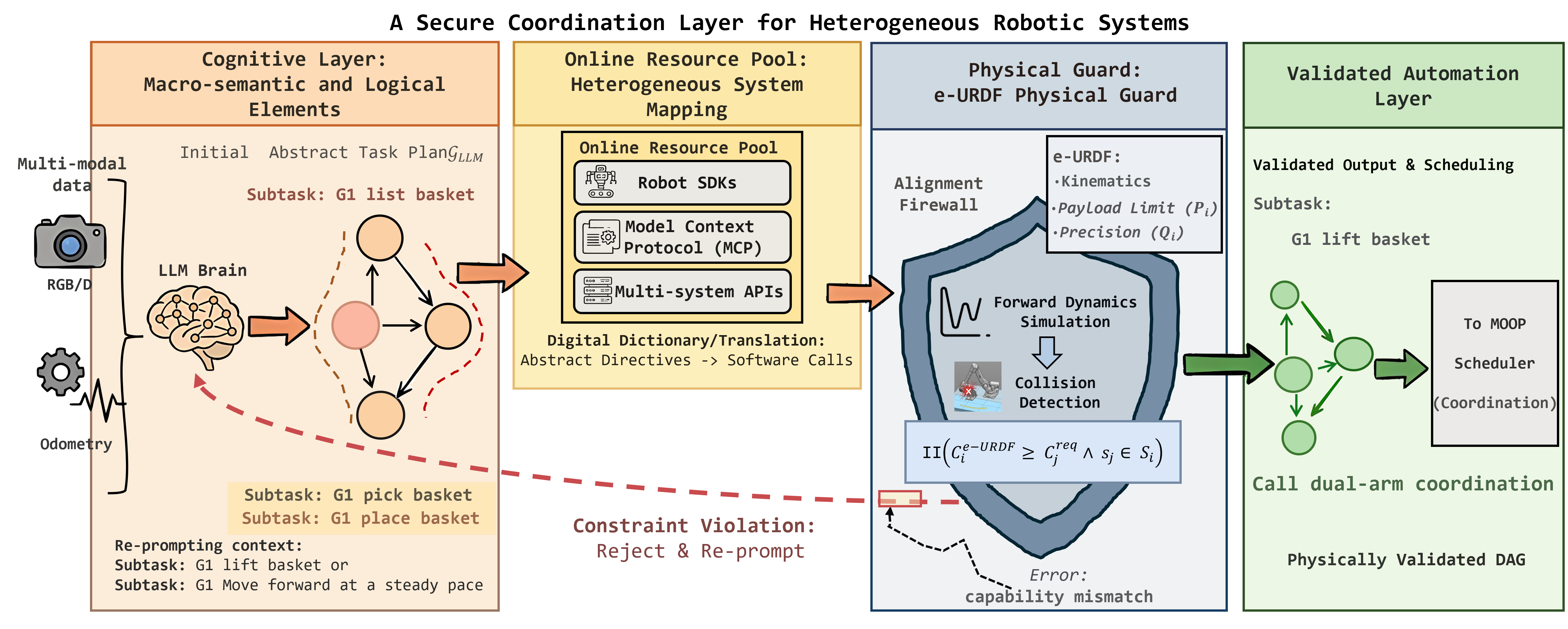

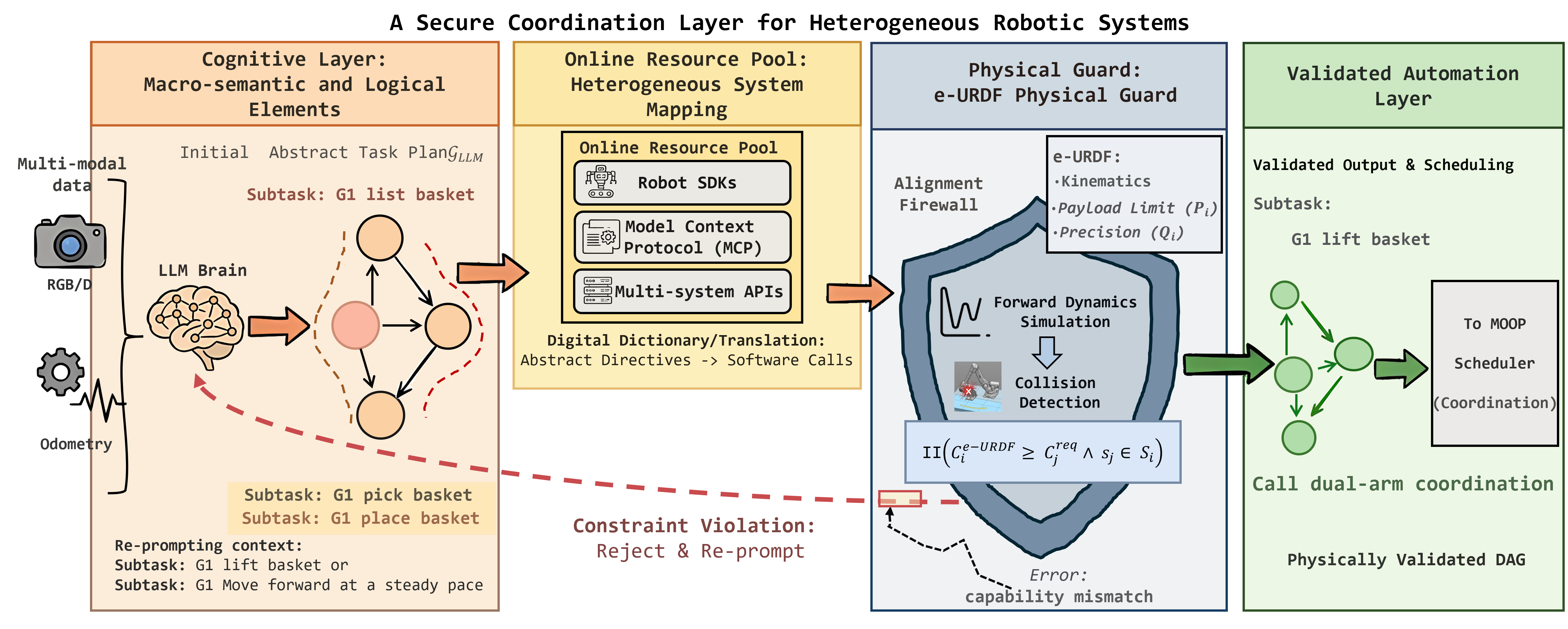

The coordination automation layer mitigates heterogeneity in system resources by aggregating SDKs, APIs, and model context protocols within an Online Tool Pool. Abstract semantic instructions are mapped automatically to hardware-specific software invocations. This layer imposes strict physical feasibility via an e-URDF-based digital twin validation process, enforcing robust constraints through dynamics simulation and collision detection.

Figure 2: The e-URDF-based firewall abstracts heterogeneity, validates physical feasibility in simulation, and enables executable operation transformation across diverse agents.

At the lowest level, the physical world layer maintains unified interfacing with ROS-based and platform-specific drivers for seamless real-time control of heterogeneous robots. This layer continuously records joint states, multimodal sensory streams, and execution trajectories into a Local Resource Pool, supporting lifelong learning, policy refinement, and the creation of reusable skill representations.

Core Mechanisms

e-URDF-based Physical Constraint Mechanism

The introduction of e-URDF as a unifying abstraction for physical constraints is a notable contribution. Semantic commands are first validated against this representation in a digital twin environment, using Isaac Lab for forward dynamics simulation and collision checking, and only then dispatched to the hardware. Such pre-execution filtering is essential to suppress failures induced by large-model hallucination, incorrect reasoning, and distributional anomalies, particularly in long-horizon and multi-agent collaborative settings.
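To make the idea of pre-execution filtering concrete, here is a simplified sketch of a validation gate. It does not use Isaac Lab and does not reproduce the e-URDF schema, which the source does not detail: `JointSpec`, `validate_trajectory`, and the `in_collision` callback are hypothetical names, and plain joint-limit and velocity checks stand in for the dynamics simulation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Sequence


@dataclass(frozen=True)
class JointSpec:
    """Hypothetical per-joint limits; not the actual e-URDF schema."""
    lower: float         # rad
    upper: float         # rad
    max_velocity: float  # rad/s


def validate_trajectory(
    joints: Dict[str, JointSpec],
    trajectory: Sequence[Dict[str, float]],
    dt: float,
    in_collision: Optional[Callable[[Dict[str, float]], bool]] = None,
) -> List[str]:
    """Return all violations; an empty list means the command may be dispatched."""
    violations: List[str] = []
    prev: Optional[Dict[str, float]] = None
    for i, waypoint in enumerate(trajectory):
        for name, spec in joints.items():
            if name not in waypoint:
                violations.append(f"waypoint {i}: missing joint '{name}'")
                continue
            q = waypoint[name]
            if not spec.lower <= q <= spec.upper:
                violations.append(f"waypoint {i}: '{name}' position {q:.2f} outside limits")
            if prev is not None and name in prev:
                speed = abs(q - prev[name]) / dt
                if speed > spec.max_velocity:
                    violations.append(f"waypoint {i}: '{name}' speed {speed:.2f} exceeds limit")
        # Collision checking is delegated to a caller-supplied predicate.
        if in_collision is not None and in_collision(waypoint):
            violations.append(f"waypoint {i}: collision detected")
        prev = waypoint
    return violations


arm = {"shoulder": JointSpec(-1.5, 1.5, 1.0), "elbow": JointSpec(0.0, 2.5, 1.0)}
safe = [{"shoulder": 0.0, "elbow": 0.5}, {"shoulder": 0.05, "elbow": 0.55}]
unsafe = [{"shoulder": 0.0, "elbow": 0.5}, {"shoulder": 2.0, "elbow": 0.55}]

safe_report = validate_trajectory(arm, safe, dt=0.1)      # no violations
unsafe_report = validate_trajectory(arm, unsafe, dt=0.1)  # limit and speed violations
```

Returning the full list of violations, rather than a single boolean, gives the reasoning layer something specific to replan against when a command is rejected.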

The Online Tool Pool acts as a middleware layer for dynamic mapping between the high-level agent decision logic and heterogeneous SDKs and APIs. By decoupling logic from execution, it supports a scalable “train once, deploy everywhere” workflow, essential for generalizing across platforms.
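One way to picture this decoupling is a registry keyed by platform and skill. The sketch below is illustrative only: `ToolPool`, its `register` and `dispatch` methods, the platform names, and the returned strings are assumptions made for this example, not the interface described in the paper.

```python
from typing import Any, Callable, Dict, Tuple

Handler = Callable[..., Any]


class ToolPool:
    """Hypothetical registry mapping (platform, skill) to a platform-specific callable."""

    def __init__(self) -> None:
        self._handlers: Dict[Tuple[str, str], Handler] = {}

    def register(self, platform: str, skill: str) -> Callable[[Handler], Handler]:
        """Decorator that binds a handler to one platform/skill pair."""
        def decorator(fn: Handler) -> Handler:
            self._handlers[(platform, skill)] = fn
            return fn
        return decorator

    def dispatch(self, platform: str, skill: str, **params: Any) -> Any:
        """Resolve an abstract skill to the handler registered for this platform."""
        try:
            handler = self._handlers[(platform, skill)]
        except KeyError:
            raise LookupError(
                f"no handler for skill '{skill}' on platform '{platform}'"
            ) from None
        return handler(**params)


pool = ToolPool()


@pool.register("mobile_manipulator", "move_to")
def _mm_move_to(x: float, y: float) -> str:
    # A real handler would call a vendor SDK or send a ROS goal here.
    return f"nav_goal(x={x}, y={y})"


@pool.register("fixed_arm", "move_to")
def _arm_move_to(x: float, y: float) -> str:
    return f"cartesian_target(x={x}, y={y}, z=0.2)"


# The same abstract instruction resolves to different platform-level calls.
mm_call = pool.dispatch("mobile_manipulator", "move_to", x=1.0, y=2.0)
arm_call = pool.dispatch("fixed_arm", "move_to", x=1.0, y=2.0)
```

Under this arrangement, supporting a new platform means registering handlers for the existing skill names, while the decision logic that emits `move_to` stays unchanged.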

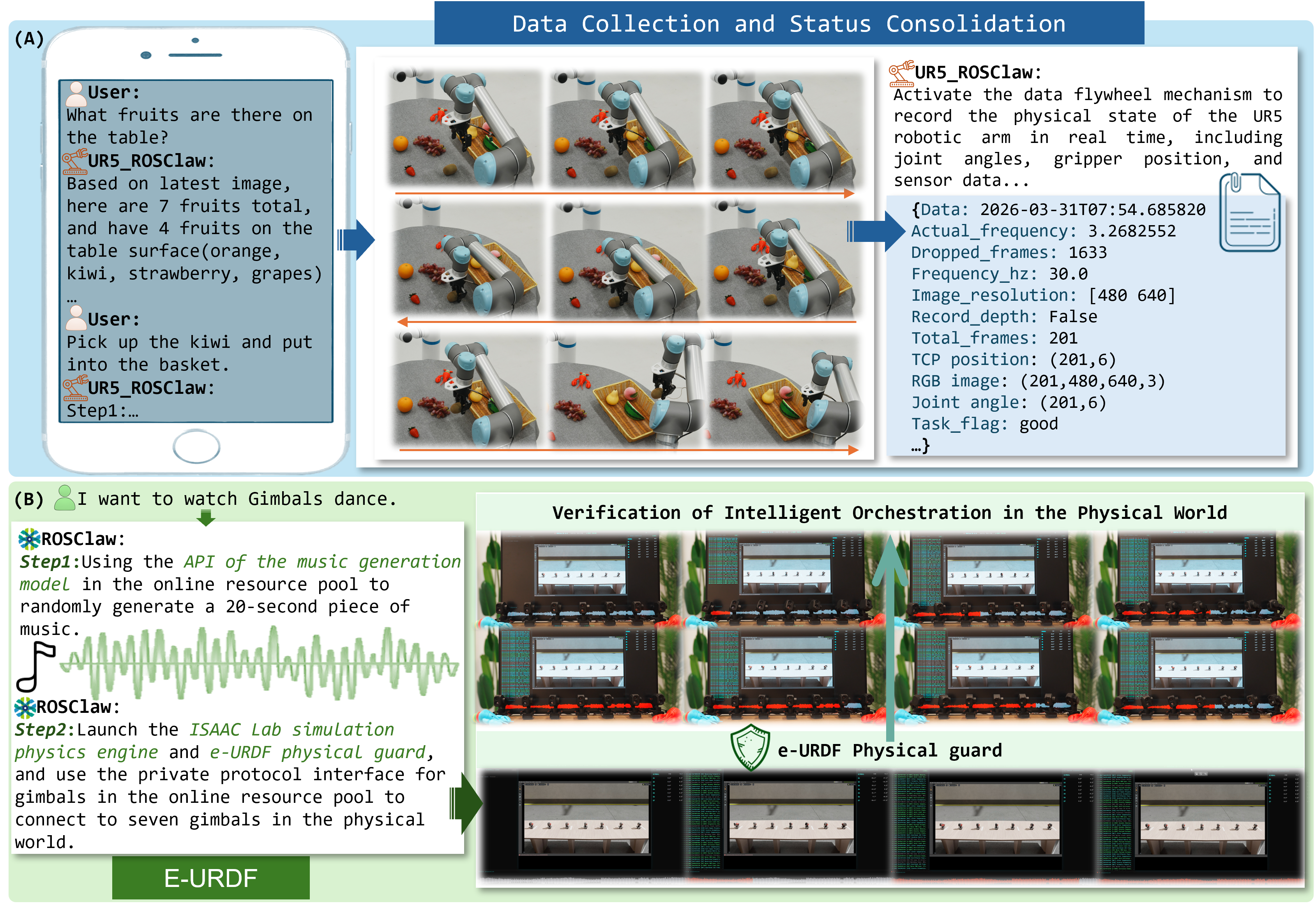

The Local Resource Pool persistently accumulates physically grounded execution experience, forming structured datasets of multimodal sensory traces and action sequences. This data can be leveraged for subsequent policy optimization, rapid skill re-use, or cloud-level model fine-tuning, minimizing the cost and need for hand-engineered demonstrations.
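As a rough illustration of what a structured execution record might look like, the sketch below appends records as JSON Lines and later filters successful runs of a skill for reuse. The `ExecutionRecord` fields and the helper functions are hypothetical, since the source does not specify a storage schema; an in-memory buffer stands in for a file on disk.

```python
import io
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List, TextIO


@dataclass
class ExecutionRecord:
    """Hypothetical record layout; the source does not specify a schema."""
    agent: str
    skill: str
    success: bool
    joint_states: List[Dict[str, float]] = field(default_factory=list)
    sensor_refs: List[str] = field(default_factory=list)  # e.g. paths to image frames


def append_record(stream: TextIO, record: ExecutionRecord) -> None:
    """Append one record as a single JSON line."""
    stream.write(json.dumps(asdict(record)) + "\n")


def load_successes(stream: TextIO, skill: str) -> List[ExecutionRecord]:
    """Read back only the successful executions of the given skill."""
    matches: List[ExecutionRecord] = []
    for line in stream:
        if not line.strip():
            continue
        record = ExecutionRecord(**json.loads(line))
        if record.skill == skill and record.success:
            matches.append(record)
    return matches


log = io.StringIO()  # stands in for an append-only log file
append_record(log, ExecutionRecord("arm_1", "pick", True, [{"elbow": 0.5}], ["frame_000.png"]))
append_record(log, ExecutionRecord("arm_1", "pick", False))
append_record(log, ExecutionRecord("base_1", "navigate", True))

log.seek(0)
reusable = load_successes(log, "pick")
```

An append-only, line-oriented format is one reasonable choice here because records can be written during execution without rewriting earlier data, and downstream training jobs can stream the file.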

Experimental Validation

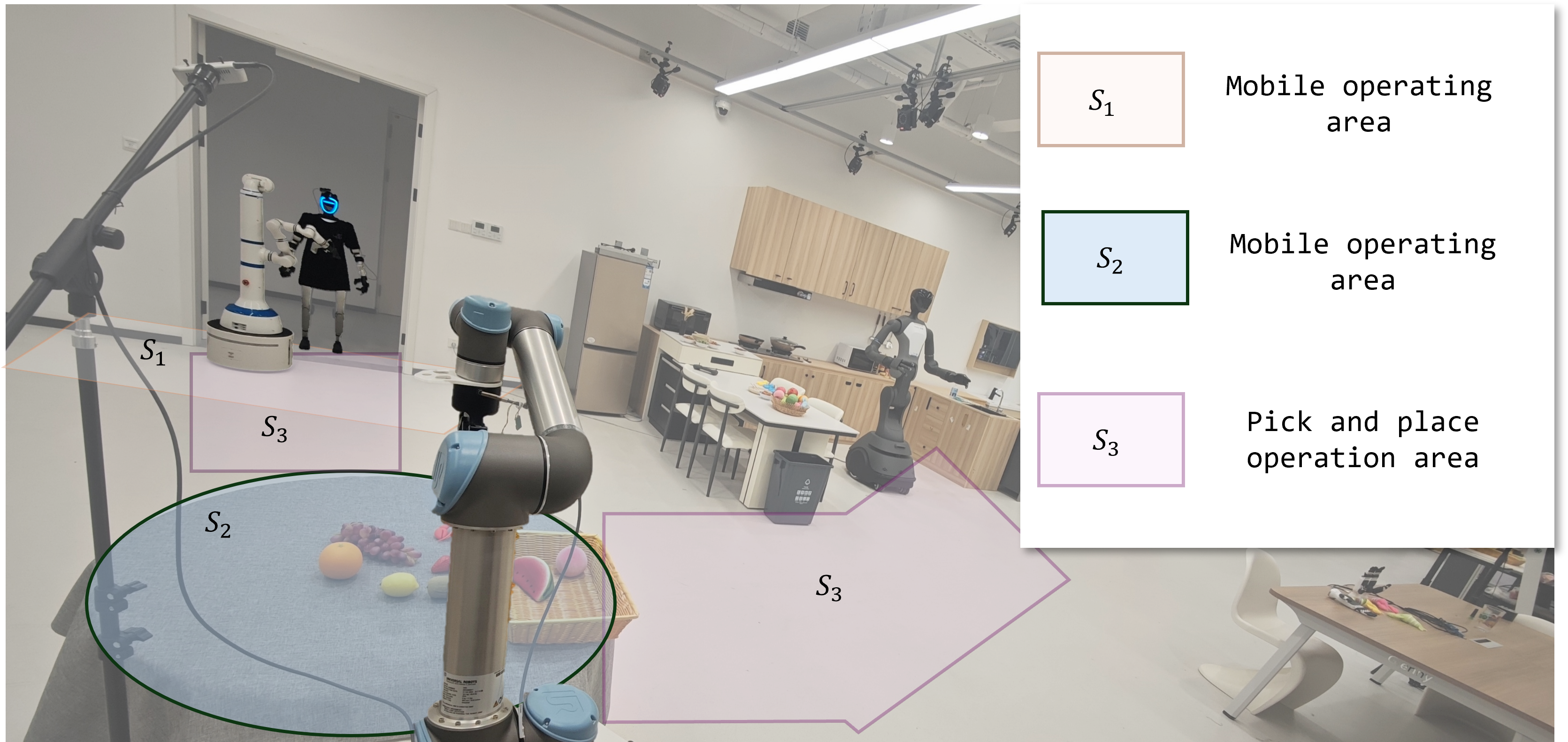

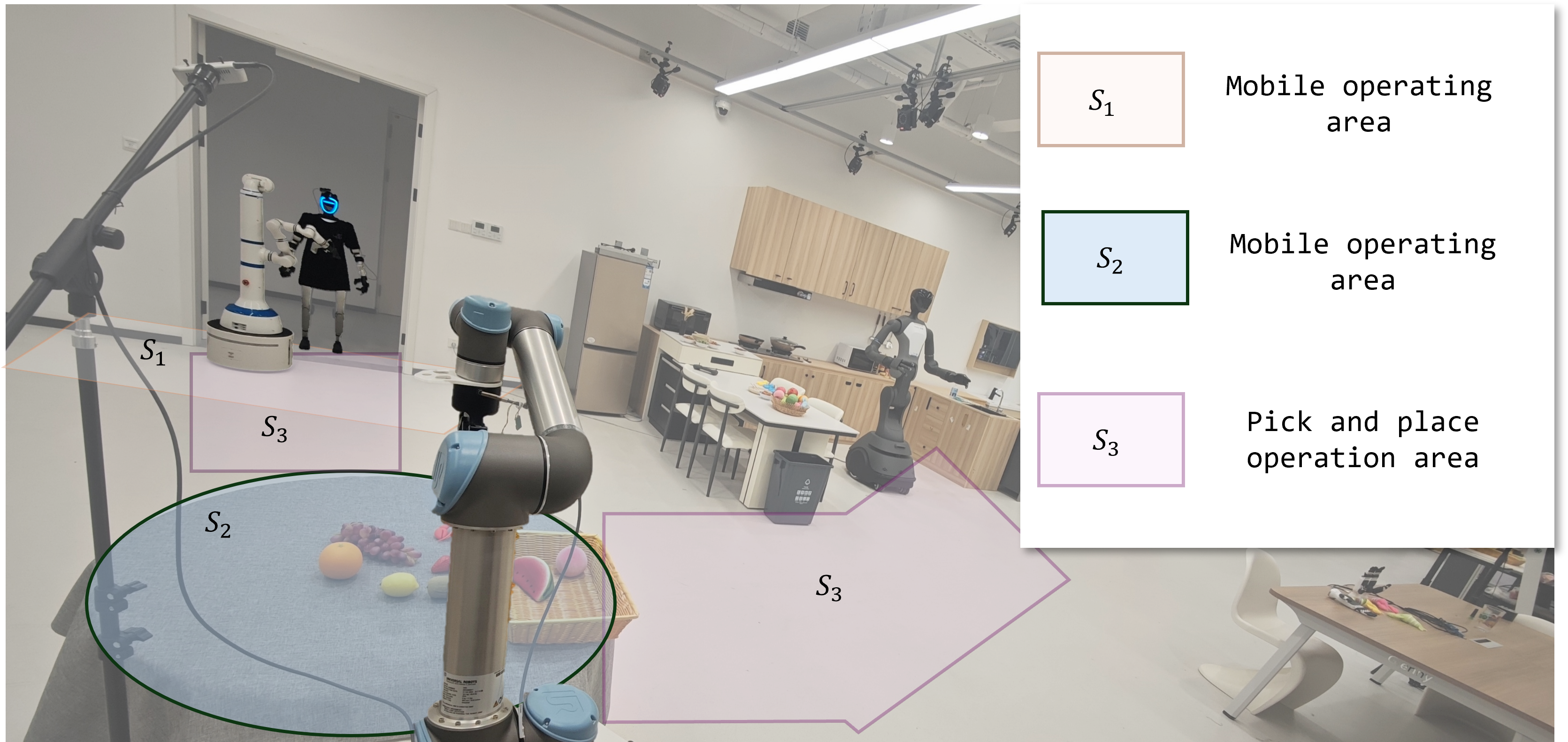

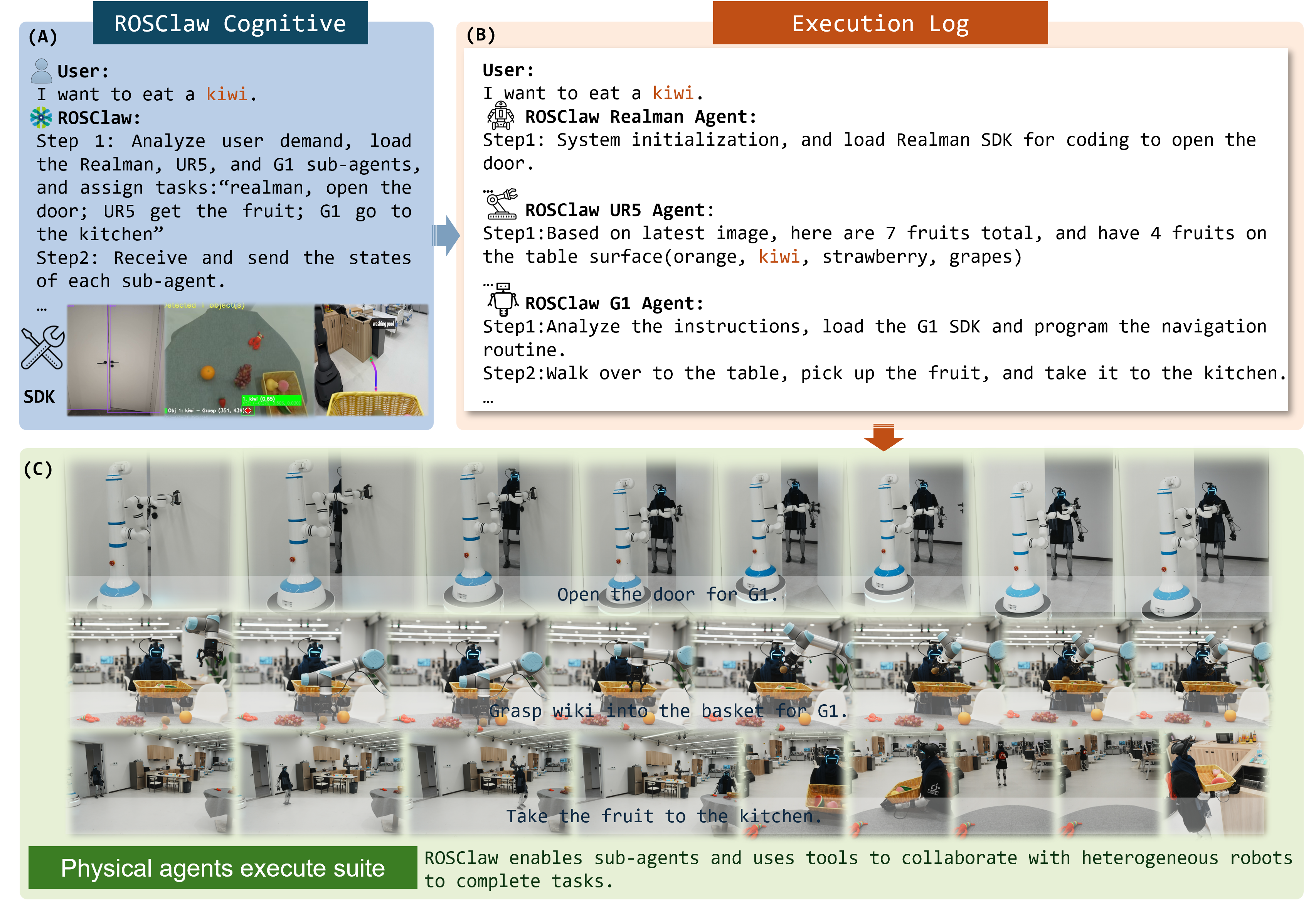

ROSClaw demonstrates robust heterogeneous multi-agent orchestration in realistic smart home environments. Empirical validation showcases collaborative tasks involving mobile manipulators, humanoid robots, and fixed-arm manipulators under cross-region and modality constraints. Task assignment, physical execution, and execution monitoring are handled asynchronously with effective context maintenance and interoperability across agents.
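The asynchronous handling of assignment, execution, and monitoring can be sketched with Python's `asyncio`. This is a toy model under stated assumptions: the agent names, task names, and durations are invented, `asyncio.sleep` stands in for physical execution, and the event tuples are a hypothetical format rather than the system's actual log output.

```python
import asyncio
from typing import Dict, List, Tuple

Event = Tuple[str, str, str]  # (agent, task, status)


async def run_agent(name: str, tasks: List[Tuple[str, float]], events: asyncio.Queue) -> None:
    """Execute an agent's tasks in order, reporting status changes as events."""
    for task, duration in tasks:
        await events.put((name, task, "started"))
        await asyncio.sleep(duration)  # stand-in for physical execution
        await events.put((name, task, "done"))


async def monitor(events: asyncio.Queue, expected_done: int) -> List[Event]:
    """Collect events until every assigned task has reported completion."""
    log: List[Event] = []
    done = 0
    while done < expected_done:
        event = await events.get()
        log.append(event)
        if event[2] == "done":
            done += 1
    return log


async def orchestrate(assignments: Dict[str, List[Tuple[str, float]]]) -> List[Event]:
    """Run all agents concurrently alongside a single monitor."""
    events: asyncio.Queue = asyncio.Queue()
    total = sum(len(tasks) for tasks in assignments.values())
    workers = [run_agent(name, tasks, events) for name, tasks in assignments.items()]
    results = await asyncio.gather(monitor(events, total), *workers)
    return results[0]  # the monitor's event log


assignments = {
    "mobile_manipulator": [("navigate_to_table", 0.02), ("carry_basket", 0.01)],
    "fixed_arm": [("pick_fruit", 0.01)],
}
event_log = asyncio.run(orchestrate(assignments))
```

Each agent progresses independently, and the monitor sees a single merged event stream, which is the property that lets one coordinator track several heterogeneous robots without blocking on any of them.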

Figure 3: Multi-region physical workspace with distinct sectors constrained per agent, facilitating division and specialization of collaborative duties.

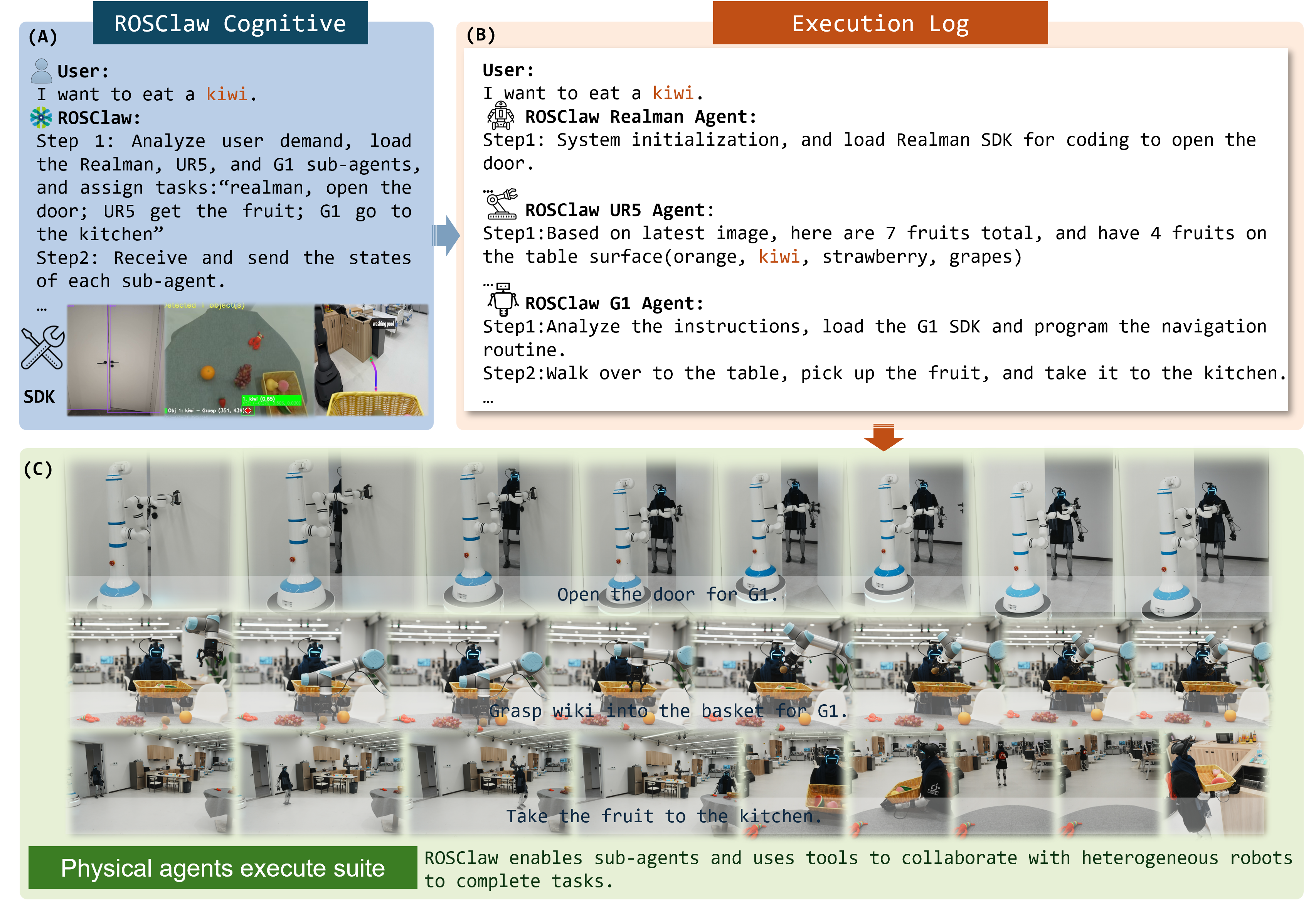

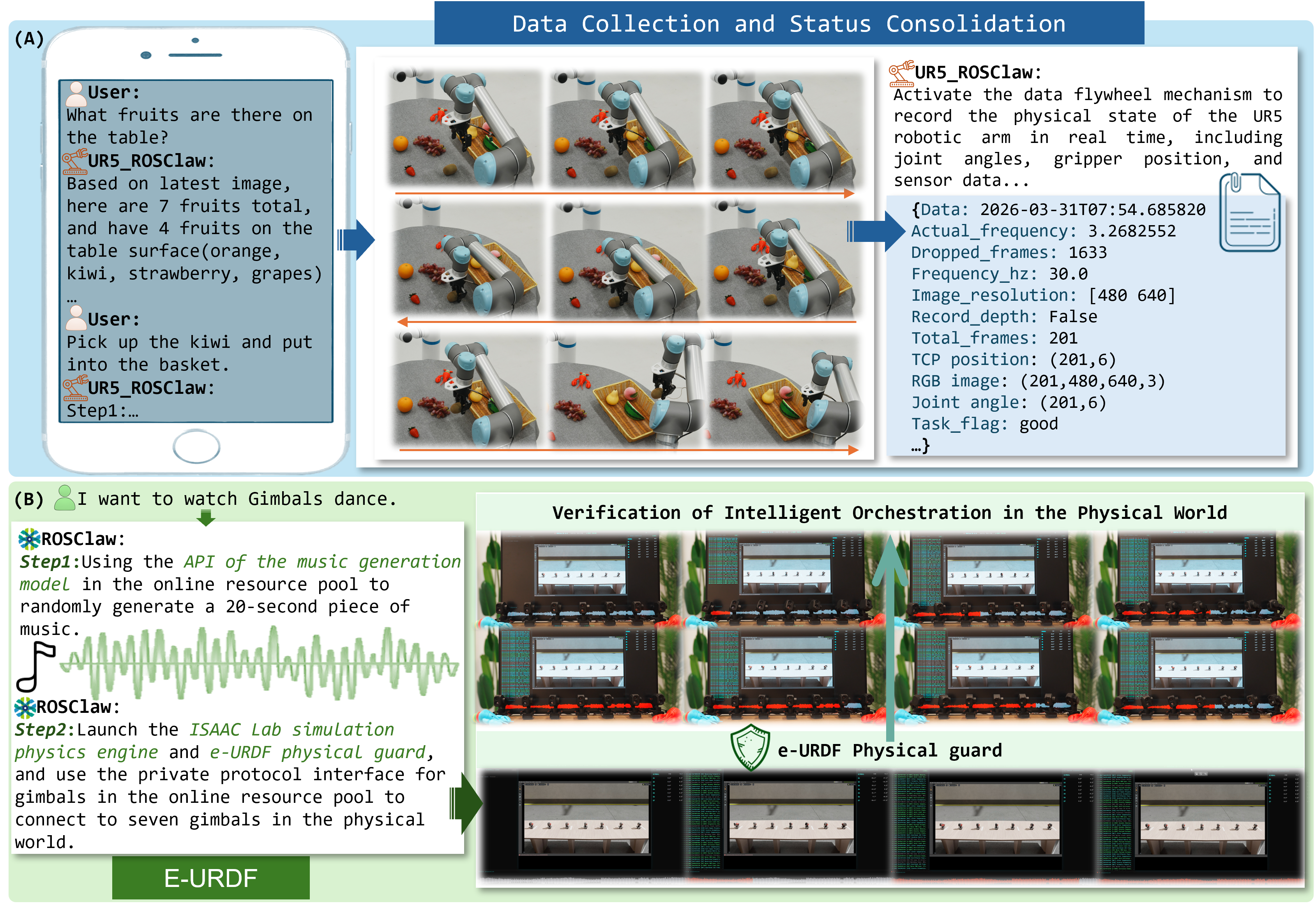

Complex sequential tasks are parsed from natural language and decomposed for distributed execution, with continuous feedback keeping the plan semantically and physically aligned with the state of the world. The examined scenarios include collaborative fruit harvesting and transport, which demonstrate context-aware coordination, assimilation of perceptual feedback, and toolchain invocation.
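A decomposed task can be represented as a dependency graph whose independent subtasks run in parallel. The plan below is a hypothetical decomposition written for illustration, not one taken from the paper's experiments; the subtask names and agent assignments are assumptions. The ordering uses `graphlib.TopologicalSorter` from the Python standard library (3.9+).

```python
from graphlib import TopologicalSorter
from typing import Dict, List

# Hypothetical decomposition of "harvest the fruit and bring it to the kitchen".
# Each subtask names its assigned agent and the subtasks it must wait for.
plan: Dict[str, dict] = {
    "locate_fruit": {"agent": "humanoid", "after": []},
    "pick_fruit": {"agent": "fixed_arm", "after": ["locate_fruit"]},
    "dock_at_arm": {"agent": "mobile_manipulator", "after": []},
    "hand_over": {"agent": "fixed_arm", "after": ["pick_fruit", "dock_at_arm"]},
    "deliver": {"agent": "mobile_manipulator", "after": ["hand_over"]},
}


def execution_waves(plan: Dict[str, dict]) -> List[List[str]]:
    """Group subtasks into waves; tasks within a wave have no mutual dependencies."""
    sorter = TopologicalSorter({name: spec["after"] for name, spec in plan.items()})
    sorter.prepare()  # raises graphlib.CycleError if the plan is cyclic
    waves: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for reproducible output
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = execution_waves(plan)
# waves == [["dock_at_arm", "locate_fruit"], ["pick_fruit"], ["hand_over"], ["deliver"]]
```

In this example the mobile base can dock while the humanoid is still locating the fruit, and the handover waits for both branches, which is the kind of cross-agent dependency the decomposition has to preserve.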

Figure 4: Multi-agent orchestration: from task assignment and log output to heterogeneous physical actuation encompassing navigation, manipulation, and inter-agent resource transfer.

The experimental protocol also exercises the e-URDF-based safeguard in generative task settings, such as orchestrated choreography with gimbal units. The reported benefits are faster development cycles, less reliance on hand-coded pipelines, and human intervention reduced to the initial intent specification, which compares favorably with traditional approaches.

Figure 5: e-URDF-based validation and data collection: interactive user-driven flow validation, perception, and safe task execution with full-state monitoring and multi-agent physical orchestration.

Implications and Future Directions

ROSClaw’s integration of semantic reasoning and physical execution through structured mediating layers has substantial implications for the field:

- Scalability: Abstracting and unifying interaction with hardware via the Online Tool Pool enables immediate support for new platforms, enhancing transferability and deployment flexibility.

- Safety and Robustness: Pre-execution action filtering via digital twin validation addresses critical safety risks in real-world execution, minimizing the potential for catastrophic error propagation.

- Continual Learning: The closed-loop data flow from the Local Resource Pool paves the way for autonomous adaptation, fine-tuning of policies, and scalable bootstrapping with real interaction data.

- Multi-agent Generalization: The mechanism naturally supports both centralized and decentralized agent collaboration schemas, laying the foundation for more complex, mixed-modality multi-agent system (MAS) tasks.

Current limitations relate principally to operation in highly uncertain and unstructured environments and to the current absence of a fully autonomous closed learning loop for online policy improvement. Addressing these challenges will require probabilistic modeling, adaptive uncertainty quantification, and robust control under perception noise and stochasticity. Incorporating end-to-end closed-loop policy optimization, using the data streams accumulated during deployment, is a natural next step toward scalable, continuously improving embodied intelligence.

Conclusion

ROSClaw represents a structured advance in hierarchical multi-agent robot systems, focusing on semantic-physical interplay and continual system adaptation. The architectural separation of cognition, coordination, and execution, combined with physical validation and continual data accumulation, addresses longstanding limitations in reliability, transferability, and development efficiency. Continued work on uncertainty quantification and the integration of closed-loop learning mechanisms could extend its theoretical and practical impact on next-generation embodied intelligent systems.

(2604.04664)