- The paper introduces a hybrid framework that integrates LLM-driven high-level planning with RL-based low-level execution for enhanced robotic manipulation.

- The approach achieves a 33.5% reduction in completion time, an 18.1% relative increase in accuracy, and a 36.4% relative gain in adaptability compared to RL-only methods.

- The modular design supports dynamic re-planning and scalability, reducing programming overhead by using natural language instructions as an API.

Hybrid Integration of LLMs and RL for Robotic Manipulation

Introduction

Combining Reinforcement Learning (RL) with large language models (LLMs) is a promising direction for robotics, particularly for complex manipulation tasks that require both semantic understanding and precise control. The paper "Hybrid Framework for Robotic Manipulation: Integrating Reinforcement Learning and LLMs" (2603.30022) proposes a modular framework that pairs LLM-driven high-level planning with RL-based low-level execution. The structure is designed to help robotic systems interpret, decompose, and execute human instructions while adapting dynamically to the environment.

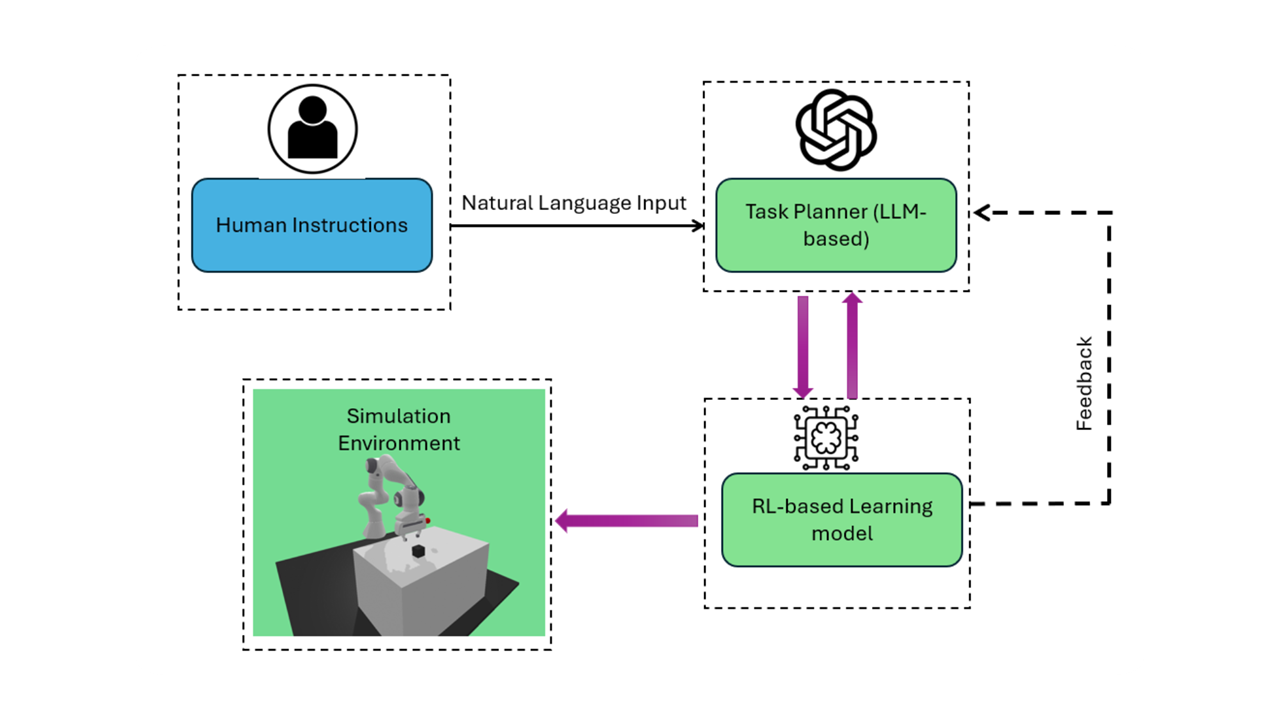

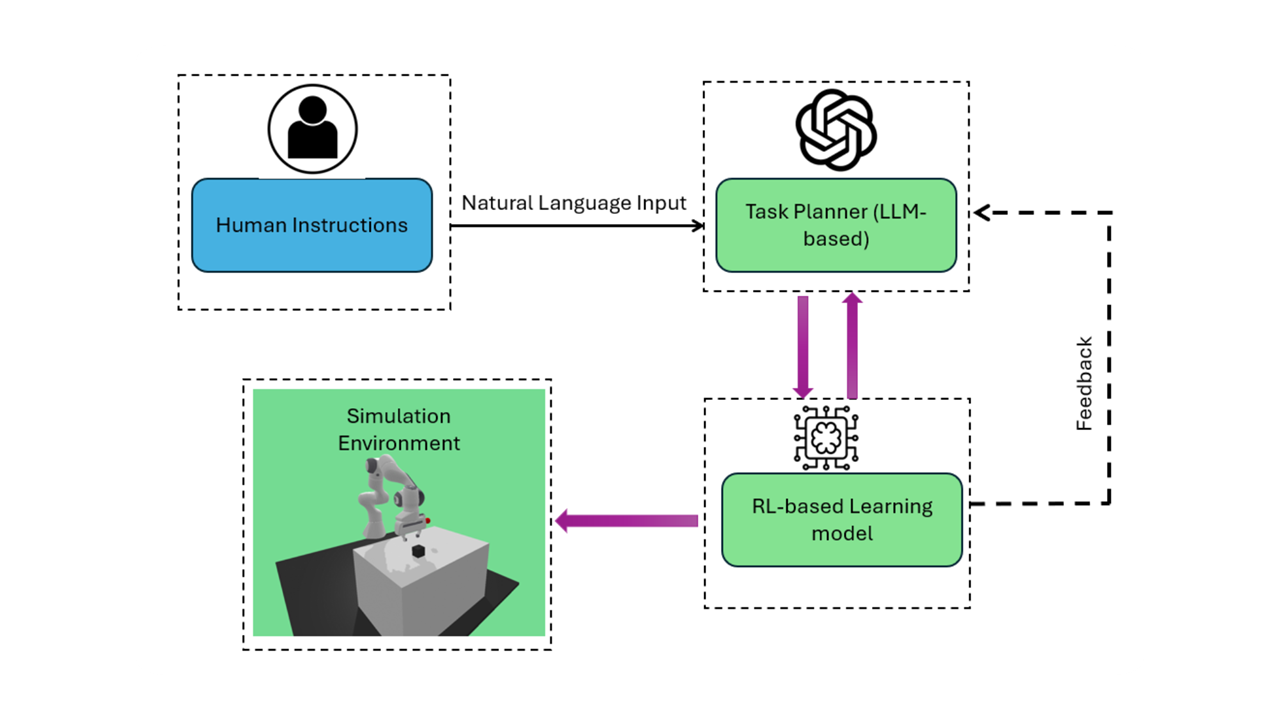

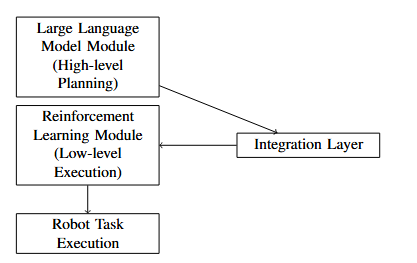

Figure 1: System architecture outlining the integration methodology between LLM-based planners and RL-based manipulation controllers for robotic tasks.

Framework Architecture

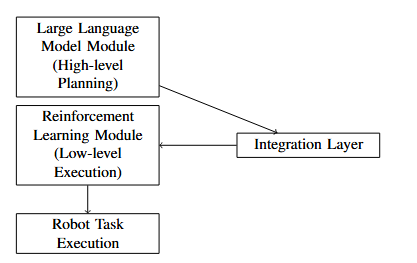

The framework is built around three core modules: the LLM-based Task Planner, the RL Skill Executor, and a high-fidelity simulation environment. The planner uses pretrained transformers (e.g., GPT variants) to parse natural language instructions and decompose them hierarchically into executable subtasks. Subtasks are dispatched to the RL module, which uses policies trained with PPO and SAC to actuate the Franka Emika Panda manipulator directly within PyBullet.
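The hand-off from planner to executor can be illustrated with a minimal sketch. It assumes the planner replies with a JSON list of steps and that each step names one skill from a fixed vocabulary; the JSON schema, the skill names, and `parse_plan` are hypothetical, since the paper summary does not specify the planner's output format.

```python
import json
from dataclasses import dataclass

# Hypothetical skill vocabulary; the source does not list its RL skills by name.
KNOWN_SKILLS = {"reach", "grasp", "move_to", "release"}


@dataclass(frozen=True)
class Subtask:
    skill: str   # name of the RL policy to invoke
    target: str  # object or location the skill acts on


def parse_plan(llm_output: str) -> list[Subtask]:
    """Turn the planner's JSON reply into a list of validated subtasks."""
    steps = json.loads(llm_output)
    plan = []
    for step in steps:
        skill = step["skill"]
        if skill not in KNOWN_SKILLS:
            # Reject skills the executor has no policy for before dispatching.
            raise ValueError(f"unknown skill: {skill!r}")
        plan.append(Subtask(skill=skill, target=step["target"]))
    return plan


# Stand-in for an LLM reply to "put the red cube in the bin" (assumed format).
reply = """[
  {"skill": "reach", "target": "red_cube"},
  {"skill": "grasp", "target": "red_cube"},
  {"skill": "move_to", "target": "bin"},
  {"skill": "release", "target": "red_cube"}
]"""

plan = parse_plan(reply)
print([f"{s.skill}({s.target})" for s in plan])
```

Validating each step against a known skill set is one plausible way to keep free-form language output from reaching the controller unchecked; the actual system may handle this differently.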

The integration layer maintains a bidirectional communication loop between the two modules, enabling feedback and real-time adaptation. When the environment changes or an error occurs, the LLM revises the task plan, which keeps the system reactive and robust. This design addresses the limitations of RL alone (which lacks semantic generalization) and of LLM-only controllers (which are insufficient for low-level control without physics-aware feedback).

Figure 3: Schematic of the hybrid framework indicating the division of cognitive (LLM) and motor (RL) components and their interaction pipeline.
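The feedback loop described in this section can be sketched as a small control loop. This is an illustration under assumptions, not the paper's implementation: `run_with_replanning`, the stub planner, the stub executor, and the `max_replans` limit are all hypothetical, and subtasks are plain strings to keep the example short.

```python
from collections import deque
from typing import Callable

Subtask = str  # e.g. "grasp:cube"; a plain string keeps the sketch short


def run_with_replanning(
    instruction: str,
    plan_fn: Callable[[str, list[str]], list[Subtask]],
    execute_fn: Callable[[Subtask], bool],
    max_replans: int = 3,
) -> tuple[bool, list[str]]:
    """Execute subtasks in order; on failure, ask the planner for a new plan."""
    log: list[str] = []
    queue = deque(plan_fn(instruction, log))
    replans = 0
    while queue:
        subtask = queue.popleft()
        if execute_fn(subtask):
            log.append(f"ok {subtask}")
            continue
        log.append(f"failed {subtask}")
        if replans == max_replans:
            return False, log
        replans += 1
        # Feedback path: the planner sees the execution log and revises the plan.
        queue = deque(plan_fn(instruction, log))
    return True, log


def stub_planner(instruction: str, log: list[str]) -> list[Subtask]:
    # Stand-in for the LLM: adds a recovery step once any failure is logged.
    if any(entry.startswith("failed") for entry in log):
        return ["reposition:cube", "grasp:cube", "place:bin"]
    return ["reach:cube", "grasp:cube", "place:bin"]


attempts: dict[str, int] = {}


def stub_executor(subtask: Subtask) -> bool:
    # Stand-in for an RL policy rollout: the first grasp attempt fails.
    attempts[subtask] = attempts.get(subtask, 0) + 1
    return not (subtask == "grasp:cube" and attempts[subtask] == 1)


success, log = run_with_replanning(
    "put the cube in the bin", stub_planner, stub_executor
)
print(success, log)
```

In this sketch the planner receives the full execution log on every replan, so it can condition the revised plan on what already succeeded or failed. Capping the number of replans is an added safeguard against endless retry loops.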

Simulation and Benchmark Design

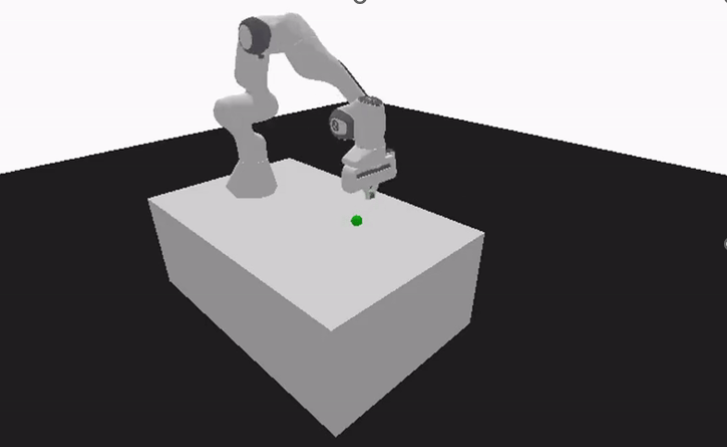

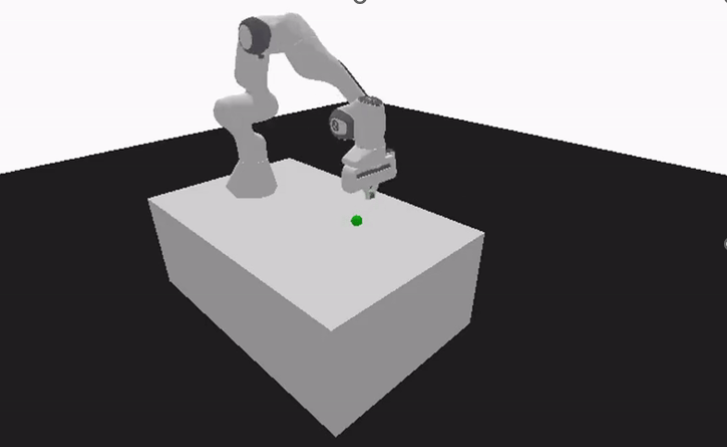

The evaluation environment constructed in PyBullet involves the Franka Emika Panda robot, equipped with simulated sensory modalities and benchmarked on pick-and-place, object sorting, and obstacle avoidance scenarios. The environment supports explicit measurement of task-level metrics, such as completion time, success rate, and adaptability under dynamic interventions.

Figure 2: PyBullet simulation configured with the Franka Emika Panda robot and diverse manipulable objects for benchmarking hybrid control strategies.
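The task-level metrics named above could be aggregated from per-episode records roughly as follows. The `Episode` fields, the sample values, and the definition of adaptability as the success rate over perturbed episodes are assumptions made for illustration; the paper summary does not give exact metric definitions.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Episode:
    task: str
    completion_time_s: float
    success: bool
    perturbed: bool  # True if the scene was changed mid-episode


def summarize(episodes: list[Episode]) -> dict[str, float]:
    """Aggregate completion time, success rate, and adaptability."""
    perturbed = [e for e in episodes if e.perturbed]
    return {
        "mean_completion_time_s": mean([e.completion_time_s for e in episodes]),
        "success_rate": sum(e.success for e in episodes) / len(episodes),
        # Assumed definition: success rate restricted to perturbed episodes.
        "adaptability": (
            sum(e.success for e in perturbed) / len(perturbed)
            if perturbed
            else float("nan")
        ),
    }


# Made-up records for illustration only; these are not results from the paper.
episodes = [
    Episode("pick_and_place", 10.0, True, False),
    Episode("object_sorting", 12.0, True, False),
    Episode("obstacle_avoidance", 14.0, False, True),
    Episode("pick_and_place", 20.0, True, True),
]

summary = summarize(episodes)
print(summary)
```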

Quantitative Results

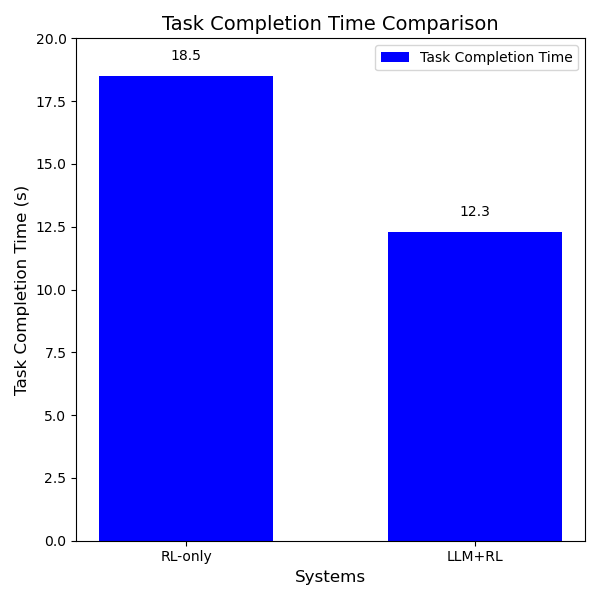

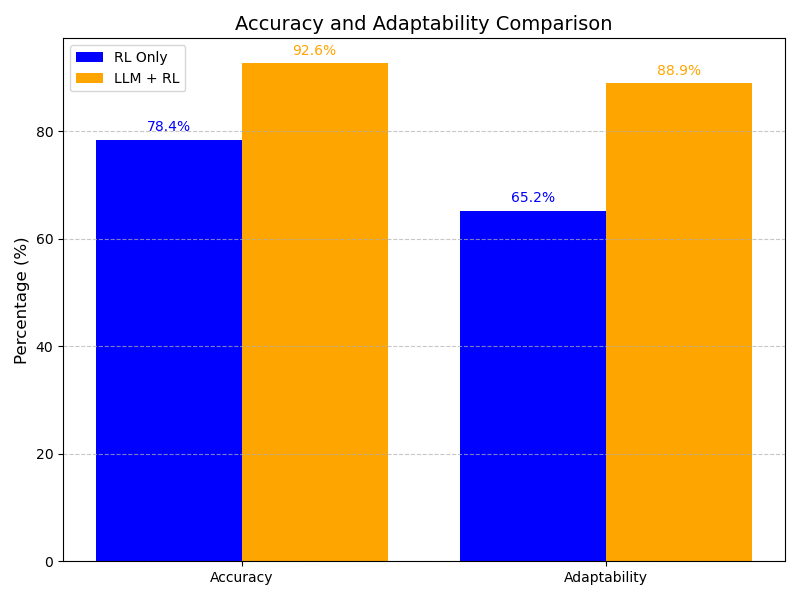

In simulation, the hybrid system outperforms the RL-only baseline on all three reported metrics:

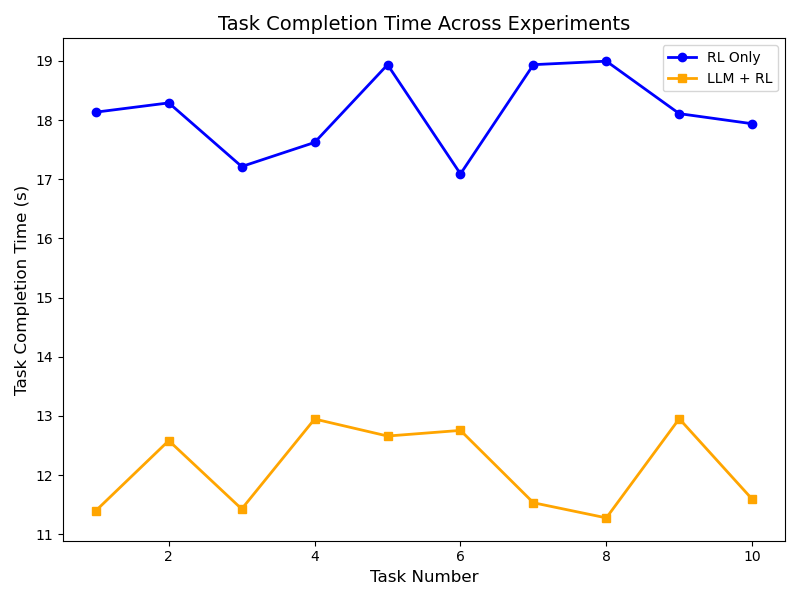

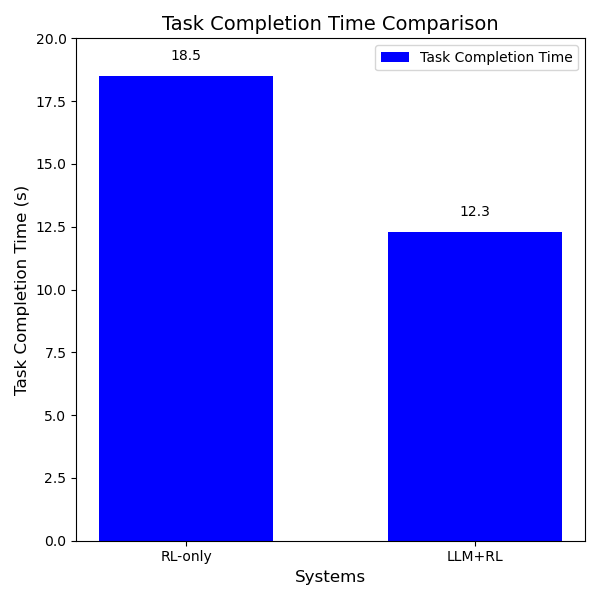

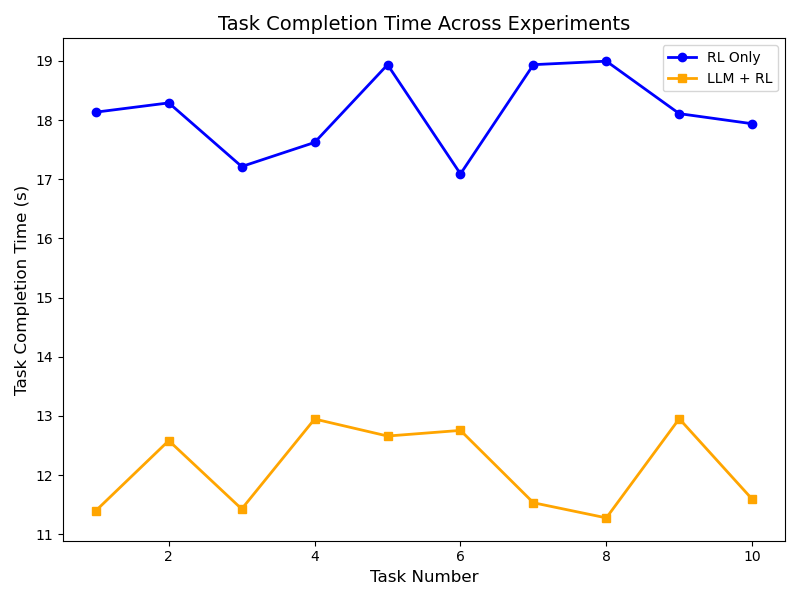

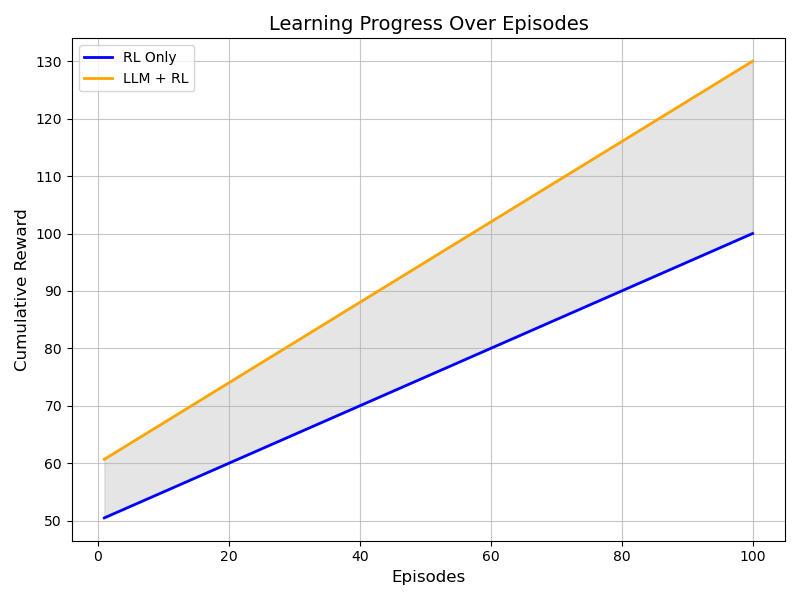

- Task completion time: The hybrid approach yields a 33.5% reduction in mean completion time versus RL-only baselines.

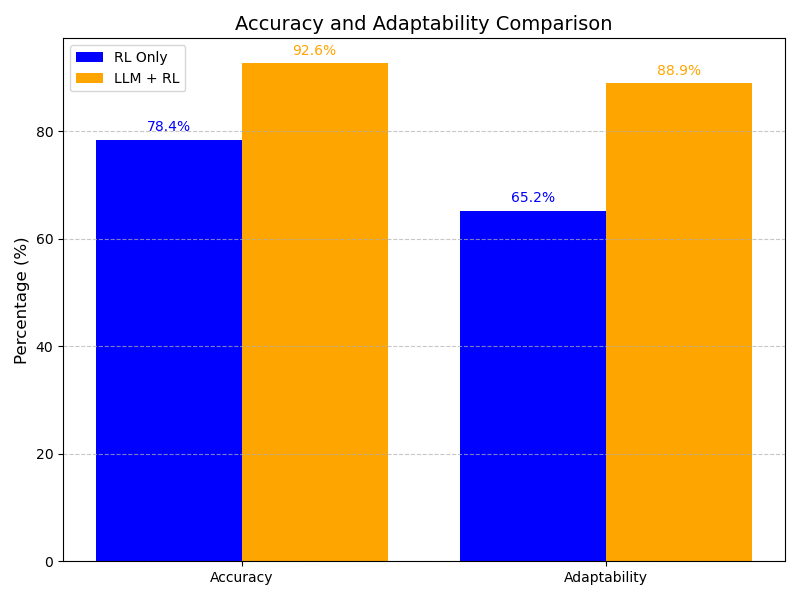

- Accuracy: LLM+RL achieves 92.6% versus 78.4% for RL-only, an 18.1% relative increase (14.2 percentage points).

- Adaptability: Under unmodeled dynamic changes, adaptability rises from 65.2% (RL-only) to 88.9% (hybrid), a 36.4% relative gain (23.7 percentage points).
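The accuracy and adaptability figures above are relative gains over the RL-only baseline, not differences in percentage points. A short check recomputes both from the rounded percentages quoted in the list. The helper name `relative_gain` is illustrative, and small deviations from the reported values are expected because the inputs are already rounded.

```python
def relative_gain(baseline: float, improved: float) -> float:
    """Relative improvement over the baseline, in percent."""
    return (improved - baseline) / baseline * 100.0


# Inputs are the rounded percentages quoted in the text.
accuracy_gain = relative_gain(78.4, 92.6)
adaptability_gain = relative_gain(65.2, 88.9)

# Absolute differences, in percentage points.
accuracy_points = 92.6 - 78.4
adaptability_points = 88.9 - 65.2

print(f"accuracy: +{accuracy_points:.1f} points, {accuracy_gain:.2f}% relative")
print(f"adaptability: +{adaptability_points:.1f} points, {adaptability_gain:.2f}% relative")
```

The recomputed values come to roughly 18.1% and 36.3 to 36.4%, consistent with the reported gains up to rounding of the inputs.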

Figure 4: Comparative histogram of average task completion time for RL-only and LLM+RL agents, averaged across diverse manipulation tasks.

Figure 5: Bar plot showing improvements in both manipulation accuracy and adaptability metrics with the hybrid framework.

Figure 6: Line plot displaying variance and convergence of completion times over ten experimental runs, demonstrating improved stability and predictability with hybridization.

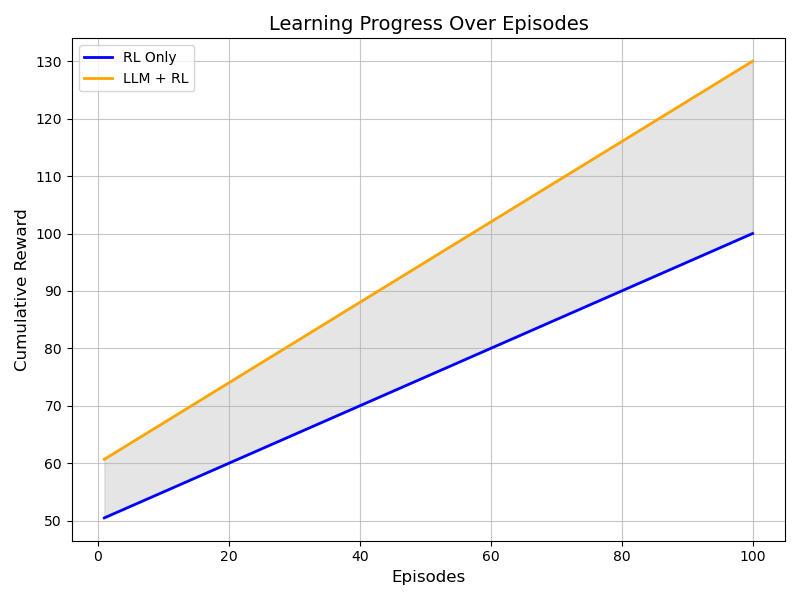

Figure 7: Episode-wise learning curves showing faster and more monotonic cumulative reward growth for the LLM+RL system compared to RL-only agents.

Discussion of Implications

The observed improvements support the claim that semantic task decomposition and dynamic adaptation provided by LLMs complement the low-level control capabilities of RL in physical robotics. The modular architecture allows newer LLMs or RL policies to be swapped in, which supports scaling to multi-robot deployment and to more complex instructions.

Practical implications include reduced programming overhead (natural language as API), higher task generalization, and improved resilience in unstructured or cluttered real-world environments. Theoretically, the results corroborate the hypothesis that separating cognitive task interpretation from motor execution is a scalable path for embodied AI.

Future extensions should address sim-to-real transfer, cross-domain multi-robot coordination, and robustness under out-of-distribution natural language instructions. Direct transfer to hardware platforms, real-time interaction with non-expert users, and tight coupling with computer vision for richer perceptual grounding are prominent next steps.

Conclusion

The approach outlined in "Hybrid Framework for Robotic Manipulation: Integrating Reinforcement Learning and LLMs" (2603.30022) presents a framework for modular, adaptable, and high-performance robotic manipulation that draws on the complementary strengths of LLMs and RL. Empirical results in simulation indicate substantial gains in efficiency, accuracy, and adaptability. The hybrid paradigm is a step toward more generalizable, interactive, and reliable embodied AI, with promising future directions in deployment scale and semantic complexity.