Nemotron-Cascade 2: Post-Training LLMs with Cascade RL and Multi-Domain On-Policy Distillation

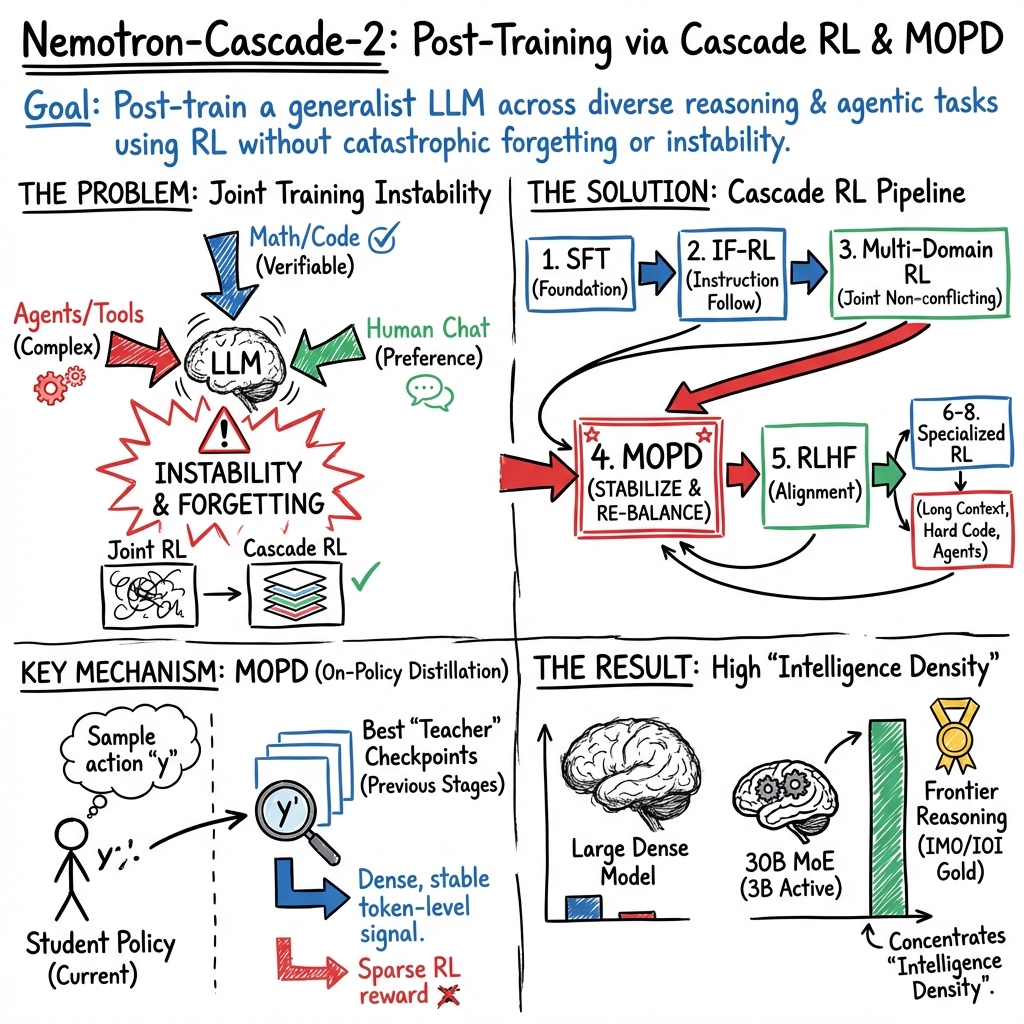

Abstract: We introduce Nemotron-Cascade 2, an open 30B MoE model with 3B activated parameters that delivers best-in-class reasoning and strong agentic capabilities. Despite its compact size, its mathematical and coding reasoning performance approaches that of frontier open models. It is the second open-weight LLM, after DeepSeekV3.2-Speciale-671B-A37B, to achieve Gold Medal-level performance in the 2025 International Mathematical Olympiad (IMO), the International Olympiad in Informatics (IOI), and the ICPC World Finals, demonstrating remarkably high intelligence density with 20x fewer parameters. In contrast to Nemotron-Cascade 1, the key technical advancements are as follows. After SFT on a meticulously curated dataset, we substantially expand Cascade RL to cover a much broader spectrum of reasoning and agentic domains. Furthermore, we introduce multi-domain on-policy distillation from the strongest intermediate teacher models for each domain throughout the Cascade RL process, allowing us to efficiently recover benchmark regressions and sustain strong performance gains along the way. We release the collection of model checkpoints and training data.

Explain it Like I'm 14

Overview

This paper introduces Nemotron‑Cascade 2, a new open‑weight large language model (LLM) from NVIDIA. It’s built to be very good at step‑by‑step reasoning and at acting like a helpful “agent” (for example, writing code, using tools, or following instructions). Even though it’s relatively small for a top model, it reaches gold‑medal–level performance on elite contests in math and coding (like the IMO and IOI), showing that smart training can make a compact model very powerful.

What were the goals?

The researchers wanted to:

- Make a smaller model think and reason as well as much larger ones.

- Train it to follow instructions precisely, solve tough math and coding problems, handle very long documents, and act as a software‑engineering assistant.

- Build a training recipe that adds new skills step by step without forgetting old ones.

- Share the model weights and training data so others can reproduce and build on their work.

How did they do it? (Methods in simple terms)

Think of training the model like preparing a student for a set of demanding exams, with a carefully planned curriculum and coaching.

First: Supervised Fine‑Tuning (SFT)

- What it is: The model studies many examples with correct answers (like worked solutions).

- What data it sees: A wide mix—math (including proofs), competitive programming tasks, science questions, long documents, regular chat, instruction following, safety, tool use, and software‑engineering tasks (including working in a computer terminal).

- How they kept quality high: They used strong “teacher” models to generate answers, filtered out duplicates, checked code with tests, and picked thorough reasoning traces when tests weren’t available.

Analogy: SFT is like giving the student high‑quality textbooks and answer keys to learn from before any practice exams.

Then: Cascade Reinforcement Learning (Cascade RL)

Reinforcement Learning (RL) is like practice with feedback: the model tries, gets a score (reward), and adjusts.

The “cascade” idea is to train in stages, each focused on a particular skill area, ordered to reduce interference between skills (so learning one thing doesn’t make it forget another).

The stages:

- Instruction‑Following RL (IF‑RL): Teaches the model to follow rules exactly (e.g., “answer in under 200 words”). They used only on‑policy data (the model learns from its own latest attempts) and a trick called dynamic filtering so each practice batch actually teaches something.

- Multi‑Domain RL: Groups similar tasks (like multiple‑choice STEM questions, tool calling, and structured output) to improve them together efficiently.

- Multi‑Domain On‑Policy Distillation (MOPD): If earlier RL stages cause some skills to slip, this step acts like studying from the best “teachers” for each skill. The model learns, token by token, to behave more like the strongest version of itself in each domain. This gives dense, fast feedback—like a coach correcting every step of your solution, not just telling you if the final answer is right.

- RL from Human Feedback (RLHF): Optimizes for responses people prefer (creativity, helpfulness, tone), using a reward model that scores pairs of answers.

- Long‑Context RL: Trains the model to handle very long inputs (tens of thousands of tokens and beyond) without hurting other abilities.

- Code RL: Focuses on very hard programming problems with strict pass/fail tests. They allowed long reasoning and code, and built a fast system to run thousands of test cases per training step.

- Software‑Engineering RL (SWE RL): Two flavors:

- Agentless: Improve code repair quality using a reward model when you can’t run the code.

- Agentic: Improve performance when the model acts with tools and scaffolds (like an automated developer environment).
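The dynamic filtering mentioned for IF‑RL in the stage list above can be sketched in a few lines. This is a minimal illustration, assuming one list of scalar rewards per prompt; the function name `dynamic_filter` and the data layout are hypothetical and not taken from the paper.

```python
from typing import Dict, List


def dynamic_filter(rollout_rewards: Dict[str, List[float]]) -> Dict[str, List[float]]:
    """Keep only prompts whose rollouts received mixed rewards.

    If every rollout for a prompt gets the same reward (all correct or all
    incorrect), the group carries no contrast to learn from, so the prompt
    is dropped from the training batch.
    """
    kept = {}
    for prompt_id, rewards in rollout_rewards.items():
        if len(set(rewards)) > 1:  # at least two distinct reward values
            kept[prompt_id] = rewards
    return kept


# Hypothetical batch: three prompts with four rollouts each.
batch = {
    "p1": [1.0, 1.0, 1.0, 1.0],  # all correct   -> dropped
    "p2": [0.0, 0.0, 0.0, 0.0],  # all incorrect -> dropped
    "p3": [1.0, 0.0, 1.0, 0.0],  # mixed         -> kept
}
filtered = dynamic_filter(batch)
print(sorted(filtered))  # ['p3']
```

Only the prompt with disagreeing rollouts survives, which is what lets each practice batch "actually teach something".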

Key technical ideas explained simply:

- Mixture of Experts (MoE): Like a team of specialists inside the model. Only a few “experts” activate per token (here, about 3B parameters active), so it’s efficient but still powerful.

- On‑Policy: Practice only on what the current model produces, which stabilizes learning.

- Distillation: The student model imitates a teacher’s behavior. Here, the teachers are the model’s own best versions at different stages, per domain.

- “Thinking mode” vs. “non‑thinking mode”: The training setup can instruct the model when to produce detailed reasoning steps or just a concise answer, and standardizes how it calls external tools.
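To illustrate the Mixture of Experts idea from the list above, here is a small sketch of top‑k routing for a single token. The expert count, the value of k, and the softmax‑then‑renormalize scheme are assumptions chosen for illustration; the summary does not describe the model's actual router.

```python
import math
from typing import List, Tuple


def top_k_route(gate_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights."""
    # Softmax over all experts (subtract the max for numerical stability).
    m = max(gate_logits)
    exps = [math.exp(x - m) for x in gate_logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Indices of the k most probable experts.
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    # Renormalize so the selected experts' weights sum to 1.
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


# Hypothetical router scores for one token over 8 experts.
logits = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.2]
selected = top_k_route(logits, k=2)
print([i for i, _ in selected])  # [1, 4]
```

Only the selected experts run for this token, which is why the number of active parameters can be much smaller than the total parameter count.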

What did they find?

- Top‑tier reasoning with a compact model:

- Nemotron‑Cascade 2 (30B MoE, ~3B active per token) achieved gold‑medal–level performance on the 2025 International Mathematical Olympiad (IMO), International Olympiad in Informatics (IOI), and ICPC World Finals. That’s especially notable because previous open models at that level were far larger.

- Strong across many tasks:

- Excellent math and coding reasoning.

- Precise instruction following (state‑of‑the‑art on IFBench).

- Handles very long inputs (up to million‑token contexts in related tests).

- Solid performance on alignment/human‑preference tests and agentic tasks (like using tools and acting in a terminal).

- Training design matters:

- Putting Instruction‑Following RL first boosted rule‑following without permanently hurting other areas.

- The new Multi‑Domain On‑Policy Distillation (MOPD) efficiently “re‑balances” the model, recovering skills that dip during other RL stages and converging faster than standard RL alone.

- Where it can improve:

- It still trails a competing model on some knowledge‑heavy and highly agentic tasks, suggesting future gains from stronger pretraining on world knowledge and more agent‑focused RL.

Why does this matter?

- High “intelligence density”: The model delivers big‑model performance with far fewer active parameters, making advanced reasoning more accessible and efficient.

- A practical recipe for training: The Cascade RL + MOPD pipeline is a clear, repeatable way to build strong, balanced models without catastrophic forgetting.

- Better real‑world assistants: These methods make AI better at careful reasoning, following instructions, writing and testing code, using tools, and handling very long documents—all useful for study, research, and software work.

- Open resources: NVIDIA released the model checkpoints and training datasets, enabling researchers, teachers, and developers to reproduce results and push them further.

In short, Nemotron‑Cascade 2 shows that careful, staged training—plus learning from the best version of yourself in each area—can turn a compact model into a top performer in math, coding, and beyond.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Based on the provided text, the paper leaves the following issues unresolved. These points highlight concrete opportunities for follow‑up studies and ablations.

- Underperformance on knowledge‑intensive tasks: The model trails baselines on GPQA‑Diamond, MMLU‑Pro, and HLE. What pretraining data composition, retrieval augmentation, or knowledge‑grounded RL (e.g., doc‑verified rewards) most effectively lifts factual depth without hurting reasoning?

- Weaker agentic performance and tool/terminal use: Results on Terminal Bench 2.0 and BFCL v4 lag. Which additional agentic environments, reward designs, or execution‑verified scaffolds (beyond Workplace Assistant and Terminus 2) close this gap, and how should they be scheduled in the cascade?

- Multilingual post‑training: Multilingual results (e.g., MMLU‑ProX, WMT24++) are not state‑of‑the‑art, and no multilingual RL stages are described. How does Cascade RL extend to multilingual and cross‑lingual settings, and which reward signals generalize across languages?

- Data contamination and decontamination: The paper does not detail decontamination procedures against evaluation suites (IMO/IOI/ICPC, AIME, HMMT, LiveCodeBench, LongBench, ArenaHard). What is the leakage risk given large SFT/RL corpora and teacher‑generated data, and how does leakage affect reported gains?

- Evaluation reproducibility and variance: Key inference settings (e.g., seeds, sampling strategies, self‑consistency, tool integration, test‑time scaling) are only partially described (Appendix C). What is run‑to‑run variance, and how sensitive are results to decoding hyperparameters and chain‑of‑thought usage?

- Human/LLM‑grader dependence: Several training/eval stages use LLM judges (e.g., long‑context RL with Qwen‑judge) and a mix of human/LLM grading for IMO. How robust are conclusions to grader choice, bias, and prompt framing, especially for long context and proof‑style tasks?

- Formal analysis of cascade ordering: The “rule‑of‑thumb” for ordering stages is qualitative. Can we define and measure inter‑domain interference quantitatively and learn an optimal curriculum (e.g., with bandits or bilevel optimization) rather than rely on heuristics?

- MOPD teacher selection and mixing: Teachers are drawn from internal checkpoints (math teacher is the SFT checkpoint; other teachers from RLHF/RLVR). What are the effects of (i) adding domain‑specific teachers for code/SWE/long‑context, (ii) adaptive teacher weighting per example, and (iii) conflict resolution when teacher posteriors disagree?

- MOPD stability and theory: The surrogate objective uses reverse‑KL on sampled tokens with truncated importance weighting (ε_low = 0.5, ε_high = 2.0). What are the convergence properties and bias introduced by truncation, and how do different clipping ranges, warm‑up schedules, and rollout sizes trade off stability vs. performance?

- Limited breadth of MOPD evaluation: Efficiency comparisons focus on AIME and ArenaHard. Does MOPD retain or improve performance on long‑context, agentic, and multilingual tasks, and does it reduce catastrophic forgetting more broadly than GRPO/RLHF?

- KL‑free GRPO in most RL stages: The pipeline removes KL penalties (except RLHF, KL=0.03), risking mode collapse or distribution drift. What is the long‑term effect on diversity, calibration, and transfer, and can lightweight trust‑region or entropy regularization avoid regressions without sacrificing gains?

- RL reward design for close‑to‑correct outputs: Code RL uses strict binary rewards; SWE agentless RL uses a teacher reward; long‑context RL uses LLM judges. Do shaped or hybrid rewards (e.g., partial credit from unit tests, program analyzers, proof checkers) accelerate learning and reduce brittleness without enabling reward hacking?

- Scalability of long‑context RL: Training limits inputs to 32K and sequences to 49K, yet evaluation claims 1M‑token competency. How well do long‑context gains extrapolate beyond the trained range, and what scheduler or memory‑efficient optimization enables RL at 100K–1M contexts?

- Chain‑of‑thought (“thinking mode”) trade‑offs: Several stages are trained exclusively in thinking mode to preserve IF performance. What is the optimal mixing strategy of think/no‑think at training and inference for different tasks, and how to enforce privacy‑preserving or non‑CoT outputs at deployment without degrading quality?

- Safety and alignment evaluation: Safety SFT is small (~4K) and RLHF uses a GenRM; no targeted red‑team or jailbreak benchmarks are reported. How robust is the model to prompt injection (especially with tools), adversarial terminal commands, refusal consistency, and hallucination under long contexts?

- Terminal and tool ecosystem generalization: Tool‑calling is trained with specific schemas and Terminus 2. How well does the model adapt to unseen tools/APIs, OS environments, and error modalities, and what training data/protocols improve zero‑shot tool generalization?

- SWE RL completeness: The paper references execution‑based RL for agentic SWE scaffolds (§4.8.2) but details are missing in the provided text. Which environments, instrumentation, and reward signals are used, and how do they compare to agentless RL in stability and real‑world transfer?

- Coverage of programming languages and problem types: Code RL emphasizes Python/C++14 competitive programming; transfer to other languages, software stacks, and systems tasks is untested. What data and RL signals broaden coverage without diluting code reasoning gains?

- Compute/efficiency reporting: Training steps are given but end‑to‑end compute, wall‑clock, energy, and cost for each stage (especially Code RL with 2,048 verifications/step) are not reported. What is the cost‑performance curve of Cascade RL+MOPD vs alternative pipelines?

- Error and failure analysis: The paper presents headline scores and some ablations but little qualitative error taxonomy (e.g., reasoning failures vs. tool misuse vs. instruction violations). Which failure modes dominate per domain, and which training interventions address them?

- Robustness and calibration: There is no assessment of uncertainty calibration, robustness to prompt paraphrase/format shifts, or sensitivity to adversarial inputs. Can reward‑aware calibration or robustness‑oriented RL improve reliability without hurting peak scores?

- Generalization to unseen RL environments: The cascade focuses on a fixed set of verifiable environments. How well does the model adapt to novel RL tasks with different reward structures, and can meta‑RL or reward‑model distillation improve rapid adaptation?

- Automation of data selection and difficulty: Code RL filters to 3.5K hard prompts (8/8 teacher‑solved removed). Can automatic difficulty‑aware sampling, adaptive curation, or active RL generate better curricula than static filtering?

- Interaction between IF‑RL and RLHF: The paper notes interference but does not quantify the Pareto frontier. Can multi‑objective optimization (e.g., constrained RL, Lagrangian methods) yield predictable trade‑offs between instruction fidelity and human preference alignment?

- Security of tool‑calling protocols: The schema is specified but defenses against tool misuse, prompt injection into tool descriptions, or exfiltration via tool outputs are not evaluated. Which guardrails and verification layers are needed during RL and deployment?

- MoE‑specific analyses: The model is a 30B MoE with 3B active parameters, but there is no ablation on expert routing, load balancing, or expert specialization across cascade stages. How does Cascade RL affect expert utilization and does MOPD change routing behavior?

- Scaling laws for “intelligence density”: The paper claims high intelligence density but lacks quantitative scaling analyses vs parameter count/activated parameters and RL compute. What are the scaling exponents for Cascade RL+MOPD across domains?

- License and provenance of teacher signals: Large portions of SFT and RL rewards rely on external teacher models (e.g., GPT‑OSS‑120B, Qwen) and synthetic data. What are the licensing/provenance implications, and how sensitive are results to replacing these teachers with fully open alternatives?
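The MOPD item in the list above mentions a reverse‑KL surrogate on sampled tokens with importance weights truncated to the range 0.5 to 2.0. The sketch below shows one plausible reading of that description, not the paper's exact objective: it assumes the weight is the ratio of the training policy's probability to the rollout policy's probability for each sampled token, clipped to the stated range, and that the per‑token term is the student‑minus‑teacher log‑probability. All function and variable names are hypothetical.

```python
import math
from typing import List

EPS_LOW, EPS_HIGH = 0.5, 2.0  # truncation range quoted in the text


def truncated_weight(logp_train: float, logp_rollout: float) -> float:
    """Importance ratio between training and rollout policies, clipped."""
    ratio = math.exp(logp_train - logp_rollout)
    return min(max(ratio, EPS_LOW), EPS_HIGH)


def reverse_kl_surrogate(
    logp_student: List[float],
    logp_teacher: List[float],
    logp_rollout: List[float],
) -> float:
    """Weighted mean of the per-token log-ratio on sampled tokens.

    In a real trainer the weight would be treated as a constant (no
    gradient) and only logp_student would be differentiated; this sketch
    only computes the scalar value.
    """
    terms = []
    for ls, lt, lr in zip(logp_student, logp_teacher, logp_rollout):
        w = truncated_weight(ls, lr)
        terms.append(w * (ls - lt))  # sample estimate of reverse KL
    return sum(terms) / len(terms)


# Two sampled tokens with made-up probabilities.
student = [math.log(0.5), math.log(0.2)]
teacher = [math.log(0.5), math.log(0.1)]
rollout = [math.log(0.5), math.log(0.05)]  # second ratio is 4.0, clipped to 2.0
loss = reverse_kl_surrogate(student, teacher, rollout)
print(round(loss, 4))  # 0.6931
```

The second token shows the effect of truncation: an untruncated ratio of 4.0 would double that token's contribution, which is the kind of variance the clipping range is meant to bound.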

Practical Applications

Immediate Applications

Below are specific, deployable uses that leverage the model, the Cascade RL pipeline, and multi-domain on‑policy distillation (MOPD). Each item notes target sectors, suggested tools/workflows, and dependencies or assumptions.

- Cost-effective high-reasoning assistant for on-prem or VPC deployment

- Sectors: software, finance, legal, manufacturing, public sector

- What it enables: Run a compact, open-weight model with near-frontier reasoning for math/coding, alignment, and instruction following, reducing inference cost compared to larger models (70B to >400B parameters) while retaining strong performance.

- Tools/workflows: Serve Nemotron-Cascade‑2‑30B‑A3B via Triton/Helm charts; adopt the paper’s chat template with <tools> and <tool_call> tags for controllable tool-use.

- Assumptions/dependencies: Access to GPUs with sufficient memory; adoption of the provided prompt templates; internal governance around chain-of-thought exposure (use “non-thinking mode” or strip reasoning content from user-visible channels).

- Software engineering copilot with verified coding and repair

- Sectors: software, DevOps, embedded systems

- What it enables: High pass@1 on LiveCodeBench and SWE-bench Verified; automated bug localization/repair, test generation, and CI suggestions; terminal workflows for repo maintenance.

- Tools/workflows: Integrate with OpenHands/SWE-Agent; run in a sandboxed container; gate merges via unit tests and policy checks; adopt the paper’s tool-calling and terminal agent scaffolds.

- Assumptions/dependencies: Test suites for verification; isolated Docker containers for execution; license compatibility for integrating external scaffolds.

- Long-context document and log analysis

- Sectors: legal, finance, enterprise IT, cybersecurity, operations

- What it enables: Summarize and query very long inputs (evaluated at up to 1M tokens; long-context RL used 32–49K tokens during training) for contracts, regulatory filings, system logs, or incident timelines.

- Tools/workflows: Chunk-and-stitch workflows for inputs beyond the practical 32–49K-token limits; retrieval-augmented generation (RAG); structured output constraints for downstream systems.

- Assumptions/dependencies: Memory and tokenizer that support long-context inference; stable prompt patterns for structured outputs.

- Enterprise instruction-following and RPA with verifiable constraints

- Sectors: RPA, back-office ops, customer service

- What it enables: High-accuracy adherence to strict formats and limits (e.g., word counts, schemas), enabling reliable automated report drafting, templated email responses, and structured ETL narratives.

- Tools/workflows: IF-RL dataset patterns; dynamic filtering and overlong penalty to reduce output bloat; schema validators in the tool loop.

- Assumptions/dependencies: Clear, machine-verifiable constraints; enforcement layer that rejects misformatted outputs.

- STEM and competitive programming tutor

- Sectors: education (secondary, higher ed), EdTech

- What it enables: Step-by-step problem solving, proof guidance, and solution verification aligned with Olympiad-level reasoning; generation of diverse practice sets with verified answers.

- Tools/workflows: “Thinking mode” for pedagogical explanations; pairing with auto-graders or ProofBench-like rubrics; difficulty-adaptive curriculums.

- Assumptions/dependencies: Policy choice around showing/hiding chain-of-thought; calibrated grading rubrics for proof verification.

- Research coding assistant for scientific workflows

- Sectors: academia, biotech, materials, physics, chemistry

- What it enables: Scaffolded scientific coding (Python/C++) for simulation/analysis; structured data pipelines; plot and results reproduction scripts.

- Tools/workflows: Jupyter + isolated runtime; test-based verification for core functions; integration with scientific libraries and lab data stores.

- Assumptions/dependencies: Domain-specific validation datasets; governance to prevent hallucinated analysis; licensing for scientific codebases.

- Tool-integrated workplace assistant for multi-step tasks

- Sectors: enterprise IT, HR, procurement, analytics

- What it enables: Multi-tool orchestration (calendar, CRM, ticketing, analytics) using the model’s <tools>/<tool_call> interface for deterministic, auditable actions.

- Tools/workflows: Function registries; tool-schema catalogs; execution sandboxes; audit logs per tool call.

- Assumptions/dependencies: Reliable tool adapters; rate limits and permissions model; fallbacks for tool failures.

- Customer support triage and knowledge management

- Sectors: SaaS, telecom, retail

- What it enables: Long-context retrieval over large KBs; structured response formatting; safe refusal behavior on unsafe requests.

- Tools/workflows: RAG backed by content safety filters; IF-RL style format validators; analytics dashboards for escalations.

- Assumptions/dependencies: Up-to-date KB embedding; safety datasets tuned to domain; multilingual coverage constraints (strong but not state-of-the-art).

- Data engineering and analytics assistant

- Sectors: data platforms, finance, ops

- What it enables: SQL generation, log parsing, schema mapping; deterministic, schema-checked outputs that feed ELT/BI pipelines.

- Tools/workflows: Structured-output RL patterns; unit-test harnesses for queries and transforms; lineage-aware execution in development sandboxes.

- Assumptions/dependencies: Clearly defined target schemas; test datasets for validation; role-based access controls.

- Applied AI training teams: faster, more stable post-training

- Sectors: model labs, enterprise AI teams

- What it enables: Adopt Cascade RL for multi-domain gains without catastrophic forgetting; use MOPD to recover regressions and converge faster than GRPO or RLHF alone.

- Tools/workflows: Nemo-RL/Nemo-Gym; GRPO without KL; dynamic filtering; truncated importance weighting in MOPD; curriculum design with domain ordering.

- Assumptions/dependencies: Access to teacher checkpoints or domain-specific teacher slices; compute for on-policy rollouts and verification servers.

- Governance and audit-ready AI deployments

- Sectors: public sector, finance, healthcare (non-diagnostic)

- What it enables: Open weights/data facilitate reproducibility and auditing; verifiable instruction adherence and structured outputs support compliance documentation.

- Tools/workflows: Policy prompts + tool-call logs; red-teaming with the provided safety blends; benchmark-driven QA gates (IFBench, ArenaHard).

- Assumptions/dependencies: Organizational policies for chain-of-thought redaction; domain-aligned safety and compliance review.

- Internal translation and multilingual assistance (with caveats)

- Sectors: global enterprises, support

- What it enables: High-quality translation to/from English for internal use; multilingual QA over documents.

- Tools/workflows: Language-aware routing; quality checks with back-translation; glossary enforcement via structured constraints.

- Assumptions/dependencies: Not SOTA on multilingual; critical translations should undergo human review.

Long-Term Applications

These opportunities require additional research, domain adaptation, larger-scale training, or system integration beyond what is reported.

- Generalist enterprise agents with balanced multi-skill performance

- Sectors: enterprise software, operations

- Vision: Use Cascade RL + MOPD to harmonize many skills (RPA, tool use, long-context, alignment) into a single policy that avoids inter-domain interference at scale.

- Dependencies: Broader multi-domain RL blends; richer verifiable reward functions; monitoring for capability drift.

- Autonomous software engineer from issue to PR to deploy

- Sectors: software, DevOps

- Vision: End-to-end agent that creates branches, writes/repairs code, runs tests, updates docs, and opens PRs under strict gates.

- Dependencies: Stronger agentic RL (terminal + IDE), secure credentials handling, expanded evaluation frameworks (beyond SWE-bench), org-approved guardrails.

- Formal proof assistant and verifier for mathematical research

- Sectors: academia, formal methods, safety-critical software

- Vision: Combine proof generation and verification with formal systems to draft and check proofs at scale; assist in formal specs and model checking.

- Dependencies: Integration with Lean/Coq/Isabelle; alignment between natural-language proofs and formal encodings; curated gold-standard corpora.

- Compliance and policy “adherence bots” with verifiable constraints

- Sectors: finance, insurance, public sector

- Vision: Agents that draft, check, and enforce policy-conformant documents and workflows with machine-verifiable constraints (length, fields, references, citations).

- Dependencies: Domain-specific IF-RL datasets; legally verified templates; auditable tool chains and storage.

- Scientific discovery copilot linking hypotheses, code, and long-context literature

- Sectors: pharma, materials, climate science

- Vision: Orchestrate literature synthesis, code experiments, data analysis, and figure generation under a unified, verifiable pipeline.

- Dependencies: Benchmarked scientific reward signals (e.g., replicability scores), data governance, integration with lab notebooks/LIMS.

- Safety-critical assistants in healthcare (non-diagnostic to diagnostic transition)

- Sectors: healthcare

- Vision: Move from document summarization and protocol adherence to clinical decision support with rigorous alignment and verification.

- Dependencies: FDA/EMA compliance, medical knowledge pretraining, domain RLHF with licensed data, bias and safety audits.

- Financial analysis copilots for regulated environments

- Sectors: banking, asset management, insurance

- Vision: Long-context analysis of filings, model-driven generation of structured reports, and verified risk/compliance checklists.

- Dependencies: Domain RAG; strict output schemas and audit trails; human-in-the-loop sign-off.

- Industrial/energy operations assistants

- Sectors: energy, manufacturing, utilities

- Vision: Long-context reasoning over maintenance logs and SCADA/PLC code assistance, with tool-calls to CMMS and alerting systems.

- Dependencies: Secure on-prem deployment; adapters to industrial systems; domain reward functions for correctness and safety.

- Robotics and embodied agents trained with Cascade RL + on-policy distillation

- Sectors: robotics, logistics

- Vision: Extend the cascade/distillation paradigm to multi-skill robotic policies (planning, manipulation, navigation) to balance capabilities and prevent forgetting.

- Dependencies: Bridging from language to sensorimotor policy learning, verifiable reward definitions, sim2real pipelines.

- Personalized, mastery-based tutoring at scale

- Sectors: education

- Vision: Multi-subject tutors that adapt difficulty, verify student steps, and provide formative feedback with consistent adherence to curricular standards.

- Dependencies: Student-modeling and privacy-preserving analytics; aligned curricula; guardrails for equitable outcomes.

- Training-as-a-service using efficient MOPD for enterprise model adaptation

- Sectors: model providers, large enterprises

- Vision: Offer rapid, token-efficient post-training to combine multiple internal specialists (teachers) into a single enterprise model.

- Dependencies: Access to domain teachers; data governance; robust distillation QA and rollback mechanisms.

- Edge/decentralized deployments with MoE routing

- Sectors: telco, automotive, defense

- Vision: Exploit low activated parameters for partial on-device inference, routing heavy steps to the cloud, balancing latency and privacy.

- Dependencies: Reliable MoE gating across heterogeneous hardware; bandwidth-aware orchestration; model partitioning toolchains.

Cross-cutting assumptions and dependencies

- Training replication relies on: released Nemotron-Cascade-2 weights/datasets; Nemo-RL/Nemo-Gym; availability/licensing of teacher and reward models (e.g., Qwen3-235B generative RM, GPT-OSS-120B, DeepSeek variants).

- Compute considerations: On-policy RL and code verification are resource-intensive (e.g., 2,048 rollouts per step, 384 CPU cores for verification in the paper). Production retraining may require scaled infra.

- Safety and compliance: Exposure of chain-of-thought should be governed by policy; apply non-thinking mode for user-facing tasks; domain-specific safety tuning required for high-stakes sectors.

- Tool ecosystem: Real-world tool-calls require robust adapters, permissioning, and observability; failure modes must have fallbacks and human escalation.

- Knowledge coverage: The paper notes relative underperformance on some knowledge-intensive/agentic tasks vs certain baselines; domain pretraining and RL may be needed for top-tier results in specific verticals.

Glossary

- AdamW: A variant of the Adam optimizer that decouples weight decay from the gradient update to improve generalization. "We adopt a learning rate of 2e-6 with AdamW (Kingma, 2014), and set both the entropy loss coefficient and KL loss coefficient to 0."

- Agentic: Describing autonomous, tool-using behaviors where models plan, decide, and act to achieve goals. "Reinforcement Learning (RL) ... has emerged as the cornerstone of LLM post-training, driving advances in reasoning, agentic capabilities, and real-world problem-solving."

- Agentless RL: Reinforcement learning for software tasks without executing actions inside an interactive agent scaffold (e.g., no live environment), often relying on learned or heuristic rewards. "We perform agentless SWE RL with a batch size of 128 × 16 = 2,048 (128 prompts with 16 rollouts per prompt), a maximum sequence length of 98,304, and a learning rate of 3 × 10⁻⁶ using the AdamW optimizer."

- Asynchronous reward verification server: Infrastructure that parallelizes and decouples reward computation/verification from policy updates to handle high-throughput evaluation. "we deploy an asynchronous reward verification server that completes each batch in 427.2 seconds across 384 CPU cores."

- Binary reward function: A reward scheme that assigns a 0/1 signal based on success or failure to reduce reward hacking and ambiguity. "We adopt the strict binary reward function to avoid potential reward hacking"

- Cascade RL: A sequential, domain-wise RL framework that trains across specialized domains to mitigate interference and improve stability. "Cascade RL significantly simplifies the engineering complexity associated with multi-domain RL while achieving state-of-the-art performance across a wide range of benchmarks."

- Catastrophic forgetting: The undesirable loss of previously learned capabilities when training on new tasks. "First, domain-specific RL stages are remarkably resistant to catastrophic forgetting."

- Code RL: Reinforcement learning focused on code generation/repair with verifiable execution-based rewards. "We conduct Code RL using a batch size of 128 and a learning rate of 3 × 10⁻⁶ with the AdamW optimizer."

- Distribution shift: A mismatch between training and deployment data distributions that can degrade performance. "reducing distribution shift and avoiding additional alignment issues."

- Dynamic filtering: An RL training technique that discards prompts whose rollouts are all correct or all incorrect to ensure informative gradients. "we also apply dynamic filtering (Yu et al., 2025)."

- Entropy collapse: A failure mode where a policy becomes overly deterministic (low entropy), reducing exploration and diversity. "This on-policy setup contributes to stable RL training and mitigates entropy collapse."

- Entropy loss coefficient: The weight on an entropy-regularization term in the RL objective to encourage exploration. "set both the entropy loss coefficient and KL loss coefficient to 0."

- Generative reward model (GenRM): An LLM used to score and compare candidate responses for RLHF. "we utilize Qwen3-235B-A22B-Thinking-2507 as our generative reward model (GenRM), trained via the HelpSteer3 framework (Wang et al., 2025)."

- Group Relative Policy Optimization (GRPO): A policy-gradient algorithm that normalizes rewards across groups of rollouts for stability. "we use Group Relative Policy Optimization (GRPO) algorithm (Shao et al., 2024) with strict on-policy training"

- IF-RL (Instruction-Following RL): RL that optimizes adherence to objective, verifiable instructions and formatting constraints. "we position IF-RL as the first stage of our Cascade RL training"

- I/O fingerprinting: Deduplication using signatures of input/output behaviors to identify duplicate prompts/problems. "we apply strict deduplication using two methods: (1) sample I/O fingerprinting and (2) n-gram-based text analysis."

- Importance sampling ratio: The probability ratio between behavior and target policies used to correct off-policy bias; equals 1 in strict on-policy setups. "making the importance sampling ratio exactly 1."

- KL divergence: A measure of difference between probability distributions often used as a regularization term in RL. "we remove KL divergence term entirely"

- KL loss coefficient: The weight on the KL-regularization term controlling deviation from a reference policy. "set both the entropy loss coefficient and KL loss coefficient to 0."

- Length-normalized reward adjustment: Reward scaling that compensates for response length so models aren’t rewarded solely for verbosity. "apply the same length-normalized reward adjustment and quality-gated conciseness bonus (Blakeman et al., 2025)."

- LLM judge: An LLM used as an automatic evaluator to score model outputs in lieu of deterministic tests. "use Qwen3-235B-A22B-Instruct-2507 as an LLM judge to evaluate model rollouts for question answering tasks."

- Long-context RL: RL that targets tasks with very long inputs to improve reasoning over large context windows. "Following RLHF, we conduct a stage of long-context RL"

- MCQA (Multi-choice question answering): Benchmarks or tasks where the model selects from multiple answer options. "multi-choice question answering (MCQA) in the STEM domain"

- Mixture-of-Experts (MoE): A model architecture that routes tokens to specialized expert subnetworks, activating only a subset per input. "an open 30B Mixture-of-Experts (MoE) model with 3B activated parameters."

- MOPD (Multi-domain On-Policy Distillation): A distillation method that on-policy samples from a student and distills from domain-specialized teachers across tasks. "We therefore adopt multi-domain on-policy distillation (MOPD) (Agarwal et al., 2024; Lu and Lab, 2025; Xiao et al., 2026; Yang et al., 2025; Zeng et al., 2026) as a complementary post-training stage."

- Multi-domain RL: Joint RL training on a mixture of domains with similar response lengths and verification costs. "we conduct an additional stage of multi-domain RL that covers three capabilities"

- On-policy: A training setup where data is collected using the current policy being updated, reducing mismatch and stabilizing learning. "with strict on-policy training"

- Overlong penalty: A penalty that assigns zero reward to generations that exceed a specified maximum length. "we apply overlong penalty, which penalizes samples that fail to complete generation within the maximum sequence length with a zero reward."

- Pass@1: The probability that the first sampled solution passes verification; a standard code/math metric. "Benchmark Metric: pass@1."

- Quality-gated conciseness bonus: A reward shaping term that encourages shorter responses only when quality thresholds are met. "and apply the same length-normalized reward adjustment and quality-gated conciseness bonus (Blakeman et al., 2025)."

- REINFORCE objective: The classic Monte Carlo policy gradient objective used in RL without value baselines. "simplifies the GRPO objective to the standard REINFORCE objective (Williams, 1992)"

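- Reverse-KL: The KL divergence KL(student ‖ teacher), with the expectation taken over tokens sampled from the student rather than the teacher; minimizing it pushes the student's probability mass toward tokens the teacher also rates highly. "define the token-level distillation advantage using reverse-KL as"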

- Reward hacking: Exploiting weaknesses in the reward function to get high reward without solving the intended task. "to avoid potential reward hacking"

- RLHF (Reinforcement Learning from Human Feedback): RL that optimizes policies using human preference signals or learned reward models. "We continue with RLHF (§4.5) for human alignment"

- RLVR: A verifiable-reward RL setup where outputs are automatically checked against ground truth. "verified against the ground-truth answer a in RLVR."

- Rollouts: Samples/trajectories generated by a policy for training or evaluation. "we generate a group of G rollouts from the current policy"

- SFT (Supervised Fine-Tuning): The supervised learning stage that adapts a pretrained model to curated datasets before RL. "In this section, we describe the training framework and data curation process for supervised fine-tuning (SFT)"

- Stop-gradient: An operation that prevents gradients from flowing through a term during backpropagation. "where sg[.] denotes stop-gradient."

- Thinking mode: A generation mode that emits explicit reasoning traces (e.g., within dedicated reasoning tags) before the final answer. "we train RLHF exclusively in the thinking mode."

- Token mask: A mask indicating which tokens are valid for loss/optimization in sequence-level training. "retained by the token mask."

- Token-level distillation advantage: A per-token signal measuring how much the teacher prefers the sampled token over the student to guide distillation. "MOPD provides a dense token-level distillation advantage"

- Top-p: Nucleus sampling parameter that restricts sampling to the smallest set of tokens whose cumulative probability exceeds p. "with temperature 1.0 and top-p 1.0."

- Truncated importance weighting: Clipping or bounding importance weights to stabilize optimization when correcting for train/infer mismatch. "we apply truncated importance weighting to account for train-infer mismatch:"

- Tool calling: LLM-invoked function calls to external tools/APIs guided by a tool specification in the prompt. "For tool calling task, we specify all available tools in the system prompt within the <tools> and </tools> tags"

- Tool-Integrated Reasoning (TIR): Evaluation or reasoning where external tool use is integrated into the process/metric. "Numbers in brackets refers to Tool-Integrated Reasoning (TIR) results."

- Verification wall-clock times: The real elapsed time required to check outputs, affecting RL batch latency. "verification wall-clock times are more uniform within a domain than across multiple domains trained jointly."
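
Several of the entries above (binary reward function, overlong penalty, dynamic filtering, GRPO) fit together in a single reward-to-advantage pipeline. The following is a minimal sketch of how they could combine for one prompt's group of rollouts. It is not the paper's implementation: the function names, the `eps` constant, and the choice to divide by the group standard deviation (as in the commonly cited GRPO formulation) are assumptions made for illustration.

```python
from statistics import mean, pstdev


def binary_reward(passed: bool, finished: bool) -> float:
    """Strict 0/1 reward. A rollout that did not finish within the
    maximum sequence length (overlong) gets zero, as does a failed one."""
    return 1.0 if (passed and finished) else 0.0


def keep_prompt(rewards: list[float]) -> bool:
    """Dynamic filtering: keep a prompt only if its rollouts are neither
    all correct nor all incorrect, so the group carries a learning signal."""
    return 0.0 < sum(rewards) < len(rewards)


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style advantages: center each reward on the group mean and
    scale by the group standard deviation (assumed formulation)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical group of 4 rollouts for one prompt: (passed, finished).
rollouts = [(True, True), (False, True), (True, False), (False, True)]
rewards = [binary_reward(p, f) for p, f in rollouts]

if keep_prompt(rewards):
    advantages = group_relative_advantages(rewards)
    print(rewards, advantages)
```

In this example the third rollout was correct but overlong, so it receives zero reward; only the first rollout ends up with a positive advantage. A group where every rollout scored 1.0 or every rollout scored 0.0 would be dropped by `keep_prompt`, since all its advantages would be zero.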
