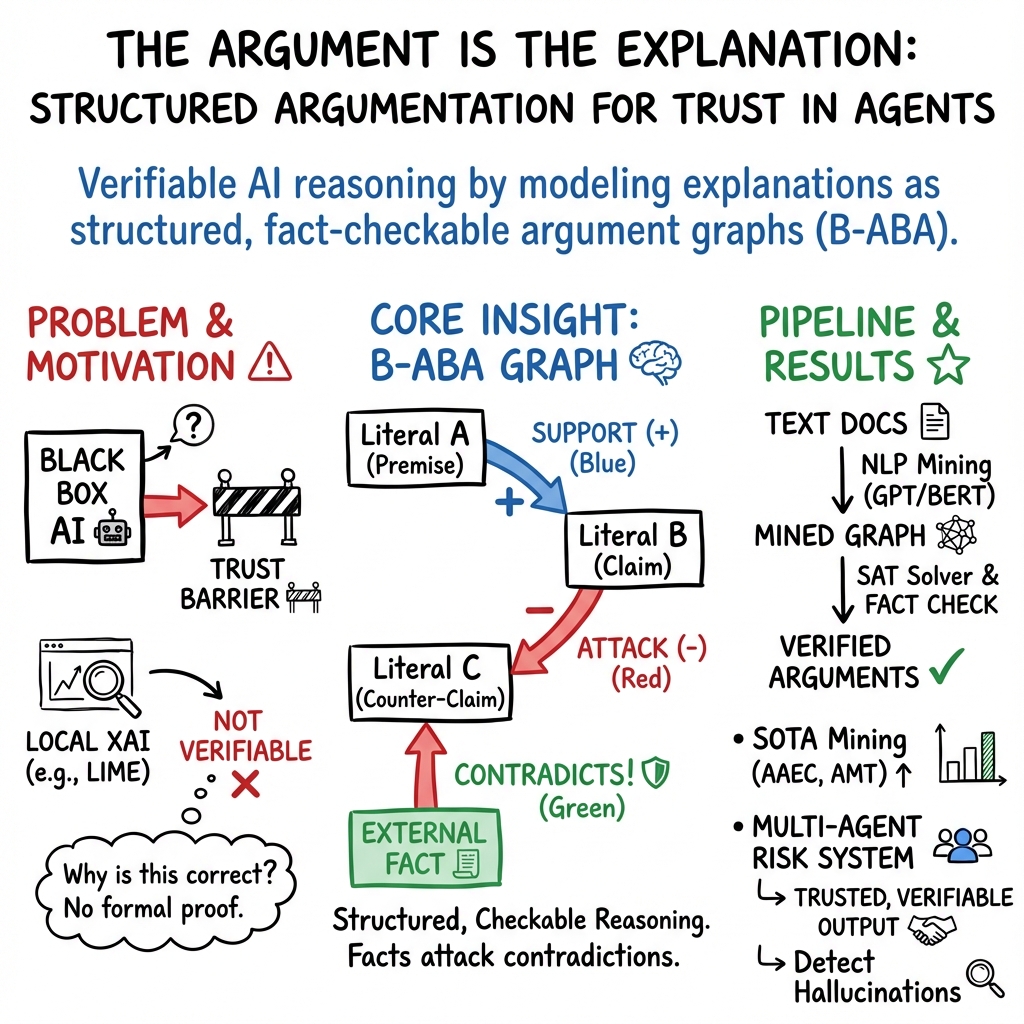

- The paper introduces a structured argumentation framework based on Bipolar Assumption-Based Argumentation (B-ABA) that makes AI explanations verifiable. Its argument-mining stage reaches a token-level F1 of 94.44.

- It details a pipeline that converts natural language into structured arguments with support and attack relations, enabling automated fact-checking and risk assessments.

- In a multi-agent risk assessment setting, injecting fact nodes roughly triples the baseline attack rate. The authors treat this as evidence of automatic verification. The shared argument graph also supports collaborative, iterative decision-making.

Structured Argumentation for Trust in Agents

Introduction

The paper, "The Argument is the Explanation: Structured Argumentation for Trust in Agents" (2510.03442), argues that AI explainability should rest on structured argumentation, so that explanations can be verified and, through verification, trusted. The authors critique the field's focus on mechanistic transparency. They instead advocate verifiable reasoning chains, akin to the arguments humans use to justify decisions to one another. This shift targets the limitations of both current interpretability methods and LLM-generated explanations.

Structured Argumentation Systems (SASs), particularly Bipolar Assumption-Based Argumentation (B-ABA), form the core of this approach. The paper applies these systems to risk assessment carried out by specialized AI agents. It emphasizes automatic hallucination detection through argument graphs whose edges encode support and attack relations.
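To make the graph structure concrete, here is a minimal Python sketch of a bipolar argument graph. It is a simplification rather than the paper's formalism. B-ABA proper is defined over assumptions, rules, and contraries, whereas this sketch flattens everything into nodes with support and attack edges. The class name `BipolarGraph` is hypothetical. Acceptance is computed with Dung's grounded semantics over the attack edges only. Supports are recorded but play no role in this acceptance check.

```python
from dataclasses import dataclass, field


@dataclass
class BipolarGraph:
    """Hypothetical, simplified bipolar argument graph (not full B-ABA)."""

    nodes: set[str] = field(default_factory=set)
    supports: set[tuple[str, str]] = field(default_factory=set)  # (src, dst)
    attacks: set[tuple[str, str]] = field(default_factory=set)   # (src, dst)

    def add_support(self, src: str, dst: str) -> None:
        self.nodes |= {src, dst}
        self.supports.add((src, dst))

    def add_attack(self, src: str, dst: str) -> None:
        self.nodes |= {src, dst}
        self.attacks.add((src, dst))

    def attackers(self, node: str) -> set[str]:
        return {src for src, dst in self.attacks if dst == node}

    def grounded_extension(self) -> set[str]:
        # Iterate F(S) = {a | every attacker of a is attacked by some member of S}
        # starting from the empty set. F is monotone, so on a finite graph the
        # iteration reaches its least fixed point: the grounded extension.
        accepted: set[str] = set()
        while True:
            defended = {
                node
                for node in self.nodes
                if all(self.attackers(att) & accepted for att in self.attackers(node))
            }
            if defended == accepted:
                return accepted
            accepted = defended


if __name__ == "__main__":
    g = BipolarGraph()
    g.add_support("redundant pumps", "low outage risk")
    g.add_attack("shared power supply", "redundant pumps")
    # The unattacked nodes are accepted; "redundant pumps" is defeated.
    print(sorted(g.grounded_extension()))  # ['low outage risk', 'shared power supply']
```

Grounded semantics is used here because it is the most sceptical standard choice and is easy to compute. It is not claimed to be the semantics the paper uses.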

Implementation of Structured Argumentation

The proposed framework converts natural language text into structured arguments to support both explanation and verification. The pipeline extracts argumentative discourse units (ADUs) and then classifies the relations between them. This classification uses learned models such as fine-tuned GPT-4.1 and ModernBERT. The authors report improvements over prior results: 94.44 macro F1 on the AAEC corpus and 0.81 macro F1 for relation classification on Argumentative MicroTexts.
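The two mining steps can be sketched as follows. This is a deliberately toy version, assuming one ADU per sentence. A hypothetical discourse-marker rule (`ATTACK_MARKERS`) stands in for the learned relation classifiers the paper actually uses. The function names are illustrative only. The sketch also assumes each unit responds to the unit immediately before it, which real argumentative text does not guarantee.

```python
import re

# Hypothetical discourse markers used by this toy classifier only.
ATTACK_MARKERS = {"however", "but", "although", "yet"}


def extract_adus(text: str) -> list[str]:
    """Toy ADU segmentation: treat each sentence as one unit."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def classify_relation(source: str, target: str) -> str:
    """Toy stand-in for a learned classifier labelling the edge source -> target.

    A real classifier would read both units; this rule only inspects the
    first word of the source unit.
    """
    first_word = source.split()[0].lower().strip(",;:")
    return "attack" if first_word in ATTACK_MARKERS else "support"


def mine_arguments(text: str) -> tuple[list[str], list[tuple[int, int, str]]]:
    adus = extract_adus(text)
    # Simplification: each unit is related only to the unit before it.
    relations = [
        (i, i - 1, classify_relation(adus[i], adus[i - 1]))
        for i in range(1, len(adus))
    ]
    return adus, relations


if __name__ == "__main__":
    adus, relations = mine_arguments(
        "Pumps can fail under load. Redundant pumps reduce this risk. "
        "However, both pumps share one power supply."
    )
    for src, dst, label in relations:
        print(f"{adus[src]!r} --{label}--> {adus[dst]!r}")
```

Keeping segmentation and classification as separate functions mirrors the two-stage structure described above. Either stage could be swapped for a model call without touching the other.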

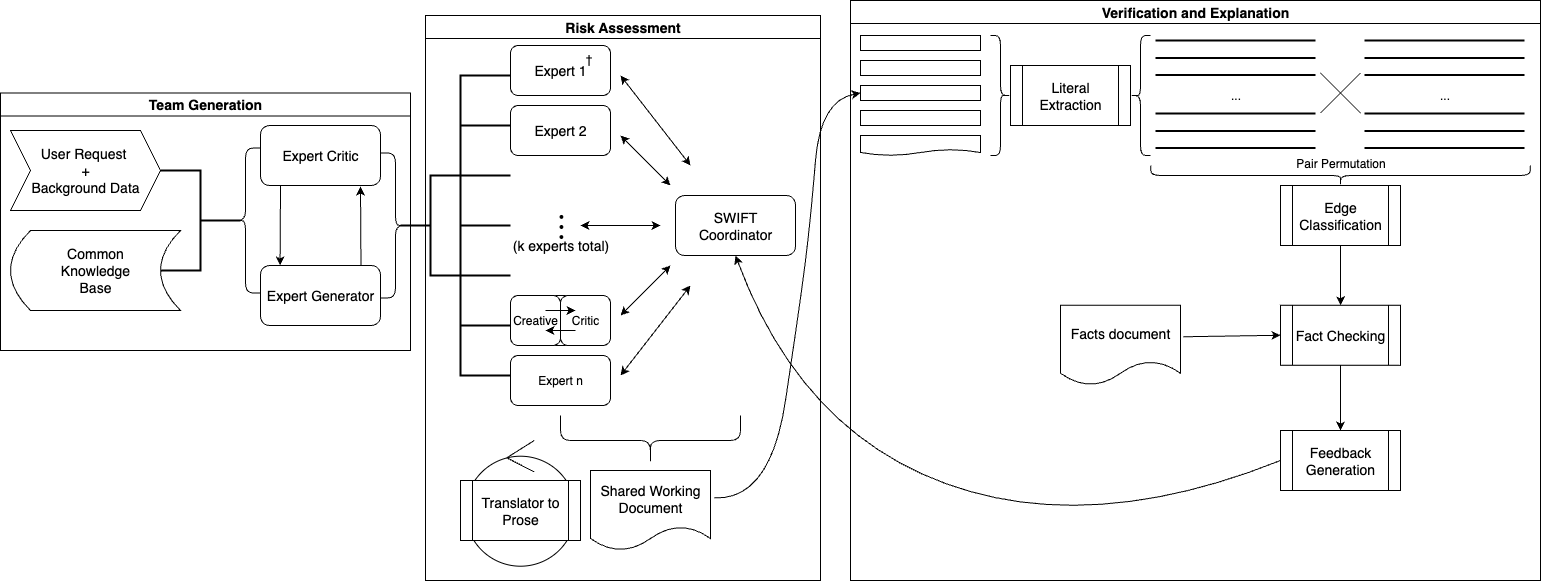

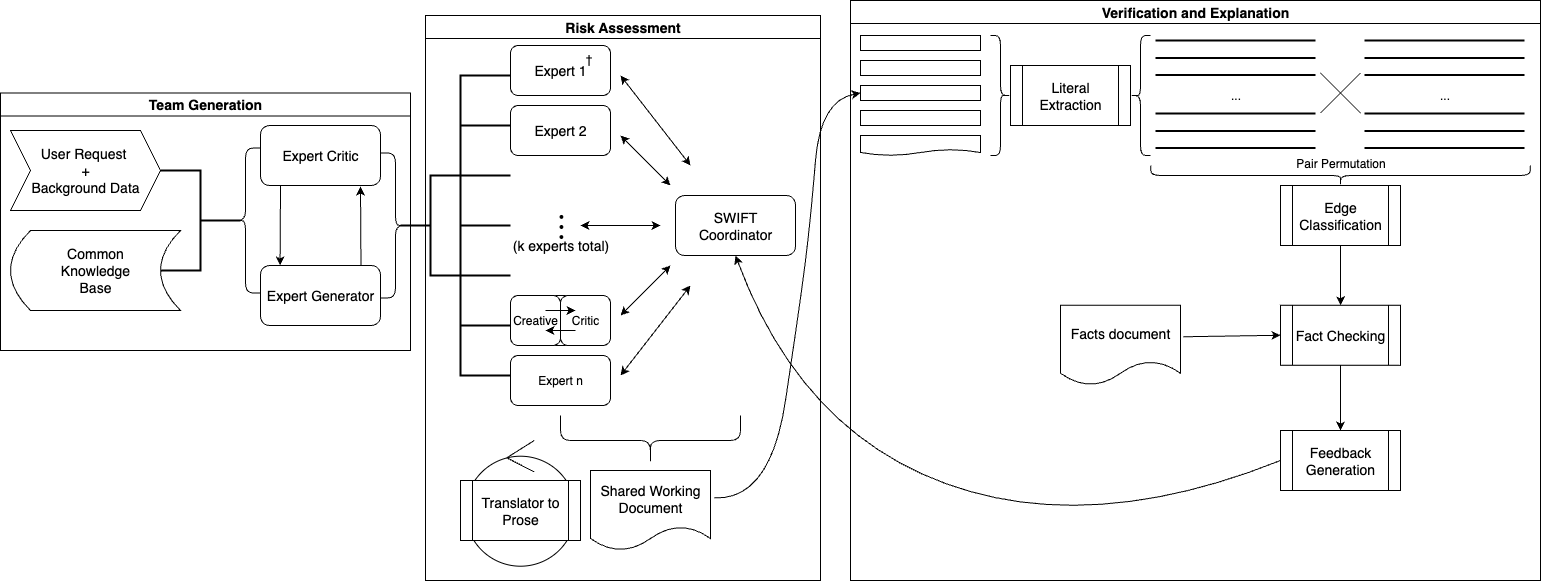

The paper applies B-ABA to a multi-agent risk assessment system built on the Structured What-If Technique (SWIFT). Expert agents collaborate on a shared document, which allows systematic risk analysis and iterative refinement without retraining any model. For verification, fact nodes are added to the B-ABA graph. When a fact contradicts an argument, the resulting attack edge flags the contradiction, enabling automated fact-checking and feedback to the agents.

Figure 1: The complete pipeline from team generation to graph construction and verification. The SWIFT coordinator grants control to, and receives it back from, expert agents who write to a shared working document. Argument mining then converts the prose into literals, classifies the relations between them, builds a B-ABA graph with fact nodes, and returns feedback.
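The coordinator's turn-taking can be pictured with the toy loop below. It is a sketch under stated assumptions. The experts here are plain Python callables rather than LLM agents. The prompts are SWIFT-style "guide words", a common device in the technique but not confirmed as the paper's prompt format. `run_swift_session` and the `Expert` signature are hypothetical names.

```python
from typing import Callable

# Hypothetical expert interface: (guide word, shared document so far) -> new text.
Expert = Callable[[str, list[str]], str]


def run_swift_session(
    guide_words: list[str], experts: dict[str, Expert], rounds: int = 1
) -> list[str]:
    """Toy coordinator loop that hands control to one expert at a time."""
    document: list[str] = []
    for _ in range(rounds):
        for guide_word in guide_words:
            for name, expert in experts.items():
                # Grant control: the expert reads a copy of the shared document.
                contribution = expert(guide_word, list(document))
                # Control returns to the coordinator, which records the text.
                if contribution:
                    document.append(f"[{name}] {contribution}")
    return document


if __name__ == "__main__":
    experts = {
        "safety": lambda gw, doc: f"What if {gw} fails?",
        "ops": lambda gw, doc: f"Noted {len(doc)} prior entries" if gw == "power" else "",
    }
    for line in run_swift_session(["power", "cooling"], experts):
        print(line)
```

Passing a copy of the document keeps the coordinator as the only writer. This loosely matches the caption's description of control being granted and returned. The resulting document would then feed the mining and graph-construction stages.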

Evaluation and Results

The paper reports improvements over previously published results for both argument extraction and relation classification. A GPT-4.1 model fine-tuned on the AAEC corpus achieved a token-level F1 score of 94.44 for argument extraction, 5.7 points above the best previous method. For relation classification, ModernBERT reached a macro F1 score of 0.79, comparable to larger models such as GPT-4.1. Its efficiency and lower computational cost make it a viable alternative.
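As a refresher on the metrics quoted above, the sketch below computes per-label F1 over token labels and averages it across labels (macro F1). This is the generic definition. The paper's exact scoring protocol, such as its label set and whether non-argumentative tokens are scored, is not given here, so the labels in the example are purely illustrative.

```python
def f1_for_label(gold: list[str], pred: list[str], label: str) -> float:
    """F1 for one label, treating each token position as a single decision."""
    tp = sum(g == label and p == label for g, p in zip(gold, pred))
    fp = sum(g != label and p == label for g, p in zip(gold, pred))
    fn = sum(g == label and p != label for g, p in zip(gold, pred))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(gold: list[str], pred: list[str]) -> float:
    """Unweighted mean of per-label F1, so rare labels count as much as common ones."""
    labels = sorted(set(gold) | set(pred))
    return sum(f1_for_label(gold, pred, lab) for lab in labels) / len(labels)


if __name__ == "__main__":
    gold = ["claim", "claim", "premise", "O"]
    pred = ["claim", "premise", "premise", "O"]
    # claim: P=1, R=0.5 -> 2/3; premise: P=0.5, R=1 -> 2/3; O: 1.0
    print(round(macro_f1(gold, pred), 4))  # 0.7778
```

Macro averaging is why a small model can look competitive on relation classification. Every relation type is weighted equally regardless of how often it occurs.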

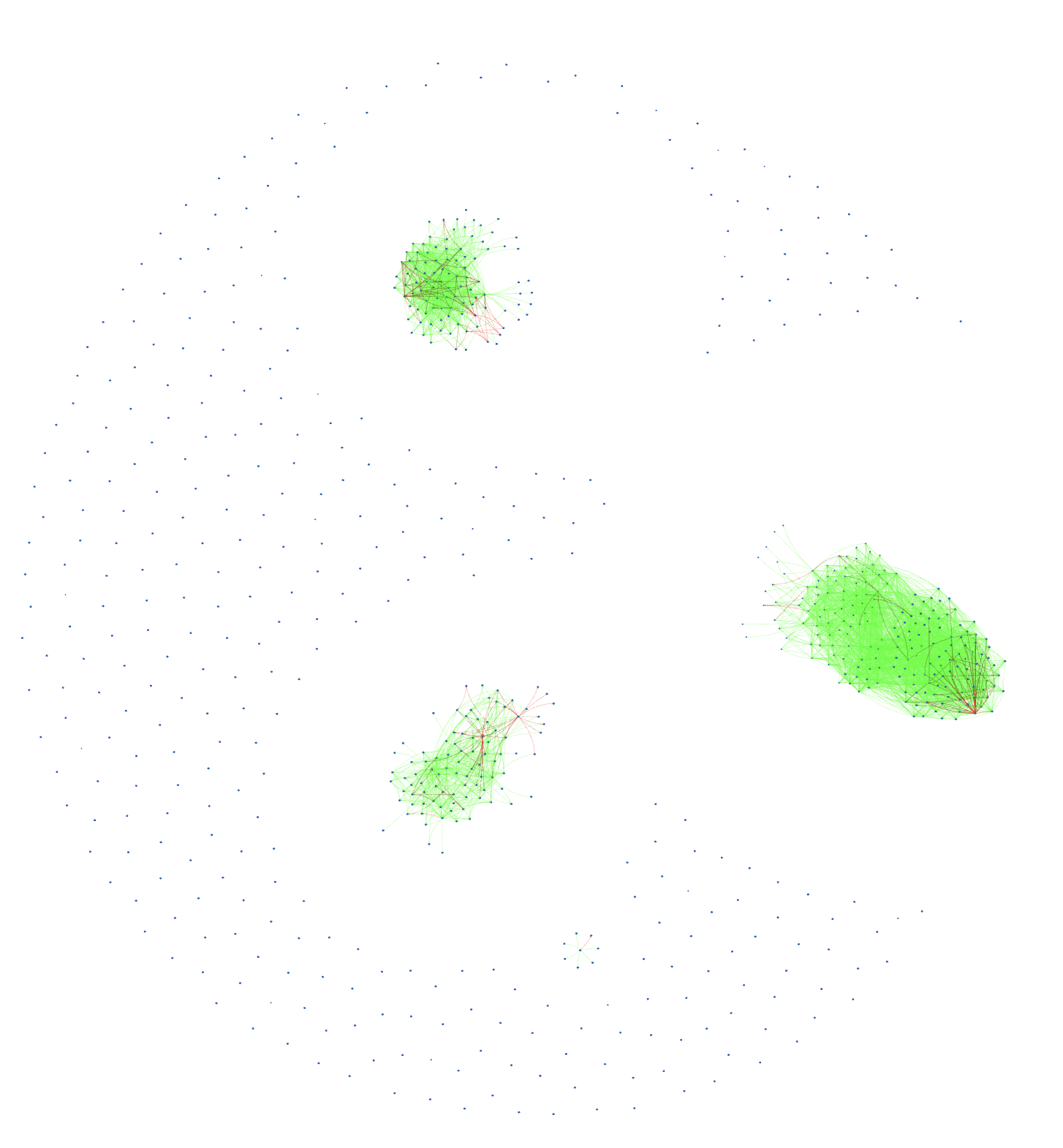

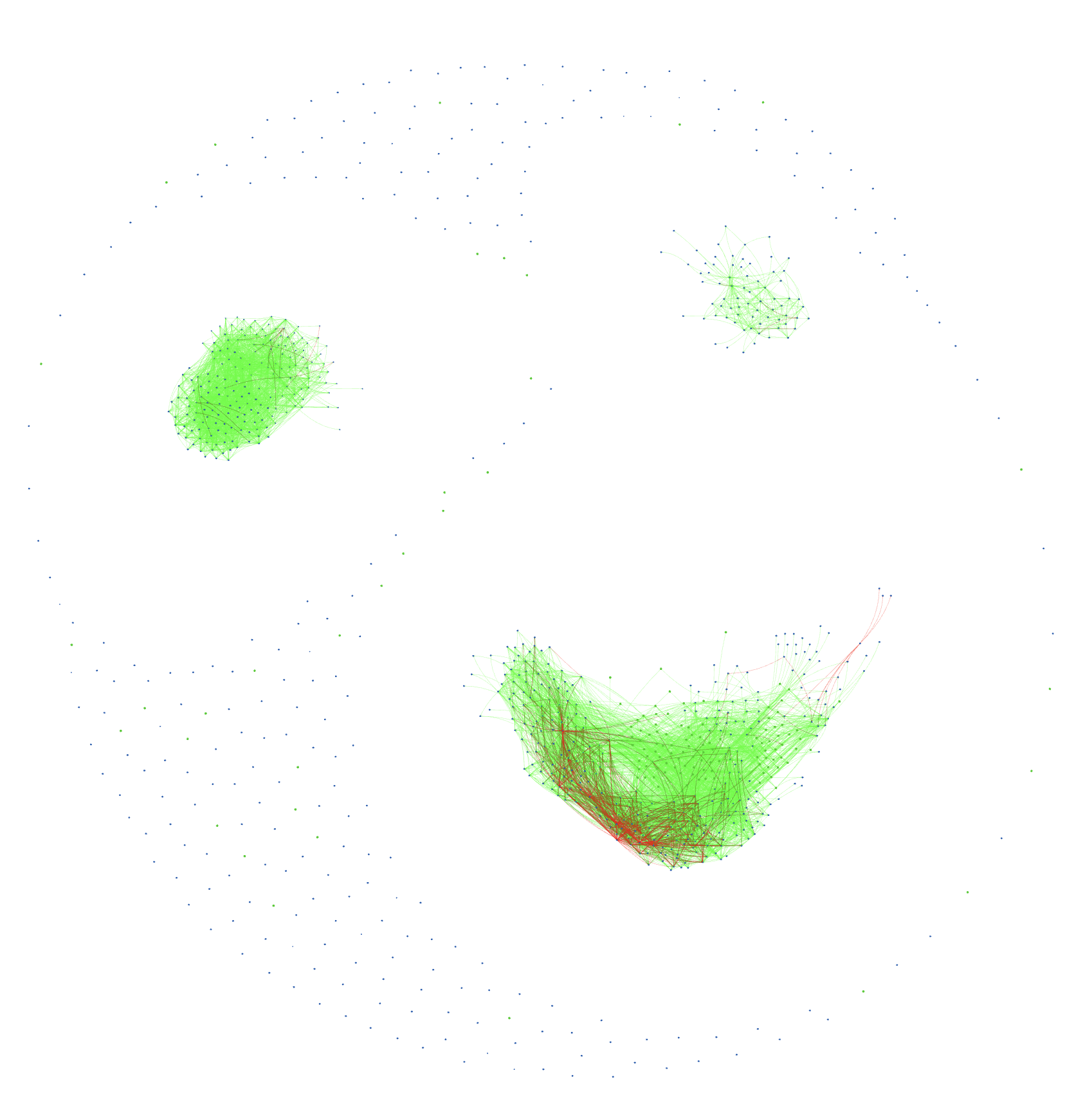

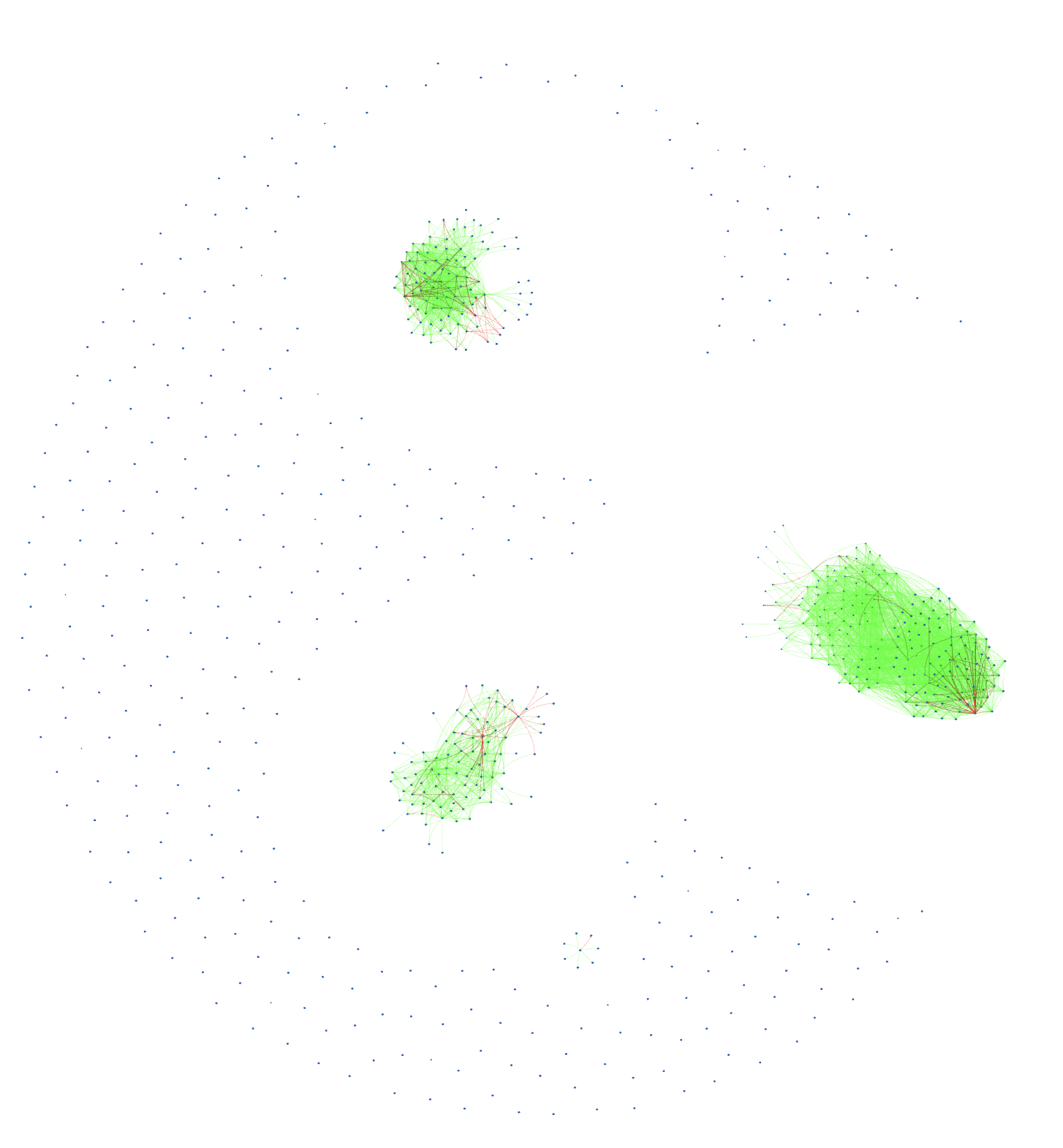

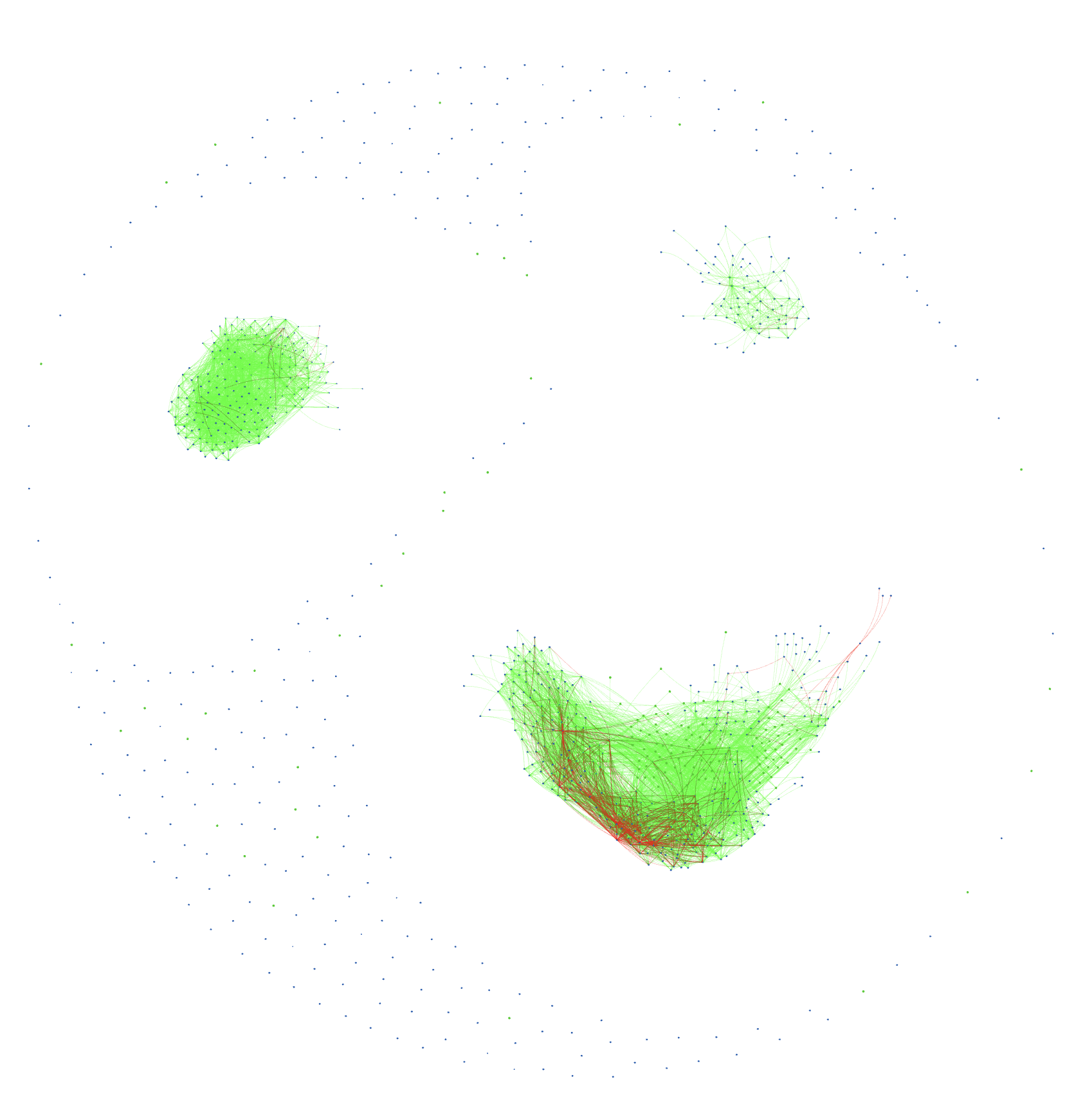

Figure 2: Attack edges are sparse (a 1:12 ratio). This reflects the collaborative nature of risk assessment documents, in which arguments mostly build on rather than contradict one another.

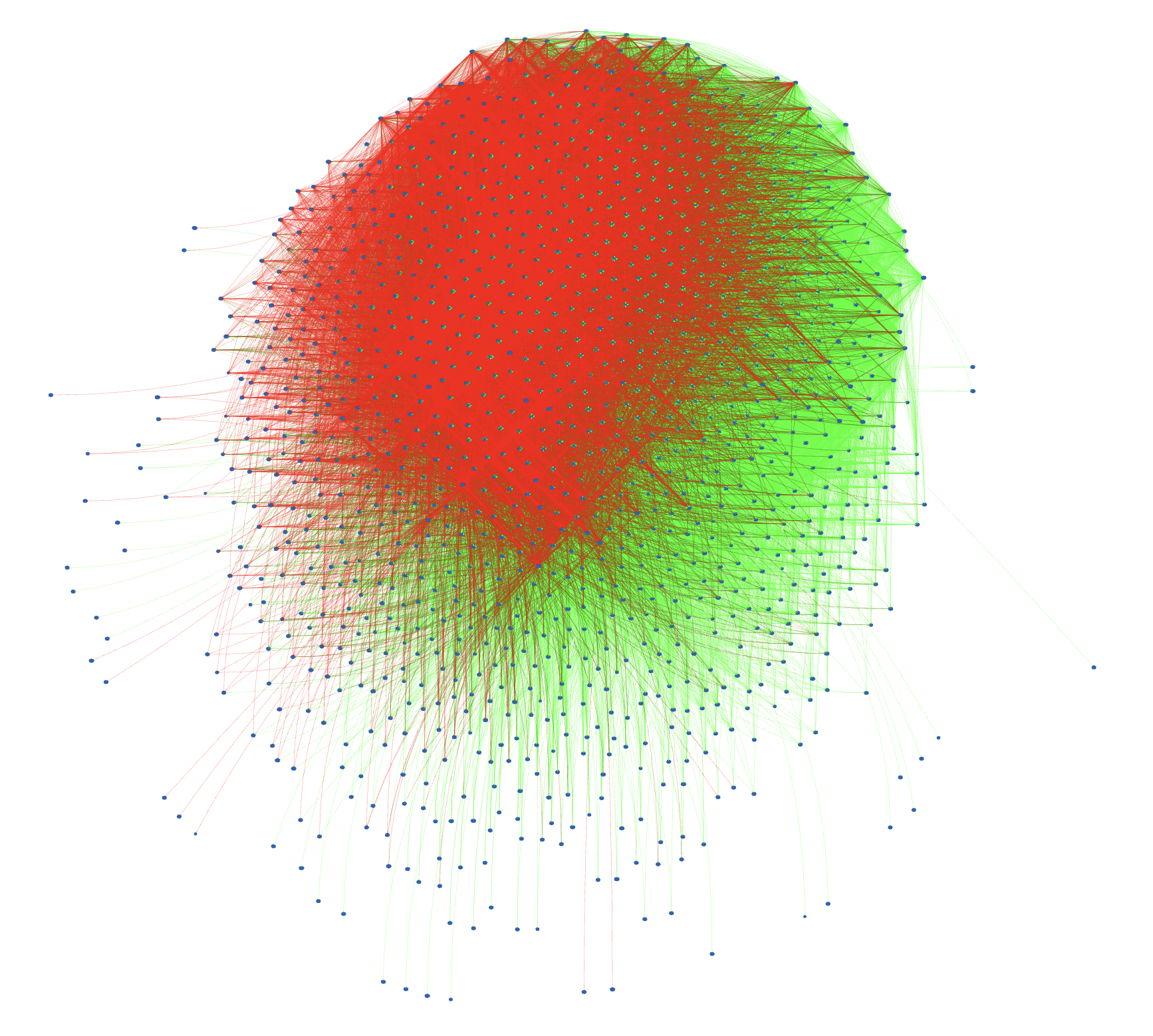

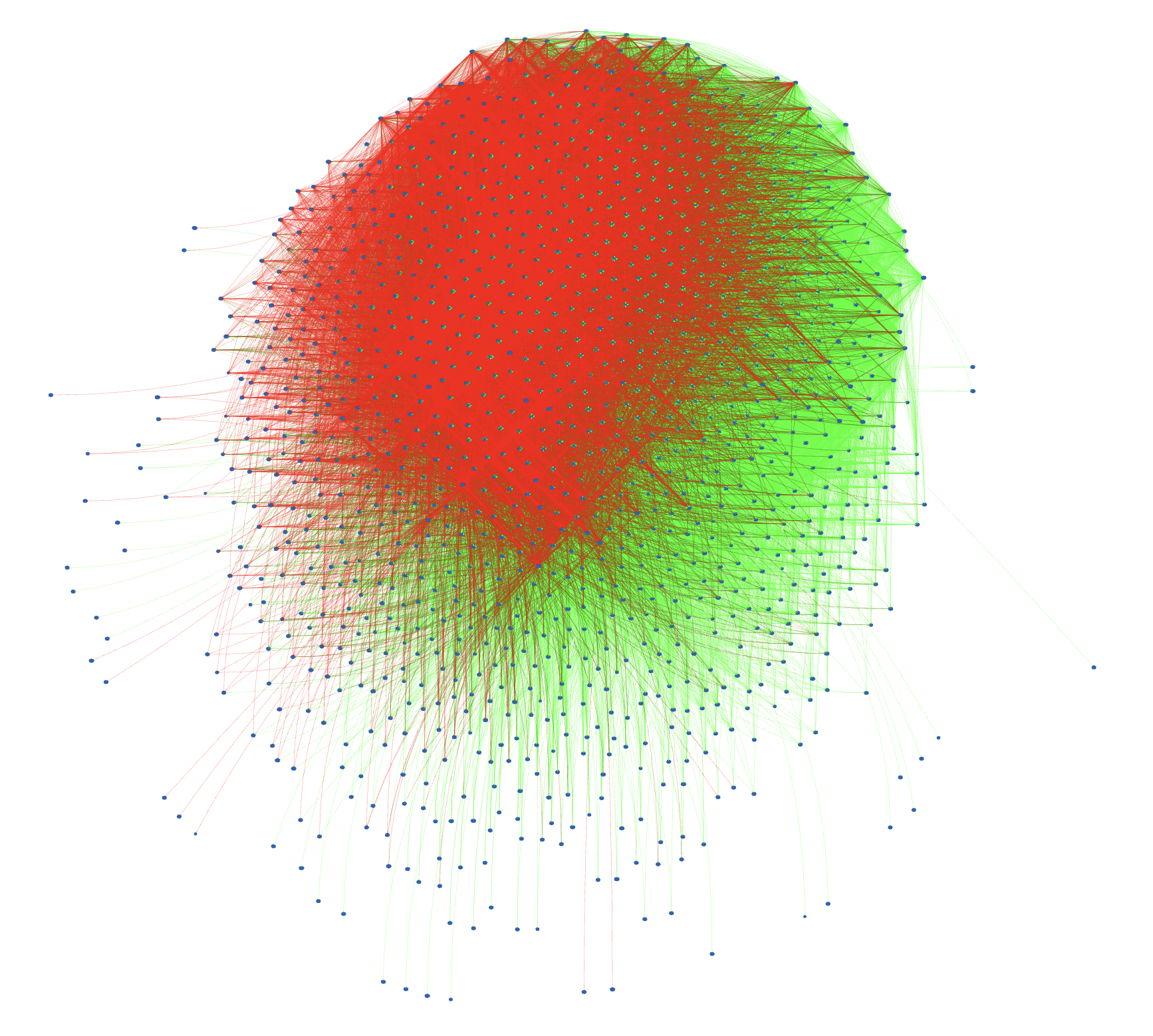

The evaluation also covers the risk assessment domain, where the structured argumentation approach supplied automatic verification. Deliberately injecting fact nodes into the graph roughly tripled the attack rate relative to the baseline, because contradicted arguments gained new attack edges. The authors take this as validation of the framework's automatic verification capability.
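The effect of fact-node injection on the attack rate can be illustrated with the toy sketch below. Several pieces are assumptions rather than the paper's method. Contradiction is detected by a hypothetical string-level negation (`"not X"` versus `"X"`), standing in for whatever contradiction detection the real pipeline performs. "Attack rate" is taken to mean the share of all edges that are attacks. `ArgGraph`, `inject_fact_nodes`, and `attack_rate` are illustrative names.

```python
from dataclasses import dataclass, field


@dataclass
class ArgGraph:
    claims: dict[str, str]  # node id -> literal mined from the document
    supports: set[tuple[str, str]] = field(default_factory=set)
    attacks: set[tuple[str, str]] = field(default_factory=set)
    facts: dict[str, str] = field(default_factory=dict)  # fact node id -> fact


def negate(literal: str) -> str:
    # Hypothetical string-level negation, standing in for real contradiction detection.
    return literal[len("not "):] if literal.startswith("not ") else f"not {literal}"


def inject_fact_nodes(graph: ArgGraph, facts: list[str]) -> list[str]:
    """Add fact nodes that attack contradicted claims; return feedback messages."""
    feedback = []
    for i, fact in enumerate(facts):
        fact_id = f"fact{i}"
        graph.facts[fact_id] = fact
        for node_id, literal in graph.claims.items():
            if literal == negate(fact):
                graph.attacks.add((fact_id, node_id))
                feedback.append(f"{node_id} contradicts fact '{fact}'")
    return feedback


def attack_rate(graph: ArgGraph) -> float:
    # Assumed definition: share of all edges that are attacks.
    total = len(graph.supports) + len(graph.attacks)
    return len(graph.attacks) / total if total else 0.0


if __name__ == "__main__":
    g = ArgGraph(
        claims={"c1": "pump is redundant", "c2": "not power is shared", "c3": "alarm is tested"},
        supports={("c1", "c3"), ("c2", "c3")},
        attacks={("c3", "c1")},
    )
    before = attack_rate(g)
    print(inject_fact_nodes(g, ["power is shared"]))
    print(f"attack rate: {before:.2f} -> {attack_rate(g):.2f}")
```

The returned feedback strings play the role of the feedback loop in Figure 1. Each new attack edge both raises the attack rate and identifies which claim an agent should revisit.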

Implications and Future Work

Deploying structured argumentation systems based on B-ABA could make AI applications in risk assessment more trustworthy. The approach may also carry over to other domains that require verified outputs. More broadly, the work suggests that multi-agent systems can overcome current trust barriers by giving clear, verifiable explanations of their decision-making.

Future research may focus on refining the theoretical foundations of B-ABA, improving relation-classifier accuracy on more diverse datasets, and strengthening the feedback mechanisms used for iterative improvement. Extending the approach beyond risk assessment would test how well structured argumentation applies to other AI-driven domains and how reliable it remains there.

Figure 3: The risk assessment graph after fact-checking. Fact nodes inject attack edges into the structure, forming clusters where factual contradictions are detected. The resulting attack rate, roughly three times the baseline, validates the automatic verification capability.

Conclusion

Overall, the paper makes a compelling case for treating AI models as inherently opaque while treating their outputs as verifiable, much as we treat human reasoning. It positions structured argumentation as a route to trustworthy AI and lays the technical and practical groundwork for deploying multi-agent systems in risk assessment and beyond. Its use of Bipolar Assumption-Based Argumentation grounds AI decisions in verification pipelines that are deterministic yet adaptable, giving stakeholders a concrete basis for relying on them. Such advances hold promise for the broader integration of AI in critical domains where trust is paramount.