Adapting Pre-trained Language Models to African Languages via Multilingual Adaptive Fine-Tuning

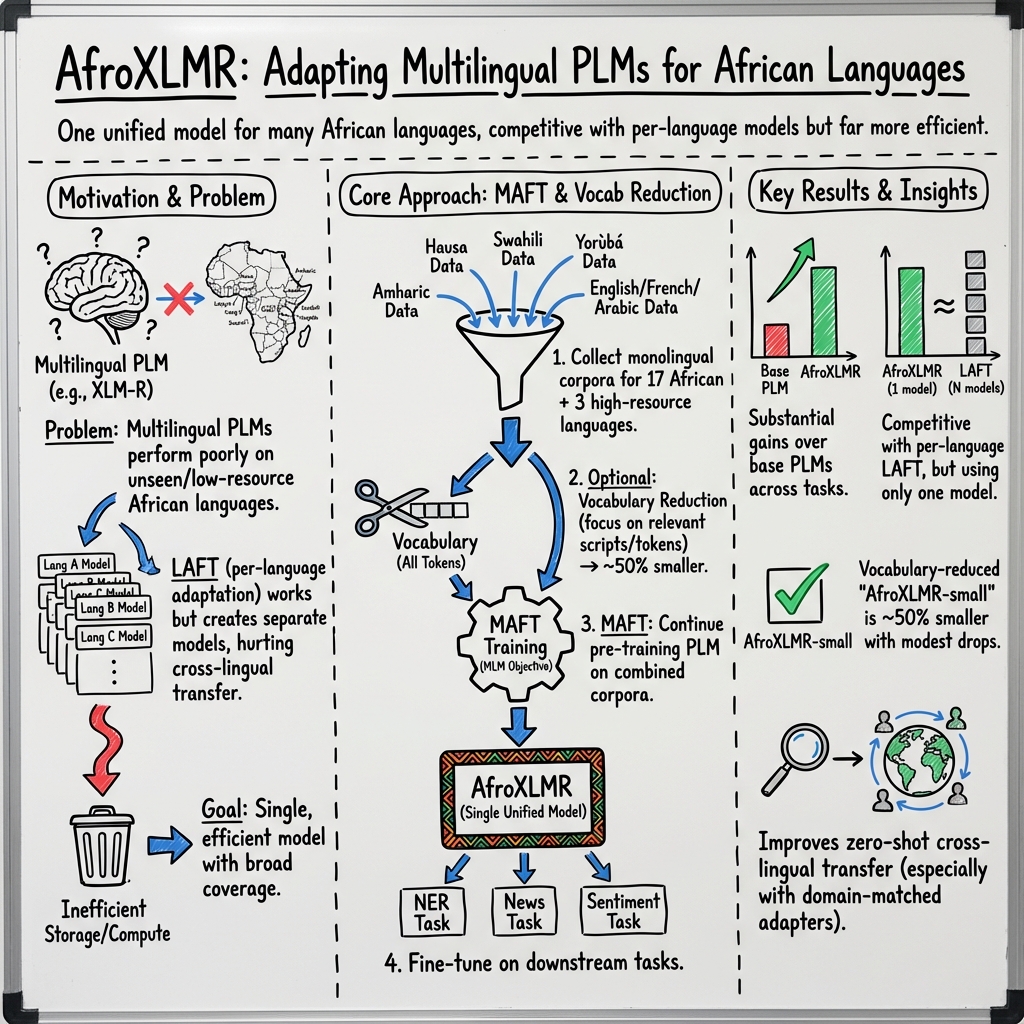

Abstract: Multilingual pre-trained language models (PLMs) have demonstrated impressive performance on several downstream tasks for both high-resourced and low-resourced languages. However, there is still a large performance drop for languages unseen during pre-training, especially African languages. One of the most effective approaches to adapt to a new language is *language adaptive fine-tuning* (LAFT), that is, fine-tuning a multilingual PLM on monolingual texts of a language using the pre-training objective. However, adapting to each target language individually takes a large amount of disk space and limits the cross-lingual transfer abilities of the resulting models because they have been specialized for a single language. In this paper, we perform *multilingual adaptive fine-tuning* (MAFT) on the 17 most-resourced African languages and three other high-resource languages widely spoken on the African continent to encourage cross-lingual transfer learning. To further specialize the multilingual PLM, we removed vocabulary tokens from the embedding layer that correspond to non-African writing scripts before MAFT, thus reducing the model size by around 50%. Our evaluation on two multilingual PLMs (AfriBERTa and XLM-R) and three NLP tasks (NER, news topic classification, and sentiment classification) shows that our approach is competitive with applying LAFT on individual languages while requiring significantly less disk space. Additionally, we show that our adapted PLM also improves the zero-shot cross-lingual transfer abilities of parameter-efficient fine-tuning methods.

Explain it Like I'm 14

Overview

This paper is about helping computer language tools work better for African languages. Today’s big language models (like smart text readers) work well for languages they saw a lot during training (such as English or French), but they struggle with many African languages, especially those not included in their original training. The authors show a way to “teach” one model many African languages at once, so it performs well across them without needing a separate copy for each language. They also shrink the model so it’s easier to store and run.

What questions did the researchers ask?

- How can we adapt existing big language models to work better for African languages, especially those the model didn’t learn originally?

- Can we adapt a single model to many African languages at the same time (instead of one model per language) and still get strong results?

- Can we make the model smaller (take less disk space) without losing much accuracy?

- Will this adapted model help with common tasks like finding names in text, sorting news by topic, and understanding sentiment (positive/negative/neutral)?

- Can it also help with “zero-shot” transfer (doing a task in a new language without seeing any labeled examples in that language)?

How did they do it?

Think of a language model as a student who has read a lot of books in different languages. You can “fine-tune” this student by giving them extra practice in a specific language or topic.

- Language models used: XLM-R and AfriBERTa, two multilingual models that already know several languages.

- Usual approach (LAFT): Fine-tune on one language at a time. This helps that one language a lot, but you end up with many separate copies of the model—one for each language.

- Their new approach (MAFT): Fine-tune on many languages together at once. This creates one shared model (they call their versions AfroXLMR) that improves across languages and supports cross-language learning.

To make this simple:

- Fine-tuning is like extra practice worksheets.

- LAFT (Language Adaptive Fine-Tuning) = one language, one set of worksheets, one custom student.

- MAFT (Multilingual Adaptive Fine-Tuning) = one big set of worksheets covering many languages, one well-rounded student.

They also made the model smaller:

- Language models store “vocabulary” pieces (tiny chunks of words) for many writing systems. The authors noticed that most African languages in their study use the Latin alphabet or the Ge’ez script (used by Amharic).

- They removed vocabulary bits for scripts not needed for the chosen African languages. It’s like removing keys from a keyboard you don’t use. This cut the model size by about half, with only a small drop in accuracy for most languages.
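To make the "removing unused keys" idea concrete, here is a minimal sketch of script-based vocabulary pruning. It is only an illustration, not the authors' exact procedure: the toy vocabulary, the helper names `token_is_kept` and `prune_vocab`, and the choice to detect scripts through Unicode character names are all assumptions made for this example.

```python
import unicodedata

# Assumption: a script is recognized by the prefix of each character's Unicode name.
KEPT_SCRIPT_PREFIXES = ("LATIN", "ETHIOPIC")  # Latin alphabet and Ge'ez script

def token_is_kept(token):
    """Keep a token only if all of its letters belong to a kept script."""
    for ch in token:
        if ch.isalpha():
            name = unicodedata.name(ch, "")
            if not name.startswith(KEPT_SCRIPT_PREFIXES):
                return False
    return True  # digits, punctuation, and markers such as "▁" are never dropped

def prune_vocab(vocab, embeddings):
    """Drop unwanted tokens and their embedding rows; return an old-id -> new-id map."""
    new_vocab, new_embeddings, old_to_new = [], [], {}
    for old_id, (token, row) in enumerate(zip(vocab, embeddings)):
        if token_is_kept(token):
            old_to_new[old_id] = len(new_vocab)
            new_vocab.append(token)
            new_embeddings.append(row)
    return new_vocab, new_embeddings, old_to_new

# Hypothetical toy vocabulary with one embedding row per token.
vocab = ["<s>", "▁the", "▁ሰላም", "▁привет", "▁你好", "ing", "123"]
embeddings = [[float(i)] * 4 for i in range(len(vocab))]

new_vocab, new_embeddings, old_to_new = prune_vocab(vocab, embeddings)
print(new_vocab)  # the Cyrillic and Chinese tokens are gone; Latin and Ge'ez remain
```

Because every dropped token also drops a row of the embedding table, a large share of the model's parameters can disappear this way, which is consistent with the roughly 50% size reduction reported above.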

What tasks did they test?

- Named Entity Recognition (NER): finding names of people, places, and organizations in text.

- News Topic Classification: sorting news into topics (like Sports, Politics, World).

- Sentiment Analysis: deciding if a tweet is positive, negative, or neutral.

They trained and tested on:

- 17 African languages (such as Hausa, Igbo, Swahili, Yoruba, Zulu, Amharic, and Somali) plus English, French, and Arabic.

- They also created a new news dataset called ANTC for five languages: Lingala, Somali, Naija (Nigerian Pidgin), Malagasy, and isiZulu. They built it by collecting labeled news from VOA, BBC, Global Voices, and Isolezwe.

Training details in everyday terms:

- They kept the same “fill-in-the-blank” learning style used during the original model training (called masked language modeling). This is like giving the student sentences with missing words and asking them to guess the blanks.

- They did this for 3 training rounds (“epochs”) using texts from all selected languages.
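The fill-in-the-blank practice described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the 15% masking rate and the 80/10/10 split are the common BERT-style defaults rather than values taken from this page, the sentences are toy stand-ins for real corpora, and `mask_tokens` is a hypothetical helper, not part of any library.

```python
import random

MASK_TOKEN = "<mask>"

def mask_tokens(tokens, vocab, rng, mask_prob=0.15):
    """Return (inputs, labels) for one fill-in-the-blank training example."""
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            labels.append(tok)                    # the model must recover this token
            roll = rng.random()
            if roll < 0.8:
                inputs.append(MASK_TOKEN)         # usually: hide the token
            elif roll < 0.9:
                inputs.append(rng.choice(vocab))  # sometimes: swap in a random token
            else:
                inputs.append(tok)                # sometimes: leave it unchanged
        else:
            inputs.append(tok)
            labels.append(None)                   # this position is not scored
    return inputs, labels

# Toy stand-ins for monolingual text; real corpora are far larger.
corpora = {
    "swa": ["habari ya asubuhi"],
    "hau": ["ina kwana"],
    "yor": ["e kaaro"],
}

# MAFT pools every language into one training set (LAFT would use a single language).
pooled = [sent for sents in corpora.values() for sent in sents]
vocab = sorted({tok for sent in pooled for tok in sent.split()})

rng = random.Random(0)
for epoch in range(3):                            # 3 epochs, as described above
    rng.shuffle(pooled)
    for sent in pooled:
        inputs, labels = mask_tokens(sent.split(), vocab, rng)
        # A real run would feed `inputs` to the model and score it on `labels`.
```

The only difference between LAFT and MAFT in this sketch is the `pooled` line: one language's text versus all languages mixed together.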

They also tried “parameter-efficient” methods:

- Adapters: tiny plug-ins that teach the model new languages or tasks without changing the whole model.

- LT-SFT (Lottery Ticket Sparse Fine-Tuning): finding and training a smaller “winning” sub-network inside the big model, like identifying the most useful neurons and training just those.
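As a rough picture of how a sparse "winning" set of parameters can be chosen and reused, the sketch below keeps only the parameters that changed most during fine-tuning and stores them as a small difference that can be added back onto the original model. It is a toy under loud assumptions: parameters are a flat list of numbers, the helper names are hypothetical, and the second training phase of the real method (re-training only the selected parameters) is skipped.

```python
def top_k_mask(pretrained, finetuned, k):
    """Mark the k parameters whose values moved the most during fine-tuning."""
    deltas = [abs(f - p) for p, f in zip(pretrained, finetuned)]
    ranked = sorted(range(len(deltas)), key=lambda i: deltas[i], reverse=True)
    keep = set(ranked[:k])
    return [i in keep for i in range(len(deltas))]

def sparse_diff(pretrained, finetuned, mask):
    """Store only the selected changes as {parameter index: change}."""
    return {i: finetuned[i] - pretrained[i] for i, keep in enumerate(mask) if keep}

def apply_diffs(pretrained, *diffs):
    """Compose sparse updates (e.g., one for a language, one for a task)."""
    params = list(pretrained)
    for diff in diffs:
        for i, change in diff.items():
            params[i] += change
    return params

# Hypothetical numbers standing in for model weights.
pretrained = [0.0, 1.0, 2.0, 3.0]
language_finetuned = [0.5, 1.0, 2.25, 1.0]

mask = top_k_mask(pretrained, language_finetuned, k=2)
language_diff = sparse_diff(pretrained, language_finetuned, mask)
task_diff = {1: 0.25}  # assumed to come from a separate task fine-tuning run

adapted = apply_diffs(pretrained, language_diff, task_diff)
print(mask, language_diff, adapted)
```

Storing a sparse difference per language or task is much cheaper than storing a full model copy, which is why these methods are called parameter-efficient.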

What did they find, and why is it important?

Main results:

- One model, many languages: Their MAFT method produced a single model that performed nearly as well as the best per-language (LAFT) models. That means you don’t need to store and maintain lots of separate models.

- Better accuracy than the original models:

  - On average, the MAFT model beat the original, unadapted models on NER, news topic classification, and sentiment analysis.

  - For example, their MAFT version of XLM-R (AfroXLMR-base) improved average NER accuracy compared to the original XLM-R-base.

- Almost as good as one-by-one fine-tuning: Adapting one model per language (LAFT) can still be a tiny bit better, but MAFT is very close, usually within a small margin, and much more practical because it’s just one model.

- Smaller model, similar performance: After removing unused vocabulary pieces, model size dropped by about 50%, while accuracy only dropped a little for most languages (bigger drops for languages with non-Latin scripts like Amharic and Arabic, because more of their special characters were removed).

- Works for big models too: They applied MAFT to a larger model (XLM-R-large). It improved strongly and matched or beat the per-language approach, again as a single shared model.

- Better zero-shot transfer: With the MAFT-adapted model, “plug-in” methods (adapters and LT-SFT) did better when transferring from English to African languages, especially when trained on texts from the same domain (news). This means the model can handle new languages or tasks with fewer labeled examples.

- New dataset contribution: They released the ANTC dataset for five African languages, giving the community more materials to test and improve models.

Why it matters:

- Practical for real-world use: One strong, shared model is easier to store, share, and deploy than dozens of separate per-language models.

- Supports low-resource settings: Smaller models are important for researchers and developers who don’t have powerful computers or lots of storage.

- Better support for African languages: Improves performance for languages that have been underrepresented in AI tools.

- Boosts cross-lingual learning: The single model transfers knowledge across languages, helping smaller languages benefit from bigger ones.

What’s the potential impact?

- Easier access: A compact, high-quality model means more students, researchers, and developers—especially in Africa—can use and fine-tune it without expensive hardware.

- Stronger tools for many languages: Governments, newsrooms, educators, and startups can build language tools (like better search, content moderation, translation aids) that work across multiple African languages.

- Faster progress: Open-sourced code, models, and the new ANTC dataset help the community improve and test models, accelerating research and real-world applications.

- Better zero-shot performance: With improved transfer methods, future systems may handle new languages and tasks with little to no labeled data—very helpful where labeled data is scarce.

In short

The authors show a smart, practical way to adapt one big language model to many African languages at once (MAFT), keep it small, and still get strong results on important tasks. This makes advanced language technology more inclusive, affordable, and useful for a wider range of languages and communities.

Practical Applications

Summary

This paper introduces Multilingual Adaptive Fine-Tuning (MAFT) to adapt existing multilingual masked language models (e.g., XLM-R, AfriBERTa) to 20 languages widely used in Africa. It delivers single, cross-lingual models (AfroXLMR-base/small/large) that match or approach the performance of per-language adaptation (LAFT) on three tasks: named entity recognition (NER), news topic classification, and sentiment analysis. These models also require far less storage. The paper also shows how domain-matched, parameter-efficient methods (MAD-X adapters, LT-SFT) improve zero-shot cross-lingual transfer, and contributes ANTC, a new multilingual news-topic dataset. The work further demonstrates a roughly 50% reduction in model size via vocabulary pruning (with caveats for non-Latin scripts).

The following applications translate the paper’s findings into deployable solutions and future opportunities.

Immediate Applications

The following applications can be deployed now, using the released AfroXLMR models, code, and datasets.

- Single multilingual NER for African languages
  - Sectors: media, government, finance, security, research
  - What: Extract people, organizations, and locations from news, social media, customer tickets, and documents across multiple African languages with a single model.
  - Tools/Workflows: AfroXLMR-base or AfroXLMR-large + task fine-tuning (MasakhaNER); deploy as one inference service with language identification (LID) at ingress.
  - Dependencies/Assumptions: Availability of labeled NER data for domain adaptation; LID accuracy; performance may drop for underrepresented scripts or heavy code-switching.

- Multilingual sentiment analysis for market and political monitoring
  - Sectors: marketing, public policy, civic tech, social platforms
  - What: Analyze public sentiment in Hausa, Yoruba, Igbo, Naija (Pidgin), Amharic, and English for brand tracking and opinion monitoring.
  - Tools/Workflows: AfroXLMR-base fine-tuned on NaijaSenti; parameter-efficient fine-tuning (MAD-X 2.0 or LT-SFT) for domain adaptation on tweets or platform-specific corpora.
  - Dependencies/Assumptions: Domain mismatch can degrade accuracy (tweets vs. news); need for up-to-date, code-mixed data; platform API access.

- News topic classification for multilingual content routing
  - Sectors: media/publishers, aggregators, search, ad-tech
  - What: Auto-categorize African language articles for curation, personalization, and trend analytics.
  - Tools/Workflows: AfroXLMR-base or AfroXLMR-large + ANTC and existing news datasets; integrate into CMS pipelines for tagging and routing.
  - Dependencies/Assumptions: Category definitions must align with newsroom taxonomies; retraining needed for new sections/domains.

- Unified multilingual NLP for customer support (chatbots and helpdesks)
  - Sectors: telecom, fintech, e-commerce, public services
  - What: Intent classification and entity extraction across African languages in a single NLU backend, reducing per-language model sprawl.
  - Tools/Workflows: AfroXLMR-base + domain-specific fine-tuning; lightweight adapters per client/domain; single multilingual service with LID.
  - Dependencies/Assumptions: Requires labeled intents/entities per deployment; code-switching and colloquial spelling may require additional data.

- Low-resource model deployment for NGOs and small teams
  - Sectors: NGOs, startups, academia, civic tech
  - What: Use AfroXLMR-small (vocabulary-reduced) to fine-tune and serve models on modest hardware (e.g., free Colab, single-GPU).
  - Tools/Workflows: AfroXLMR-small (≈70k vocab) + quantization; simple Hugging Face workflows.
  - Dependencies/Assumptions: Slight performance drop vs. base/large models; larger drop for non-Latin-script languages (e.g., Ge’ez or Arabic script); verify task-script fit.

- Zero-shot cross-lingual transfer with parameter-efficient methods
  - Sectors: research, industry ML teams, fast prototyping
  - What: Train once on English (or one well-resourced language), then transfer NER with news-domain language adapters or sparse subnets to target African languages without labeled target data.
  - Tools/Workflows: AfroXLMR-base + MAD-X 2.0 or LT-SFT; train language adapters/subnets on monolingual news corpora; compose with task adapter.
  - Dependencies/Assumptions: Source and target label sets must match; domain-matched monolingual text improves transfer; storage for adapters/sparse masks.

- Civic feedback triage and escalation
  - Sectors: government, humanitarian organizations, hotlines
  - What: Classify and prioritize SMS/WhatsApp messages (e.g., complaints, service requests) and extract key entities for routing.
  - Tools/Workflows: AfroXLMR-base + small, labeled samples; active learning to iteratively improve; privacy-preserving deployment.
  - Dependencies/Assumptions: Consent and data governance; domain fine-tuning needed for local lexicons and dialects.

- Safety and moderation scaffolding
  - Sectors: social platforms, forums, community apps
  - What: Build first-pass filters (topic/sentiment + NER cues) to help moderate harmful content in African languages.
  - Tools/Workflows: AfroXLMR-base + task-specific fine-tuning; rules/thresholds and human-in-the-loop review.
  - Dependencies/Assumptions: Requires annotated toxicity/hate datasets per language; fairness and false-positive risk management.

- Academic baselines and benchmarking for African NLP
  - Sectors: academia, student programs, community research
  - What: Use AfroXLMR models and ANTC/NaijaSenti/MasakhaNER as standardized baselines for coursework, workshops, and research.
  - Tools/Workflows: Hugging Face model hub (AfroXLMR-*), GitHub code; reproducible scripts and evaluation.
  - Dependencies/Assumptions: Dataset licensing, compute availability; ethical use and community collaboration.

- Cost- and storage-efficient model operations
  - Sectors: MLOps across industries
  - What: Replace many per-language LAFT models with a single MAFT model, reducing storage, deployment complexity, and maintenance.
  - Tools/Workflows: AfroXLMR-base or -large; central inference with LID and per-task adapters.
  - Dependencies/Assumptions: Ensure capacity for concurrent languages/tasks; monitor drift per language and domain.
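The storage argument behind replacing per-language models with one shared model is simple arithmetic, sketched below. The 20 languages and the "about 50%" reduction come from the summary above; the 1000 MB checkpoint size is a hypothetical round number chosen only to make the comparison easy to read, and the function names are illustrative.

```python
NUM_LANGUAGES = 20      # 17 African languages plus English, French, and Arabic
CHECKPOINT_MB = 1000    # hypothetical size of one full model checkpoint
PRUNED_FRACTION = 0.5   # vocabulary reduction cuts model size by about half

def laft_storage_mb(num_languages, checkpoint_mb):
    """LAFT keeps one adapted checkpoint per language."""
    return num_languages * checkpoint_mb

def maft_storage_mb(checkpoint_mb, size_fraction=1.0):
    """MAFT keeps a single shared checkpoint, optionally vocabulary-reduced."""
    return checkpoint_mb * size_fraction

print("LAFT, one model per language:", laft_storage_mb(NUM_LANGUAGES, CHECKPOINT_MB), "MB")
print("MAFT, one shared model:", maft_storage_mb(CHECKPOINT_MB), "MB")
print("MAFT with reduced vocabulary:", maft_storage_mb(CHECKPOINT_MB, PRUNED_FRACTION), "MB")
```

Under these assumed numbers the per-language approach needs 20 times the storage of a single shared model, and the gap grows with every language added.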

Long-Term Applications

These opportunities require additional research, scaling, or ecosystem development.

- Expansion to more African languages and scripts
  - Sectors: all sectors needing broader coverage
  - What: Extend MAFT to dozens more languages (e.g., Tigrinya, Berber/Tifinagh, Arabic-script African languages), addressing tokenizer and vocabulary issues.
  - Tools/Workflows: Scaled MAFT; improved per-script subword vocabularies; dynamic vocab selection.
  - Dependencies/Assumptions: Availability of monolingual corpora; improved tokenizer design; sustained compute resources.

- Government-scale early warning and misinformation monitoring
  - Sectors: public health, civil protection, electoral bodies
  - What: Real-time ingestion of local-language media/social posts for topic/sentiment/NER/event signals, feeding dashboards and alerts.
  - Tools/Workflows: AfroXLMR-large + streaming pipelines (e.g., Kafka + vector stores); event extraction and entity linking.
  - Dependencies/Assumptions: Data-sharing agreements; robust ethics and governance; scalable infrastructure and latency constraints.

- On-device and offline language understanding
  - Sectors: rural services, field operations, mobile assistants
  - What: Further compress MAFT models for reliable on-device inference in low-connectivity areas (e.g., health outreach, agri-advisory).
  - Tools/Workflows: Distillation from AfroXLMR to smaller students; pruning/quantization; dynamic vocabulary per deployment.
  - Dependencies/Assumptions: Maintain acceptable accuracy; device heterogeneity; energy constraints.

- Knowledge base construction in African languages
  - Sectors: media, cultural heritage, search, digital libraries
  - What: Use NER + relation extraction + coreference to populate multilingual knowledge graphs; cross-link with Wikidata.
  - Tools/Workflows: Pipeline on AfroXLMR + additional fine-tuned modules (RE/coref); human curation.
  - Dependencies/Assumptions: Lack of labeled RE/coref datasets; need canonicalization and disambiguation resources.

- Cross-lingual retrieval and question answering
  - Sectors: search, customer support, education
  - What: Build CLIR and QA systems that accept queries in one language and fetch answers from content in another.
  - Tools/Workflows: Dual-encoder retrieval or dense passage retrieval fine-tuned from AfroXLMR; supervised QA on multilingual corpora.
  - Dependencies/Assumptions: Training data for retrieval/QA; evaluation benchmarks; mixed-script handling.

- Domain-specific adapters for regulated sectors
  - Sectors: healthcare, legal, finance, agriculture
  - What: Plug-and-play domain adapters (language × domain) for compliance-ready NER, classification, and routing.
  - Tools/Workflows: AdapterHub-like repositories with domain corpora; governance for adapter provenance and auditing.
  - Dependencies/Assumptions: Access to domain text in local languages; regulatory approvals and privacy guarantees.

- Speech-to-NLU pipelines for contact centers and assistants
  - Sectors: telecom, public services, retail
  - What: Integrate ASR for African languages/code-switching with AfroXLMR-based NLU for voice bots and IVR.
  - Tools/Workflows: ASR front-ends + NLU fusion; latency-optimized serving; continual learning from transcripts.
  - Dependencies/Assumptions: High-quality ASR models/resources per language; paired speech–text corpora; voice privacy compliance.

- Robustness, fairness, and safety frameworks
  - Sectors: platforms, public sector, regulated industries
  - What: Systematic auditing and mitigation for bias, toxicity, and error modes across languages and dialects.
  - Tools/Workflows: Multilingual fairness evaluation suites; red-teaming; dataset curation with community input.
  - Dependencies/Assumptions: Culturally grounded annotations; standardized metrics; ongoing community engagement.

- Participatory data pipelines and annotation ecosystems
  - Sectors: academia, NGOs, startups, government
  - What: Community-driven collection and labeling of monolingual corpora and task datasets, with active learning and incentives.
  - Tools/Workflows: Open platforms for data contribution; annotation guidelines; model-in-the-loop sampling.
  - Dependencies/Assumptions: Funding and governance; consent and IP frameworks; multi-stakeholder collaboration.

- Policy and procurement guidelines for public-sector language tech
  - Sectors: government, donors, multilaterals
  - What: Standardize requirements for African-language NLP (coverage, accuracy, fairness, openness), ensuring inclusive services.
  - Tools/Workflows: Reference evaluations (MasakhaNER, ANTC, NaijaSenti); open model registries; audit checklists.
  - Dependencies/Assumptions: Inter-agency coordination; legal harmonization; sustainability planning.

Notes on Feasibility and Risks

- Script coverage matters: Vocabulary reduction benefits efficiency but can hurt performance for non-Latin scripts (e.g., Amharic Ge’ez, Arabic). Choose AfroXLMR-base/large (full vocab) or script-aware pruning for such languages.

- Domain alignment is critical: Training language adapters or sparse subnets on domain-matched monolingual corpora (e.g., news for NER) measurably improves zero-shot transfer.

- Storage/compute trade-offs: AfroXLMR-small enables constrained deployments with some accuracy loss; AfroXLMR-large achieves SOTA but needs more compute.

- Ethical use: For monitoring/moderation and government use-cases, establish clear governance, consent, privacy, and redress mechanisms.
