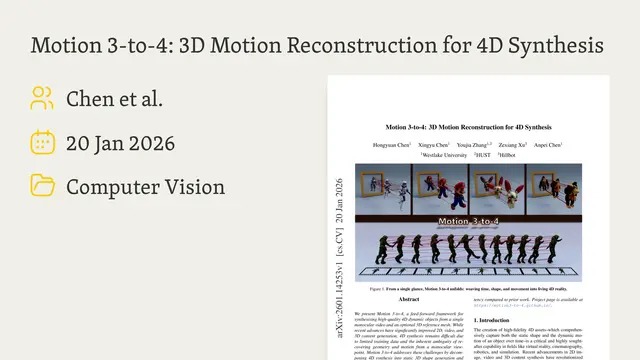

Motion 3-to-4: 3D Motion Reconstruction for 4D Synthesis

An overview of a novel framework for generating dynamic 4D objects from monocular video by disentangling static shape generation from motion reconstruction.Script

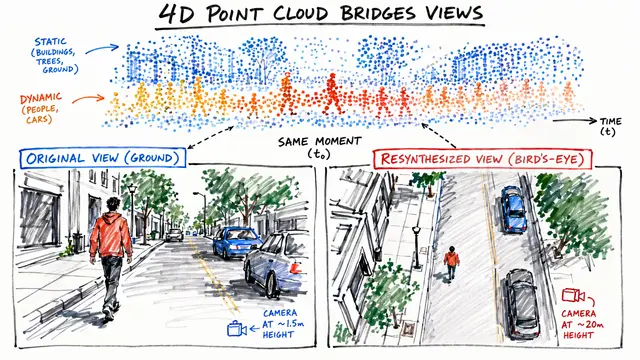

Capturing the world in 4D often feels like solving a puzzle with missing pieces because predicting depth and motion from a flat video is mathematically ill-posed. This paper tackles that "monocular ambiguity" head-on by rethinking how we synthesize dynamic objects.

To solve this, the authors move away from trying to generate full 4D reality from scratch, which is currently hampered by scarce training data. Instead, they simplify the problem by separating the task into two clear steps: obtaining a static 3D shape first, and then predicting how that surface flows through time.

Mechanically, this relies on a dual-module architecture where the system first learns "motion latents" using robust visual features like DINOv2 to understand the scene. Then, a decoder predicts the precise 3D trajectory for every point on the surface relative to a reference frame, effectively flowing the geometry through time.

You can see the power of this approach here, where single videos are transformed into coherent 4D meshes without the long optimization times typically required by older methods. The model maintains fine surface details even as the objects deform, largely because it anchors motion to that initial reference mesh.

Quantitatively, this method outperforms baselines like L4GM on geometric metrics, offering higher accuracy and faster inference speeds. However, it is worth noting that simply displacing points on a reference mesh means the system struggles if the object undergoes drastic topological changes mid-video.

Ultimately, Motion 3-to-4 provides a robust blueprint for high-fidelity 4D asset creation in VR and robotics by treating motion as a surface alignment problem. For more deep dives into computer vision research, visit EmergentMind.com.