4D Gaussian Splatting for Real-Time Dynamic Scene Rendering

This presentation explores a breakthrough framework that extends 3D Gaussian Splatting into four dimensions to achieve real-time rendering of dynamic scenes. By combining explicit 3D Gaussians with implicit 4D neural voxels, the authors demonstrate rendering speeds up to 82 FPS while maintaining exceptional visual quality. The talk covers the core methodology, experimental results showing superior performance over state-of-the-art techniques, and practical implications for virtual reality, augmented reality, and cinematic applications.

Script

Rendering a moving scene in real time has always meant choosing between speed and quality. This paper shatters that compromise by extending Gaussian splatting into the fourth dimension, achieving 82 frames per second while preserving fine detail in motion and deformation.

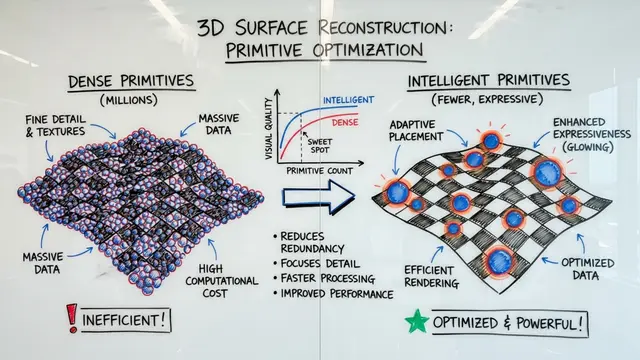

The fundamental problem is inefficiency. When you treat every frame as a separate puzzle, you ignore the continuity that connects them. Previous techniques either sacrificed speed for quality or delivered fast but unconvincing results, especially when objects both move and change shape.

The authors solve this by thinking in four dimensions from the start.

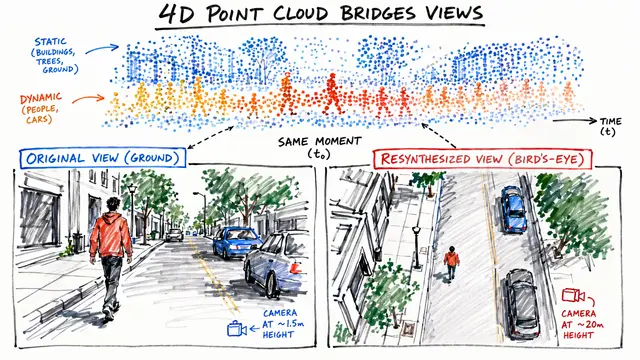

The framework fuses two paradigms. Three-dimensional Gaussians provide the spatial backbone, each acting as an explicit primitive that can be rendered instantly. Meanwhile, four-dimensional neural voxels encoded through HexPlane decomposition learn how those Gaussians should deform across time. A compact neural network bridges the two, predicting motion and shape changes for any timestamp.
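
To make that pipeline concrete, here is a minimal Python sketch of the idea, not the authors' implementation. It assumes six feature planes (xy, xz, yz, xt, yt, zt), coordinates normalized to the range 0 to 1, plane features combined by elementwise product, and a single linear layer standing in for the compact decoder network. Every function name, grid size, and initial value is hypothetical, and only a position offset is predicted here.

```python
import random

# Axis pairs for the six planes: xy, xz, yz (spatial) and xt, yt, zt (space-time).
PLANE_AXES = [(0, 1), (0, 2), (1, 2), (0, 3), (1, 3), (2, 3)]


def make_plane(res, channels, rng):
    """Build a res x res grid of feature vectors (res must be at least 2).

    Values start near 1 so the product of six samples stays well scaled.
    """
    return [[[rng.uniform(0.9, 1.1) for _ in range(channels)]
             for _ in range(res)] for _ in range(res)]


def bilinear(plane, u, v):
    """Bilinearly interpolate a feature vector at (u, v), both in [0, 1]."""
    res = len(plane)
    fu, fv = u * (res - 1), v * (res - 1)
    # Clamp the lower cell index so that u == 1 or v == 1 stays inside the grid.
    i0, j0 = min(int(fu), res - 2), min(int(fv), res - 2)
    a, b = fu - i0, fv - j0
    out = []
    for c in range(len(plane[0][0])):
        top = (1 - b) * plane[i0][j0][c] + b * plane[i0][j0 + 1][c]
        bot = (1 - b) * plane[i0 + 1][j0][c] + b * plane[i0 + 1][j0 + 1][c]
        out.append((1 - a) * top + a * bot)
    return out


def hexplane_feature(planes, xyzt):
    """Sample all six planes and fuse them by elementwise product (an assumption)."""
    feat = [1.0] * len(planes[0][0][0])
    for plane, (p, q) in zip(planes, PLANE_AXES):
        sample = bilinear(plane, xyzt[p], xyzt[q])
        feat = [f * s for f, s in zip(feat, sample)]
    return feat


def linear_head(weights, feat):
    """Hypothetical stand-in for the decoder network: one linear layer, no bias."""
    return [sum(w * f for w, f in zip(row, feat)) for row in weights]


def deform_center(center, t, planes, weights):
    """Return the Gaussian center at time t: the canonical center plus a predicted offset."""
    feat = hexplane_feature(planes, (*center, t))
    delta = linear_head(weights, feat)
    return [c + d for c, d in zip(center, delta)]


rng = random.Random(0)
planes = [make_plane(4, 8, rng) for _ in PLANE_AXES]
weights = [[rng.uniform(-0.01, 0.01) for _ in range(8)] for _ in range(3)]
moved = deform_center([0.2, 0.5, 0.7], 0.3, planes, weights)
```

In this sketch the canonical Gaussians never change. Only the offset depends on the timestamp, which is what lets a single set of primitives serve every frame. A fuller version would also predict rotation and scale changes, as the narration describes.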

The visual results speak to the method's power. In head-to-head comparisons on synthetic datasets, 4D Gaussian Splatting delivers crisp, artifact-free rendering where competing methods introduce blur or fail to capture fine motion details. Notice how surface textures remain sharp even during rapid movement, and deformations appear smooth and physically plausible throughout the sequence.

The numbers confirm what the visuals suggest. On synthetic datasets, the system renders at 82 frames per second while achieving a peak signal-to-noise ratio (PSNR) over 34 decibels and a structural similarity index (SSIM) close to its maximum of 1. Even on challenging real-world footage at higher resolution, it maintains 30 frames per second. This is real-time performance with quality that previously required offline rendering.
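
For a sense of what a peak signal-to-noise ratio figure measures, the short Python sketch below computes it from the mean squared error between a rendered image and its reference. It uses the standard definition and assumes pixel values flattened into lists and scaled to the range 0 to 1. The function name `psnr` and the sample values are illustrative and do not come from the paper's evaluation code.

```python
import math


def psnr(rendered, reference, max_value=1.0):
    """Peak signal-to-noise ratio in decibels between two equal-length pixel lists."""
    if len(rendered) != len(reference) or not rendered:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((a - b) ** 2 for a, b in zip(rendered, reference)) / len(rendered)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Every pixel is off by 0.1 on a 0-to-1 scale, so the MSE is 0.01.
example_db = psnr([0.5, 0.5, 0.5, 0.5], [0.4, 0.6, 0.4, 0.6])
```

Under this definition, 34 decibels corresponds to a root-mean-square pixel error of about 0.02 on a 0-to-1 scale, since 10 raised to the power of -34/20 is roughly 0.02.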

Four-dimensional Gaussian splatting proves that temporal continuity, when modeled from the beginning, unlocks both speed and fidelity. Visit EmergentMind.com to explore this research further and create your own video presentations.