Learning to Reason in 4D: Dynamic Spatial Understanding for Vision Language Models

This presentation explores a breakthrough in teaching vision-language models to understand dynamic 3D spaces over time. The research introduces DSR Suite, an automated framework that generates training data and benchmarks from real-world videos, paired with a Geometry Selection Module that efficiently integrates geometric priors. The approach achieves 58.9% accuracy on challenging 4D reasoning tasks where state-of-the-art models perform at random-guess levels, while maintaining strong performance on general video understanding benchmarks.Script

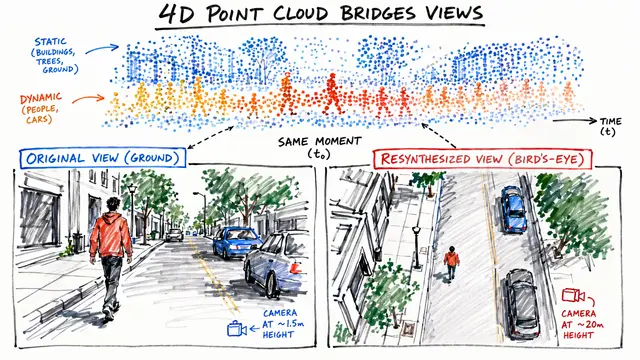

Vision models can recognize objects in images, but ask them how a rotating object will look from another angle in 10 seconds, and they fail spectacularly. Current AI operates in a static 3D world while reality unfolds in 4 dimensions, with geometry constantly transforming over time.

The researchers identified a fundamental blind spot. While vision-language models handle static scenes well, they collapse when reasoning about objects moving through space, changing orientation, or interacting over extended time periods. This isn't a minor performance gap. On dynamic spatial reasoning tasks, even the most advanced models score barely above random chance.

To solve this, the authors built an entire ecosystem for 4D reasoning from scratch.

DSR Suite processes unconstrained video through a multi-stage pipeline. Vision foundation models extract geometric cues, camera poses, and track objects across frames. The system then generates question-answer pairs that require reasoning about how spatial attributes evolve, forcing models to understand procedural geometry rather than static snapshots. This produces DSR-Train with 10,000 videos and DSR-Bench, a human-verified benchmark with 575 videos testing 1,484 distinct reasoning challenges.

But data alone isn't enough. The challenge is how to feed geometric information into vision-language models without destroying their general capabilities. The Geometry Selection Module uses two stacked Q-Formers in sequence. The first compresses question tokens into fixed-size semantic queries. The second selectively attends to geometric priors, extracting only the spatial features relevant to answering that specific question. This prevents the model from drowning in irrelevant 3D data while preserving its ability to handle everyday video understanding tasks.

The results draw a sharp line between approaches. Where existing vision-language models and even specialized spatial reasoning systems barely exceed random performance, the proposed method achieves 58.9% accuracy. More remarkably, it does this without sacrificing general video understanding, a tradeoff that plagued earlier attempts at geometric fusion. Ablations confirm the design works as intended: accuracy scales with the number of geometric queries, and balanced mixtures of template and free-form questions maximize learning efficiency.

This work formalizes what 4D intelligence requires: not just seeing objects move, but understanding how geometry transforms across viewpoints and time. For robotics, navigation, and embodied AI, that distinction is everything. Visit EmergentMind.com to explore more research and create your own video presentations.