MARS: Enabling Autoregressive Models Multi-Token Generation

This presentation explores MARS, a breakthrough fine-tuning approach that enables standard autoregressive language models to generate multiple tokens per decoding step without architectural changes or extra parameters. We examine how MARS preserves strict autoregressive compatibility while achieving up to 1.7× speedup, the critical role of dual-stream training with SFT loss in maintaining quality at scale, and the practical implications of dynamically tunable speed-quality tradeoffs for production deployment.

Script

Autoregressive language models generate text one token at a time, burning a full forward pass even for utterly predictable words. MARS breaks this constraint without changing a single layer of your model's architecture.

The authors introduce a fine-tuning routine that converts any instruction-tuned autoregressive model into one capable of multi-token generation, while keeping it fully usable as a standard one-token-at-a-time model. Unlike speculative decoding or methods like Medusa that add extra heads or draft models, MARS requires zero additional parameters and no changes to the transformer architecture itself.

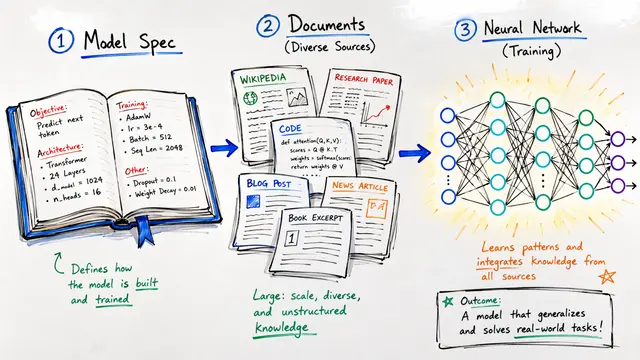

The technique rests on a surprisingly elegant training strategy.

Each training sample flows through two pathways simultaneously. The clean stream applies standard supervised fine-tuning loss on the original text, anchoring the model's autoregressive capabilities. In parallel, the noisy stream masks out consecutive blocks of tokens and trains the model to predict all masked positions using only the context from prior blocks and mask tokens within the current block. Critically, the attention pattern remains strictly causal, and the output logits stay right-shifted, ensuring the model never learns dependencies that violate autoregressive conventions.
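To make the noisy stream concrete, here is a minimal sketch of how one masked block and its right-shifted labels could be built. It is one plausible reading of the description above, not the authors' code: the function name `make_noisy_example`, the `IGNORE` label value, and the choice to mask a single block per example are all assumptions made for illustration.

```python
IGNORE = -100  # assumed convention: positions with this label contribute no loss


def make_noisy_example(tokens, block_start, block_size, mask_id):
    """Build one noisy-stream input and its right-shifted labels.

    Tokens before `block_start` stay clean; the block itself is replaced
    by `mask_id`. Labels use the usual next-token convention: labels[i]
    is the token the model should emit at position i, i.e. tokens[i + 1].
    """
    block_end = min(block_start + block_size, len(tokens))
    if not 0 < block_start < block_end:
        raise ValueError("block must start after at least one clean token")

    # Clean prefix followed by mask tokens covering the current block.
    inputs = tokens[:block_start] + [mask_id] * (block_end - block_start)

    labels = [IGNORE] * len(inputs)
    # The last clean token predicts the first block token; each mask
    # position predicts the next token inside the block. The final mask
    # would predict a token beyond the block, so this sketch leaves it
    # unlabeled.
    for i in range(block_start - 1, block_end - 1):
        labels[i] = tokens[i + 1]
    return inputs, labels


inputs, labels = make_noisy_example([10, 11, 12, 13, 14, 15],
                                    block_start=2, block_size=3, mask_id=0)
print(inputs)  # [10, 11, 0, 0, 0]
print(labels)  # [-100, 12, 13, 14, -100]
```

Because the inputs never contain tokens from later positions and the labels stay shifted by one, an ordinary causal attention mask can be used unchanged, which matches the claim that the model learns no dependencies outside the autoregressive convention.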

The inclusion of the standard supervised fine-tuning loss is not a minor detail; it is essential. Without it, increasing the block size causes catastrophic degradation, especially on reasoning and coding tasks, as the autoregressive signal decays. With the SFT loss balancing the masked block prediction, performance remains stable across block sizes, and at inference the model accepts multiple tokens per step while maintaining or even exceeding baseline accuracy on benchmarks like GSM8K and HumanEval.
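The balance between the two losses can be sketched as a simple weighted sum. This is an illustration only: the narration does not say how the two terms are combined, so the additive form, the `noisy_weight` parameter, and the helper names below are assumptions.

```python
import math


def cross_entropy(logits, target):
    """Negative log-probability of `target` under softmax(logits)."""
    m = max(logits)  # subtract the max for numerical stability
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_z - logits[target]


def mean_loss(all_logits, labels, ignore=-100):
    """Average cross-entropy over positions whose label is not `ignore`."""
    losses = [cross_entropy(row, y)
              for row, y in zip(all_logits, labels) if y != ignore]
    return sum(losses) / len(losses) if losses else 0.0


def dual_stream_loss(clean_logits, clean_labels,
                     noisy_logits, noisy_labels, noisy_weight=1.0):
    """SFT loss on the clean stream plus weighted masked-block loss."""
    sft = mean_loss(clean_logits, clean_labels)
    masked = mean_loss(noisy_logits, noisy_labels)
    return sft + noisy_weight * masked
```

Setting `noisy_weight` to zero in this sketch recovers plain supervised fine-tuning, while dropping the `sft` term corresponds to the ablation described above, where quality degrades as the block size grows.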

These curves reveal the practical payoff. On the left, GSM8K; on the right, HumanEval. The solid lines represent MARS with the dual-stream training. The dashed lines show what happens without the SFT loss—performance collapses as you try to increase speed. The dotted horizontal line is the standard autoregressive baseline. Notice that MARS with SFT loss consistently sits above the baseline across the entire speed-quality frontier, meaning you get faster inference without sacrificing accuracy, and you can tune that tradeoff dynamically at inference time by adjusting a single confidence threshold.
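The confidence threshold can be pictured with a short sketch. The narration only says that a single threshold controls the tradeoff; the specific rule here (always keep the first token, then keep later tokens while their confidence stays at or above the threshold) and the name `accept_tokens` are assumptions for illustration.

```python
def accept_tokens(candidates, threshold):
    """Pick which tokens from one multi-token step to keep.

    `candidates` is a list of (token_id, confidence) pairs, one per
    position in the predicted block, in order. The first token is an
    ordinary next-token prediction, so it is always kept. Later tokens
    are kept only while their confidence meets the threshold.
    """
    accepted = [candidates[0][0]]
    for token_id, confidence in candidates[1:]:
        if confidence < threshold:
            break  # stop at the first uncertain position
        accepted.append(token_id)
    return accepted


step = [(5, 0.40), (7, 0.95), (9, 0.60), (2, 0.99)]
print(accept_tokens(step, threshold=0.9))  # [5, 7]
print(accept_tokens(step, threshold=0.5))  # [5, 7, 9, 2]
```

A higher threshold accepts fewer tokens per step and behaves more like standard one-token decoding; a lower threshold accepts more tokens per step and trades some accuracy for speed.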

MARS transforms the speed-quality tradeoff from a fixed architectural constraint into a runtime dial you can turn per request, all without adding a single parameter to your model.