Causal Autoregressive Diffusion Language Model

This presentation introduces CARD, a novel framework that synthesizes the quality of autoregressive models with the efficiency of diffusion models. Through causal attention masking and innovative optimization techniques, CARD achieves 3x faster training, dynamic parallel decoding with up to 4x throughput improvements, and matches the generative quality of traditional autoregressive models while outperforming existing diffusion baselines.Script

What if you could train a language model 3 times faster while keeping the same generative quality? The researchers behind this paper introduce CARD, a framework that merges the precision of autoregressive models with the speed of diffusion processes.

Let's start with the challenge they're addressing.

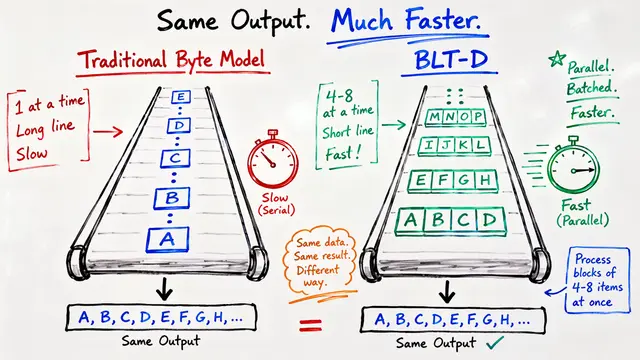

Traditional autoregressive models face a fundamental tradeoff: they produce high-quality text but decode sequentially, one token at a time. While diffusion models can generate tokens in parallel, existing approaches struggle with training efficiency and often can't match autoregressive quality.

Here's where CARD changes the game.

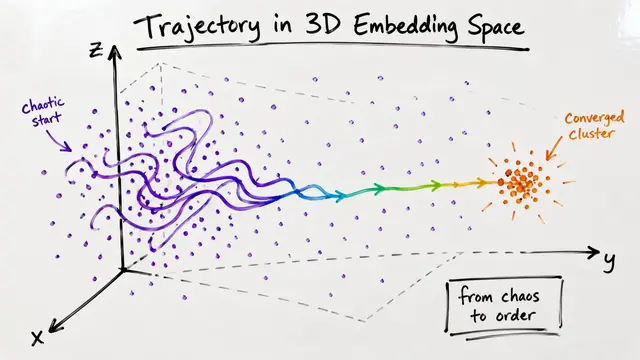

CARD achieves this through two key innovations. For training, a causal attention mask enables dense supervision in a single forward pass, delivering 3 times faster training than block diffusion. For inference, KV-caching and confidence-driven decoding allow dynamic parallel generation with throughput improvements ranging from 1.7 to 4 times.

Two clever mechanisms make this work. Soft-tailed masking focuses corruption at the sequence tail while preserving the clean prefix. Context-aware reweighting dynamically adjusts token importance during ambiguous scenarios, stabilizing optimization across varying noise levels.

The empirical results validate this approach convincingly.

The experiments are compelling. CARD achieves 53.2% zero-shot accuracy, surpassing existing diffusion baselines, while matching the generative quality of autoregressive models. Notably, it continues improving even with limited training data, demonstrating exceptional data efficiency.

Like any innovation, CARD comes with considerations. While it delivers dramatic speed gains and flexible inference, the novel architecture requires adaptation from existing frameworks. Confidence thresholds need careful tuning, and broader validation across diverse language tasks will strengthen its general applicability.

CARD represents a meaningful evolution in language model design. By reducing training costs while maintaining quality, it makes large-scale deployment more accessible. More broadly, it demonstrates that we don't have to choose between autoregressive precision and diffusion efficiency—we can have both.

CARD offers a promising path forward: faster training, flexible inference, and quality that matches the best autoregressive models. Visit EmergentMind.com to explore the full technical details and see how this framework might reshape language modeling.