DFlash: Block Diffusion for Flash Speculative Decoding

This presentation explores DFlash, a breakthrough speculative decoding framework that uses lightweight block diffusion models to accelerate large language model inference. By generating multiple tokens in parallel rather than sequentially, and conditioning the draft model through direct injection of target model context features, DFlash achieves over 6× speedup compared to standard autoregressive decoding and up to 2.5× improvement over state-of-the-art methods like EAGLE-3, all while maintaining exact generation quality.Script

What if we could make language models decode 6 times faster without sacrificing a single token of quality? That's the promise of DFlash, a radical rethinking of how we accelerate large language model inference through block diffusion.

Let's first understand why current acceleration methods hit a wall.

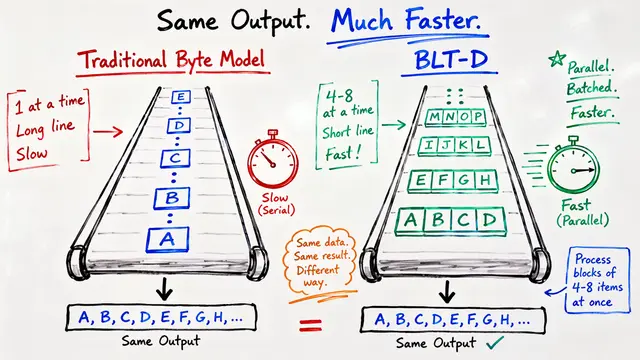

Building on that challenge, current speculative decoding approaches face a fundamental tradeoff. The draft models themselves use autoregressive generation, which means drafting latency grows linearly with each additional token you want to speculate, forcing researchers to use shallow models that produce lower-quality drafts.

DFlash breaks this tradeoff with a completely parallel approach.

At the heart of DFlash is a lightweight block diffusion model that drafts tokens in parallel rather than sequentially. The key innovation is injecting rich context features from the target model directly into every layer of the drafter through Key-Value cache injection, giving the draft model persistent access to high-fidelity information.

This architectural diagram reveals how DFlash maintains quality during parallel drafting. Hidden states from multiple layers of the target language model are extracted and condensed into a context embedding, then injected directly into the Key-Value projections at every layer of the diffusion drafter. This persistent conditioning is what enables the lightweight draft model to produce high-quality speculations that the target model will accept.

Comparing the two approaches reveals why DFlash achieves superior performance. While autoregressive methods like EAGLE-3 pay an increasing latency cost for each additional drafted token, forcing them to use shallow models, DFlash maintains nearly constant drafting cost regardless of depth. This enables deeper, more expressive draft models that produce higher-quality speculation blocks.

The empirical results are striking. On Qwen3-8B, DFlash achieves more than 6 times speedup over standard autoregressive decoding, with up to 2.5 times higher acceleration than EAGLE-3. These gains hold consistently across math, coding, and chat benchmarks, demonstrating that parallel block diffusion fundamentally shifts what's possible in speculative decoding.

Beyond raw speedup numbers, DFlash proves remarkably robust across deployment conditions. The framework scales across different model sizes and generation temperatures, works in high-concurrency serving scenarios, and allows dynamic adjustment of block size at inference time since models trained on larger blocks transfer smoothly to smaller ones.

The implications extend beyond benchmarks. DFlash demonstrates that hybrid paradigms combining diffusion drafting with autoregressive verification can sidestep known quality limitations while achieving practical wall-clock cost reductions. This division of labor suggests a broader future for model hybridization in efficient language model serving.

DFlash proves that parallel block diffusion can serve as the optimal spearhead for speculative decoding, achieving lossless acceleration that fundamentally expands what's possible in production language model inference. Visit EmergentMind.com to dive deeper into this breakthrough approach.