The Hidden Geometry: How Neural Networks Encode Conceptual Structure

This presentation reveals a fundamental discovery about how neural networks organize knowledge internally. Researchers demonstrate that the geometric structure of a network's internal representations is nearly identical to the geometry of its output behavior, and that respecting this intrinsic manifold structure enables smooth, natural control of model outputs. Through experiments on reasoning tasks and visual models, the work shows that conventional linear steering methods violate this geometry, producing incoherent outputs, while manifold-based interventions achieve interpretable behavioral control.

Script

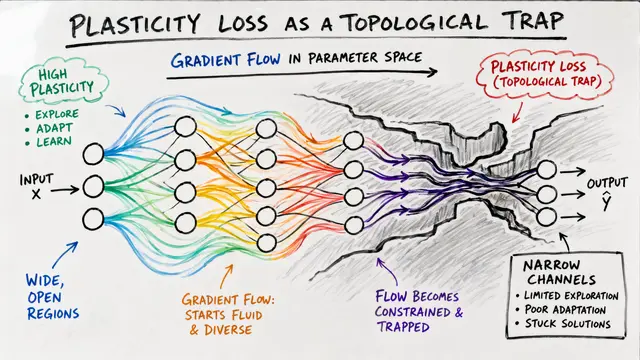

When you steer a neural network by nudging its internal activations, does the path you take through that space matter? This paper reveals that it matters profoundly, because neural representations live on curved geometric structures, not flat spaces, and cutting straight through them produces incoherent, unnatural outputs.

The researchers fit low-dimensional manifolds to both internal activations and output distributions across reasoning tasks. For cyclic concepts like days of the week, both spaces form matching circular structures. For sequential concepts like letters or ages, both form matching linear progressions. The distance correlations between these paired manifolds range from 0.89 to 0.999, indicating an approximate isometry: distances between concepts in one space closely track distances between the same concepts in the other.
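To make the comparison concrete, here is a minimal sketch of one way such a score could be computed. It assumes that "distance correlation" means the Pearson correlation between corresponding pairwise distances in the two spaces; the paper may use a different statistic. The seven points, the ellipse standing in for activation centroids, and the function names are all hypothetical toy choices.

```python
import math
from itertools import combinations


def pairwise_distances(points):
    """Euclidean distance for every unordered pair, in a fixed pair order."""
    return [math.dist(p, q) for p, q in combinations(points, 2)]


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def pairwise_distance_correlation(space_a, space_b):
    """Correlate the distances between concepts in two spaces.

    Both inputs must list the same concepts in the same order.
    """
    return pearson(pairwise_distances(space_a), pairwise_distances(space_b))


# Toy data (assumption): seven cyclic concepts, e.g. days of the week.
angles = [2 * math.pi * k / 7 for k in range(7)]
# Output-side points sit on a unit circle.
behavior = [(math.cos(t), math.sin(t)) for t in angles]
# Activation-side points sit on a larger, slightly squashed circle.
activations = [(3.0 * math.cos(t), 2.4 * math.sin(t)) for t in angles]

score = pairwise_distance_correlation(activations, behavior)
print(f"pairwise distance correlation: {score:.3f}")
```

A score of exactly 1 would mean the two sets of distances differ only by a scale and an offset; the squashed circle in this toy example lowers the score slightly below that.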

The causal test: interpolate between activation centroids along the fitted manifold versus along straight lines, then measure the resulting output trajectories. Manifold steering produces smooth transitions that pass through adjacent concepts in order. Linear steering causes probability mass to teleport to non-adjacent concepts, with intermediate outputs becoming unnatural. Quantitatively, manifold steering reduces trajectory energy by a factor of 2.8 on average, with p-values below 0.001.
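The two interpolation schemes can be sketched on a toy circular manifold. This is only an illustration under stated assumptions: the manifold is an idealized circle of known radius rather than a fitted one, the function names are hypothetical, and the sketch measures only how far each path strays from the circle. It does not reproduce the paper's trajectory-energy measurement, which is taken on the model's outputs.

```python
import math


def linear_path(start, end, steps):
    """Straight-line interpolation between two activation-space points."""
    return [
        tuple(a + (b - a) * t / steps for a, b in zip(start, end))
        for t in range(steps + 1)
    ]


def circular_path(start_angle, end_angle, radius, steps):
    """Interpolate the intrinsic coordinate (the angle), then map back."""
    path = []
    for t in range(steps + 1):
        angle = start_angle + (end_angle - start_angle) * t / steps
        path.append((radius * math.cos(angle), radius * math.sin(angle)))
    return path


def max_off_manifold_gap(path, radius):
    """Largest deviation of any path point from the circle of given radius."""
    return max(abs(math.hypot(x, y) - radius) for x, y in path)


# Toy setup (assumption): seven concepts evenly spaced on a unit circle.
RADIUS = 1.0
monday_angle = 0.0
thursday_angle = 3 * 2 * math.pi / 7

start = (RADIUS * math.cos(monday_angle), RADIUS * math.sin(monday_angle))
end = (RADIUS * math.cos(thursday_angle), RADIUS * math.sin(thursday_angle))

straight = linear_path(start, end, steps=20)
curved = circular_path(monday_angle, thursday_angle, RADIUS, steps=20)

straight_gap = max_off_manifold_gap(straight, RADIUS)
curved_gap = max_off_manifold_gap(curved, RADIUS)
print(f"max gap, linear path:   {straight_gap:.3f}")
print(f"max gap, manifold path: {curved_gap:.3f}")
```

Both paths share the same endpoints, but the straight line cuts through the interior of the circle, where no concept centroid lives, while the angular path stays on the circle the whole way.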

The geometry scales beyond one dimension. In tasks with grid-structured concept spaces, the researchers achieve factored control: steering along one intrinsic manifold coordinate modulates that dimension independently, without interfering with the other. This compositionality breaks down under linear steering, where axes become entangled and transitions distorted.
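A small sketch can illustrate what factored control means. It assumes a hypothetical two-coordinate concept space (a radius and an angle) embedded in a plane through polar coordinates; this is a stand-in chosen for simplicity, not the grid tasks or the fitted manifolds from the paper. Steering the angle in intrinsic coordinates leaves the radius untouched, while a straight line between the same endpoints changes the decoded radius along the way.

```python
import math


def embed(r, theta):
    """Map intrinsic coordinates (r, theta) to a toy 2-D activation."""
    return (r * math.cos(theta), r * math.sin(theta))


def decode(point):
    """Recover the intrinsic coordinates (r, theta) from an activation."""
    x, y = point
    return math.hypot(x, y), math.atan2(y, x)


def steer_intrinsic(r, theta_start, theta_end, steps):
    """Move along the theta coordinate only, holding r fixed."""
    return [
        embed(r, theta_start + (theta_end - theta_start) * t / steps)
        for t in range(steps + 1)
    ]


def steer_linear(r, theta_start, theta_end, steps):
    """Move along the straight line between the two embedded endpoints."""
    a, b = embed(r, theta_start), embed(r, theta_end)
    return [
        (a[0] + (b[0] - a[0]) * t / steps, a[1] + (b[1] - a[1]) * t / steps)
        for t in range(steps + 1)
    ]


def radial_drift(path, r):
    """How far the decoded r coordinate strays from its intended value."""
    return max(abs(decode(p)[0] - r) for p in path)


# Toy values (assumption): hold r = 2.0 while moving theta from 0.2 to 1.8.
intrinsic_drift = radial_drift(steer_intrinsic(2.0, 0.2, 1.8, 10), 2.0)
linear_drift = radial_drift(steer_linear(2.0, 0.2, 1.8, 10), 2.0)
print(f"r drift, intrinsic steering: {intrinsic_drift:.3f}")
print(f"r drift, linear steering:    {linear_drift:.3f}")
```

In this toy setting the drift in the coordinate that was supposed to stay fixed is the entanglement: the linear path reaches the right endpoint but alters the other axis on the way there.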

Even in visual domains, geometry determines coherence. Applied to a recurrent world model controlling car position, manifold steering produces perceptually smooth movement through visual space. Linear steering generates ambiguous, blended images that correspond to no real spatial location, the visual equivalent of jumping off-manifold.

The researchers formalize steering under three geometric paradigms: flat Euclidean, density-adaptive manifold, and behavior-pullback metrics. The remarkable finding is that density and pullback geometries yield nearly identical paths, strong empirical evidence that representation and behavior manifolds are dual images of the same conceptual structure. This undercuts the flat geometry assumption behind conventional linear methods, and it suggests a paradigm shift for interpretability: to control behavior naturally, navigate the intrinsic manifold coordinates that the network has learned.
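One way to write the three paradigms down is as different choices of metric inside the same path-energy functional. The notation below is an assumption made for illustration, not the paper's own formalism: \gamma is a steering path through activation space, \rho is the density of activations, f maps an activation to the model's output distribution with Jacobian J_f, M is a metric on outputs (the Fisher information would be one natural choice), and \alpha > 0 is a hypothetical exponent.

```latex
% Energy of a steering path \gamma : [0,1] \to \mathbb{R}^d under a metric G
E_G[\gamma] = \int_0^1 \dot{\gamma}(t)^{\top} \, G\big(\gamma(t)\big) \, \dot{\gamma}(t) \, dt

% Flat Euclidean: every direction costs the same everywhere,
% so the minimizing path is a straight line
G_{\text{flat}}(x) = I

% Density-adaptive (one plausible conformal form): movement is cheap
% where activations are dense and expensive where they are sparse
G_{\text{density}}(x) = \rho(x)^{-\alpha} \, I

% Behavior-pullback: movement costs as much as the output change it causes
G_{\text{pullback}}(x) = J_f(x)^{\top} \, M\big(f(x)\big) \, J_f(x)
```

Read this way, the reported agreement between density and pullback paths says that the two non-flat metrics pick out nearly the same minimizing curves, while the flat metric picks straight lines that neither of them favors.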