Learning a Generative Meta-Model of LLM Activations

This presentation explores a breakthrough approach to understanding and controlling large language models by training diffusion models directly on neural activations. Rather than imposing restrictive assumptions like linearity or sparsity, this work learns the true manifold of activations as a generative prior, enabling high-fidelity interventions and improved interpretability that scales predictably with compute. The resulting Generative Latent Prior achieves superior steering fluency, interpretability probing, and on-manifold projection compared to existing methods.

Script

What if we could learn the true shape of thought inside a language model, not by forcing it into boxes, but by letting a generative model discover its natural structure? This paper introduces diffusion models as meta-models for large language model activations, opening a new path to interpretability and control.

Building on that vision, let's first examine why existing approaches fall short.

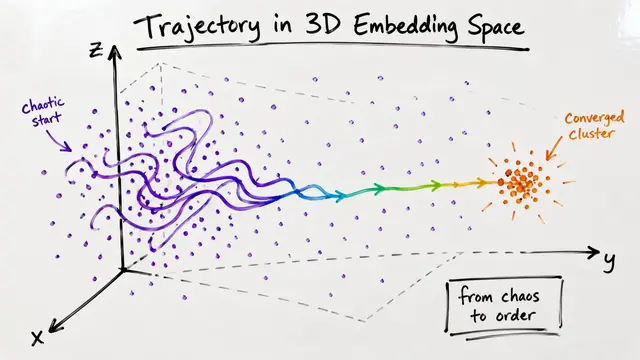

Current interpretability tools like sparse autoencoders and principal component analysis impose linearity or sparsity constraints that don't match the true geometry of activations. When you use these for steering, you often push activations off their natural manifold, resulting in garbled or unnatural outputs.

The authors propose a fundamentally different paradigm.

The Generative Latent Prior, or GLP, trains diffusion models on billions of cached activations from models like Llama1B and Llama8B. By learning to denoise activations through flow matching, the model captures the true distribution without imposing linearity or sparsity, letting structure emerge naturally.
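To make the training objective concrete, here is a minimal sketch of a flow matching loss on a batch of activation vectors. It is an illustration rather than the authors' implementation: the function name `flow_matching_loss`, the straight-line path with noise at t = 0 and data at t = 1, and the toy constant "models" are all assumptions, and the activations are synthetic stand-ins for cached LLM activations.

```python
import random

def flow_matching_loss(velocity_model, activations, rng):
    """Monte Carlo estimate of a flow matching loss (assumed convention:
    noise at t=0, data at t=1, straight-line interpolation between them)."""
    total = 0.0
    for x1 in activations:
        x0 = [rng.gauss(0.0, 1.0) for _ in x1]            # Gaussian noise sample
        t = rng.random()                                   # time drawn from [0, 1)
        xt = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]
        target = [b - a for a, b in zip(x0, x1)]           # velocity of the straight path
        pred = velocity_model(xt, t)
        total += sum((p - v) ** 2 for p, v in zip(pred, target)) / len(x1)
    return total / len(activations)

# Synthetic "activations" clustered near 3.0 (placeholder data, not real LLM activations).
data_rng = random.Random(0)
activations = [[data_rng.gauss(3.0, 0.1) for _ in range(4)] for _ in range(256)]

# Two hypothetical velocity models: one predicts zero, one predicts the mean direction.
zero_loss = flow_matching_loss(lambda xt, t: [0.0] * len(xt), activations, random.Random(1))
mean_loss = flow_matching_loss(lambda xt, t: [3.0] * len(xt), activations, random.Random(1))
print(zero_loss, mean_loss)
```

Even the constant model that points toward the data mean scores a much lower loss than the zero model. A trained network would replace these lambdas and condition on both the noisy activation and the time.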

A striking result is that diffusion loss scales predictably: as you invest more compute, the loss falls along a smooth power law, and this improvement transfers directly to downstream tasks like steering fluency and interpretability probing.
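A power-law relationship is a straight line in log-log space, so it can be checked with an ordinary least-squares fit. The sketch below assumes a pure power law of the form loss = a * compute^(-b) with no constant offset, which may not match the exact form fitted in the paper. The data points are synthetic placeholders generated from known constants, not results from the work.

```python
import math

def fit_power_law(compute, loss):
    """Fit loss = a * compute**(-b) by linear regression on log-log values."""
    xs = [math.log(c) for c in compute]
    ys = [math.log(l) for l in loss]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope   # (a, b)

# Synthetic placeholder points from loss = 2.0 * compute**(-0.1); NOT measured values.
compute = [1e15, 1e16, 1e17, 1e18]
loss = [2.0 * c ** -0.1 for c in compute]

a, b = fit_power_law(compute, loss)
print(a, b)
```

With real measurements, the residuals of this fit indicate how closely the runs follow a single power law.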

Now let's see how this translates into practical advantages.

Traditional steering often sacrifices fluency for control strength because interventions push activations into unnatural regions. The GLP corrects this by reprojecting edits back onto the learned manifold, achieving stronger concept control at higher fluency across sentiment steering, persona elicitation, and sparse autoencoder feature interventions.
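One way to picture reprojection is a noise-then-denoise pass: partially noise the steered activation, then integrate a velocity field back to the data end of the path. The sketch below is a toy under strong assumptions and not the paper's procedure. The "learned" prior is replaced by the closed-form velocity field of a one-dimensional Gaussian applied per coordinate, and `project`, `t_start`, `mu`, and `s` are hypothetical names.

```python
import random

def gaussian_velocity(x, t, mu, s):
    """Closed-form marginal velocity when data ~ N(mu, s^2), noise ~ N(0, 1),
    and x_t = (1 - t) * noise + t * data. Stands in for a trained model."""
    var_t = (1.0 - t) ** 2 + (t * s) ** 2
    return mu + (t * s * s - (1.0 - t)) / var_t * (x - t * mu)

def project(x_edit, t_start, mu, s, rng, steps=100):
    """Partially noise each coordinate to time t_start, then Euler-integrate to t=1."""
    dt = (1.0 - t_start) / steps
    out = []
    for xi in x_edit:
        x = (1.0 - t_start) * rng.gauss(0.0, 1.0) + t_start * xi
        t = t_start
        for _ in range(steps):
            x += dt * gaussian_velocity(x, t, mu, s)
            t += dt
        out.append(x)
    return out

# A hypothetical steered activation that sits far from the toy data distribution N(0, 0.5^2).
steered = [5.0, -4.0, 6.0]
projected = project(steered, t_start=0.5, mu=0.0, s=0.5, rng=random.Random(0))
print(projected)
```

In this toy, a smaller `t_start` injects more noise and pulls the result more strongly toward the data distribution, while a larger `t_start` preserves more of the original edit.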

Inside the GLP, the learned representations themselves become interpretable units. These meta-neurons substantially outperform both sparse autoencoders and the original model's neurons on concept probing tasks, and qualitative analysis shows they correspond to coherent, human-recognizable semantic features.
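Scoring single units against a concept can be sketched as follows. The exact probing protocol is not described here, so this uses one common choice, the area under the ROC curve of each unit's value against a binary concept label, as an assumption. The helper names and the tiny feature matrix are hypothetical placeholders, not data from the paper.

```python
def auc(scores, labels):
    """Probability that a positive example scores above a negative one (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def best_unit(features, labels):
    """Return (index, separability) of the single unit that best separates the concept."""
    scored = []
    for j in range(len(features[0])):
        a = auc([row[j] for row in features], labels)
        scored.append((max(a, 1.0 - a), j))   # sign-agnostic: low values may also mark the concept
    score, idx = max(scored)
    return idx, score

# Placeholder data: 4 examples x 3 units, where unit 1 tracks the concept label.
features = [
    [0.1,  2.0, 0.5],
    [0.9,  1.8, 0.4],
    [0.2, -1.0, 0.6],
    [0.8, -1.5, 0.3],
]
labels = [1, 1, 0, 0]

print(best_unit(features, labels))
```

The same scoring could be run on meta-neurons, sparse autoencoder features, and raw model neurons to compare them on equal footing.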

The current approach has limitations: it models activations independently at each position and layer, missing potential temporal or hierarchical structure. Extending to conditional or multi-modal priors, and leveraging diffusion loss for anomaly detection, are promising next steps.

This work shows that generative diffusion models can learn the true geometry of language model thought, enabling interpretability and control that scale with compute rather than plateau. To dive deeper into this paradigm shift for understanding neural networks, visit EmergentMind.com.