Discretization-Independent Surrogate Modeling over Complex Geometries

This presentation explores a breakthrough approach to accelerating numerical simulations of partial differential equations using hypernetworks and implicit geometric representations. The authors introduce three novel neural network architectures (DV-MLP, DV-Hnet, and NIDS) that predict PDE solutions continuously across arbitrary mesh topologies without preprocessing or pixelization. Validated on 2D Poisson problems and vehicle aerodynamics, these methods dramatically reduce computational costs while maintaining accuracy, opening new possibilities for real-time engineering design optimization and model-based control in domains with complex, variable geometries.

Script

Engineering simulations are trapped in a computational bottleneck. Solving partial differential equations for complex geometries can take hours or days, making real-time design optimization nearly impossible. But what if a neural network could learn to predict those solutions instantly, on any mesh, without ever seeing that exact geometry before?

Conventional surrogate models built on convolutional neural networks hit a wall when geometries change. They require pixelization, lose geometric fidelity, and cannot adapt to new mesh structures without retraining. The authors recognized that the problem isn't just computational expense; it's the rigid dependence on discretization itself.

The solution lies in rethinking how neural networks represent geometry and physics together.

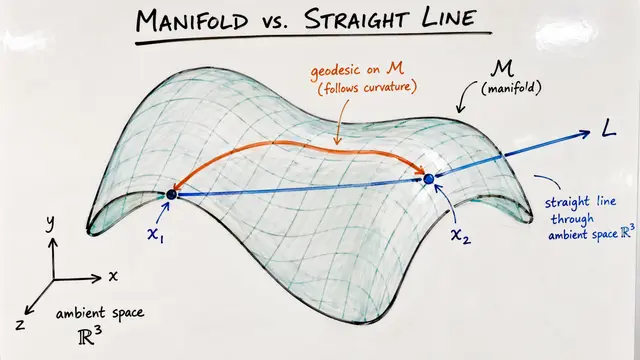

The authors propose three architectures that break free from fixed meshes. DV-MLP is the simplest, feeding spatial coordinates and design variables into a single network. DV-Hnet introduces a hypernetwork that generates the main network's weights based on the design, allowing the model to adapt its internal structure. NIDS takes this further by processing spatial features and design variables in parallel, then combining them through hypernetwork-generated output weights. All three share a key insight: by encoding geometry through minimum-distance functions rather than mesh connectivity, the networks learn continuous, topology-independent representations.
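To make the minimum-distance idea concrete, here is a minimal sketch, assuming the geometry is available as a cloud of boundary samples. The function name `minimum_distance` and the unit-circle shape are illustrative choices, not taken from the paper. The point is that the feature is computed per query location, so no mesh connectivity is involved.

```python
import numpy as np

def minimum_distance(query_points, boundary_points):
    """Distance from each query point to its nearest boundary sample.

    query_points:    (N, d) array of locations where the solution is wanted
    boundary_points: (M, d) array of samples on the geometry's surface
    """
    # Pairwise differences via broadcasting: (N, 1, d) - (1, M, d) -> (N, M, d)
    diff = query_points[:, None, :] - boundary_points[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)  # (N, M) distances
    return dist.min(axis=1)               # (N,) nearest-boundary distance

# Hypothetical geometry: a unit circle sampled at 720 points.
theta = np.linspace(0.0, 2.0 * np.pi, 720, endpoint=False)
circle = np.stack([np.cos(theta), np.sin(theta)], axis=1)

# Query locations can come from any mesh, or from no mesh at all.
queries = np.array([[0.0, 0.0], [2.0, 0.0], [0.0, -3.0]])
mdf = minimum_distance(queries, circle)
print(mdf)
```

The brute-force pairwise search is fine for a sketch; for large boundary clouds a spatial index such as a k-d tree would be the usual substitute.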

This schematic reveals the architectural distinctions. In DV-MLP, everything flows through one path. DV-Hnet routes the design variables into a hypernetwork that generates the main network's weights, so the prediction is conditioned on the design. NIDS instead restricts the hypernetwork to generating only the output-layer weights, which lets the spatial network develop rich, reusable feature representations that get combined differently for each design. Together with the pointwise, coordinate-based inputs, this modularity is what enables discretization independence.
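The three data paths can be sketched in a few lines of NumPy. This is an untrained toy under stated assumptions, not the authors' implementation: the layer sizes, the tanh activations, the helper names (`init_mlp`, `mlp`, `unpack`), and the choice of three spatial inputs (x, y, and a minimum-distance value) are all made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def init_mlp(sizes):
    """Random (W, b) pairs for a fully connected network."""
    return [(rng.normal(0.0, 0.5, (m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def mlp(params, x):
    """Apply tanh hidden layers, leaving the last layer linear."""
    for W, b in params[:-1]:
        x = np.tanh(x @ W + b)
    W, b = params[-1]
    return x @ W + b

def unpack(flat, sizes):
    """Reshape a flat weight vector into (W, b) pairs for the given sizes."""
    params, i = [], 0
    for m, n in zip(sizes[:-1], sizes[1:]):
        W = flat[i:i + m * n].reshape(m, n)
        i += m * n
        b = flat[i:i + n]
        i += n
        params.append((W, b))
    return params

n_spatial, n_design, hidden = 3, 4, 16  # assumed sizes, for illustration only

# DV-MLP: one network sees spatial inputs and design variables together.
dv_mlp = init_mlp([n_spatial + n_design, hidden, hidden, 1])

def dv_mlp_forward(points, design):
    tiled = np.broadcast_to(design, (len(points), n_design))
    return mlp(dv_mlp, np.concatenate([points, tiled], axis=1))

# DV-Hnet: a hypernetwork maps the design to ALL weights of the main network.
main_sizes = [n_spatial, hidden, 1]
n_main = sum(m * n + n for m, n in zip(main_sizes[:-1], main_sizes[1:]))
hnet = init_mlp([n_design, hidden, n_main])

def dv_hnet_forward(points, design):
    main_params = unpack(mlp(hnet, design), main_sizes)
    return mlp(main_params, points)

# NIDS: a shared spatial network builds features; the hypernetwork only
# produces the output-layer weights and bias that combine them.
spatial_net = init_mlp([n_spatial, hidden, hidden])
out_hnet = init_mlp([n_design, hidden, hidden + 1])

def nids_forward(points, design):
    features = np.tanh(mlp(spatial_net, points))  # (N, hidden)
    out = mlp(out_hnet, design)                   # (hidden + 1,)
    return features @ out[:hidden, None] + out[hidden]

points = rng.uniform(-1.0, 1.0, (5, n_spatial))  # five arbitrary query locations
design = rng.uniform(0.0, 1.0, n_design)
for forward in (dv_mlp_forward, dv_hnet_forward, nids_forward):
    print(forward.__name__, forward(points, design).shape)
```

Each forward pass treats every query point independently, so evaluating on a subset of points returns the same values as evaluating on the full set. That pointwise behavior is what lets the same model run on any mesh.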

Theory is elegant, but does it work on real engineering problems?

The authors tested their approach on two datasets that would break traditional methods. First, a 2D Poisson equation with arbitrary embedded shapes, each on a different unstructured mesh. Second, and more impressively, full vehicle aerodynamics computed using Reynolds-averaged Navier-Stokes equations for 124 distinct car shapes. In both cases, DV-Hnet consistently outperformed the alternatives, converging faster during training and producing more accurate predictions on unseen geometries.

These training curves tell the story. The solid lines track training loss, the dashed lines validation loss. Notice how DV-Hnet, shown in the middle curves, reaches its minimum validation loss earlier than both DV-MLP and NIDS. That's not just faster convergence; it suggests that the hypernetwork approach is finding better geometric priors, learning representations that generalize more effectively to unseen shapes. The vertical dashed lines mark where each model's best weights were saved for final evaluation.
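Saving the best weights at the lowest validation loss is a standard checkpointing pattern. A minimal sketch follows, assuming the weights and validation losses are available per epoch. The loss values are made up for illustration and are not results from the paper, and `track_best` is a hypothetical helper name.

```python
import copy

def track_best(weights_per_epoch, val_losses):
    """Return (best_epoch, best_weights) at the lowest validation loss."""
    best_epoch, best_loss, best_weights = None, float("inf"), None
    for epoch, (weights, loss) in enumerate(zip(weights_per_epoch, val_losses)):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
            # Copy so that later training updates cannot overwrite the snapshot.
            best_weights = copy.deepcopy(weights)
    return best_epoch, best_weights

# Illustrative numbers only: validation loss falls, then rises as overfitting begins.
val_losses = [0.90, 0.40, 0.25, 0.21, 0.24, 0.30]
weights = [{"epoch": e} for e in range(len(val_losses))]  # stand-in for real weights

best_epoch, best_weights = track_best(weights, val_losses)
print(best_epoch, best_weights)
```

The saved epoch corresponds to one vertical dashed line on a plot like the one described: the point after which further training no longer improves validation loss.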

Here's where the rubber meets the road, literally. This comparison shows velocity vectors in the wake behind a vehicle the network never saw during training. The top panel is the ground truth from a full computational fluid dynamics simulation. The bottom is the DV-Hnet prediction. Most of the flow field is nearly indistinguishable, but look closely at the recirculation zone behind the vehicle. Even in this notoriously difficult region where flow separates and swirls, the network captures the essential physics. There are small discrepancies, visible as slight differences in vector orientation, but the overall structure is preserved at a fraction of the computational cost.

The implications extend far beyond faster simulations. Because these networks produce continuous predictions without discretization constraints, engineers can query solutions at arbitrary points, compute gradients with respect to geometry, and embed surrogate models directly into optimization loops. Design iterations that once took hours now take milliseconds. The dream of interactive, physics-aware design tools becomes feasible. And for systems where geometry changes during operation, like morphing wings or adaptive structures, model-based control suddenly enters the realm of possibility.
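As a rough illustration of querying at arbitrary points and differentiating with respect to the design, the sketch below uses a closed-form function as a stand-in for a trained surrogate. The functions `surrogate`, `objective`, and `fd_gradient` are hypothetical names. Central finite differences are swapped in here to keep the example dependency-light; with a real network, automatic differentiation would be the more common choice.

```python
import numpy as np

def surrogate(points, design):
    """Hypothetical stand-in for a trained network: field value at each point."""
    r = np.linalg.norm(points, axis=1)
    return design[0] * np.exp(-r**2) + design[1] * points[:, 0]

def objective(design, points):
    """Scalar quantity of interest: mean predicted value over the query points."""
    return surrogate(points, design).mean()

def fd_gradient(f, design, eps=1e-6):
    """Central finite-difference gradient of f with respect to the design."""
    grad = np.zeros_like(design)
    for i in range(len(design)):
        step = np.zeros_like(design)
        step[i] = eps
        grad[i] = (f(design + step) - f(design - step)) / (2.0 * eps)
    return grad

# Query locations are just coordinates; they need not belong to any mesh.
points = np.array([[0.0, 0.0], [0.5, 0.25], [1.0, -1.0]])
design = np.array([2.0, 0.5])

grad = fd_gradient(lambda d: objective(d, points), design)
print(grad)
```

A gradient like this is what an optimizer would consume at each step of a design loop, with the surrogate replacing the full solver inside the loop.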

The authors proved that neural networks don't need to think in meshes to understand physics on complex geometries. By encoding geometry implicitly and using hypernetworks to condition predictions on design, they've created surrogates that are truly discretization independent. Visit EmergentMind.com to explore this paper further and create your own research video presentations.