Unlocking the Geometry of Data with Graph Neural Networks

This presentation provides a technical overview of Graph Neural Network (GNN) architectures, exploring how they extend deep learning to non-Euclidean data like social networks and molecular structures. It covers the core mathematical principles of message passing, architectural variants like GCNs and GATs, and cutting-edge developments in automated design and high-performance scaling.

Script

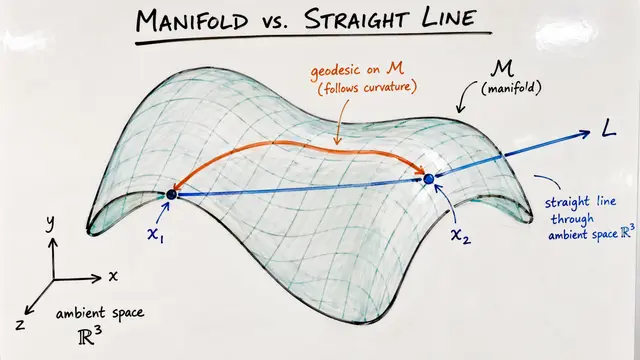

What if the most important patterns in your data aren't hidden in a grid of pixels, but in the complex web of relationships between individual points? By extending deep learning to non-Euclidean domains, Graph Neural Networks allow us to analyze everything from social circles to molecular bonds with mathematical precision.

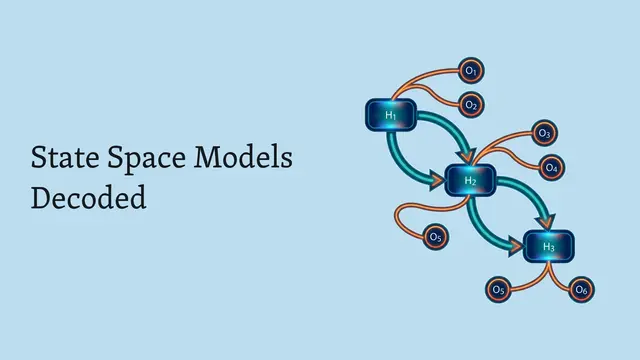

Building on this concept, every canonical GNN layer operates through two primary stages: aggregating messages from a node's neighborhood and updating that node's individual state. This structure guarantees permutation equivariance, meaning that relabeling the nodes simply relabels the outputs in the same way, so the network treats the graph consistently regardless of how you label the nodes.
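To make the two stages concrete, here is a minimal Python sketch of a single layer on scalar node features. The function name `message_passing_layer`, the sum aggregator, the `tanh` update, and the weights `w_self` and `w_neigh` are illustrative assumptions, not a specific published architecture.

```python
import math

def message_passing_layer(adj, features, w_self, w_neigh):
    """One aggregate-then-update step with scalar node features.

    adj: dict mapping node -> list of neighbor nodes
    features: dict mapping node -> float
    """
    updated = {}
    for node, neighbors in adj.items():
        # Aggregate: a sum does not depend on the order of the neighbors.
        aggregated = sum(features[n] for n in neighbors)
        # Update: combine the node's own state with the aggregated message.
        updated[node] = math.tanh(w_self * features[node] + w_neigh * aggregated)
    return updated

# A 4-node path graph: 0 - 1 - 2 - 3
adj = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
x = {0: 1.0, 1: 0.5, 2: -0.5, 3: 2.0}
out = message_passing_layer(adj, x, w_self=0.8, w_neigh=0.3)

# Relabel the nodes with a permutation and run the same layer again.
perm = {0: 2, 1: 0, 2: 3, 3: 1}
adj_p = {perm[u]: [perm[v] for v in nbrs] for u, nbrs in adj.items()}
x_p = {perm[u]: val for u, val in x.items()}
out_p = message_passing_layer(adj_p, x_p, w_self=0.8, w_neigh=0.3)

# Equivariance: the output on the relabeled graph is the relabeled output.
equivariant = all(math.isclose(out_p[perm[u]], out[u]) for u in adj)
print(equivariant)
```

The check at the end is the permutation equivariance property in miniature: shuffling the labels before the layer gives the same result as shuffling them after.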

To implement these layers, we see two dominant paths: Selection GNNs that use graph filters modeled as polynomials, and Aggregation GNNs that utilize signal diffusion to extract node trajectories for classical processing.
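The difference between the two paths can be sketched in a few lines of Python. This assumes a dense adjacency matrix as the graph shift operator `S`; the helper names `shift`, `polynomial_filter`, and `diffusion_sequence` and the filter taps are hypothetical choices for illustration only.

```python
def shift(S, x):
    """Apply the graph shift operator S (a dense adjacency matrix) to signal x."""
    n = len(x)
    return [sum(S[i][j] * x[j] for j in range(n)) for i in range(n)]

def polynomial_filter(S, x, taps):
    """Selection-style filter: y = sum_k taps[k] * S^k x, defined on every node."""
    y = [0.0] * len(x)
    z = list(x)  # z holds S^k x, starting from k = 0
    for h in taps:
        y = [yi + h * zi for yi, zi in zip(y, z)]
        z = shift(S, z)
    return y

def diffusion_sequence(S, x, node, length):
    """Aggregation-style view: the trajectory [x, Sx, S^2 x, ...] seen at one node."""
    seq, z = [], list(x)
    for _ in range(length):
        seq.append(z[node])
        z = shift(S, z)
    return seq

# Undirected 4-cycle: 0 - 1 - 2 - 3 - 0
S = [
    [0, 1, 0, 1],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [1, 0, 1, 0],
]
x = [1.0, 0.0, 0.0, 0.0]  # a unit impulse at node 0

y = polynomial_filter(S, x, taps=[1.0, 0.5, 0.25])
seq = diffusion_sequence(S, x, node=0, length=3)
print(y, seq)
```

In the selection view the output `y` lives on the graph itself, while in the aggregation view `seq` is an ordinary ordered sequence at a single node, which is what makes it suitable for classical processing such as a regular convolution.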

Now let us look at the theoretical limits of what these architectures can actually learn.

The discriminative power of a GNN is fundamentally linked to the Weisfeiler-Lehman (WL) test. Standard message passing is at most as powerful as the 1-WL test, so it cannot, for example, tell two disjoint triangles apart from a single six-node cycle; higher-order architectures overcome this by operating on tuples of nodes.
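A small Python sketch of 1-WL color refinement shows this limit on a classic pair of graphs: two disjoint triangles versus one six-node cycle. The function names `wl_color_histogram` and `count_triangles` are hypothetical, and using nested tuples as colors is a simplification chosen so that colors are directly comparable across graphs.

```python
from collections import Counter
from itertools import combinations

def wl_color_histogram(adj, rounds=3):
    """1-WL color refinement; returns the multiset of final node colors."""
    colors = {v: 0 for v in adj}  # every node starts with the same color
    for _ in range(rounds):
        # New color: own color plus the sorted multiset of neighbor colors.
        colors = {
            v: (colors[v], tuple(sorted(colors[u] for u in adj[v])))
            for v in adj
        }
    return Counter(colors.values())

def count_triangles(adj):
    """Brute-force triangle count over all node triples."""
    return sum(
        1
        for a, b, c in combinations(sorted(adj), 3)
        if b in adj[a] and c in adj[a] and c in adj[b]
    )

two_triangles = {0: [1, 2], 1: [0, 2], 2: [0, 1],
                 3: [4, 5], 4: [3, 5], 5: [3, 4]}
hexagon = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}

same_colors = wl_color_histogram(two_triangles) == wl_color_histogram(hexagon)
print(same_colors, count_triangles(two_triangles), count_triangles(hexagon))
```

Every node in both graphs has exactly two neighbors, so refinement never separates them, even though one graph contains two triangles and the other contains none.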

Beyond expressiveness, we achieve stability by controlling the Lipschitz properties of the filters, ensuring that small deformations in the graph do not lead to wildly different outputs.
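As a rough numerical illustration of what stability means here, the Python sketch below applies a polynomial graph filter to a graph and to a slightly rescaled copy, then measures how far the outputs drift apart. The perturbation model (scaling every edge weight by `1 + eps`), the filter taps, and the helper names `apply_filter` and `output_change` are assumptions made for the example; it does not implement any particular stability bound.

```python
import math

def apply_filter(S, x, taps):
    """y = sum_k taps[k] * S^k x for a dense shift operator S."""
    n = len(x)
    y, z = [0.0] * n, list(x)
    for h in taps:
        y = [yi + h * zi for yi, zi in zip(y, z)]
        z = [sum(S[i][j] * z[j] for j in range(n)) for i in range(n)]
    return y

def output_change(S, x, taps, eps):
    """Euclidean distance between the filter outputs on S and on (1 + eps) * S."""
    S_pert = [[w * (1.0 + eps) for w in row] for row in S]
    y = apply_filter(S, x, taps)
    y_pert = apply_filter(S_pert, x, taps)
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, y_pert)))

# Undirected 4-cycle with unit edge weights
S = [
    [0.0, 1.0, 0.0, 1.0],
    [1.0, 0.0, 1.0, 0.0],
    [0.0, 1.0, 0.0, 1.0],
    [1.0, 0.0, 1.0, 0.0],
]
x = [1.0, 0.0, 0.0, 0.0]
taps = [1.0, 0.5, 0.25]

small = output_change(S, x, taps, eps=0.01)
large = output_change(S, x, taps, eps=0.10)
print(small, large)
```

A stable filter keeps the ratio of output change to perturbation size bounded, so shrinking `eps` shrinks the drift in the output accordingly.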

Since manually designing these networks is difficult, researchers use Neural Architecture Search to automatically discover effective aggregators and channel capacities, as seen in systems like Ladder-GNN.

Scaling these designs for the real world requires overcoming memory bottlenecks caused by sparse adjacency, leading to innovations like FlowGNN for hardware acceleration and D3-GNN for streaming data.

These advancements have led to tangible results, from optimizing wireless network signals to creating explainable models that convert neural patterns into human-readable decision trees.

As we continue to refine how machines interpret the connectivity of our world, GNNs stand as the bridge between raw structure and actionable intelligence. Please go to EmergentMind.com to learn more. Graph Neural Networks are transforming our understanding of complex systems by turning the hidden geometry of data into a powerful computational advantage.