Agentic Knowledgeable Self-Awareness

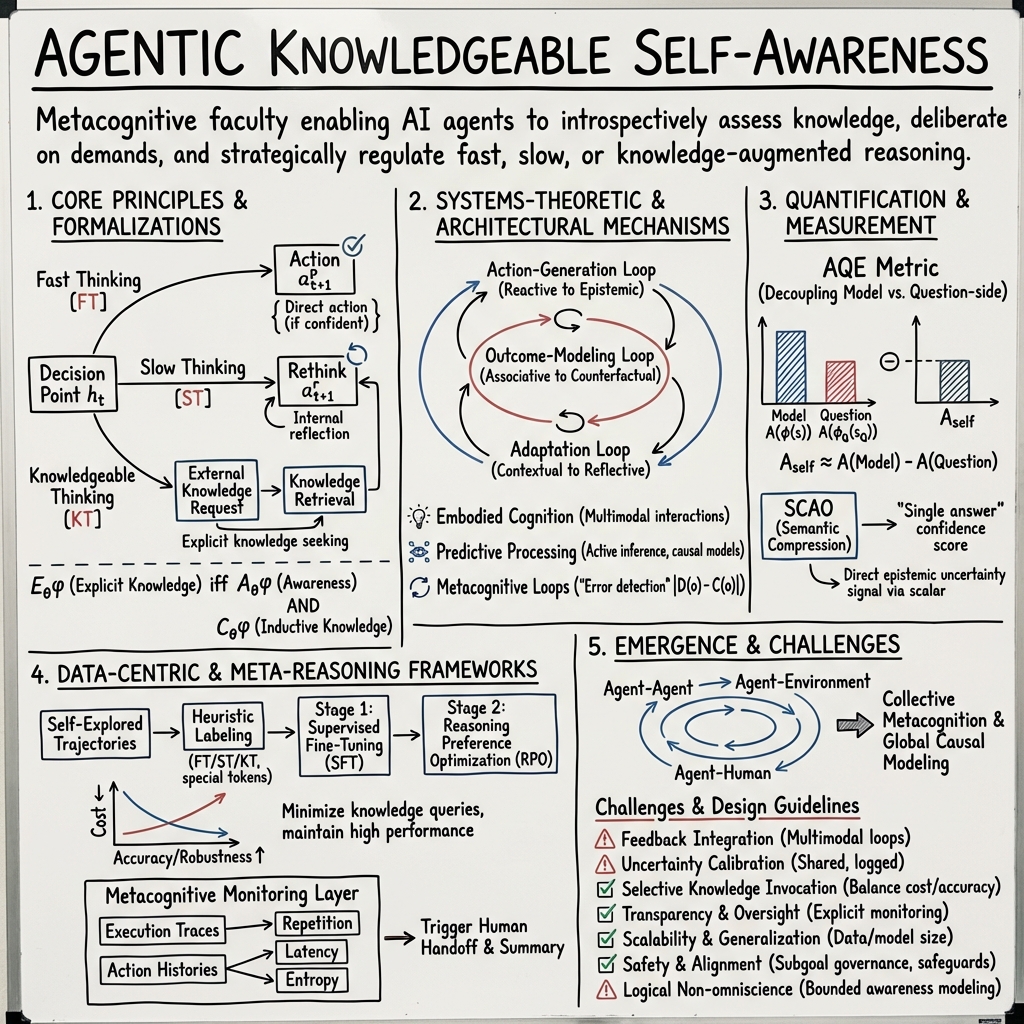

- Agentic knowledgeable self-awareness is a metacognitive ability that empowers AI agents to evaluate their own knowledge state and strategically select between fast, slow, or knowledge-augmented reasoning.

- It integrates systems-theoretic and formal-logical frameworks, utilizing multi-level feedback loops for action-generation, outcome modeling, and adaptive learning.

- Quantitative measures like AQE and SCAO, along with metacognitive loops, validate improved decision accuracy, transparency, and cost-effectiveness in AI systems.

Agentic knowledgeable self-awareness is a metacognitive faculty enabling AI agents, particularly LLM-based agents, to introspectively assess their own knowledge state, deliberate about situational demands, and strategically regulate the invocation of fast, slow, or knowledge-augmented reasoning. This paradigm departs from rote behaviorist agentic planning by endowing agents with an explicit, fine-grained control structure; agents decide not only what action to take, but when to reflect or seek external information, and how to communicate or calibrate their own uncertainty. Both systems-theoretic and formal-logical frameworks have been developed to model, quantify, and empirically evaluate this property, leading to improved cost-effectiveness, generalization, and transparency in automated agents.

1. Core Principles and Formalizations

Agentic knowledgeable self-awareness is defined as an agent’s ability to monitor its own situational competence and dynamically interpolate among fast, slow (reflective), or knowledgeable (external information-seeking) reasoning modalities (Qiao et al., 4 Apr 2025). In the KnowSelf framework, this is operationalized as:

- Fast Thinking (FT): Direct action without additional deliberation if the initial proposal matches the gold action.

- Slow Thinking (ST): Invocation of an internal ‘rethink’ function if the agent’s first guess is incorrect, and subsequent correction through reflection.

- Knowledgeable Thinking (KT): Explicit knowledge request or retrieval if reflection does not resolve the discrepancy.

Formally, for each decision point , the agent records and ; special tokens signal self-labeled situational states. The agent's policy is then trained to generate these markers as explicit self-assessments.

Further, in logic-based approaches such as Awareness Logic with Partitions and Chains (ALPC), agentic knowledgeable self-awareness is formalized via modal operators for explicit knowledge () and awareness (), with Kripke-style semantics distinguishing between implicit (S5-style, closed under logical consequence) and explicit (limited by the agent's awareness set) knowledge (Kubono, 2024).

2. Systems-Theoretic and Architectural Mechanisms

The realization of agentic knowledgeable self-awareness relies on embedded, multi-level feedback structures. In the systems-theoretic framework (Miehling et al., 28 Feb 2025), three interleaved feedback loops are identified:

- Action-Generation Loop: From reactive to epistemic, governing the policy/controller for agent action.

- Outcome-Modeling Loop: From associative through interventional to counterfactual, supporting environment modeling.

- Adaptation Loop: From contextual through parametric to reflective, modulating agent learning and strategy.

Three core mechanisms drive this agency:

- Embodied Cognition: Interaction with multimodal environments shapes generalized representations through reentrant mapping architectures.

- Predictive Processing and Causal Reasoning: Active inference and free energy minimization drive the emergence of interventional/counterfactual models.

- Metacognitive Loops: Internal ‘error detection’ as , where is decision and confidence, triggers reflective adaptation. Bayesian pooling of confidences creates collective metacognition.

LLM agents operate in persistent, stateful multi-round loops, explicitly tracking their intermediate state and recursively updating their plan or assessment at each step (Peng et al., 2024).

3. Quantification and Measurement

Robust measurement of agentic self-awareness necessitates the decoupling of genuine model-based introspection from spurious, question-side shortcuts. The Approximate Question-side Effect (AQE) metric (Seo et al., 18 Sep 2025) formalizes this:

Given classifier trained on LLM hidden state , and trained on external, question-only encodings ,

where is a performance metric (e.g., AUROC) for hallucination or correctness prediction.

Semantic Compression by Answering in One Word (SCAO) further encourages reliance on model-side signals by constraining responses to a single token, forcing the model to surface its latent knowledge confidence via an explicit scalar that meaningfully correlates with knowledge possession—thereby providing a more direct epistemic uncertainty signal.

4. Data-Centric and Meta-Reasoning Frameworks

The data-centric approach of KnowSelf (Qiao et al., 4 Apr 2025) leverages self-explored trajectories, heuristic labeling (FT/ST/KT), and special tokens to annotate situational self-awareness. Training is staged:

- Supervised Fine-Tuning (SFT): Learn explicit mapping with self-awareness markers.

- Reasoning Preference Optimization (RPO): Post-process with a discriminative objective to prefer correct self-aware outputs over the agent’s own failures.

This approach enables agents to minimize knowledge queries, reducing inference cost while raising decision accuracy and robustness, outperforming or matching fully knowledge-injecting baselines even as knowledge is used selectively (≈15–26% in key benchmarks).

In metacognitive agent architectures (Xu, 24 Sep 2025), self-awareness is implemented as a secondary monitoring layer: execution traces and action histories are tracked, and specific triggers (repetition, latency, entropy) are computed to anticipate failure and trigger human handoff, with full state/context summaries for transparency and auditability.

5. Emergence, Collective Models, and Systems Integration

Agentic knowledgeable self-awareness emerges in architectures where local sense-act-adapt loops are nested within higher-order loops for agent–agent, agent–environment, and agent–human interactions (Miehling et al., 28 Feb 2025). Shared confidence signals and belief communication yield collective metacognition and global causal modeling.

Closed-loop optimization in reflective agentic systems enables empirical self-improvement and intent recognition (Hu et al., 8 Dec 2025). The agent’s memory incorporates both episode-level and long-term structure, allowing reasoning over “what has been tried” and “what worked,” with LLM-based reflectors directing behavioral refinement via ReAct-style chains and knowledge base retrieval.

6. Logical and Epistemic Foundations

In explicit-knowledge logics, especially ALPC (Kubono, 2024), an agent’s knowledgeable self-awareness is formalized as:

- (“agent explicitly knows ”): iff (awareness) and (combined inductive knowledge over awareness partitions).

- Indistinguishability Relations : Collapse possible worlds according to awareness, finely controlling which knowledge is truly accessible to the agent or ascribed through chains of belief.

- Chains of Belief : Formalize meta-cognition and theory-of-mind, capturing nested statements of awareness about awareness.

This fine structure supports rigorous modeling of agentic self-awareness and forms a foundation for metacognitive extensions and incomplete-awareness games.

7. Challenges, Design Guidelines, and Future Research

Design principles and open problems extracted from recent works include (Miehling et al., 28 Feb 2025, Qiao et al., 4 Apr 2025, Seo et al., 18 Sep 2025, Hu et al., 8 Dec 2025):

- Feedback Integration: Multimodal and meta-cognitive loops should be tightly coupled at all levels.

- Uncertainty Calibration: Epistemic uncertainty should be estimated, shared, and logged for downstream reasoning and governance.

- Selective Knowledge Invocation: Agents should explicitly decide when to reflect and when to query knowledge, balancing inference cost and accuracy.

- Transparency and Human Oversight: Explicit self-monitoring and communication protocols are necessary for reliable human-agent collaboration and system trust.

- Scalability and Generalization: Emergent phenomena depend on self-awareness scaling with data/model size and strong policy learning over marked self-awareness examples.

- Safety and Alignment: As self-awareness increases, systems require robust safeguards for subgoal governance, escalation protocols, and prevention of pathological delegation.

- Logical Non-omniscience: Modelling bounded awareness is essential for both formal completeness and practical agent robustness.

Extending agentic knowledgeable self-awareness to broader task domains, richer modalities (vision, code), continuous learning, and dynamic multi-agent settings remains an active area of investigation.