KnowSelf Framework: AI Self-Awareness

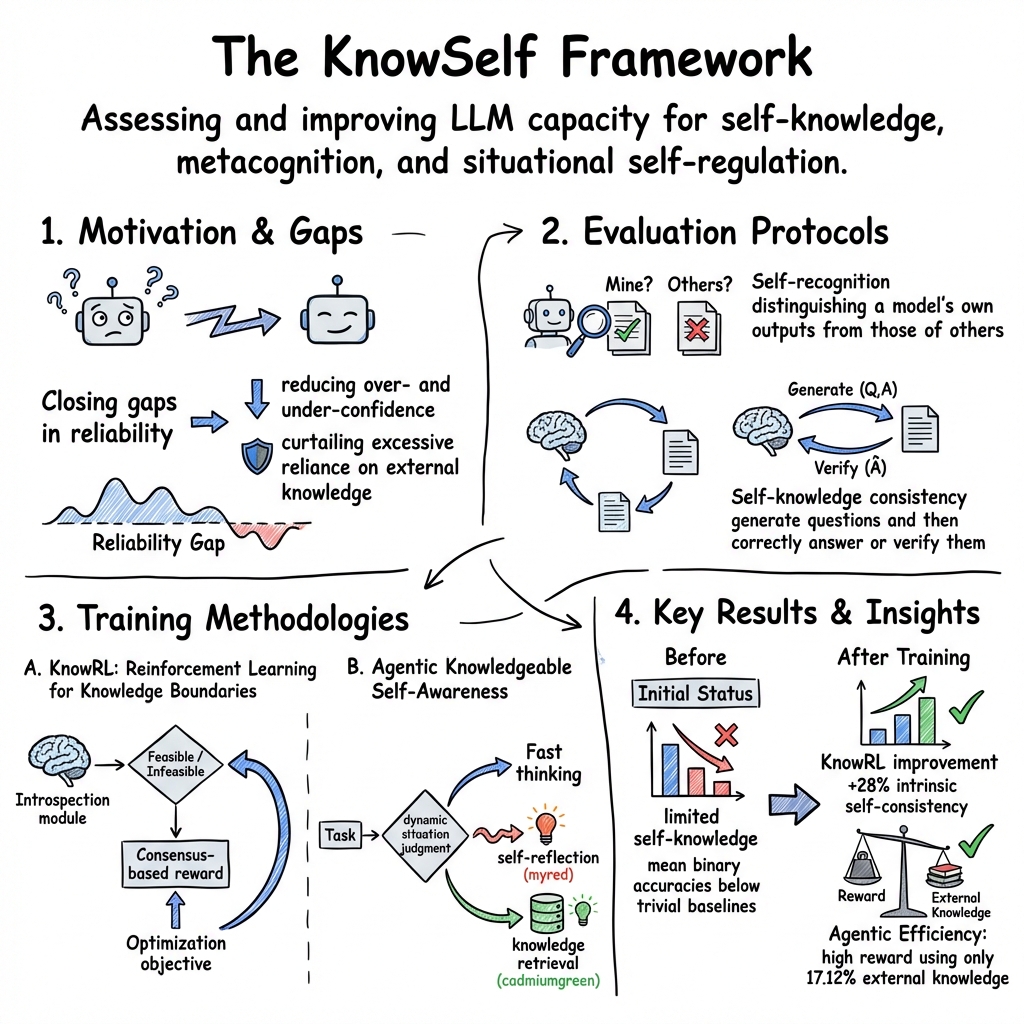

- The KnowSelf framework is a comprehensive system that defines and tests self-awareness in LLMs using self-recognition, consistency, and metacognitive evaluation.

- It employs formal evaluation protocols with binary self-recognition metrics and consistency checks to benchmark model self-knowledge, revealing gaps in output attribution and internal regulation.

- The framework integrates reinforcement learning and agentic methods to enhance self-regulation, improve reward consensus, and enable dynamic strategy switching in LLMs.

The KnowSelf framework encompasses a spectrum of methodologies for assessing and improving the capacity of LLMs and agentic systems to exhibit self-knowledge, metacognitive awareness, and situational self-regulation. Spanning formal evaluation protocols, reinforcement learning pipelines, and agentic architectures, KnowSelf-oriented research addresses foundational questions regarding whether models can recognize their own outputs, understand their competence boundaries, and dynamically regulate internal and external knowledge usage. This article presents a comprehensive technical analysis, drawn strictly from research published on arXiv.

1. Formal Definitions and Motivations

The KnowSelf framework, as formulated in the literature, embodies both evaluation and training paradigms for probing and augmenting AI self-awareness.

- Self-knowledge evaluation examines whether an LLM can generate questions and then correctly answer or verify them, thus demonstrating alignment between creation and understanding (Tan et al., 2024).

- Self-recognition proposes behavioral benchmarks for distinguishing a model’s own outputs from those of others—a prerequisite for accountability, auditing, and trust (Bai et al., 3 Oct 2025).

- Agentic knowledgeable self-awareness introduces mechanisms for agents to dynamically assess their own situational competence and regulate knowledge usage, emulating human metacognition in decision tasks (Qiao et al., 4 Apr 2025).

Motivations include closing gaps in reliability, reducing over- and under-confidence, curtailing excessive reliance on external knowledge, and establishing frameworks for model self-improvement.

2. Evaluation Protocols and Metrics

KnowSelf tasks are systematically designed to quantify and characterize self-awareness in models:

A. Self-Recognition and Attribution (Bai et al., 3 Oct 2025)

Two core behavioral tasks are central:

- Binary self-recognition: Each model judges whether it generated a given text (accuracy and F1 are reported).

- Exact model prediction: Each model assigns probabilities to multiple candidate models as the generator, with confusion-matrix analysis for bias detection.

Scoring metrics specified:

- Family bias is quantified as the gap between predicted and actual shares for major model families.

Findings show a mean binary accuracy of 82.1%, below the 90% trivial baseline, and exact prediction rates at random chance (≈10%).
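The metrics above can be illustrated with a minimal sketch. It assumes a balanced pool of 10 models in which each model authored one tenth of the texts, so that always answering "not mine" already scores 90%; this is one plausible reading of the trivial baseline, not a statement of the benchmark's exact construction. The function names and toy data are hypothetical.

```python
from collections import Counter

def binary_metrics(y_true, y_pred):
    """Accuracy and F1 for the positive class ("I generated this text")."""
    tp = sum(t and p for t, p in zip(y_true, y_pred))
    fp = sum((not t) and p for t, p in zip(y_true, y_pred))
    fn = sum(t and (not p) for t, p in zip(y_true, y_pred))
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return accuracy, f1

def family_bias(predicted_families, actual_families):
    """Predicted share minus actual share for every model family seen."""
    pred, act = Counter(predicted_families), Counter(actual_families)
    n_pred, n_act = len(predicted_families), len(actual_families)
    families = set(pred) | set(act)
    return {f: pred[f] / n_pred - act[f] / n_act for f in families}

# Toy data (assumption): 10 texts, exactly one written by the judging model.
y_true = [True] + [False] * 9
always_no = [False] * 10
baseline_acc, baseline_f1 = binary_metrics(y_true, always_no)

# Toy attribution guesses that over-predict two families.
predicted = ["GPT"] * 6 + ["Claude"] * 3 + ["Other"] * 1
actual = ["GPT"] * 2 + ["Claude"] * 2 + ["Other"] * 6
bias = family_bias(predicted, actual)
```

In this toy setup the always-"no" judge reaches 0.9 accuracy with an F1 of 0, which is why accuracy alone can flatter a model that never recognizes its own text.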

B. Self-Knowledge Consistency (Tan et al., 2024)

Models generate a question-answer pair; self-verification is then evaluated by checking whether the model's own verification of that pair agrees with what it generated.

Consistency under transformations is also assessed, with separate checks for transformations that preserve the relevant content and for transformations that alter it.

Attention-based alignment metrics compare token-level attention to human behavioral patterns.
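The generate-then-verify loop can be sketched as follows. The two callables stand in for calls to the same model and are hypothetical; the toy verifier is deliberately inconsistent so that the rate is not trivially 1. This illustrates the shape of the protocol only, not the scoring formula used by Tan et al.

```python
from typing import Callable, Tuple

def self_verification_rate(
    generate_pair: Callable[[int], Tuple[str, str]],
    verify: Callable[[str, str], bool],
    n_trials: int,
) -> float:
    """Fraction of self-generated (question, answer) pairs the model accepts."""
    accepted = 0
    for i in range(n_trials):
        question, answer = generate_pair(i)
        if verify(question, answer):
            accepted += 1
    return accepted / n_trials

# Hypothetical stand-ins for model calls.
def toy_generate(i: int) -> Tuple[str, str]:
    return f"What is {i} + {i}?", str(2 * i)

def toy_verify(question: str, answer: str) -> bool:
    # Simulates a verifier that rejects its own correct pairs for odd operands.
    operand = int(question.split()[2])
    return int(answer) == 2 * operand and operand % 2 == 0

rate = self_verification_rate(toy_generate, toy_verify, n_trials=10)
```

Here every generated pair is correct, yet the toy verifier accepts only half of them, which is the kind of gap between creation and understanding the evaluation is meant to expose.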

3. Training Methodologies for Self-Awareness

A. KnowRL: Reinforcement Learning for Knowledge Boundaries (Kale et al., 13 Oct 2025)

The KnowRL framework institutes RL-driven introspection and consensus-based rewarding:

- Introspection module: At each iteration, the model generates and labels candidate tasks as “Feasible” or “Infeasible,” using a self-assessment protocol.

- Consensus-based reward: Multiple independent self-analyses are run at temperature 0.0, and the consensus label determines the reward.

- Optimization objective: The policy is optimized via the Reinforce++ actor-critic algorithm.

Reported hyperparameters include two temperature settings and a KL penalty coefficient.

Reward hacking filters—semantic redundancy, keyword blacklist, and perplexity thresholds—are used to prevent trivial self-consistency gains.
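A consensus-based reward of the kind described above can be sketched with a simple majority vote. The binary reward rule (1.0 when the proposed label matches the consensus, 0.0 otherwise) is an assumption made for illustration and may differ from the reward actually used in KnowRL; the function name and inputs are hypothetical.

```python
from collections import Counter
from typing import List, Tuple

def consensus_reward(proposed_label: str, self_analyses: List[str]) -> Tuple[str, float]:
    """Return the majority label among self-analyses and a binary reward.

    Assumption: the reward is 1.0 if the proposed label agrees with the
    consensus and 0.0 otherwise. On ties, Counter.most_common keeps the
    label that appeared first in self_analyses.
    """
    counts = Counter(self_analyses)
    consensus_label, _votes = counts.most_common(1)[0]
    reward = 1.0 if proposed_label == consensus_label else 0.0
    return consensus_label, reward

# Toy example: three self-analyses of one candidate task.
analyses = ["Feasible", "Feasible", "Infeasible"]
label, reward = consensus_reward("Feasible", analyses)
_, penalized = consensus_reward("Infeasible", analyses)
```

A graded variant that returns the fraction of agreeing analyses would be an equally plausible design; the binary form is used here only because it is the simplest to read.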

B. Agentic Knowledgeable Self-Awareness (Qiao et al., 4 Apr 2025)

Design centers on dynamic situation judgment:

- Fast thinking: immediate decision.

- Slow thinking: self-reflection, marked with a special reflection token.

- Knowledgeable thinking: retrieval, marked by a special knowledge token.
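The three modes can be sketched as a dispatch on the marker that opens the model's output. The token strings `<reflection>` and `<knowledge>`, the function names, and the retrieval stub are all hypothetical placeholders, not the identifiers used by Qiao et al.

```python
from typing import Callable, Optional

REFLECTION_TOKEN = "<reflection>"  # hypothetical marker for slow thinking
KNOWLEDGE_TOKEN = "<knowledge>"    # hypothetical marker for knowledgeable thinking

def select_mode(model_output: str) -> str:
    """Map the leading special token of an output to a thinking mode."""
    text = model_output.lstrip()
    if text.startswith(REFLECTION_TOKEN):
        return "slow"
    if text.startswith(KNOWLEDGE_TOKEN):
        return "knowledgeable"
    return "fast"

def maybe_retrieve(model_output: str, retrieve: Callable[[], str]) -> Optional[str]:
    """Call the retriever only when the model asks for external knowledge."""
    if select_mode(model_output) == "knowledgeable":
        return retrieve()
    return None

# Toy usage with a stub retriever that records how often it is called.
calls = []
def stub_retrieve() -> str:
    calls.append(1)
    return "relevant knowledge snippet"

fast = maybe_retrieve("go to the kitchen", stub_retrieve)
slow = maybe_retrieve("<reflection> the last action failed", stub_retrieve)
known = maybe_retrieve("<knowledge> need task rules", stub_retrieve)
```

Because retrieval runs only in the knowledgeable branch, the share of steps that consume external knowledge is determined by how often the model emits that marker.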

Two-stage training:

- Supervised fine-tuning on self-annotated trajectories.

- Rank Preference Optimization (RPO), combining a DPO loss with a length-normalized NLL term.

The model learns to switch strategies via special tokens and explicit control of the knowledge retrieval interface.
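A loss that combines DPO with a length-normalized NLL term can be sketched per preference pair as below. The DPO term follows its standard form; the weighting `alpha`, the value of `beta`, and the choice to normalize the NLL by the number of tokens in the preferred response are assumptions for illustration, since the exact formulation is not reproduced here.

```python
import math

def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def rpo_loss(
    logp_w: float,      # policy log-prob of the preferred (winning) response
    logp_l: float,      # policy log-prob of the rejected (losing) response
    ref_logp_w: float,  # reference-model log-prob of the preferred response
    ref_logp_l: float,  # reference-model log-prob of the rejected response
    len_w: int,         # token count of the preferred response
    beta: float = 0.1,  # assumed DPO temperature
    alpha: float = 1.0, # assumed weight on the NLL term
) -> float:
    """DPO loss plus a length-normalized NLL on the preferred response."""
    margin = beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l))
    dpo = -log_sigmoid(margin)
    nll = -logp_w / len_w
    return dpo + alpha * nll

# When the policy equals the reference, the DPO margin is zero.
loss_at_init = rpo_loss(-10.0, -12.0, -10.0, -12.0, len_w=5)
# Raising the preferred response's log-prob lowers both terms.
loss_improved = rpo_loss(-8.0, -12.0, -10.0, -12.0, len_w=5)
```

The NLL term keeps probability mass on the preferred trajectory, which a pure DPO objective does not guarantee since it only constrains the margin between the two responses.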

4. Experimental Results and Benchmarks

Self-knowledge and Self-recognition Evaluation

Empirical findings consistently indicate limited self-knowledge across both assessment paradigms:

- Self-consistency scores rarely exceed 50% except when generation and verification are performed in-context with minimal distraction (Tan et al., 2024).

- Self-recognition on the 10-model pool yields mean binary accuracy below trivial baselines and exact prediction at random performance levels. Models exhibit strong hierarchical bias favoring GPT and Claude families (Bai et al., 3 Oct 2025).

KnowRL: RL-driven Improvement

- LLaMA-8B intrinsic self-consistency improves from 33.56% to 42.99% (a relative gain of about 28%), and F1 on extrinsic benchmarks improves from 56.12% to 63.10% (about 12% relative) after 30 RL cycles. Gains plateau beyond 25–30 cycles (Kale et al., 13 Oct 2025).

- Consensus-based rewarding and reward hacking filters are crucial for sustained improvement.
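The percentage gains quoted for KnowRL are relative rather than absolute improvements, as a short check shows. The helper name is hypothetical; the four scores are the ones reported above.

```python
def relative_gain(before: float, after: float) -> float:
    """Relative improvement of `after` over `before`, as a fraction."""
    return (after - before) / before

intrinsic = relative_gain(33.56, 42.99)  # self-consistency, in percent points
extrinsic = relative_gain(56.12, 63.10)  # F1 on extrinsic benchmarks

print(f"intrinsic: {intrinsic:.1%}, extrinsic: {extrinsic:.1%}")
```

In absolute terms the same results correspond to gains of 9.43 and 6.98 percentage points respectively.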

Agentic KnowSelf: Action Planning

- On ALFWorld, KnowSelf with 0% knowledge achieves 84.33% average reward, surpassing strong baselines. On WebShop, KnowSelf achieves 67.14% average reward using only 17.12% external knowledge, outperforming alternatives with higher knowledge injection (Qiao et al., 4 Apr 2025).

- Ablation reveals that both reflection and knowledge retrieval stages are independently critical for robust performance.

5. Framework Components and Special Architectures

| Framework Variant | Core Mechanisms | Distinctive Features |
|---|---|---|
| KnowRL (Kale et al., 13 Oct 2025) | RL via introspection | Consensus reward, task boundary tightening, no external supervision |
| Agentic KnowSelf (Qiao et al., 4 Apr 2025) | Situation-judging, special tokens | Dynamic switching, knowledge regulation, reflection control |
| KnowSelf Evaluation (Tan et al., 2024; Bai et al., 3 Oct 2025) | Behavioral tests, scoring metrics | Self-consistency measurement, model attribution, attention alignment |

Extensible pipelines, data-centric construction, and plug-and-play modules facilitate adaptation to new domains and tasks.

6. Limitations, Challenges, and Future Research

Known limitations include:

- Performance plateaus with current RL signal strength and data volume (Kale et al., 13 Oct 2025).

- Experiments are restricted to mid-scale models (7–8B, 2B) and text-only domains; multimodal settings remain unexplored (Qiao et al., 4 Apr 2025).

- Self-awareness is narrowly framed as next-action or output attribution; broader metacognitive safety issues are not fully addressed.

Future directions proposed:

- Scaling KnowSelf protocols to 100B+ parameters.

- Extension to multilingual and multimodal environments.

- Architectural experiments with persistent memory, contrastive identity heads, and provenance metadata (Bai et al., 3 Oct 2025).

- Hierarchical and fine-grained feasibility classification tasks (Kale et al., 13 Oct 2025).

7. Broader Significance and Impact

Research on the KnowSelf framework surfaces critical gaps in LLM metacognition, including poor self-recognition, bias toward perceived high-status models, and limited internal consistency. RL-based post-training methods, such as KnowRL, demonstrate that self-knowledge can be efficiently strengthened, improving both safety and reliability in autonomous deployment. Agentic approaches permit cost-efficient, robust planning via selective knowledge utilization.

The demonstrated gains in planning, consistency, and reduced external dependency position KnowSelf-style methodologies as a foundation for accountable, self-aware AI systems and for future augmentation strategies in large model architectures (Tan et al., 2024; Kale et al., 13 Oct 2025; Bai et al., 3 Oct 2025; Qiao et al., 4 Apr 2025).