- The paper presents a three-tiered memory orchestration system that efficiently manages personalized persistent agents via adaptive, persona-driven scoring.

- The method achieves significant performance improvements, with experimental benchmarks showing up to 86.4% accuracy and an 86.5% reduction in latency compared to flat architectures.

- The work emphasizes dynamic memory redistribution and real-time persona evolution to enhance behavioral recall and system scalability in live multi-turn interactions.

Hierarchical Memory Orchestration for Personalized Persistent Agents: An Expert Review

Introduction and Motivation

Hierarchical Memory Orchestration (HMO), as introduced in "Hierarchical Memory Orchestration for Personalized Persistent Agents" (2604.01670), targets long-standing weaknesses in long-term memory management for AI agents built on (M)LLMs. Existing frameworks scale poorly: archives grow linearly, prioritization is flat, retrieval is noisy, and latency budgets are tight on computationally limited platforms. The result is inconsistent behavioral recall, fragmented agent cognition, and diminished user trust in memory reliability. HMO reframes memory as an individualized, dynamically tiered system governed by a continuously evolving user persona, optimizing for downstream reasoning efficiency, retrieval precision, and storage tractability.

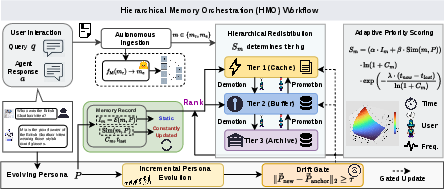

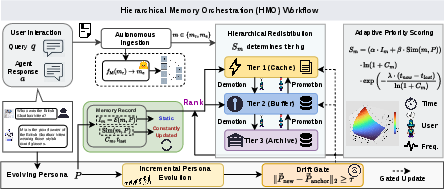

Figure 1: The system architecture and operational workflow of HMO.

Hierarchical System Architecture

HMO's architecture formalizes memory into a three-level hierarchy orchestrated by a persona-adaptive scoring module:

- Tier 1 (Cache): Fixed-size, low-latency context comprising both the S most recent conversational segments and the top K pivotal records—those most identity-aligned, as calculated through an MLLM-based initial scoring function and updated by real-time cosine similarity with the persona vector.

- Tier 2 (Buffer): Expandable intermediate layer consisting of H records with high cumulative utility/frequency that do not qualify for Tier 1. It serves as a secondary cache, absorbing high-priority historical knowledge and intercepting retrievals before archive access.

- Tier 3 (Archive): Complete, non-volatile ledger of all interactions, queried only on cache/buffer misses to maintain latency bounds.
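The lookup order across the three tiers can be illustrated with a minimal sketch. It is not the paper's implementation: the class name `TieredMemory`, the string keys, and exact-key lookup (standing in for semantic retrieval) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TieredMemory:
    """Toy three-tier store (hypothetical); keys and text payloads are illustrative."""
    cache: dict = field(default_factory=dict)    # Tier 1: small, low-latency
    buffer: dict = field(default_factory=dict)   # Tier 2: expandable intermediate layer
    archive: dict = field(default_factory=dict)  # Tier 3: complete ledger

    def ingest(self, key: str, text: str) -> None:
        # The archive records every interaction, so each new record lands there.
        self.archive[key] = text

    def lookup(self, key: str) -> Optional[tuple]:
        """Return (tier_name, text); the archive is consulted only on a miss."""
        for name, tier in (("cache", self.cache), ("buffer", self.buffer)):
            if key in tier:
                return name, tier[key]
        if key in self.archive:
            return "archive", self.archive[key]
        return None
```

In this sketch a hit in Tier 1 or Tier 2 short-circuits the search, which is the property that keeps archive access off the common path.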

Dynamic promotion and demotion across tiers are dictated by an adaptive score S_m that combines initial importance I_m, persona-memory semantic similarity Sim(m, P), contextual recall counts, and non-linear temporal decay. This ensures that temporal recency, behavioral alignment, and evidential utility jointly determine memory prioritization. Notably, exhaustive metadata updates are confined to the active tiers (Tiers 1 and 2) to avoid unnecessary recomputation.
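One way such a score could combine the four signals is sketched below. The review does not give the exact formula, so the multiplicative form, the stretched-exponential decay, the logarithmic recall boost, and every parameter name and default (`half_life_hours`, `shape`, the two thresholds) are assumptions chosen only for illustration. The sketch also ignores Tier 1's fixed size and its recent-segment slots.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def adaptive_score(importance, mem_vec, persona_vec, recalls, age_hours,
                   half_life_hours=72.0, shape=1.5):
    """Hypothetical S_m: importance x persona alignment x recall boost x decay."""
    alignment = (1.0 + cosine(mem_vec, persona_vec)) / 2.0  # map [-1, 1] to [0, 1]
    recall_boost = 1.0 + math.log1p(recalls)                # diminishing returns
    # Non-linear decay: equals 0.5 when age_hours == half_life_hours.
    decay = 0.5 ** ((age_hours / half_life_hours) ** shape)
    return importance * alignment * recall_boost * decay


def assign_tier(score, cache_threshold=0.6, buffer_threshold=0.3):
    """Threshold-based tier choice; the threshold values are assumed."""
    if score >= cache_threshold:
        return "cache"
    if score >= buffer_threshold:
        return "buffer"
    return "archive"
```

Under these assumptions, a record that matches the persona and is still fresh stays in the cache, while the same record drifts toward the buffer and then the archive as it ages without being recalled.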

Memory Lifecycle and Persona Evolution

The memory management algorithm comprises four concrete phases:

- Autonomous Ingestion: All agent-user exchanges are captured as memory segments. Specialized representations are optionally derived via content compression (for code, documentation) using an MLLM encoder, preserving fidelity for significant but non-standard traces.

- Contextual and Persona-Driven Scoring: Initial memory scoring is decoupled from static similarity, leveraging MLLM evaluators with structured, multi-dimensional criteria to favor behavioral markers and reasoning criticality.

- Hierarchical Redistribution: All active records are scored, with tier transitions based on score thresholds. Retrievals first employ context self-reflection before recursive tier searching.

- Persona Drift Adaptation: As the user's persona P evolves (measured in embedding space), a drift gate mechanism triggers re-evaluation only beyond a defined deviation threshold, optimizing the trade-off between semantic alignment and computational overhead.
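The drift gate in the final phase can be sketched as follows. This is a hedged illustration rather than the authors' mechanism: measuring drift as cosine distance from the persona vector used at the last re-evaluation, the class name `DriftGate`, and the default threshold are all assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


class DriftGate:
    """Hypothetical gate: trigger re-scoring only when the persona has moved enough."""

    def __init__(self, anchor, threshold=0.15):
        self.anchor = list(anchor)    # persona vector at the last re-evaluation
        self.threshold = threshold    # assumed cosine-distance threshold

    def should_rescore(self, persona):
        drift = 1.0 - cosine(self.anchor, persona)
        if drift > self.threshold:
            # Re-anchor so later drift is measured from the new persona.
            self.anchor = list(persona)
            return True
        return False
```

Small persona updates leave the gate closed, so active records keep their existing scores; only a shift beyond the threshold pays the cost of re-evaluation.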

Experimental Results and Benchmarks

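Evaluations were performed in both synthetic benchmark settings (LongMemEval-S/M, LoCoMo) and a system-in-the-loop environment (OpenClaw). The headline results are up to 86.4% accuracy and an 86.5% reduction in latency compared to flat architectures.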

User-Centric System Deployment

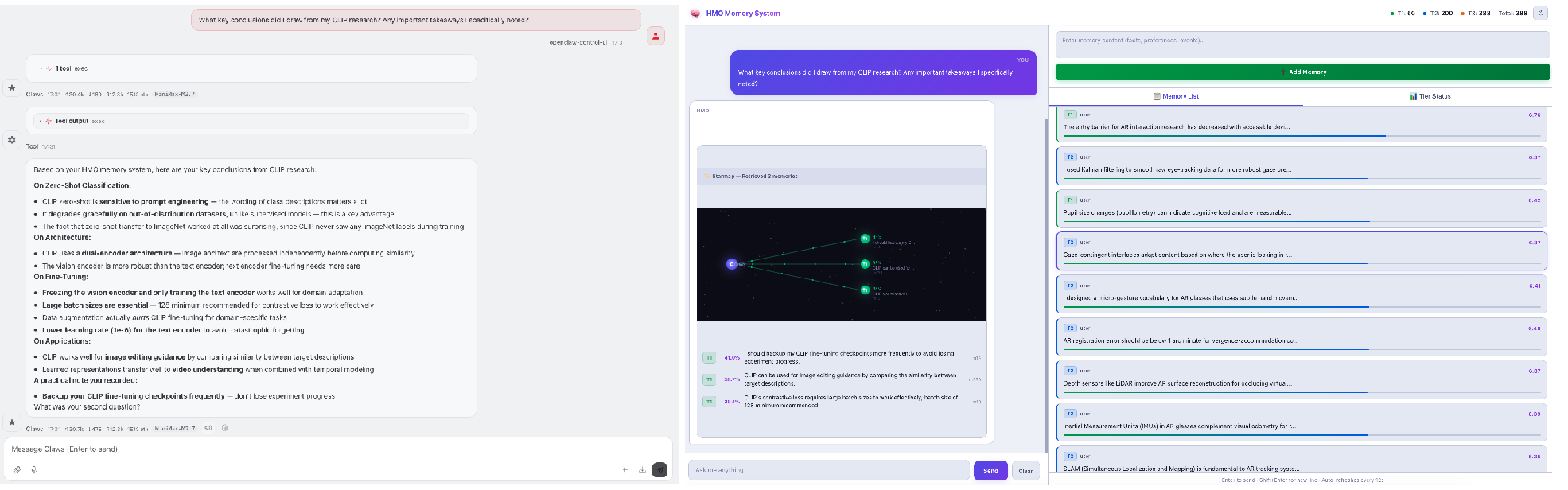

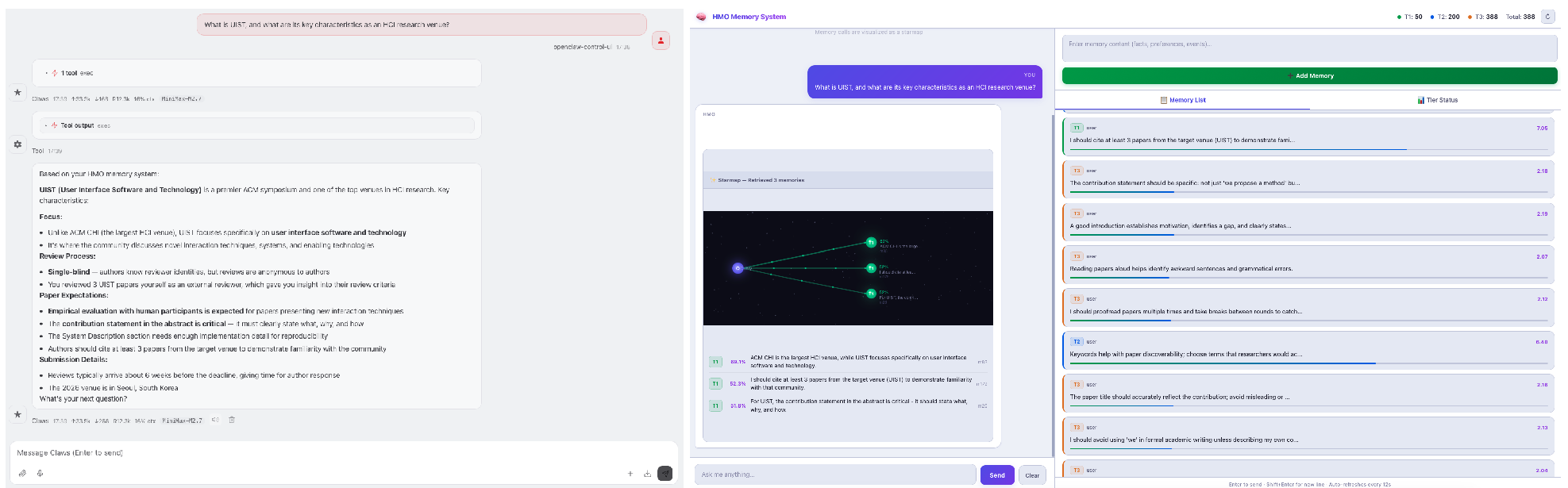

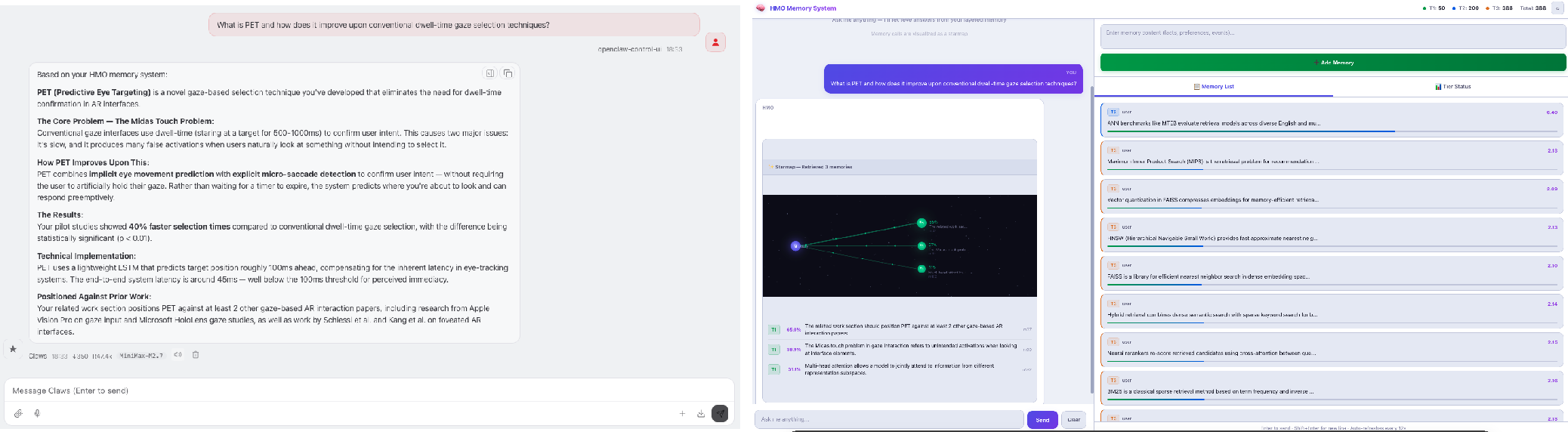

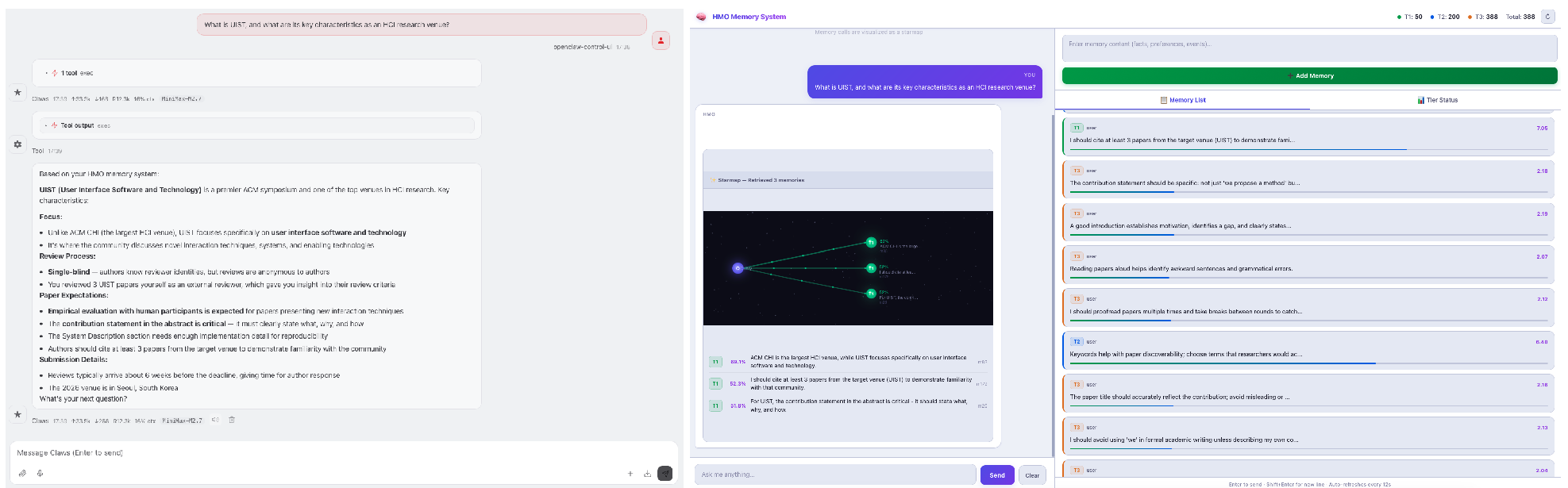

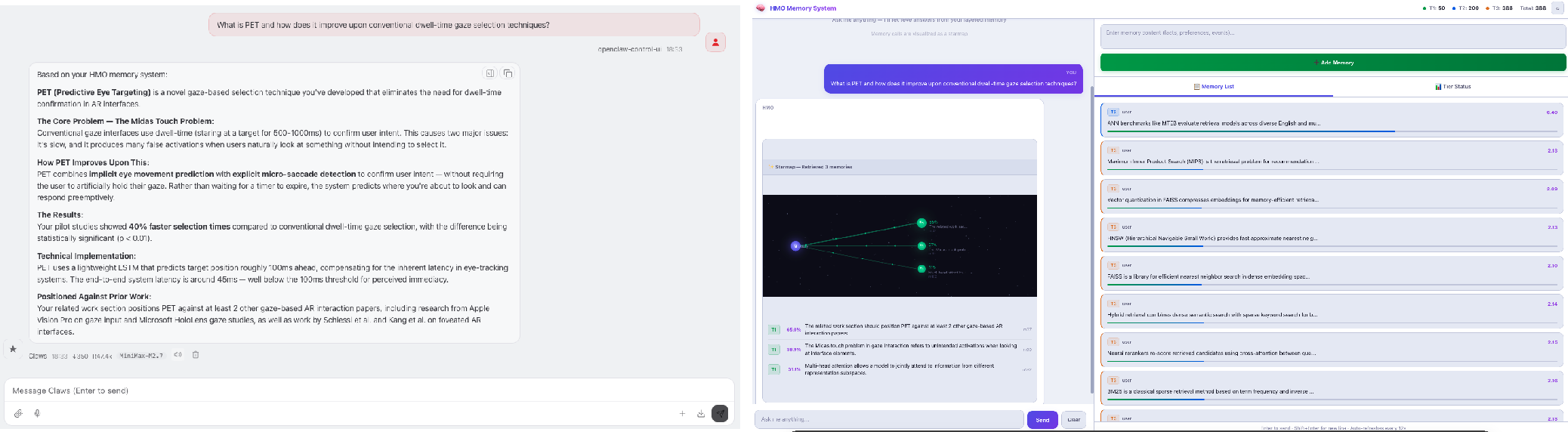

HMO is deployed natively into agentic ecosystems such as OpenClaw, where real-world task evaluations confirmed substantial reductions in reasoning latency and improved consistency of personalization during extended user engagement.

Figure 3: System interface demonstration (example 1).

Figure 4: System interface demonstration (example 2).

User feedback corroborates technical findings: agents leveraging HMO demonstrate more robust, context-appropriate recall of evolving preferences, in contrast to prior flat/graph-based systems.

Theoretical and Practical Implications

HMO's primary contribution is the explicit integration of user persona as an axis for long-term context prioritization and memory lifecycle management. This treats behavioral alignment as a first-class criterion, removing the stateless "one size fits all" constraint of prior expansion- or deletion-based schemes. In practical terms, it allows critical interaction traces to persist in real time without excessive handover or cold-start latency, which matters most for on-device, resource-bounded agents.

The three-tiered model provides an alternative to computationally expensive parametric (e.g., RLHF-based) or graph traversal approaches, and is robust to large-scale archives due to targeted, lazy scoring.

Limitations and Future Directions

Current evaluation protocols inadequately capture real-world conversational co-evolution, as standard benchmarks (e.g., LongMemEval, LoCoMo) rely on static corpora and atomic queries. HMO's design is optimized for scenarios where memory and persona update in situ, and its efficiency benefits are most pronounced in live, multi-turn agent-user loops. Developing interaction-centric evaluation suites is an open research area, crucial for fully characterizing the benefits of hierarchical orchestration.

Advancements could focus on fine-grained persona modeling, adaptive scoring hyperparameters sensitive to domain or user, automated prompt evolution for MLLM evaluators, and hybrid tier structures for multi-agent interaction.

Conclusion

Hierarchical Memory Orchestration (2604.01670) establishes a robust organizational paradigm for memory-augmented agents, balancing retrieval performance, reasoning cohesion, and user-centricity via adaptive, persona-governed memory tiering. The architecture shows strong empirical results on standard benchmarks and is practical to deploy in persistent agentic systems. By replacing flat, undifferentiated expansion with dynamic orchestration, HMO points to a promising direction for scalable, resource-aware, and genuinely personalized persistent AI agents.