- The paper introduces a decay-driven memory control mechanism that dynamically modulates memory activation through hierarchical decay and reinforcement.

- The paper employs a three-layer memory architecture integrating procedural, semantic, and episodic traces to enhance cross-session reasoning and retrieval efficacy.

- The paper demonstrates significant improvements in efficiency and accuracy on long-horizon tasks compared to static, always-on memory architectures.

Oblivion: Self-Adaptive Agentic Memory Control through Decay-Driven Activation

Introduction and Context

"Oblivion: Self-Adaptive Agentic Memory Control through Decay-Driven Activation" (2604.00131) introduces a framework for memory-augmented LLM agents in which memory accessibility decays dynamically instead of relying on explicit deletion or static retrieval. Departing from conventional "always-on", flat memory architectures, Oblivion formulates agent memory as a control problem: hierarchical memory traces are modulated by usage, uncertainty, and decay, with the aim of improving agentic reasoning on long-horizon, context-shifting tasks.

System Architecture

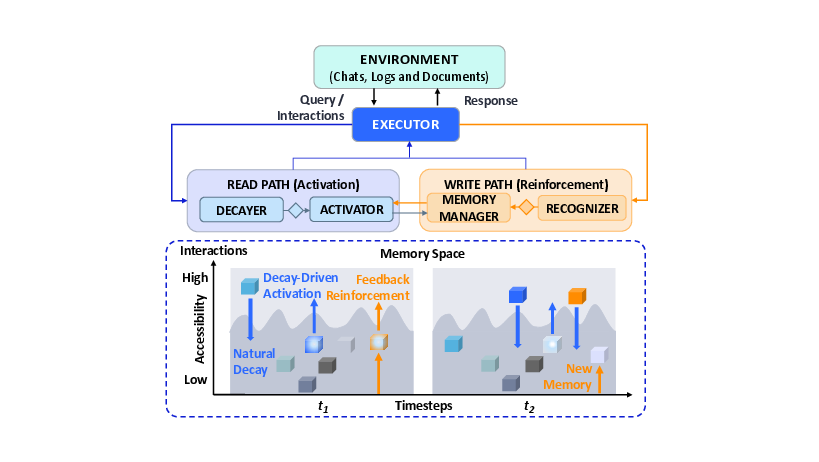

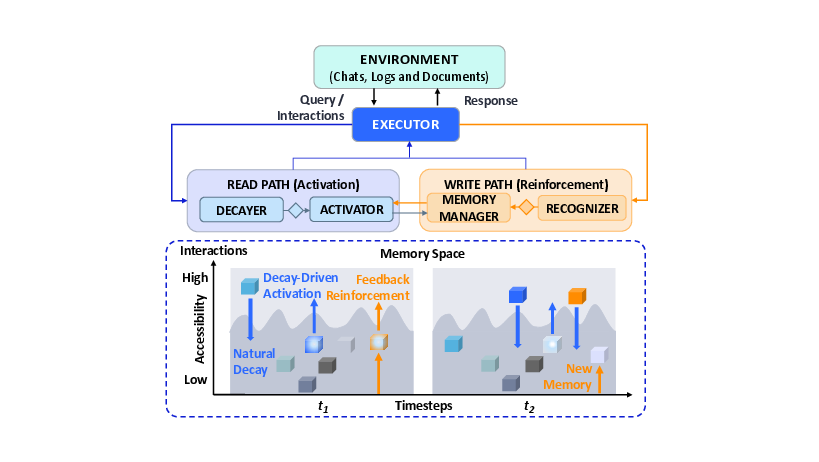

Oblivion employs a hierarchical external memory, organized into three layers: Dynamic Procedural Memory (L1), Semantic Memory (L2), and Preemptive Episodic Memory (L3). The Executor module orchestrates a read/write-decoupled loop, ensuring self-adaptive management of memory traces via:

- Read Path: Gated retrieval mechanisms driven by agent uncertainty and retention scores based on Ebbinghaus-inspired decay models.

- Write Path: Targeted reinforcement of only those memories contributing to agent responses, ensuring usage-proportional retention.

The hierarchical representation ensures persistent strategies reside in L1, factual grounding in L2, and rich episodic context in L3, supporting both long-term strategy and short-term adaptation.
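As a rough illustration of the three-layer layout described above, the following minimal sketch tags each trace with its layer. The names `Layer`, `Trace`, `HierarchicalMemory`, and the `retention` field are hypothetical and chosen for this example only; the summary does not specify the paper's actual data structures.

```python
from dataclasses import dataclass, field
from enum import Enum


class Layer(Enum):
    PROCEDURAL = "L1"  # persistent strategies
    SEMANTIC = "L2"    # factual grounding
    EPISODIC = "L3"    # rich episodic context


@dataclass
class Trace:
    content: str
    layer: Layer
    retention: float = 1.0  # hypothetical accessibility score in [0, 1]


@dataclass
class HierarchicalMemory:
    traces: list = field(default_factory=list)

    def add(self, content: str, layer: Layer) -> Trace:
        """Store a new trace in the given layer and return it."""
        trace = Trace(content, layer)
        self.traces.append(trace)
        return trace

    def by_layer(self, layer: Layer) -> list:
        """Return all traces that belong to one layer."""
        return [t for t in self.traces if t.layer is layer]


# Example content is invented purely for illustration.
memory = HierarchicalMemory()
memory.add("Confirm units before answering numeric questions.", Layer.PROCEDURAL)
memory.add("The user's home city is Lisbon.", Layer.SEMANTIC)
memory.add("Session 3: user mentioned moving from Porto last spring.", Layer.EPISODIC)
```

Keeping the layer as an explicit tag on each trace, rather than three separate stores, is just one possible design; it makes it easy to apply a single decay rule across all layers while still querying them separately.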

Figure 1: Oblivion facilitates memory-augmented agents by decay-driven activation over hierarchical memory traces. The Executor orchestrates the read path for uncertainty-gated retrieval and the write path for feedback-driven updates, enabling dynamic control over memory activation.

The read path is activated only when the buffer-sufficiency and uncertainty tests call for it, which limits unnecessary context growth and interference from latent memories. The write path reinforces only response-relevant memories; the rest decay in accessibility rather than being deleted.
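The decoupled read and write control can be sketched as follows. This is a simplified illustration, not the paper's implementation: the gate condition (retrieve when the buffer is insufficient or uncertainty exceeds a threshold), the threshold values, and the additive `boost` and multiplicative `fade` updates are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Trace:
    content: str
    retention: float  # hypothetical accessibility score in [0, 1]
    uses: int = 0


def should_retrieve(uncertainty, buffer_sufficient, uncertainty_threshold=0.5):
    """Assumed gate: read only if the buffer falls short or the agent is unsure."""
    return (not buffer_sufficient) or uncertainty > uncertainty_threshold


def read(traces, uncertainty, buffer_sufficient, min_retention=0.3, k=2):
    """Return up to k accessible traces, or nothing if the gate stays closed."""
    if not should_retrieve(uncertainty, buffer_sufficient):
        return []
    accessible = [t for t in traces if t.retention >= min_retention]
    return sorted(accessible, key=lambda t: t.retention, reverse=True)[:k]


def write(traces, used, boost=0.2, fade=0.9):
    """Reinforce traces that contributed to the response; attenuate the rest.

    Nothing is deleted: unused traces only become less accessible.
    """
    used_ids = {id(t) for t in used}
    for t in traces:
        if id(t) in used_ids:
            t.retention = min(1.0, t.retention + boost)
            t.uses += 1
        else:
            t.retention *= fade


traces = [Trace("a", 0.9), Trace("b", 0.5), Trace("c", 0.1)]
skipped = read(traces, uncertainty=0.1, buffer_sufficient=True)  # gate closed
hits = read(traces, uncertainty=0.8, buffer_sufficient=True)     # gate open
write(traces, used=hits[:1])  # pretend only the top hit was actually used
```

Note that trace "c" is never returned by `read` here because it sits below `min_retention`, yet it remains in the store and could be recovered if a later reinforcement raised its score.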

Decay-Driven Memory Dynamics

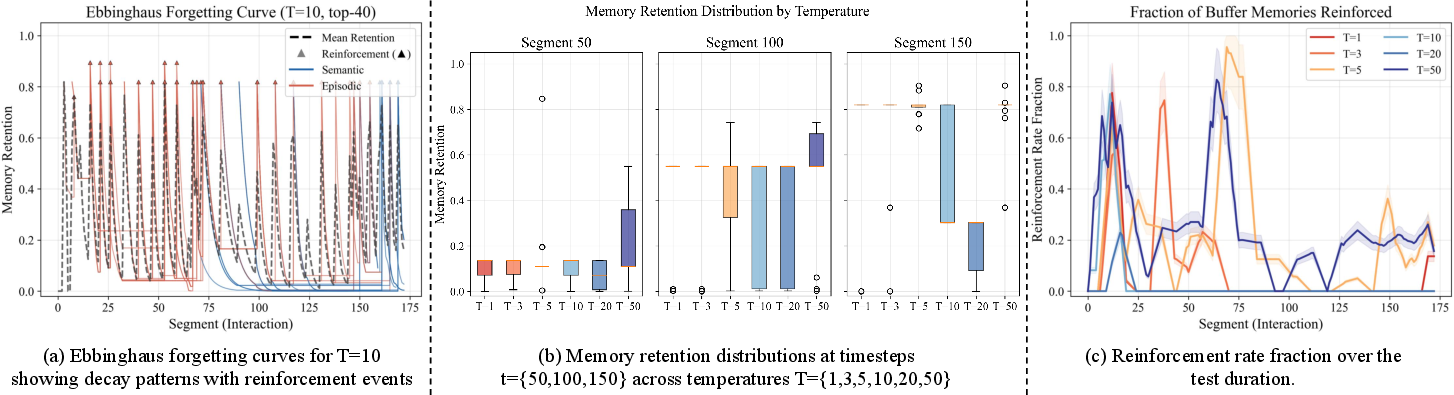

Oblivion's memory traces decay according to interaction-based forgetting curves. Retention scores are computed with a parametric exponential decay model in which the effective lifetime of a trace is a function of utility, frequency, and a decay temperature.

Modulating the decay temperature parameter T exposes a spectrum between aggressive forgetting and effectively static memory; tuning T is critical to balancing memory accessibility and retrieval buffer saturation.
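To make the role of the decay temperature concrete, here is a minimal sketch of an Ebbinghaus-style retention score of the form R = exp(-elapsed / lifetime). The exact formula from the paper is not reproduced in this summary, so the way `utility`, `frequency`, and `temperature` combine into `lifetime` below is an assumption, as is the convention that a larger T slows forgetting.

```python
import math


def retention(elapsed_turns, utility, frequency, temperature):
    """Hypothetical retention score in (0, 1].

    elapsed_turns: interactions since the trace was last reinforced
    utility:       assumed usefulness score, >= 0
    frequency:     number of times the trace has been used, >= 0
    temperature:   decay temperature T > 0 (assumed: larger T = slower decay)
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Assumed form: lifetime grows with utility, use frequency, and T.
    lifetime = temperature * (1.0 + utility) * (1.0 + math.log1p(frequency))
    return math.exp(-elapsed_turns / lifetime)


# Sweep T to see the spectrum from aggressive forgetting to near-static memory.
for T in (1.0, 10.0, 1000.0):
    score = retention(elapsed_turns=20, utility=0.5, frequency=3, temperature=T)
    print(f"T={T}: retention={score:.4f}")
```

Under these assumptions, a very small T drives the score of any unused trace toward zero within a few interactions, while a very large T keeps nearly every trace accessible and risks saturating the retrieval buffer.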

Experimental Results

Static Multi-Session QA

Oblivion significantly outperforms direct and memory-augmented baselines (e.g., LME-RFT, EverMemOS) on the LongMemEval benchmark:

- On the "S" setting (noisy retrieval), GPT-4.1-mini with Oblivion achieves up to 90.60% accuracy versus 89.00% (direct) and 81.27% (EverMemOS).

- Substantial accuracy gains are observed in cross-session and temporal reasoning tasks, indicating that differential retention and hierarchical trace organization mitigate prompt pollution and the "needle-in-a-haystack" problem as history length increases.

Dynamic Long-Horizon Interaction

On GoodAILTM, Oblivion exhibits robust scenario-solving rates under context spans (2K, 32K, 120K):

- GPT-4.1-mini with Oblivion solves 8.41 (2K) and 6.79 (32K) scenarios on average, outpacing direct (6.29/6.05) and memory-based (5.37/6.68) methods.

- The advantage is accentuated as context span increases, validating the decay-driven prioritization of high-utility traces and stratum-aware organization under memory pressure.

Hierarchical Memory and Ablation

Ablation analysis demonstrates that the full hierarchical memory (L1/L2/L3) yields superior retrieval accuracy and scenario-solving rates, with modest token and latency overhead compared to brute-force context approaches. Systems limited to flat semantic or episodic stacks suffer a significant drop in accuracy and efficiency, reinforcing the benefit of a decoupled, stratified memory schema.

Efficiency Considerations

Oblivion achieves major reductions in average token usage and operational costs under large context settings (32K, 120K):

- At 120K context, Oblivion reduces token cost by 73% relative to FullCTX, with only a modest increase in response latency.

- Efficient uncertainty gating and decay-driven buffer maintenance ensure that only highly relevant evidence is incorporated into the working set, providing scalable agentic memory management for long-term deployments.

Theoretical and Practical Implications

Oblivion operationalizes memory as a latent, dynamically accessible substrate, prioritizing traces through decay and reinforcement rather than deletion. This enables:

- Resilience to context drift: Agents can adapt to evolving histories, prioritizing currently relevant information without losing the capacity to recover previously attenuated traces.

- Cognitive plausibility: The architecture aligns with findings in human memory research, particularly controlled forgetting, trace reactivation, and the constructive interplay between episodic and semantic substrates.

- Model-agnostic deployability: The control protocol operates independently of backbone model weights, facilitating integration across LLM families and settings.

The work questions the sufficiency of flat, always-on memory architectures, instead advancing agent memory towards adaptive, relevance-modulated recall, with implications for continual learning, alignment, and ethical forgetting in autonomous systems.

Future Directions

Oblivion's framework invites further research into:

- Dynamic adaptation of decay temperature and other meta-parameters per agent/task/user context.

- Extension to non-textual memories (multimodal, cross-lingual), integration with modular retrieval agents, and application in open-ended, real-world interaction settings.

- Combining independent LLMs for extraction, control, and generation to minimize propagation of extraction/generation errors and support scalability to small-model deployment.

- Investigating the intersection with end-to-end learned memory systems, in particular where explicit control layers or hybridization with learned memory modules provide the best tradeoffs in long-horizon, multi-task, and multi-agent environments.

Conclusion

Oblivion reframes memory control in agentic LLM architectures as a problem of accessibility modulation rather than static retrieval or deletion. By leveraging hierarchical memory, decay-driven activation, and usage-based reinforcement, the framework achieves high memory efficiency, accuracy, and reasoning fidelity on long-horizon tasks while being computationally scalable and cognitively aligned. These findings suggest meaningful paths for the evolution of agentic memory in autonomous artificial systems.