PivotRL: High Accuracy Agentic Post-Training at Low Compute Cost

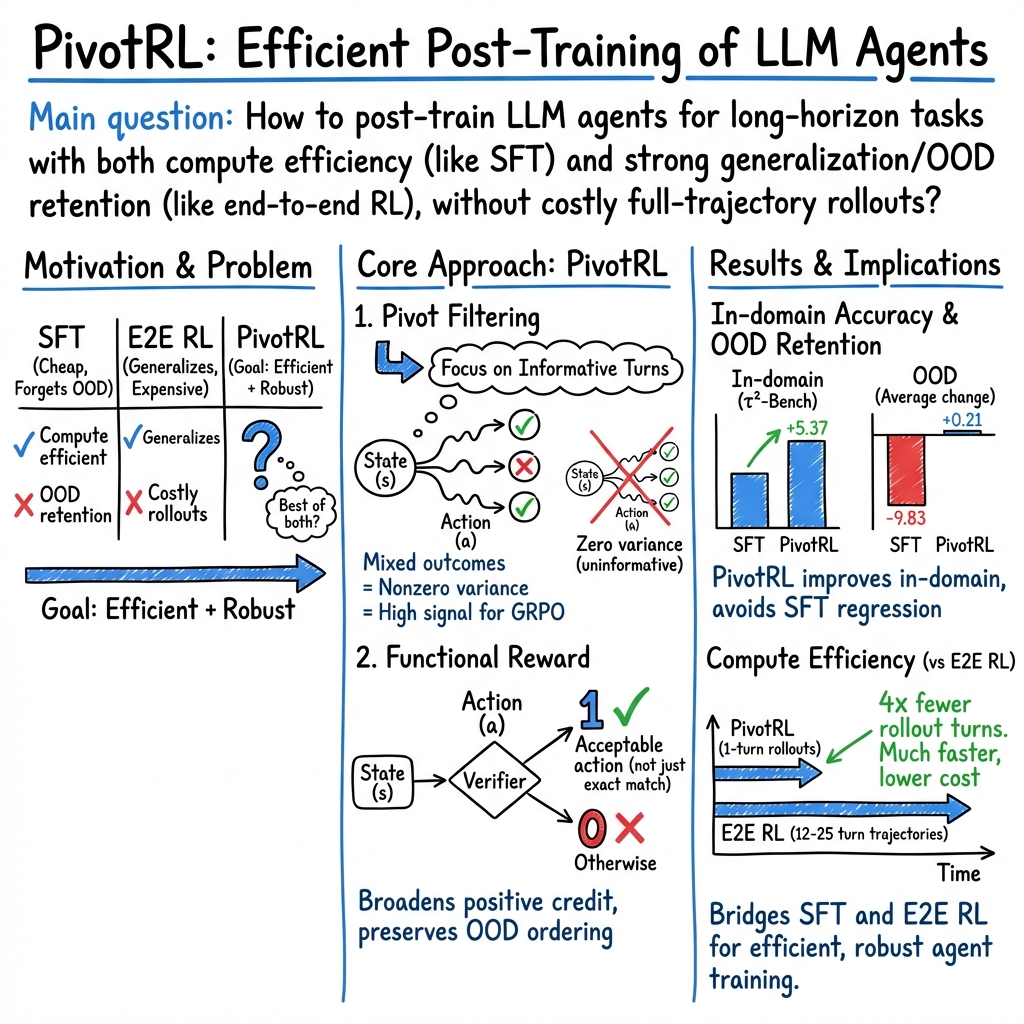

Abstract: Post-training for long-horizon agentic tasks has a tension between compute efficiency and generalization. While supervised fine-tuning (SFT) is compute efficient, it often suffers from out-of-domain (OOD) degradation. Conversely, end-to-end reinforcement learning (E2E RL) preserves OOD capabilities, but incurs high compute costs due to many turns of on-policy rollout. We introduce PivotRL, a novel framework that operates on existing SFT trajectories to combine the compute efficiency of SFT with the OOD accuracy of E2E RL. PivotRL relies on two key mechanisms: first, it executes local, on-policy rollouts and filters for pivots: informative intermediate turns where sampled actions exhibit high variance in outcomes; second, it utilizes rewards for functional-equivalent actions rather than demanding strict string matching with the SFT data demonstration. We theoretically show that these mechanisms incentivize strong learning signals with high natural gradient norm, while maximally preserving policy probability ordering on actions unrelated to training tasks. In comparison to standard SFT on identical data, we demonstrate that PivotRL achieves +4.17% higher in-domain accuracy on average across four agentic domains, and +10.04% higher OOD accuracy in non-agentic tasks. Notably, on agentic coding tasks, PivotRL achieves competitive accuracy with E2E RL with 4x fewer rollout turns. PivotRL is adopted by NVIDIA's Nemotron-3-Super-120B-A12B, acting as the workhorse in production-scale agentic post-training.

Explain it Like I'm 14

Explaining “PivotRL: High Accuracy Agentic Post-Training at Low Compute Cost”

Overview: What is this paper about?

This paper introduces PivotRL, a new way to train AI models that must solve multi-step, real-world tasks—like using tools, writing code, browsing the web, or operating a computer terminal. These tasks take many “turns” (decisions) and often require the AI to interact with an environment. PivotRL aims to get the accuracy and generalization of reinforcement learning (RL) while keeping the low compute cost of supervised fine-tuning (SFT), which is the common “learn from examples” approach.

Key questions the paper asks

Before this work, there was a trade-off:

- Supervised fine-tuning (SFT) is cheap and fast but often forgets skills and struggles on new, different tasks (called out-of-domain or OOD tasks).

- End-to-end reinforcement learning (E2E RL) keeps skills and generalizes well but is slow and expensive because it needs many full, multi-step practice runs.

The authors ask: Can we get the best of both—SFT’s efficiency and RL’s generalization—without running full-length, costly practice sessions?

How does PivotRL work? (In simple terms)

Think of training an AI like coaching a player in a long game:

- SFT = showing the player full recordings of expert games and asking them to copy every move.

- E2E RL = letting the player replay entire games many times, learning from trial and error—effective but time-consuming.

- PivotRL = jumping to the most important moments in a recorded game (the “pivots”) where different choices can clearly lead to a win or a loss, and practicing just those moments. Then, instead of demanding the player copy the exact move, you give credit for any move that works.

Here are the two big ideas, with everyday analogies:

- Pivots: These are “fork-in-the-road” turns—key decision points where trying different actions sometimes succeeds and sometimes fails. Practicing at these points gives strong learning signals because the difference between good and bad choices is clear.

- Functional rewards: Instead of rewarding only the exact same text or command as the original example, PivotRL rewards any action that works. It’s like grading math by checking if the answer is correct, even if the steps look different, rather than demanding the student write the same words as the textbook.
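To make the contrast between strict matching and functional rewards concrete, here is a minimal sketch for a JSON-style tool call. It is an illustration only, not the paper's verifier: the function names (`exact_match_reward`, `normalize_tool_call`, `functional_reward`) and the `name`/`arguments` call format are assumptions introduced for this example, and a normalizer is just one of the verifier types the summary mentions.

```python
import json

def exact_match_reward(action: str, demo: str) -> int:
    """Strict string matching: credit only a character-identical action."""
    return int(action == demo)

def normalize_tool_call(action: str):
    """Hypothetical normalizer: parse a JSON tool call into a canonical form."""
    try:
        call = json.loads(action)
    except json.JSONDecodeError:
        return None
    if not isinstance(call, dict) or "name" not in call:
        return None
    args = call.get("arguments", {})
    # Sorting keys makes argument order and whitespace irrelevant.
    return call["name"], json.dumps(args, sort_keys=True)

def functional_reward(action: str, demo: str) -> int:
    """Credit any action that normalizes to the same call as the demonstration."""
    canon = normalize_tool_call(action)
    return int(canon is not None and canon == normalize_tool_call(demo))

demo = '{"name": "search", "arguments": {"query": "pivot", "limit": 5}}'
variant = '{"arguments": {"limit": 5, "query": "pivot"}, "name": "search"}'
wrong = '{"name": "search", "arguments": {"query": "pivot", "limit": 50}}'

print(exact_match_reward(variant, demo), functional_reward(variant, demo))
print(functional_reward(wrong, demo))
```

The reordered `variant` is rejected by strict matching but accepted by the normalizer, while the call with a different argument value is rejected by both.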

What the training process looks like:

- Start with expert recordings (trajectories) of how to solve tasks.

- Break them into turns and “profile” each turn to find pivots—moments where the model’s possible actions lead to mixed results (some success, some failure).

- Run short, local trials from those pivot states only (no full-length runs).

- Use a simple “verifier” to check whether each attempted action is functionally correct (e.g., a normalizer, rule check, or lightweight judge).

- Update the model using RL on these short trials.
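The profiling step in the list above can be sketched as follows. This is a toy illustration under stated assumptions, not the authors' implementation: `profile_turn`, `select_pivots`, the `min_var` threshold, and the toy sampler and verifier are all hypothetical names, and the paper's actual selection thresholds may be defined differently.

```python
import random
from typing import Tuple

def profile_turn(state, sample_action, verifier, k, rng) -> Tuple[float, float]:
    """Sample k actions at one turn; return (success rate, Bernoulli reward variance)."""
    rewards = [int(verifier(state, sample_action(state, rng))) for _ in range(k)]
    p = sum(rewards) / k
    return p, p * (1.0 - p)

def select_pivots(states, sample_action, verifier, k=64, min_var=0.0, seed=0):
    """Keep turns whose sampled outcomes are mixed (variance above min_var)."""
    rng = random.Random(seed)
    pivots = []
    for state in states:
        p, var = profile_turn(state, sample_action, verifier, k, rng)
        if var > min_var:
            pivots.append((state, p))
    return pivots

# Toy stand-ins: each "state" is simply the chance that the frozen policy succeeds there.
def toy_sample_action(state: float, rng: random.Random) -> str:
    return "ok" if rng.random() < state else "bad"

def toy_verifier(state: float, action: str) -> bool:
    return action == "ok"

pivots = select_pivots([0.0, 1.0, 0.5], toy_sample_action, toy_verifier)
print([state for state, _ in pivots])
```

In this toy setup the always-failing and always-succeeding turns have zero variance and are dropped, so only the mixed-outcome turn would be passed on to the short local rollouts.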

Why the theory supports this:

- Mixed turns teach more: The authors show that turns with higher “reward variance” (a mix of successes and failures) produce stronger learning signals. In plain terms: practice where it’s possible to learn, not where everything always works or always fails.

- Functional rewards prevent forgetting: They prove that rewarding all acceptable actions shifts the model toward good behaviors without messing up its preferences on unrelated actions. That helps the model keep its skills on other tasks (better OOD performance).
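The two theory bullets above can be made concrete with a short derivation. This is a sketch under stated assumptions, not the paper's own statement: it assumes a binary verifier reward, writes $p_s$ for the probability that a sampled action at state $s$ is acceptable, and assumes the KL-regularized update takes the standard exponential-tilting form with temperature $\beta$. The symbols $p_s$, $\beta$, and $Z_\beta$ are introduced here for illustration.

```latex
% Reward variance of a binary verifier at state s
r(s,a) = \mathbf{1}[a \in M(s)], \qquad
p_s = \Pr_{a \sim \pi(\cdot \mid s)}[a \in M(s)], \qquad
\operatorname{Var}[r] = p_s (1 - p_s) \le \tfrac{1}{4}.

% Exponential tilting of the reference policy
\pi_\beta(a \mid s) = \frac{\pi_0(a \mid s)\, e^{r(s,a)/\beta}}{Z_\beta(s)}
\;\Longrightarrow\;
\frac{\pi_\beta(a \mid s)}{\pi_\beta(a' \mid s)} = \frac{\pi_0(a \mid s)}{\pi_0(a' \mid s)}
\quad \text{whenever } a, a' \in M(s) \text{ or } a, a' \notin M(s).
```

The variance vanishes when $p_s$ is 0 or 1 and peaks at $p_s = 1/2$, which matches the intuition that mixed-outcome turns carry the most signal. Under the tilting assumption, every acceptable action is scaled by the same factor, so relative preferences inside the acceptable set and inside its complement are unchanged; only the total probability mass moves toward $M(s)$.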

Main results and why they matter

Across four agent-focused domains (tool use, coding, terminal control, web browsing), PivotRL was tested against standard SFT using the same data and starting model.

What they found:

- Better in-domain accuracy than SFT with the same training data. On average, PivotRL improved performance about 4 percentage points more than SFT (e.g., +14.11 vs +9.94 points over the base model in one analysis).

- Much better at keeping skills on different tasks (OOD retention). While SFT caused large drops on non-agentic tasks (about −9.8 points on average), PivotRL stayed near baseline (about +0.21 on average). In other words, PivotRL avoids the “forgetting” problem.

- Comparable to full RL at a fraction of the cost. On a tough software-engineering benchmark (SWE-Bench), PivotRL reached similar accuracy to E2E RL using about 4× fewer rollout turns and about 5.5× less wall-clock time.

- Both parts matter. Removing pivot filtering or functional rewards reduced gains, confirming that each is necessary for the full effect.

- Used at production scale. NVIDIA deployed PivotRL in training its large models (Nemotron-3-Super), where it served as a workhorse for agentic post-training.

Why this is important:

- You get the generalization benefits of RL without paying for heavy, full-length simulations.

- You keep broader abilities while specializing in new skills, avoiding the common “learn one thing, forget another” problem.

Implications: What could this change?

- Faster, cheaper training for agent-like AI: Teams can improve multi-step tool-using agents with less compute and time.

- Better reliability in the real world: By keeping old skills while learning new ones, AI stays more useful across varied tasks.

- Practical adoption: Because PivotRL uses short, local practice with simple verifiers, it’s easier to scale and integrate into existing training pipelines.

- Next steps: The authors plan to use more flexible verifiers (including “AI-as-judge” approaches) and smarter ways to pick pivots during training.

Quick recap

- Problem: SFT is cheap but forgets; E2E RL generalizes but is expensive.

- Idea: Practice only at the most informative decision points (pivots) and reward any action that works (functional rewards).

- Results: Better accuracy where trained, much less forgetting elsewhere, and far lower compute than full RL.

- Impact: Makes training smarter, faster, and more scalable for real-world AI agents.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper; each point is framed to guide actionable follow-up research.

- Verifier quality and noise sensitivity: The method hinges on domain-specific verifiers for “functional equivalence,” but the paper does not quantify how false positives/negatives (or bias) in the verifier affect learning dynamics, policy quality, or OOD retention. There is no robustness analysis to noisy, stochastic, or drifting verifiers (e.g., changing web pages, flaky tests), nor techniques to calibrate or denoise verifier signals.

- Local–global reward alignment: PivotRL optimizes short, turn-level rewards without demonstrating that locally “acceptable” actions consistently improve end-to-end task success. The paper lacks ablations varying local rollout horizon length, or analyses of potential misalignment where locally correct steps lead to globally suboptimal trajectories.

- Pivot selection staleness and bias: Offline turn profiling uses a frozen reference policy π₀ and fixed thresholds (e.g., K, A_diff) to select “mixed-outcome” pivots, but the informativeness of turns changes as training proceeds. There is no mechanism (or evaluation) for online re-profiling, adaptive re-weighting, or curriculum strategies to refresh the pivot set as the policy evolves.

- Ignored “uniform” turns: By filtering out turns with near-zero variance (uniformly solved/failed), the method may neglect states that become learnable later or critical for long-horizon credit assignment. No experiments test curriculum reintroduction of these turns or dynamic thresholds to gradually expand coverage.

- Hyperparameter sensitivity: Key choices (K for profiling, group size G, KL/clip coefficients, A_diff, verifier thresholds) are not stress-tested. There is no systematic sensitivity analysis or tuning guidelines to ensure reproducibility across tasks and model sizes.

- Sample- and compute-accounting gaps: Claims of 4× fewer rollout turns and ~5.5× faster training omit costs of offline pivot profiling (multiple K rollouts per turn), token-generation differences, GPU-hours, and memory/throughput overheads. A full compute audit (including offline profiling) and breakdown by environment latency is missing.

- Theoretical assumptions vs. practice: Analyses assume finite action spaces, fixed per-state distributions, population-level GRPO along KL paths, and binary rewards; real training uses sequence actions, finite G, clipping, noisy estimators, and evolving state distributions. There is no theory addressing estimator variance, function approximation, non-binary/verifier noise, or convergence/stability under PivotRL’s practical choices.

- OOD retention guarantees: Theorem 3.3 relies on the assumption that “task-unrelated actions” correspond to the complement of the acceptable set M(s), which is rarely true in practice. There is no formal or empirical causal test showing that preserving conditional rankings within/outside M(s) reliably prevents OOD degradation when the state distribution shifts.

- SWE-Bench inconsistency and failure modes: PivotRL underperforms SFT on SWE-Bench Verified in Table 1, yet is described as competitive with E2E RL. The paper does not analyze when/why PivotRL lags SFT or E2E RL (e.g., verifier gaps, pivot scarcity, long-horizon dependencies), nor identify failure cases or domain characteristics where PivotRL is unsuitable.

- Baseline comparability and variance: Details of E2E RL baselines (algorithms, reward shaping, KL schedules) and multi-seed variability are limited; results lack confidence intervals or statistical tests. It remains unclear how robust findings are across seeds, hyperparameters, and baseline choices.

- Generality across model sizes and families: Experiments largely use a single base model (Qwen3-30B-A3B-Thinking-2507). It is unknown whether gains and OOD retention hold for smaller/larger models, different architectures, or multilingual models.

- Applicability to domains without strong verifiers: The approach assumes accessible, reliable verifiers for local equivalence. Its portability to open-ended language tasks, multimodal agents, embodied environments (e.g., robotics), or safety-critical settings without programmatic verifiers is untested.

- LLM-as-judge/process RM integration: Although proposed as future work, the paper does not evaluate LLM judges or process reward models for functional rewards, nor provide methods for bias control, calibration, or cost containment in judge calls.

- Safety and side-effect control: There is no discussion of sandboxing, side-effect mitigation, or safety verifiers when executing tool/API/terminal actions during rollouts. The method could inadvertently increase the probability of harmful but locally “acceptable” actions under narrow verifiers.

- Exploration–exploitation trade-offs: Reward-variance-driven pivot selection may bias training toward inherently high-entropy or noisy states, impacting stability. Alternative informativeness metrics (e.g., disagreement, uncertainty, influence functions) are not explored.

- Coverage and diversity of states: PivotRL spends rollout budget only on selected turns, risking lower coverage of state–action space. There is no measurement of coverage/diversity, nor mechanisms (e.g., stratified sampling, novelty search) to prevent overfitting to a narrow set of pivots.

- Importance sampling and estimator bias: The GRPO-style objective with local rollouts and clipping introduces bias–variance trade-offs not analyzed for this setting. The paper lacks diagnostics on effective sample sizes, gradient noise with finite G, or variance-reduction strategies tailored to turn-level RL.

- Token- vs. turn-level training: Actions are entire assistant turns rather than token sequences, but the impact of this granularity on stability, credit assignment, and language quality is not studied. Comparisons to token-level RL or hybrid token/turn objectives are absent.

- Long-horizon compounding effects: Local updates may not address compounding errors across many turns. There is no analysis on how PivotRL affects trajectory-level robustness (e.g., recovery from earlier mistakes, backtracking, tool error handling).

- Integration confounds in large-scale deployment: In Nemotron-3-Super, PivotRL is combined with other RL stages (reasoning/chat), making it hard to attribute gains to PivotRL alone. Controlled ablations isolating PivotRL’s contribution at scale are missing.

- Reproducibility artifacts: The paper does not release pivot selection code, verifier implementations, or detailed configs/seeds. Without open resources, verifying claims and transferring the method to new domains remains difficult.

- Cost of building verifiers: Practical effort to design, validate, and maintain programmatic verifiers per domain is not quantified. Methods to automatically learn or induce verifiers (and their reliability) are not investigated.

- Environmental drift and non-determinism: Especially in web and tool-use settings, external changes can invalidate verifiers and pivot profiles. There is no strategy for detecting drift or adapting pivot/verifier pipelines over time.

- Adaptive KL and clipping schedules: The impact of per-state or adaptive KL coefficients, dynamic clipping, and their interaction with pivot selection is not examined; stability–performance trade-offs are unknown.

- Negative side effects on non-agentic text quality: While OOD benchmarks are reported, there is no fine-grained assessment of stylistic drift, verbosity, hallucination rates, or instruction-following fidelity outside the evaluated sets.

- Data generation dependence: The approach assumes access to high-quality expert trajectories. How trajectory quality, diversity, and annotation noise affect PivotRL’s efficacy is not explored; guidance for constructing effective SFT traces for PivotRL is missing.

- Scaling to multi-tool orchestration: Many agentic tasks require planning across multiple tools and long chains. The method’s performance on compositional tool use, cross-tool dependencies, and orchestration policies is not evaluated.

Practical Applications

Immediate Applications

The following applications can be deployed now by leveraging PivotRL’s turn-level RL, pivot filtering, and verifier-based rewards to achieve higher in-domain accuracy with minimal OOD regression and lower rollout cost.

- Software engineering: PR auto-fixers driven by verifier-based RL

- Sector: Software

- What: Fine-tune coding agents to resolve GitHub issues, generate patches, run tests/linters, and open PRs with human-in-the-loop review.

- Tools/workflows: Use unit/integration tests and static analyzers as functional verifiers; integrate with SWE-Bench or SWE-Gym harnesses; schedule pivot profiling to mine mixed-outcome turns; deploy with CI runners and repo sandboxes.

- Assumptions/dependencies: Sufficient test coverage; safe execution sandboxes; access to repos/CI; high-quality expert trajectories; reliable verifiers (tests, linters) as rewards.

- Why PivotRL: Comparable accuracy to E2E RL with ~4x fewer rollout turns and ~5.5x less wall-clock time; preserves OOD reasoning skills relative to SFT.

- IT operations and DevOps terminal agents

- Sector: Software / IT Ops

- What: Agents that execute administrative CLI tasks (provisioning, log triage, config edits) with dry-run and idempotent checks.

- Tools/workflows: Terminal-Bench-like environments; verifiers using command exit codes, config diff checks, policy/regex guards; pivot selection to focus on ambiguous steps where commands succeed/fail variably.

- Assumptions/dependencies: RBAC and least-privilege; ephemeral environments; robust “dry-run” verifiers; command allowlists and audit logs.

- Why PivotRL: Significant gains on terminal tasks while avoiding OOD degradation typical of SFT.

- Enterprise browsing and research agents for competitive intelligence and sourcing

- Sector: Enterprise software / Knowledge management

- What: Multi-step browsing agents that find, extract, and cross-validate facts from the web and internal portals.

- Tools/workflows: BrowseComp harness; verifiers based on schema/URL/domain constraints, snippet-matching, cross-source consistency; pivot filtering to target high-uncertainty navigation steps.

- Assumptions/dependencies: Reliable scraping/browser automation; content sanitization; rate limits; legal/compliance guardrails; verifiers that tolerate functional equivalence (e.g., different but correct URLs/snippets).

- Why PivotRL: Large in-domain gains in browsing tasks with near-zero OOD regression.

- Conversational tool-use agents for customer support and internal service desks

- Sector: Customer support / ITSM

- What: Agents that call back-end tools (ticketing, KB search, account actions) during multi-turn dialogues.

- Tools/workflows: Verifiers formed from tool schemas, ticket resolution states, KB grounding checks; pivot selection from dialogue turns where tools may be over/under-called; deploy via chat platforms with human-in-the-loop escalation.

- Assumptions/dependencies: High-quality tool schemas; deterministic tool responses or robust acceptance checks; logging for replayable expert traces.

- Why PivotRL: Credits functionally correct tool calls (not just string matches), improving action coverage and reducing brittle overfitting.

- Compute-efficient agentic post-training in model providers and MLOps

- Sector: AI infrastructure

- What: Replace or complement SFT with PivotRL in post-training stacks to add agentic skills without catastrophic forgetting.

- Tools/workflows: Implement a “pivot profiler” (offline variance-based turn miner), “verifier SDK” (tests, schemas, rule checks, LLM-judge fallback), and “PivotRL trainer” atop NeMo-RL and NeMo-Gym; monitor reward variance dashboards to gate rollout budgets.

- Assumptions/dependencies: Access to expert trajectories; scalable short-rollout environments; KL-regularized GRPO setup; careful verifier calibration.

- Why PivotRL: Production-proven (Nemotron-3-Super), lower rollout cost than E2E RL, better OOD retention than SFT on identical data.

- Dataset curation and QA via pivot variance analytics

- Sector: Model evaluation / Data ops

- What: Use reward-variance profiling to prune uninformative turns (uniform pass/fail) and prioritize ambiguous, high-signal data for RL.

- Tools/workflows: “Pivot variance dashboard” to monitor per-domain informativeness; automated sampling policies that sustain variance through training.

- Assumptions/dependencies: Stable offline scoring with verifiers; representative on-policy sampling.

- Why PivotRL: Directly supported by theory (variance scales the natural gradient norm), improving sample efficiency and training stability.

- Safer rollout budgeting and governance metrics

- Sector: Policy / Sustainability / Compliance

- What: Introduce procurement and internal governance metrics such as “rollout turns per 1% accuracy gain” and “OOD retention after post-training” for agent deployment approvals.

- Tools/workflows: Track KL, reward variance, and OOD benchmarks pre/post training; mandate functional-verifier rewards for high-stakes automations.

- Assumptions/dependencies: Standardized OOD suites; auditable RL logs; sector-specific safety thresholds.

- Why PivotRL: Transparent compute savings and OOD retention align with green AI and safety goals.
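The two governance metrics named in this item can be computed with simple arithmetic. The sketch below is one possible reading, not a definition from the paper: the function names and the benchmark labels and numbers in the example are hypothetical placeholders.

```python
from typing import Dict

def rollout_turns_per_point(rollout_turns: int, acc_before: float, acc_after: float) -> float:
    """Rollout turns spent per percentage point of in-domain accuracy gained."""
    gain = acc_after - acc_before
    if gain <= 0:
        return float("inf")  # no gain: the cost per point is unbounded
    return rollout_turns / gain

def ood_retention(ood_before: Dict[str, float], ood_after: Dict[str, float]) -> float:
    """Mean change in accuracy across OOD benchmarks (negative means forgetting)."""
    deltas = [ood_after[name] - ood_before[name] for name in ood_before]
    return sum(deltas) / len(deltas)

# Placeholder numbers for illustration only; they are not results from the paper.
print(rollout_turns_per_point(1000, acc_before=50.0, acc_after=60.0))
print(ood_retention({"math": 70.0, "qa": 80.0}, {"math": 69.0, "qa": 81.0}))
```

Reporting both numbers side by side keeps a cheap training run from being approved when it achieves its gain by sacrificing OOD accuracy.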

- Enhanced IDE coding assistants with robust tool use

- Sector: Software / Developer tools

- What: Local code-edit suggestions, refactors, and test scaffolding with functionally verified steps (compile, tests pass) rather than brittle exact matches.

- Tools/workflows: IDE plug-ins invoking local test/verifier calls; pivot-trained models for ambiguous coding steps (e.g., search vs. implement).

- Assumptions/dependencies: Fast local test execution; partial project builds; reliable static checks.

- Why PivotRL: Better generalization and fewer regressions in non-agentic coding tasks than SFT.

Long-Term Applications

These require additional research, scaling, safety validation, or domain verifiers before widespread deployment.

- Clinical tool-use agents for documentation and care pathways

- Sector: Healthcare

- What: Agents that draft notes, order sets, and guideline-checked recommendations while calling EHR APIs and medical calculators.

- Tools/workflows: Verifiers grounded in clinical guidelines and order constraints; simulated patient sandboxes; human oversight workflows.

- Assumptions/dependencies: Clinically validated verifiers; strict privacy/security; regulatory approval; bias audits; robust OOD guarantees under distribution shift.

- Why PivotRL: Functional reward supports equivalence classes (multiple acceptable orders); pivot filtering concentrates training on ambiguous clinical decisions.

- Multimodal GUI and OS automation (enterprise RPA 2.0)

- Sector: Enterprise automation / Productivity

- What: Agents that operate desktop/web GUIs across apps, handling dialog boxes, forms, and multi-step workflows.

- Tools/workflows: OSWorld-like environments; visual verifiers (DOM/state diffs, OCR checks); “PivotRL-M” extensions to handle multimodal states/actions; sandboxed VMs.

- Assumptions/dependencies: Reliable vision-state verifiers; robust screen parsing; deterministic replay; cost-effective simulation at scale.

- Why PivotRL: Cuts rollout cost vs full E2E RL while preserving general-purpose competencies.

- Autonomous scientific research assistants

- Sector: R&D / Education

- What: Literature triage, protocol planning, data analysis pipelines with verifiable intermediate milestones (e.g., reproducible figures, statistical checks).

- Tools/workflows: Verifiers based on unit tests for analyses, DOI/source validation, methods checklists; pivot mining over steps with high disagreement (search queries, data cleaning choices).

- Assumptions/dependencies: Strong provenance tooling; domain-specific verifier libraries; access to paywalled content via compliant channels.

- Why PivotRL: Encourages diverse but functionally correct actions (alternative search queries, equivalent preprocessing), improving robustness.

- Finance back-office and compliance agents

- Sector: Finance

- What: Reconciliation, KYC/AML document processing, policy-execution workflows with rule/verifier checks.

- Tools/workflows: Verifiers from policy engines (e.g., OPA), ledger consistency checks, audit trails; pivot selection in steps with historically mixed outcomes.

- Assumptions/dependencies: Strict auditability; adversarial robustness; legal/regulatory approval; privacy-preserving sandboxes.

- Why PivotRL: Conservatively shifts mass to acceptable actions while preserving unrelated behavior orderings, a desirable property for compliance.

- Industrial and household robotics task planning

- Sector: Robotics / Manufacturing

- What: High-level language-to-action planning where multiple action sequences are functionally equivalent.

- Tools/workflows: Sim-to-real training with simulation verifiers (goal-state checks, safety constraints); pivot mining to emphasize ambiguous subgoals.

- Assumptions/dependencies: Accurate simulators; reliable state verifiers; safe real-world execution and fail-safes; multimodal policy integration.

- Why PivotRL: Functional rewards naturally capture equivalence classes of plans, improving generalization and sample efficiency.

- Energy grid and infrastructure operations copilots

- Sector: Energy / Utilities

- What: Agents proposing control-room actions or maintenance schedules with strict safety/verifier gates.

- Tools/workflows: Grid simulators; constraint and safety verifiers; pivot identification for edge-case operations (e.g., contingency scenarios).

- Assumptions/dependencies: High-fidelity simulators; rigorous certification; human-on-the-loop control; cyber-physical security.

- Why PivotRL: Focuses learning on rare, mixed-outcome edge cases that dominate operational risk.

- Public-sector digital services agents

- Sector: Government

- What: Assistants that navigate complex portals to complete benefit applications or records requests.

- Tools/workflows: Verifiers based on form validation, receipt issuance, and policy compliance; pivot profiling for steps with high user error.

- Assumptions/dependencies: Accessibility, privacy compliance, and robust change management as portals evolve; legal approvals.

- Why PivotRL: Reduces brittle overfitting and sustains OOD competencies necessary for dynamic government workflows.

- Ecosystem tools: verifier marketplaces and dynamic sampling

- Sector: AI tooling

- What: A “verifier marketplace” of reusable, domain-specific functional checks (tests, schema rules, LLM-judge templates), and “dynamic pivot sampling” services that maintain reward variance online (as hinted by future work on dynamic sampling).

- Assumptions/dependencies: Standardized verifier APIs; governance for LLM-judge bias; community curation and security review.

- Why PivotRL: Verifier quality is a key dependency; commoditizing verifiers lowers adoption friction across sectors.

Cross-cutting assumptions and dependencies

- Availability and quality of expert trajectories to seed turn-level training.

- Existence of robust, domain-appropriate functional verifiers; where unavailable, LLM-as-a-judge introduces bias and requires calibration and audits.

- Safe, sandboxed environments for short on-policy rollouts (tests, terminals, browsers, simulators).

- Tuning and monitoring of KL regularization, reward variance, and pivot thresholds to prevent mode collapse or overfitting.

- Data governance, privacy, and security controls for tool-using agents; sector-specific regulatory approvals (healthcare, finance, government).

- Sustained OOD retention depends on preserving probability ordering for task-unrelated actions and maintaining diverse training distributions.

Glossary

- Acceptable actions: Actions deemed valid at a given state by a task-specific verifier. "the set of locally acceptable actions under a domain-specific verifier."

- Action space: The set of all possible actions (e.g., assistant completions) available to the policy at a turn. "Since the action space of assistant turns is exponentially large,"

- Agentic: Pertaining to agents that take sequences of actions in an environment to accomplish goals. "Long-horizon agentic tasks require many turns of LLM interaction with an environment."

- Agentic coding: Software engineering tasks where an agent uses tools and code over multiple steps to resolve issues. "on agentic coding tasks, PivotRL achieves competitive accuracy"

- Behavior cloning: Supervised imitation of expert demonstrations by maximizing likelihood of expert actions. "marginal gains over standard behavior cloning on identical data"

- Binary verifier rewards: Reward signals that are strictly 0/1 based on a verifier’s pass/fail judgment. "For binary verifier rewards, larger variance means a more mixed success/failure turn,"

- Catastrophic forgetting: Loss of previously learned capabilities when fine-tuning on new tasks. "heavily mitigates catastrophic forgetting."

- Conditional distributions: Probability distributions conditioned on membership in a subset (e.g., acceptable vs. non-acceptable actions). "preserves the conditional distribution on both (i) the set of acceptable actions and (ii) its complement."

- End-to-end reinforcement learning (E2E RL): RL that rolls out full trajectories from the initial state and updates policies from the resulting returns. "end-to-end reinforcement learning (E2E RL) preserves OOD capabilities,"

- Exact string matching: Scoring actions by requiring their text to exactly match the demonstrated string. "exact string matching with the SFT data demonstration."

- Fisher geometry: The information-geometric structure induced by the Fisher information metric for defining natural gradients. "under this Fisher geometry."

- Fisher norm: The norm induced by the Fisher information metric, used to measure the size of natural gradient updates. "the Fisher norm of the natural gradient of the statewise reward objective"

- Functional-equivalent actions: Different action strings that achieve the same effect or functionality in the environment. "utilizes rewards for functional-equivalent actions rather than demanding strict string matching"

- Functional reward: A reward that credits functionally correct actions regardless of textual form. "functional reward ensures that correct but textually different actions receive credit."

- Generative action spaces: Large, structured spaces of possible generated outputs where many distinct strings can be correct. "In generative action spaces, many tool calls, shell commands, or search steps are locally acceptable"

- GRPO (Group Relative Policy Optimization): A policy optimization method that normalizes advantages across a group of rollouts at a state. "Group Relative Policy Optimization (GRPO)"

- Importance sampling weight: A ratio correcting for distribution mismatch between sampling and target policies during updates. "is the importance sampling weight,"

- Imitation suboptimality: The performance gap that arises from relying solely on imitation learning without online interaction. "offline imitation suboptimality grows quadratically with task horizon"

- KL path: The trajectory of policies parameterized by a KL-regularized exponential tilting of rewards. "the KL path"

- KL-projection: The projection that finds the closest policy (in KL divergence) satisfying constraints (e.g., increased mass on acceptable actions). "is the KL-projection of the reference policy"

- KL-regularized statewise update: A policy update that maximizes reward while penalizing deviation from a reference via KL divergence at a fixed state. "KL-regularized statewise update"

- Local RL: Reinforcement learning that performs short, targeted rollouts from intermediate states rather than full trajectories. "we consider a local RL paradigm."

- Long-horizon: Tasks requiring many sequential decisions or turns to reach an outcome. "Long-horizon agentic tasks require many turns"

- LLM-as-a-judge: Using an LLM to evaluate the quality or correctness of actions or outputs. "LLM-as-a-judge frameworks"

- Miss rate: The probability that strict matching rejects actions that a functional verifier would accept. "we define the miss rate as"

- Natural gradient: A gradient defined with respect to the Fisher information metric, providing geometry-aware updates. "denote the natural gradient of J_s"

- Negative log-likelihood: A standard supervised loss that penalizes the log probability assigned to the correct labels/actions. "The standard negative log-likelihood loss for SFT is given by"

- On-policy distillation: Distillation that uses trajectories generated by the current policy to reduce distribution shift. "on-policy distillation mitigates off-policy distribution shifts"

- On-policy rollout: Sampling actions and trajectories using the current policy during training. "many turns of on-policy rollout"

- Online data aggregation: Collecting additional on-policy data to correct covariate shift and improve generalization. "environment interaction via online data aggregation"

- Out-of-domain (OOD): Inputs or tasks outside the training distribution, often used to assess generalization. "out-of-domain (OOD) performance"

- Population GRPO score: A theoretical measure of the GRPO update magnitude computed under the population distribution. "Define the population GRPO score"

- Reference policy: The baseline policy used for KL regularization and comparison in RL objectives. "Let π₀ denote the reference policy,"

- Reward variance: The variability of rewards across sampled actions at a state, which scales the learning signal. "reward variance is not just a heuristic diagnostic:"

- Rollout budget: The compute or sample budget allocated for generating rollouts during training. "allocating rollout budgets"

- Rollout turns: The number of decision steps taken during rollouts, used as a cost/effort measure. "with 4x fewer rollout turns."

- Statewise expected reward: The expected reward computed for a fixed state over the action distribution. "statewise expected reward objective"

- Supervised fine-tuning (SFT): Post-training by maximizing likelihood on labeled demonstrations without environment interaction. "supervised fine-tuning (SFT) is compute efficient,"

- Turn-level training: Training where each action corresponds to an entire assistant turn (completion) rather than individual tokens. "In turn-level training, we extract assistant turns"

- Verifier: A programmatic or learned checker that evaluates whether an action is functionally correct. "domain-appropriate verifiers"

- Verifier-based reward: Reward assigned based on a verifier’s judgment of functional correctness instead of exact matching. "verifier-based reward"
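The GRPO and reward-variance entries above connect in a simple way: when advantages are normalized within a group of rollouts at one state, a group with uniform rewards yields no learning signal. The sketch below uses the commonly cited mean/standard-deviation normalization; the function name and the `eps` constant are assumptions for illustration, and the paper's exact objective (clipping, KL term, importance weights) is not reproduced here.

```python
import math
from typing import List

def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize each reward against the mean and std of its own rollout group."""
    n = len(rewards)
    mean = sum(rewards) / n
    std = math.sqrt(sum((r - mean) ** 2 for r in rewards) / n)
    # eps keeps the division finite when every reward in the group is identical.
    return [(r - mean) / (std + eps) for r in rewards]

uniform = group_relative_advantages([1, 1, 1, 1])  # every rollout passed the verifier
mixed = group_relative_advantages([1, 0, 1, 0])    # a mixed-outcome (pivot-like) turn

print(uniform)
print(mixed)
```

All advantages in the uniform group are zero, so such a turn contributes nothing to the update, while the mixed group produces positive advantages for passing rollouts and negative ones for failing rollouts.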
