SmartSearch: How Ranking Beats Structure for Conversational Memory Retrieval

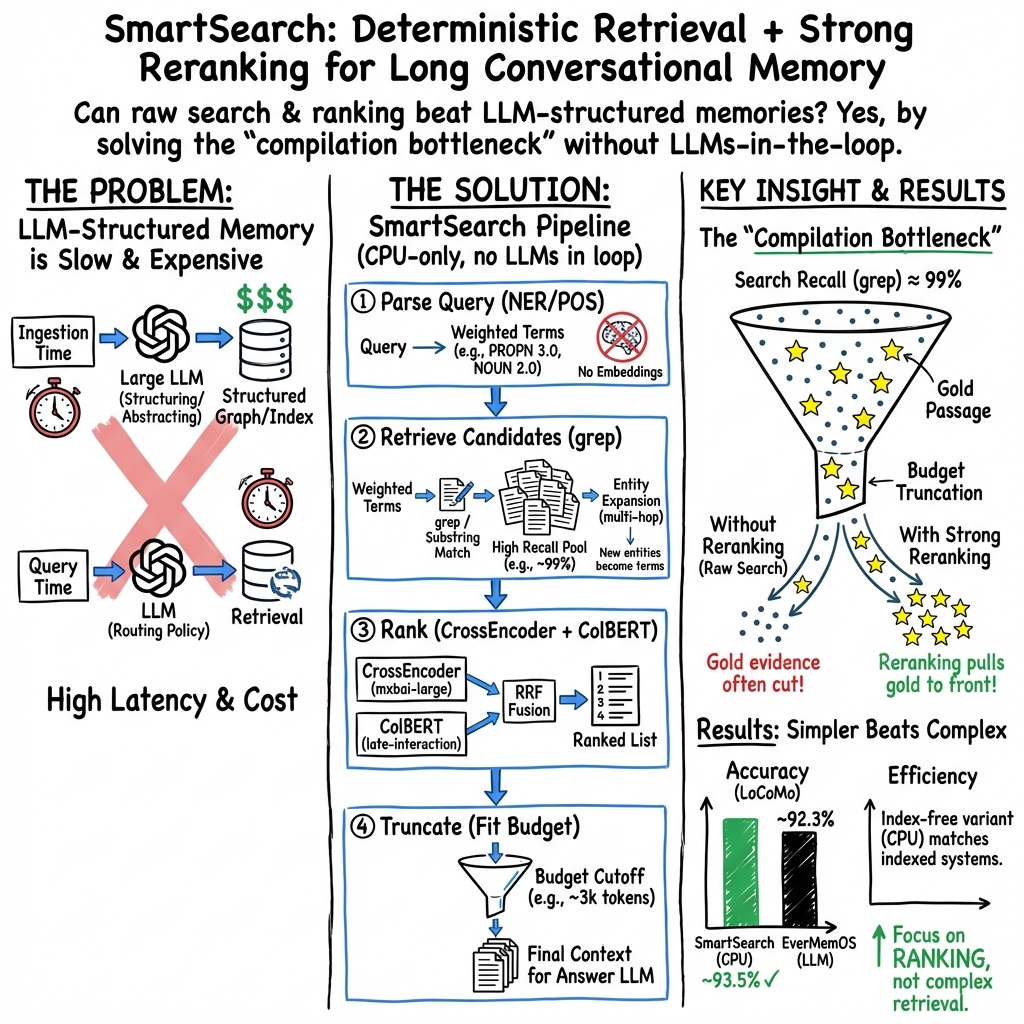

Abstract: Recent conversational memory systems invest heavily in LLM-based structuring at ingestion time and learned retrieval policies at query time. We show that neither is necessary. SmartSearch retrieves from raw, unstructured conversation history using a fully deterministic pipeline: NER-weighted substring matching for recall, rule-based entity discovery for multi-hop expansion, and a CrossEncoder+ColBERT rank fusion stage -- the only learned component -- running on CPU in ~650ms. Oracle analysis on two benchmarks identifies a compilation bottleneck: retrieval recall reaches 98.6%, but without intelligent ranking only 22.5% of gold evidence survives truncation to the token budget. With score-adaptive truncation and no per-dataset tuning, SmartSearch achieves 93.5% on LoCoMo and 88.4% on LongMemEval-S, exceeding all known memory systems under the same evaluation protocol on both benchmarks while using 8.5x fewer tokens than full-context baselines.

Explain it Like I'm 14

What is this paper about?

This paper introduces SmartSearch, a simple way for chatbots to “remember” long conversations. Instead of using big, expensive AI models to reorganize and search the chat history, SmartSearch uses fast, rule-based tools to find the right parts of the conversation and a small model to sort those parts by usefulness. The surprise: this simple approach beats many complex systems while being faster and cheaper.

What questions were the researchers trying to answer?

They focused on three easy-to-understand questions:

- Do we really need big AI models to restructure and search conversation histories, or can simple, deterministic (non-random) tools do the job?

- What actually limits accuracy: finding the right passages, or choosing which ones to show to the answering AI when there’s limited space?

- Can a lightweight system run quickly on a regular CPU (no GPU) and still perform as well as or better than more complicated methods?

How does SmartSearch work?

Think of answering a question about a long chat like being a detective with a big case file and only a small notepad. You need to find the right clues and copy the most important ones onto your notepad before you ask the expert for a verdict. SmartSearch follows four steps:

- Step 1: Understand the question with simple language tools

- It uses NER (named entity recognition) to spot names of people, places, and things, and POS (part-of-speech) tagging to label word types (like nouns and verbs).

- Analogy: It highlights “proper nouns” (like “Alice,” “New York”) and other key words so search focuses on the most important terms.

- Step 2: Find matches in the chat by exact word search

- It does fast “substring matching” (like using Ctrl+F) to find passages that contain those key words.

- If a found passage mentions a new, relevant name (a fresh clue), SmartSearch adds it and searches again. This is “multi-hop” expansion.

- It only falls back to “semantic search” (embeddings) for about 1% of tricky cases.

- Step 3: Sort the results by usefulness (reranking)

- A small model called a CrossEncoder and a compact semantic model (ColBERT) score how relevant each passage is, then combine their rankings.

- Analogy: If you found 400 possible clues, these models help put the best ones at the top of the stack.

- Step 4: Pack the best clues into a space limit

- There’s a “token budget,” like a page limit for your notepad. SmartSearch includes passages in rank order until it runs out of space.

- It can also use a “score-adaptive” method: if the top passage is extremely strong, it includes fewer total passages; if none are clearly strong, it includes more. Analogy: Decide how much to pack based on how clearly valuable the top items are.

All of this runs on a CPU in about 650 milliseconds per query, with no big AI model in the loop for searching.
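Steps 1 and 2 can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the term weights (PROPN 3.0, NOUN 2.0, VERB 1.0, +1.0 for named entities) are the ones reported for the paper, but the pre-tagged input format, the regex used as a stand-in for rule-based entity discovery, the `hop_weight` given to discovered names, and all function names are assumptions made for this example.

```python
import re

# Term weights reported for the paper; everything else here is illustrative.
POS_WEIGHTS = {"PROPN": 3.0, "NOUN": 2.0, "VERB": 1.0}
NER_BONUS = 1.0


def weight_terms(tagged_terms):
    """Map each query term to a weight.

    `tagged_terms` is a hypothetical input format: (text, pos_tag, is_entity)
    triples, as a POS/NER tagger might produce for the question.
    """
    weights = {}
    for text, pos, is_entity in tagged_terms:
        w = POS_WEIGHTS.get(pos, 0.0) + (NER_BONUS if is_entity else 0.0)
        if w > 0:
            key = text.lower()
            weights[key] = max(w, weights.get(key, 0.0))
    return weights


def substring_search(weights, passages):
    """Score each passage by the summed weight of the terms it contains."""
    hits = []
    for idx, passage in enumerate(passages):
        lowered = passage.lower()
        score = sum(w for term, w in weights.items() if term in lowered)
        if score > 0:
            hits.append((idx, score))
    return sorted(hits, key=lambda h: (-h[1], h[0]))


def discover_entities(passage, known):
    """Crude stand-in for entity discovery (assumption): capitalized words
    that are not sentence-initial and are not already search terms."""
    found = set()
    for match in re.finditer(r"(?<=[a-z,;] )[A-Z][a-z]+", passage):
        if match.group().lower() not in known:
            found.add(match.group())
    return found


def retrieve(tagged_terms, passages, max_hops=2, hop_weight=1.0):
    """Search, add newly discovered names, and search again (multi-hop)."""
    weights = weight_terms(tagged_terms)
    for _ in range(max_hops - 1):
        new_terms = set()
        for idx, _score in substring_search(weights, passages):
            new_terms |= discover_entities(passages[idx], weights)
        if not new_terms:
            break
        for term in new_terms:
            weights[term.lower()] = hop_weight  # assumed weight for new clues
    return substring_search(weights, passages)


passages = [
    "Alice said she adopted a dog named Biscuit last spring.",
    "Biscuit has a vet appointment on Friday.",
    "The weather was nice in Lisbon.",
]
# Hypothetical tagger output for the question "What did Alice adopt?"
tagged = [("Alice", "PROPN", True), ("adopt", "VERB", False)]
print(retrieve(tagged, passages))  # [(0, 6.0), (1, 1.0)]
```

In this toy run, the first hop matches only the passage that mentions Alice; the name "Biscuit" found there becomes a new search term, which is how the second passage is reached even though it shares no word with the question.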

What did they find?

Here are the main results and why they matter:

- Simple search is enough to find the right clues

- Exact word search (like Ctrl+F) could reach about 98–99% of the needed evidence in tests. This means fancy, heavy restructuring of the conversation isn’t necessary to locate the right passages.

- The real bottleneck is ranking, not retrieval

- Without smart sorting, only about 22.5% of the needed evidence fits into the space limit shown to the answer model. With reranking, the right evidence gets moved to the top and makes it into the final context. This is the key to higher accuracy.

- Strong performance on two benchmarks

- LoCoMo (shorter conversations, ~9,000 tokens): about 93.5% accuracy with around 3,100 tokens shown to the answer model—about 8.5× fewer tokens than feeding the entire conversation.

- LongMemEval-S (long conversations, ~115,000 tokens): about 88.4% accuracy with ~3,400 tokens.

- In both cases, SmartSearch matches or beats systems that do heavy pre-processing with big models.

- An “index-free” version also works

- Even a version that just uses exact word search (no fancy search index) plus simple query expansion performs very well, especially on long conversations.

- Where it shines and where it struggles

- Shines: Open-ended questions that need the “feel” of the conversation. Because SmartSearch pulls raw text (not summaries), it preserves tone and details.

- Needs work: Temporal questions (who did what, when). The clues are often found, but the final answering model sometimes struggles to piece together the timeline.
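The ranking bottleneck described above can be made concrete with a small measurement: pack passages into a token budget in rank order, then check what fraction of the gold evidence made it in. The sketch below is illustrative only. It assumes a whitespace word count as the token counter, a "stop at the first passage that does not fit" packing rule, and made-up passages and IDs; none of these come from the paper.

```python
def pack_to_budget(ranked, budget, count_tokens=lambda text: len(text.split())):
    """Keep passages in rank order until the next one would exceed the budget.

    `ranked` is a list of (passage_id, text) pairs, best first.
    The whitespace token counter is a simplifying assumption.
    """
    kept, used = [], 0
    for pid, text in ranked:
        cost = count_tokens(text)
        if used + cost > budget:
            break
        kept.append(pid)
        used += cost
    return kept


def gold_coverage(kept_ids, gold_ids):
    """Fraction of gold evidence passages that survived truncation."""
    gold = set(gold_ids)
    if not gold:
        return 1.0
    return len(gold & set(kept_ids)) / len(gold)


texts = {
    "p1": "small talk about the weather today",
    "p2": "more chatter about lunch plans",
    "p3": "Alice adopted Biscuit in March",
}
gold = {"p3"}

# Same retrieved set, two different orderings.
retrieval_order = [(pid, texts[pid]) for pid in ("p1", "p2", "p3")]
reranked_order = [(pid, texts[pid]) for pid in ("p3", "p1", "p2")]

print(gold_coverage(pack_to_budget(retrieval_order, budget=8), gold))  # 0.0
print(gold_coverage(pack_to_budget(reranked_order, budget=8), gold))   # 1.0
```

Both orderings contain the gold passage, so retrieval recall is perfect in each case; only the ordering decides whether the evidence survives the budget.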

Why does this matter?

- Faster and cheaper

- No big model calls during search, no GPU required. This means less cost and lower latency.

- Simpler systems can be better

- You don’t need to restructure the whole conversation with an expensive AI to get great results. Deterministic tools plus a small reranker can outperform more complicated setups.

- Smarter use of space

- Since the main problem is fitting the right passages into a small space (“token budget”), focusing on ranking and clever packing (score-adaptive truncation) gives big gains.

- Scales from short to long conversations

- The same general setup works across very different conversation lengths, and the adaptive packing helps adjust automatically.

Final takeaway

SmartSearch shows that for helping chatbots remember long chats, simple search-plus-sort beats heavy, complex memory engineering. Exact matches find almost everything you need; the real trick is ranking the results so the most useful evidence fits into the limited space shown to the answer model. This approach is fast, cheap, and competitive with the best systems—making it a practical choice for building more reliable, long-term conversational assistants.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a consolidated list of concrete gaps and open issues left unresolved by the paper that future work can address:

- Generalization beyond current benchmarks:

- Validate SmartSearch on real-world, noisy, multi-speaker conversational logs (e.g., ASR transcripts, chat platforms) where entity mentions are inconsistent (nicknames, typos, pronouns) and language is less keyword-rich than LoCoMo or structured sessions in LongMemEval-S.

- Extend to multilingual and code-switched conversations and assess robustness of spaCy NER/POS (English-only in this work).

- Reliance on NER/POS for term weighting:

- Quantify sensitivity to the choice and quality of NER/POS taggers (e.g., spaCy small vs. large vs. alternative taggers) and to tagging errors.

- Explore whether the fixed weights (PROPN 3.0, NOUN 2.0, VERB 1.0, +1.0 NER bonus) are optimal across domains; develop principled or learned weighting schemes and evaluate transfer.

- Missing coreference and anaphora handling:

- Incorporate coreference resolution so pronouns and nominal mentions can retrieve relevant passages when named entities are absent.

- Evaluate the impact of coreference-aware expansion vs. current entity discovery on both short and long conversations.

- Handling surface-form variation and noise:

- Investigate robust fuzzy or phonetic string matching (e.g., edit distance, char n-grams) gated by POS/NER to mitigate typos, inflections, and paraphrases without over-retrieving.

- Revisit morphological normalization with stricter constraints (e.g., POS-consistent lemmatization, whitelist-based) to avoid the noise observed with naive expansion.

- Query expansion design and error control:

- Analyze failure modes of PRF and entity discovery (e.g., topic drift, error cascading across hops) and design mechanisms to bound expansion-induced noise.

- Develop confidence-based or learned expansion policies that decide when to expand and which terms to include per query.

- Multi-hop retrieval at scale:

- Derive oracle traces for LongMemEval-S (or comparable long-context datasets) to quantify hop distribution and validate the “97% single-hop” finding beyond LoCoMo.

- Investigate more complex multi-hop tasks (beyond 2–3 hops) and whether deterministic expansion suffices.

- Reranking training and domain fit:

- Assess domain mismatch of MS MARCO–trained CrossEncoders for conversational memory and measure gains from fine-tuning on conversation-specific passage ranking datasets.

- Explore listwise reranking approaches (as suggested by cited work) and compare against pointwise CrossEncoder + RRF in this setting.

- Score fusion and calibration:

- Examine why offline proxies predicted three-way RRF gains that did not materialize online; develop better fusion diagnostics and training for calibrated combinations (e.g., learned score fusion).

- Calibrate CrossEncoder scores across queries/domains to stabilize score-adaptive truncation thresholds.

- Truncation policy robustness:

- Test whether the proposed score-adaptive truncation (fractional threshold τ = α·max score, top-K preselection) generalizes to different answer LLMs and domains given potential CE score calibration shifts.

- Investigate alternative query-adaptive budget allocation (e.g., uncertainty-aware pruning, reinforcement learning for budget control) and compare across corpora.

- Compilation bottleneck mitigation:

- Implement and evaluate the proposed passage deduplication/merging and query-conditioned compression to verify predicted gains (e.g., gold-preserving 10% token reduction) and quantify impact on accuracy and latency.

- Explore structure-preserving compression for temporal/chronological information to aid downstream reasoning.

- Temporal reasoning gap:

- Add lightweight temporal normalization/indexing (e.g., timeline construction, date normalization, session ordering cues) to retrieval and measure whether it closes the ~7–10 pp gap on temporal questions.

- Conduct controlled studies to disentangle retrieval vs. answer-LLM inference errors on temporal tasks and test prompts/tools (e.g., explicit temporal reasoning instructions) that reduce inference failures.

- Scalability and systems performance:

- Characterize time/space complexity and throughput of grep-based retrieval as corpus size grows beyond ~115k tokens to millions, and identify breakpoints where inverted or dense indices become necessary.

- Provide hardware-dependent latency/throughput curves (CPU models, cores, memory) and profile bottlenecks under concurrent load.

- Index-free vs. indexed tradeoffs:

- Define decision criteria for when to enable dense retrieval fallback, and evaluate its marginal utility across domains and query types (beyond the reported ~1% cases).

- Analyze recall/precision and latency-cost tradeoffs when mixing grep with lightweight indices (e.g., suffix arrays, compressed suffix tries) that preserve determinism but scale better.

- Passage segmentation and granularity:

- Specify and ablate passage chunking strategies (per-turn vs. sliding windows vs. semantic segments) to quantify effects on candidate ranks, token budgets, and gold coverage.

- Study how segmentation interacts with deduplication and compression.

- Robustness to adversarial/ambiguous content:

- Evaluate performance with injected distractors, contradictory updates, or style shifts to test the system’s resilience to noise and content drift.

- Measure stability under frequent knowledge updates and deletions (e.g., back-and-forth user claims), especially for knowledge-update and temporal categories.

- Cross-domain and modality generalization:

- Test on non-conversational long-context tasks (documents, code bases, forums) to assess portability of deterministic retrieval and ranking.

- Explore multi-modal settings where conversations include images or audio; define retrieval strategies for non-text artifacts.

- Evaluation methodology:

- Complement LLM-judge metrics with human evaluation and report inter-annotator agreement to mitigate judge variance confounds; establish a standardized protocol for cross-paper comparability.

- Release consistent evaluation scripts/prompts and, where licensing permits, gold passage annotations for long-context datasets to enable oracle analysis beyond LoCoMo.

- Reproducibility and deployment details:

- Disclose full implementation details (hardware specs for the 650 ms latency, code, configs, segmenters, exact weights, and prompts) and release code/models to facilitate replication.

- Quantify compute and energy costs per query relative to LLM-heavy baselines to substantiate the claimed efficiency benefits.

- Edge and constrained environments:

- Assess feasibility of running the 435M CrossEncoder and ColBERT on resource-constrained devices (mobile/edge) or with quantization; characterize accuracy–latency–memory tradeoffs.

- Security and privacy considerations:

- Analyze potential for data leakage or privacy risks when operating directly on raw conversation logs and propose mechanisms (redaction, on-device processing) to mitigate them.

- Failure case taxonomy:

- Provide a systematic error analysis across categories (not only temporal) to identify patterns where ranking, expansion, or parsing fails, and derive targeted remedies.

Practical Applications

Overview

The paper introduces SmartSearch, a deterministic, CPU-friendly retrieval-and-ranking pipeline for long conversational memory. It replaces heavy LLM-based ingestion and query-time orchestration with:

- NER/POS-weighted substring matching for high-recall retrieval

- Rule-based entity discovery for multi-hop expansion

- A lightweight, CPU-run CrossEncoder (+ optional ColBERT fusion) for ranking

- Score-adaptive truncation to fit ranked evidence into an LLM token budget

This yields near-oracle recall, strong accuracy on long-context benchmarks, and 6–9× token savings versus full-context baselines—without GPUs or embedding-heavy indices. Below are practical applications and the conditions under which they are feasible.
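The rank fusion step in the list above combines the CrossEncoder and ColBERT orderings with Reciprocal Rank Fusion. The following is a minimal sketch of standard weighted RRF, not the paper's exact configuration: the smoothing constant `k=60` is the commonly used default, and the equal weights and passage IDs are assumptions for illustration.

```python
def reciprocal_rank_fusion(rankings, k=60, weights=None):
    """Fuse several rankings of passage IDs into one list, best first.

    Each passage receives sum_i w_i / (k + rank_i), where rank_i is its
    1-based position in ranking i. Passages missing from a ranking simply
    get no contribution from it. `k` and `weights` are assumed values.
    """
    if weights is None:
        weights = [1.0] * len(rankings)
    fused = {}
    for ranking, w in zip(rankings, weights):
        for rank, pid in enumerate(ranking, start=1):
            fused[pid] = fused.get(pid, 0.0) + w / (k + rank)
    # Sort by fused score, breaking ties by ID for a deterministic order.
    return sorted(fused, key=lambda pid: (-fused[pid], pid))


cross_encoder_rank = ["a", "b", "c"]  # hypothetical CrossEncoder ordering
colbert_rank = ["c", "a", "d"]        # hypothetical ColBERT ordering

print(reciprocal_rank_fusion([cross_encoder_rank, colbert_rank]))
# ['a', 'c', 'b', 'd']
```

Because RRF uses only rank positions, it needs no calibration between the two models' raw score scales, which is one reason it is a common choice for combining heterogeneous rankers.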

Immediate Applications

The following can be deployed with today’s tools (e.g., spaCy, grep, mxbai CrossEncoder, ColBERT), on CPU, and with minimal or no indexing.

- Customer support and CRM assistants that “remember” long histories

- Sectors: software, e-commerce, telecom, finance

- What: Retrieve salient passages from months of tickets/chats/CRM logs to answer user queries with minimal tokens and low latency.

- Tools/workflows:

- NER-weighted grep over conversation logs; optional entity expansion

- CPU CrossEncoder + (optional) ColBERT rank fusion microservice

- Score-adaptive truncation to control LLM costs

- Integrate as a retriever in LangChain/LlamaIndex or as a REST microservice

- Assumptions/dependencies:

- Consistent entity mentions (names, account IDs); English or strong NER model for the target language

- Data connectors and access controls to CRM/help desk systems

- Works best up to medium-scale corpora; very large archives may need inverted indices

- Enterprise meeting/email assistants with long-term memory

- Sectors: productivity software, enterprise IT

- What: Summarize and answer questions across months of emails, chats, and meeting transcripts using CPU-only retrieval.

- Tools/workflows: Connectors to O365/Google Workspace/Slack; NER-based grep; CE+RRF ranking; adaptive truncation

- Assumptions/dependencies: Org permissioning and PII handling; mixed-quality transcripts may reduce substring match effectiveness

- On-device personal knowledge assistants (privacy-preserving)

- Sectors: daily life, mobile/desktop software

- What: Local retrieval over notes, journals, and messages without embeddings or cloud calls; fast, offline “memory.”

- Tools/workflows: Local grep; small NER models (spaCy or device-optimized); CPU CE reranker; minimal UI

- Assumptions/dependencies: Device CPU budget; small-to-medium corpus; per-user LLMs and NER quality

- Healthcare clinician-facing retrieval over longitudinal EHR notes

- Sectors: healthcare

- What: Retrieve prior visits, meds, and lab narratives quickly for clinical questions while keeping data on-prem.

- Tools/workflows: scispaCy (clinical NER), grep over encounter notes, CPU CE reranker; adaptive truncation to fit a clinical LLM's context window

- Assumptions/dependencies: HIPAA/GDPR compliance, domain NER customization, careful evaluation for clinical safety; temporal reasoning is a known weak point (supplement with time metadata)

- Legal and compliance e-discovery over communications

- Sectors: legal, finance, enterprise compliance

- What: Quickly surface relevant passages from Slack/email/chat for audits or investigations without building/storing large embedding indices.

- Tools/workflows: Air-gapped CPU retriever (grep + CE); entity expansion for people/orgs; audit trail logging; export-ranked-evidence workflow

- Assumptions/dependencies: Very large corpora may require inverted indices; multilingual corpora require local NER models; deduplication helps reduce redundancy

- Developer productivity: retrieve PR, issue, and design history

- Sectors: software/devtools

- What: Answer questions spanning many PRs/issues/design docs; cut LLM tokens for repo or org memory.

- Tools/workflows: Grep over markdown/issues; NER for entities (repos, services); CE reranker; optional code-aware rankers later

- Assumptions/dependencies: Code-specific NER limited; informal references reduce exact-match efficacy; scale control via per-repo scoping

- Knowledge base and forum search with low-cost RAG

- Sectors: education, software support, communities

- What: Retrieve exact Q&A/forum passages with deterministic, explainable matching and CE reranking for better answer grounding.

- Tools/workflows: KB/forum dump; NER-weighting; CE ranking; budgeted compilation to LLM

- Assumptions/dependencies: Works best where posts contain explicit entity mentions; multilingual requires extra NER

- Public-sector citizen-support agents with offline/CPU retrieval

- Sectors: government services

- What: Recall citizen history across interactions securely and cost-effectively without GPU infrastructure.

- Tools/workflows: On-prem CPU service; grep + CE; adaptive truncation; auditability

- Assumptions/dependencies: Data retention rules; multilingual needs; privacy constraints

- Cost- and energy-reduction retrofits for existing RAG systems

- Sectors: cross-industry policy/ops

- What: Replace embedding-heavy retrieval with NER-weighted grep + CE ranking to lower storage, inference, and token costs.

- Tools/workflows: Drop vector indices where feasible; add score-adaptive truncation module; CPU-only deployment

- Assumptions/dependencies: Acceptable accuracy on target domain with exact-match dominant queries; fallbacks for vocabulary gaps (small dense model)

- “SmartSearch Retriever” plugin for LLM frameworks

- Sectors: software tooling

- What: A packaged retriever module with NER parsing, grep retrieval, CE/RRF ranking, and adaptive truncation.

- Tools/workflows: Plugins/extensions for LangChain/LlamaIndex; containerized microservice; observability dashboards

- Assumptions/dependencies: Availability of compatible CE model weights; licensing constraints for commercial use

- Oracle-based observability for RAG pipelines

- Sectors: ML Ops, academia

- What: Use oracle-trace style analysis to diagnose whether failures stem from retrieval or ranking (“compilation bottleneck”).

- Tools/workflows: Offline evaluation script that approximates gold-evidence coverage; Dijkstra-based shortest-path retrieval traces; reranker A/B tests

- Assumptions/dependencies: Requires gold evidence labels or high-quality proxies; adds evaluation compute offline, not at query time

- Score-adaptive truncation as a drop-in module

- Sectors: software

- What: Dynamic token budget allocation driven by cross-encoder scores; improves worst-case recall across corpora without per-dataset tuning.

- Tools/workflows: Top-K preselection; fractional threshold τ = α·max score; conservative fallback

- Assumptions/dependencies: Needs a CE scoring stage; careful setting of α for desired token/recall trade-offs

- Privacy-first enterprise deployments without embedding storage

- Sectors: security, enterprise IT

- What: Simplify data governance by eliminating persistent embedding indices and relying on raw text + deterministic retrieval.

- Tools/workflows: Text-store access controls; audit logging; ephemeral candidate sets; on-prem CPU services

- Assumptions/dependencies: Organizations accept grep/substring workloads; proper retention/deletion policies for raw logs
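The score-adaptive truncation module listed above (top-K preselection, fractional threshold τ = α·max score, conservative fallback) can be sketched as follows. This is an illustration under stated assumptions rather than the paper's implementation: the values of `alpha`, `top_k`, and `min_keep`, the requirement that scores be positive, and the specific fallback rule (keep the whole top-K when the best score is not positive) are all choices made for this example.

```python
def score_adaptive_truncate(scored, alpha=0.5, top_k=20, min_keep=1):
    """Keep passages whose reranker score is at least alpha * (best score).

    `scored` is a list of (passage_id, score) pairs in any order.
    Returns the kept passage IDs, best first.
    """
    ranked = sorted(scored, key=lambda item: -item[1])[:top_k]
    if not ranked:
        return []
    max_score = ranked[0][1]
    if max_score <= 0:
        # Assumed conservative fallback: a fractional threshold is not
        # meaningful here, so keep the full top-K instead of pruning.
        return [pid for pid, _ in ranked]
    tau = alpha * max_score
    kept = [pid for pid, score in ranked if score >= tau]
    if len(kept) < min_keep:
        kept = [pid for pid, _ in ranked[:min_keep]]
    return kept


# One clearly dominant passage: the threshold is high, so few are kept.
confident = [("a", 0.95), ("b", 0.30), ("c", 0.20), ("d", 0.10)]
# No clear winner: the threshold is low, so more passages are kept.
uncertain = [("a", 0.40), ("b", 0.35), ("c", 0.30), ("d", 0.10)]

print(score_adaptive_truncate(confident))  # ['a']
print(score_adaptive_truncate(uncertain))  # ['a', 'b', 'c']
```

In practice the kept list would still be packed against the token budget; the threshold only decides how many candidates are worth packing for a given query.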

Long-Term Applications

These require additional research, scaling, domain adaptation, or regulatory clearance.

- Multilingual and informal-text robustness

- Sectors: cross-industry, public sector, consumer

- What: Extend NER-weighted retrieval to low-resource languages, code-switching, nicknames, and slang.

- Tools/workflows: Train/adapt NER models; lightweight morphological/phonetic expansion; small dense fallback tuned per language

- Assumptions/dependencies: Availability of high-quality multilingual NER; evaluation datasets; privacy for training data

- Scaling to web- or enterprise-scale corpora

- Sectors: enterprise search, legal/compliance, finance

- What: Hybridize with inverted indices or sharded grep to handle 10⁸–10⁹ tokens while retaining deterministic behavior.

- Tools/workflows: Index-backed AND/OR retrieval, tiered storage, distributed CE reranking, passage deduplication before ranking

- Assumptions/dependencies: Engineering effort for distributed search; latency budgets; memory constraints

- Time-aware retrieval and reasoning

- Sectors: healthcare, finance, operations

- What: Incorporate time normalization and timeline-aware ranking to close the temporal reasoning gap identified in the paper.

- Tools/workflows: Temporal NER/normalization; time-aware features in CE; session diversity priors; timeline construction before truncation

- Assumptions/dependencies: Annotated temporal corpora; careful evaluation to avoid hallucinated orderings

- Regulatory-grade clinical assistants

- Sectors: healthcare

- What: Longitudinal retrieval for clinical decision support with validated safety and bias controls.

- Tools/workflows: Domain-adapted NER; curated clinical rankers; robust audit trails; integration with HL7/FHIR; model governance

- Assumptions/dependencies: FDA/CE regulatory pathways; prospective studies; hospital IT integration

- Litigation-scale e-discovery and investigations

- Sectors: legal, finance

- What: Handle millions of documents and multi-year communications with near-real-time ranking and strong provenance.

- Tools/workflows: Distributed retrieval; aggressive passage deduplication and compression; listwise ranking; explainability tooling

- Assumptions/dependencies: Compute and storage budgets; multilingual/cross-format ingestion (PDFs, scans)

- Human-robot interaction (HRI) agents with persistent conversational memory

- Sectors: robotics, consumer electronics

- What: Robots that recall user preferences safely and locally, using CPU-only retrieval with minimal energy draw.

- Tools/workflows: ASR to text; on-device retriever; latency-aware truncation; fallbacks for speech disfluencies

- Assumptions/dependencies: Robust speech-to-text; far-field noise; privacy expectations

- On-device mobile assistants across apps

- Sectors: consumer, mobile OS

- What: Cross-app memory with local retrieval/ranking and tight token budgets (battery- and privacy-friendly).

- Tools/workflows: OS-level permissions; app-scoped stores; model quantization; incremental sync

- Assumptions/dependencies: Platform APIs; model size constraints; user consent UX

- Standardization and policy for low-carbon, low-cost retrieval

- Sectors: policy, sustainability, procurement

- What: Guidelines favoring CPU-first retrieval, token budgeting, and data-minimizing pipelines for public deployments.

- Tools/workflows: Carbon and cost benchmarks; procurement templates; compliance checklists; audit-ready logs

- Assumptions/dependencies: Stakeholder buy-in; standardized metrics for energy and accuracy

- Passage deduplication and context compression toolkits

- Sectors: software, academia

- What: Open-source modules to remove redundancy and compress context while preserving gold evidence.

- Tools/workflows: Similarity from CE/ColBERT; near-duplicate clustering; query-conditioned pruning

- Assumptions/dependencies: Strong evaluation to prevent evidence loss; task-specific tuning

- Education: semester-long tutoring with durable memory

- Sectors: education

- What: Tutors that recall student progress across sessions and courses, including multi-session queries.

- Tools/workflows: LMS connectors; student-data privacy; session diversity heuristics; parent/teacher oversight

- Assumptions/dependencies: Consent and FERPA/GDPR compliance; adaptation to varied writing styles and languages

- Real-time trading/comms monitoring for compliance

- Sectors: finance

- What: Continuous retrieval/ranking over streaming chats/emails for early risk signals with explainable matches.

- Tools/workflows: Incremental corpora updates; low-latency ranking; event/entity watchlists; audit dashboards

- Assumptions/dependencies: High-throughput pipelines; strict false-positive/negative tolerances

Notes on Feasibility and Dependencies

- Language and NER quality: The approach benefits from consistent named entities and strong NER/POS tagging; performance may degrade with noisy or multilingual text without adaptation.

- Corpus scale: Index-free grep is effective up to medium-scale corpora; very large deployments should add inverted indices or hybrid retrieval.

- Temporal reasoning: Identified as a weakness; augment with temporal normalization and time-aware ranking for time-critical domains.

- Privacy and governance: Eliminating embedding indices simplifies deletion and reduces data sprawl; ensure raw-text retention policies and access control are in place.

- Compute budgets: Designed for CPU and ~650 ms latency per query; on-device variants require model size/latency optimization and quantization.

- Licensing: Verify licenses for spaCy models, rerankers (e.g., mxbai), and any embeddings (if used as fallback).

Glossary

- BM25: A probabilistic information retrieval ranking function that uses term frequency and inverse document frequency to score documents. "BM25~\citep{robertson2009} provides probabilistic ranking and is the standard for keyword retrieval."

- Catastrophic forgetting: The tendency of a model to lose previously learned knowledge when trained on new data, unless regularized. "prevent catastrophic forgetting~\citep{kirkpatrick2017}."

- ColBERT: A late-interaction dense retrieval model that computes fine-grained token-level similarities between queries and documents. "fused with ColBERT~\citep{khattab2020} via Reciprocal Rank Fusion (RRF)"

- Compilation bottleneck: A limitation where relevant evidence is found but not surfaced due to truncation/ranking before the LLM, making ranking the core bottleneck. "Oracle analysis on two benchmarks identifies a compilation bottleneck:"

- CrossEncoder: A reranking model that jointly encodes the query and candidate passage to score their relevance. "The only ML component is a CrossEncoder reranker (mxbai-rerank-large-v1, 435M parameters, DeBERTaV3)"

- DeBERTaV3: An encoder architecture variant used in high-quality rerankers. "(mxbai-rerank-large-v1, 435M parameters, DeBERTaV3)"

- Dense retrieval: Retrieval based on learned embeddings to capture semantic similarity beyond exact term matches. "Dense retrieval: Embedding-based methods such as DPR~\citep{karpukhin2020} and ColBERT~\citep{khattab2020} bridge vocabulary gaps but are slower and require pre-computed indices."

- DPR: Dense Passage Retrieval; a dual-encoder approach for retrieving passages using dense embeddings. "such as DPR~\citep{karpukhin2020}"

- Entity discovery: Extracting new named entities from retrieved text to expand subsequent searches. "rule-based entity discovery for multi-hop expansion"

- Gold evidence: Labeled ground-truth passages that contain the necessary information to answer a question. "only 22.5\% of gold evidence survives truncation to the token budget."

- IDF: Inverse Document Frequency; a measure of how informative a term is across a corpus, used in weighting schemes like BM25. "BM25's corpus-statistical weighting (IDF)"

- Index-free: Operating without precomputed indices (e.g., inverted or embedding indices), often by searching raw text directly. "an index-free variant that drops all precomputed indices (Section~\ref{sec:expansion})"

- J-score: A binary LLM-judge evaluation metric indicating whether an answer is correct. "binary J-score"

- Late-interaction scoring: A retrieval scoring approach where token-level interactions are computed at scoring time rather than during encoding. "Late-interaction scoring~\citep{khattab2020}."

- Listwise ranking: Ranking that optimizes over the entire set of candidates jointly, rather than individual pairs. "Listwise ranking: Recent work~\citep{li2026} shows that ranking across entire candidate sets (listwise) outperforms pointwise ranking for long-context dialogue."

- LoCoMo: A benchmark for conversational memory with long dialogues and diverse question types. "On the LoCoMo benchmark, this architecture achieves 91.9\% accuracy"

- LongMemEval-S: A benchmark featuring very long conversation histories for evaluating memory systems. "LongMemEval-S~\citep{wu2024longmemeval}"

- MS MARCO: A large-scale passage ranking dataset commonly used to train rerankers. "fine-tuned on MS~MARCO~\citep{nguyen2016}."

- Multi-hop: Retrieval or reasoning that requires chaining information across multiple steps or passages. "Multi-hop reasoning has been studied extensively in open-domain QA."

- Named Entity Recognition (NER): Identifying spans in text that mention entities like people, organizations, or locations. "SpaCy NER/POS tagging extracts and weights search terms directly from the query."

- Oracle analysis: An evaluation method using gold evidence to derive the optimal retrieval trace for each query. "Oracle analysis (Section~\ref{sec:oracle}) confirms this suffices:"

- Pointwise scoring: Ranking approach where each query-document pair is scored independently. "Pointwise scoring via Sentence-Transformers~\citep{reimers2019}."

- POS tagging: Part-of-speech tagging; labeling words by their syntactic category (e.g., noun, verb). "SpaCy en_core_web_sm~\citep{honnibal2020} extracts terms via POS tagging and NER."

- Pseudo-relevance feedback (PRF): Expanding a query using terms from top-ranked initial results to improve recall. "Pseudo-relevance feedback (PRF): After main search hops, a PRF hop extracts frequent content words"

- RAG: Retrieval-Augmented Generation; combining retrieved evidence with generation by an LLM. "RAG~\citep{lewis2020} established the paradigm of augmenting LLMs with retrieved evidence."

- Reciprocal Rank Fusion (RRF): A method to combine multiple rankings by summing reciprocal ranks with weights. "RRF: "

- Reranking: Reordering an initial set of retrieved candidates using a more accurate but costlier model. "Cross-encoder reranking: Models like ms-marco-MiniLM-L-12-v2~\citep{reimers2019} score query-document pairs directly"

- Score-adaptive truncation: Pruning candidates based on scores relative to the top-scoring item to fit within a token budget. "With score-adaptive truncation and no per-dataset tuning, SmartSearch achieves 93.5\% on LoCoMo and 88.4\% on LongMemEval-S"

- Spearman rho: A nonparametric measure of rank correlation between two variables. "Spearman $\rho$ of 0.19--0.62 with the CrossEncoder, indicating complementary failure modes."

- Temporal reasoning: Reasoning that requires understanding the ordering and timing of events. "Temporal Reasoning Remains the Main Gap"

- Token budget: The maximum number of tokens allowed in the context passed to the LLM. "before token-budget truncation"

- Top-K: Selecting the K highest-scoring items from a ranked list. "after a top-$K$ pre-selection step (e.g., by RRF score)"
