Ghosts of Softmax: Complex Singularities That Limit Safe Step Sizes in Cross-Entropy

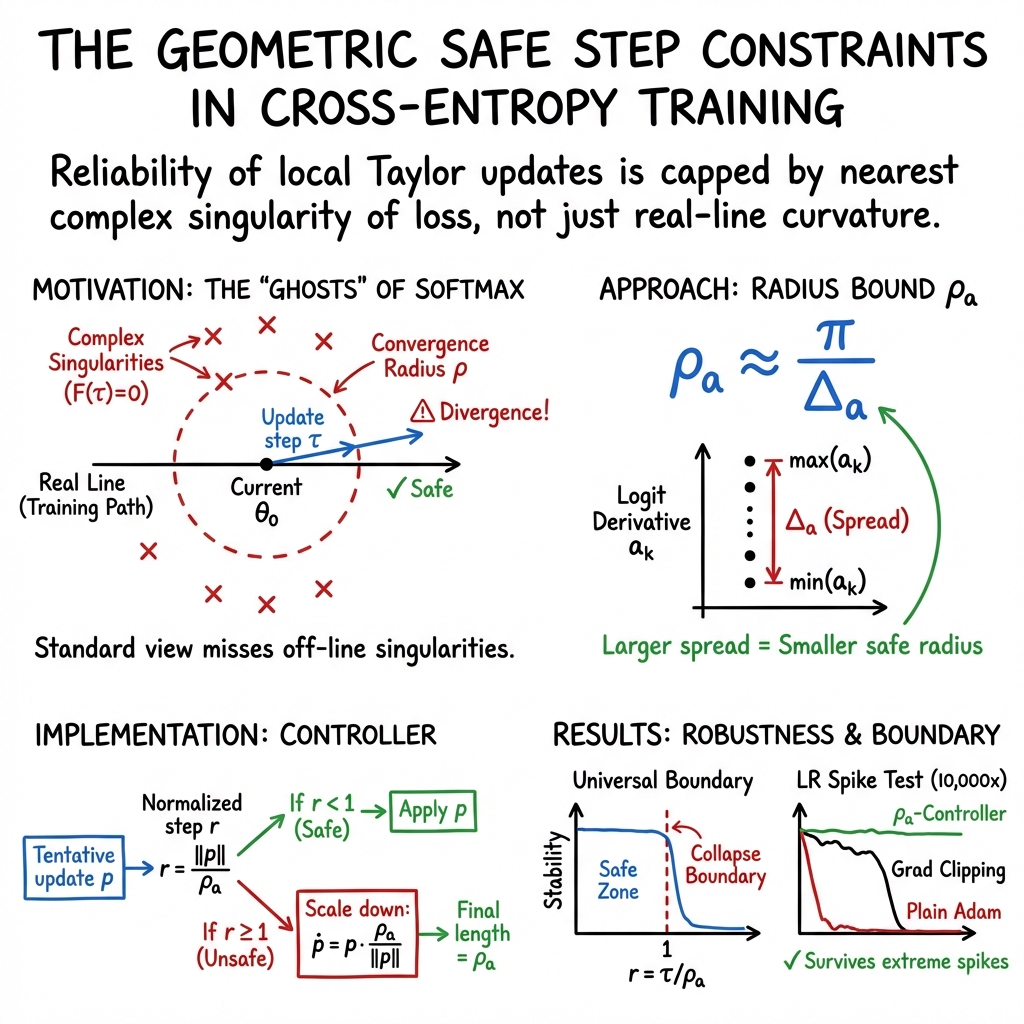

Abstract: Optimization analyses for cross-entropy training rely on local Taylor models of the loss to predict whether a proposed step will decrease the objective. These surrogates are reliable only inside the Taylor convergence radius of the true loss along the update direction. That radius is set not by real-line curvature alone but by the nearest complex singularity. For cross-entropy, the softmax partition function $F=\sum_j \exp(z_j)$ has complex zeros (“ghosts of softmax”) that induce logarithmic singularities in the loss and cap this radius. To make this geometry usable, we derive closed-form expressions under logit linearization along the proposed update direction. In the binary case, the exact radius is $\rho^*=\sqrt{\delta^2+\pi^2}/\Delta_a$, where $\delta$ is the logit gap between the two classes. In the multiclass case, we obtain the lower bound $\rho_a=\pi/\Delta_a$, where $\Delta_a=\max_k a_k-\min_k a_k$ is the spread of directional logit derivatives $a_k=\nabla z_k\cdot v$. This bound costs one Jacobian–vector product and reveals what makes a step fragile: samples that are both near a decision flip and highly sensitive to the proposed direction tighten the radius. The normalized step size $r=\tau/\rho_a$, where $\tau$ is the step length, separates safe from dangerous updates. Across six tested architectures and multiple step directions, no model fails for $r<1$, yet collapse appears once $r\ge 1$. Temperature scaling confirms the mechanism: normalizing by $\rho_a$ shrinks the onset-threshold spread from a standard deviation of $0.992$ to $0.164$. A controller that enforces $\tau\le\rho_a$ survives learning-rate spikes up to $10{,}000\times$ in our tests, where gradient clipping still collapses. Together, these results identify a geometric constraint on cross-entropy optimization that operates through Taylor convergence rather than Hessian curvature.
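The two closed forms stated in the abstract can be reconstructed in a few lines. Assume logit linearization along a unit direction $v$, so that $z_k(t) = z_k + a_k t$ and the step $\theta + t v$ has length $|t| = \tau$. The per-sample loss for label $y$ is then $-z_y(t) + \log F(t)$ with $F(t) = \sum_k e^{z_k + a_k t}$. The linear term is entire, so only zeros of $F$ can cap the radius. The derivation below is our own sketch under that assumption. It is not necessarily the paper's proof.

```latex
% Binary case: \delta = z_1 - z_2, \; d = a_1 - a_2, \; \Delta_a = |d|
\begin{aligned}
F(t) &= e^{z_2 + a_2 t}\bigl(1 + e^{\delta + d t}\bigr) = 0
  \iff \delta + d\,t = i\pi(2m+1),\quad m \in \mathbb{Z},\\
t_m &= \frac{i\pi(2m+1) - \delta}{d},
  \qquad \min_m |t_m| = \frac{\sqrt{\delta^2 + \pi^2}}{\Delta_a} = \rho^*.
\end{aligned}

% Multiclass lower bound: t = x + iy, \; b_k = a_k - \min_j a_j \in [0, \Delta_a]
\begin{aligned}
e^{-t \min_j a_j} F(t) &= \sum_k e^{z_k + b_k x}\, e^{i b_k y},
  \qquad b_k y \in [\min(0, \Delta_a y), \max(0, \Delta_a y)],\\
|t| < \frac{\pi}{\Delta_a} &\implies |y|\,\Delta_a < \pi
  \implies \text{all terms lie in an open half-plane}
  \implies F(t) \neq 0 .
\end{aligned}
```

Hence $F$ has no zeros in the disk $|t| < \pi/\Delta_a$, so the convergence radius is at least $\rho_a = \pi/\Delta_a$. In the binary case with $\delta = 0$, the bound is attained.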


Explain it Like I'm 14

What this paper is about (in plain words)

Training a neural network is like walking downhill to reach the lowest point of a landscape (the “loss”). Most optimizers decide how far to step using a local sketch of the landscape (a line or a gentle curve drawn near your feet). This paper shows there’s a hidden, geometry-based limit on how far that local sketch can be trusted. For the popular cross-entropy loss (used with softmax), that limit is set by “ghosts” — invisible blockers that live in the math world of complex numbers. If your step is too big and crosses this limit, your local sketch stops matching the real landscape, and training can suddenly blow up.

The authors explain this limit, show how to estimate it cheaply for any step direction, and demonstrate that keeping steps within this “safe radius” dramatically improves training stability.

The big questions the paper asks

- Why do training runs with cross-entropy sometimes fail suddenly, even when the learning rate seemed fine before?

- Can we compute a simple, architecture-agnostic number that says “this step is safe” vs “this step is risky”?

- Can that number be used to control step sizes and prevent collapses — even under huge, sudden learning-rate spikes?

How they approached it (everyday explanation)

Think of three ideas:

- A local sketch only works nearby: When we use a Taylor approximation (a line or a curve made from derivatives) to predict what will happen after a step, that sketch is only trustworthy within a certain “radius” around the current point. Outside that radius, the sketch can mislead you badly, no matter how many terms you add.

- The “ghosts of softmax” set the radius: Cross-entropy uses softmax, which turns raw scores (called logits) into probabilities using exponentials. The key object is the partition function:

$$F = \sum_j \exp(z_j)$$

For real steps, $F$ is a sum of positive exponentials, so it is always positive. But if you look in the complex-number world (mathematicians do this to understand how series behave), $F$ has zeros. Those zeros act like invisible blockers (“ghosts”) that cap how far your Taylor sketch converges. The reason is that the loss contains $\log F$, and the logarithm breaks down wherever $F = 0$. If your step is longer than the distance to the nearest ghost, the sketch can fail.

- A simple, computable bound on the safe step

Directly finding these ghosts is hard, but the authors find a practical lower bound by linearizing how each logit changes along your intended step direction:

- Imagine asking: “If I nudge parameters a tiny bit this way, how fast does each class score go up or down?” Call these rates $a_k$ (formally, $a_k = \nabla z_k \cdot v$ for step direction $v$).

- Measure the spread of these rates: $\Delta_a = \max_k a_k - \min_k a_k$ (the rate of the fastest-rising score minus the rate of the fastest-falling one).

- Then a conservative “safe radius” is $\rho_a = \pi / \Delta_a$.

This is cheap to compute (one Jacobian–vector product — think “one extra forward-like pass that asks how outputs change if I move a tiny bit in this direction”).

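They also study a special case (binary classification) where the exact safe radius is:

$$\rho^* = \frac{\sqrt{\delta^2 + \pi^2}}{\Delta_a}$$

where $\delta$ is the current gap between the two logits. For a given $\Delta_a$, confident samples (large $|\delta|$) therefore have more slack. The worst case is a sample sitting right on the decision boundary ($\delta = 0$), which matches the simple bound $\pi/\Delta_a$.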
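The binary formula can be checked numerically. The sketch below is plain Python with hypothetical helper names. It assumes linearized logits $z_k + a_k t$, writes the logit gap as $\delta = z_1 - z_2$ and the spread as $\Delta_a = |a_1 - a_2|$, and places the closest complex zero of $F(t)$ in closed form. It then confirms that $F$ vanishes there and that its distance from $t = 0$ equals $\sqrt{\delta^2+\pi^2}/\Delta_a$.

```python
import cmath
import math


def binary_exact_radius(z1, z2, a1, a2):
    """rho* = sqrt(delta^2 + pi^2) / Delta_a for two classes under logit linearization."""
    delta = z1 - z2            # current logit gap
    spread = abs(a1 - a2)      # Delta_a for two classes
    if spread == 0:
        return math.inf        # F(t) = e^{a t} (e^{z1} + e^{z2}) never vanishes
    return math.hypot(delta, math.pi) / spread


def nearest_binary_ghost(z1, z2, a1, a2):
    """Closest complex zero of F(t) = exp(z1 + a1 t) + exp(z2 + a2 t), or None."""
    delta, d = z1 - z2, a1 - a2
    if d == 0:
        return None
    # F(t) = 0  <=>  exp(delta + d t) = -1  <=>  delta + d t = i pi (2m + 1), m integer
    candidates = [(1j * math.pi * (2 * m + 1) - delta) / d for m in (-1, 0)]
    return min(candidates, key=abs)   # m = -1 and m = 0 are conjugates, equally close


def partition(t, z1, z2, a1, a2):
    """F(t) evaluated at a complex step size t."""
    return cmath.exp(z1 + a1 * t) + cmath.exp(z2 + a2 * t)


g = nearest_binary_ghost(2.0, 0.0, 1.0, -1.0)             # delta = 2, Delta_a = 2
print(abs(partition(g, 2.0, 0.0, 1.0, -1.0)))             # close to 0: F vanishes at the ghost
print(abs(g), binary_exact_radius(2.0, 0.0, 1.0, -1.0))   # both about 1.862
print(math.pi / 2)                                        # the bound pi / Delta_a, about 1.571
```

The gap between 1.862 and 1.571 is the extra slack a confident sample ($\delta = 2$) gets over a sample at the decision boundary.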

The main findings and why they matter

To make the bound easy to use, they define a normalized step size:

- Let $\tau$ be the length of your proposed update.

- Let $r = \tau / \rho_a$.

- Interpretation: $r < 1$ means “inside the safe radius” (your local sketch should be reliable); $r \ge 1$ means “risky territory.”
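The quantities $\tau$, $\Delta_a$, $\rho_a$ and $r$ can be computed in a few lines. This is a minimal sketch with hypothetical function names. It assumes a linear classifier $z = Wx$, so the JVP along a parameter direction $V$ is simply $Vx$ and the logits are exactly linear in the step. For a deep network, a forward-mode JVP (for example `jax.jvp` or `torch.func.jvp`) would supply the same per-sample derivatives. As in the bound described here, the radius uses the batch maximum of $\Delta_a$.

```python
import math


def directional_logit_derivatives(V, x):
    """a_k = d z_k / dt for the linear classifier z = W x along W + t V.

    For this assumed linear model the JVP is exactly V @ x.
    """
    return [sum(v * xj for v, xj in zip(row, x)) for row in V]


def normalized_step(P, batch):
    """Return (tau, rho_a, r) for a proposed update matrix P on a batch of inputs."""
    tau = math.sqrt(sum(p * p for row in P for p in row))   # step length ||P||_F
    if tau == 0.0:
        return 0.0, math.inf, 0.0
    V = [[p / tau for p in row] for row in P]               # unit direction v = P / ||P||
    spreads = [max(a) - min(a) for a in
               (directional_logit_derivatives(V, x) for x in batch)]
    worst = max(spreads)                                    # batch max of Delta_a(x; v)
    rho_a = math.pi / worst if worst > 0 else math.inf
    return tau, rho_a, tau / rho_a


tau, rho_a, r = normalized_step([[0.5, 0.0], [-0.5, 0.0]], [[1.0, 2.0], [3.0, -1.0]])
print(tau, rho_a, r)   # r = 3/pi, about 0.955: just inside the safe radius
```

Because $\rho_a$ depends only on the direction, scaling the proposed update by a factor $c$ scales $r$ by the same factor. That is why $r$ works as a drop-in replacement for the raw step size when comparing runs.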

What they found:

- Across six different model architectures and many step directions, they never saw failures when $r < 1$. Once $r$ reached about $1$ or larger, collapses appeared (accuracy crashed, loss exploded).

- This boundary held not just along the gradient direction but also along many random directions: once steps were measured using $r$, the transition was consistent.

- Temperature scaling behaved as predicted. Changing the softmax “temperature” rescales the safe radius. When they replot results using $r$, the spread of collapse points across temperatures tightens dramatically (the standard deviation of the onset threshold drops from 0.992 to 0.164).

- A simple controller that enforces $\tau \le \rho_a$ (i.e., keeps $r \le 1$) made training robust to extreme learning-rate spikes (up to 10,000×), where plain training and even gradient clipping failed.

- As a proof of concept, a controller that sets the learning rate using only local geometry (no hand-tuned schedule) reached 85.3% on ResNet-18/CIFAR-10, beating the best fixed learning rate (82.6%) in their tests.

Why this is important:

- It reveals a new kind of constraint that’s different from the usual “curvature” or “smoothness” rules. Even if the loss surface looks flat (low curvature), the safe radius can still be small because of these “ghost” limitations.

- It explains why late in training, when predictions get sharper, runs may suddenly become unstable: the derivative spread grows, so the safe radius shrinks.

- It gives a practical, optimizer-agnostic rule-of-thumb to avoid dangerous steps.

A simple picture of the method (with analogies)

- Taylor approximation = a local map: It tells you what the terrain looks like right next to you.

- Convergence radius = how far that map stays accurate: Past that, the map can mislead.

- Ghosts = invisible holes in a shadow version of the terrain (complex numbers): You can’t see them on the normal trail, but they still limit how far the map works.

- $\Delta_a$ = how unevenly class scores change if you step in a specific direction: If some scores shoot up while others dive, the spread is big, and the safe radius shrinks.

- Controller = a smart brake: Before taking a step, measure $\rho_a$; if $\tau > \rho_a$, scale the step down so you stay within the safe radius.
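The “smart brake” can be sketched as a post-update rescaling. The function name `radius_clip` and the optional `max_r` margin are hypothetical. The sketch assumes $\rho_a$ has already been measured for the update's direction, for example as $\pi$ divided by the batch-maximum logit-derivative spread. It shrinks the step to length $\rho_a$ (times the margin) without changing its direction.

```python
import math


def radius_clip(update, rho_a, max_r=1.0):
    """Scale a flat update vector so that its length tau satisfies tau <= max_r * rho_a.

    rho_a depends only on the update *direction*, so shrinking the step does not
    change it: one measurement of rho_a per step is enough.
    """
    tau = math.sqrt(sum(u * u for u in update))
    limit = max_r * rho_a
    if tau <= limit:
        return list(update)                       # already inside: r <= max_r
    return [u * (limit / tau) for u in update]    # same direction, length = limit


print(radius_clip([0.3, 0.4], rho_a=1.0))         # unchanged: tau = 0.5
print(radius_clip([3000.0, 4000.0], rho_a=1.0))   # a 10,000x spike is cut back to length 1
```

Gradient clipping caps the update at a fixed norm, whatever the direction. This brake instead uses a direction-dependent limit, which tightens exactly when the step would move the logits unevenly.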

What this could change going forward

- Safer training by design: Instead of relying on trial-and-error learning-rate schedules or broad heuristics like gradient clipping, you can directly tie step sizes to a geometry-based safety bound.

- Fewer sudden collapses: Particularly late in training or under unexpected conditions (like a bug or a one-off spike), this bound helps prevent wiping out progress.

- Wide applicability: The bound depends on local output geometry, not on a specific optimizer, model, or hand-tuned schedule.

- Future directions: Extending this idea to multi-step planning, understanding how activations introduce their own singularities, and making fast, production-ready versions of the controller.

In short: the paper uncovers a simple, powerful rule — keep your steps smaller than a radius set by the “ghosts of softmax” — and shows it reliably marks the line between safe and dangerous updates in cross-entropy training.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

The paper establishes a one-step, complex-analytic constraint for softmax cross-entropy and proposes a tractable lower bound on the Taylor convergence radius. The following gaps and open questions remain:

- Tightness of the multiclass bound: quantify the gap between the lower bound $\rho_a = \pi/\Delta_a$ and the exact radius in the multiclass case; derive tighter, computable bounds that leverage more structure than the spread (e.g., top-k derivative gaps, the ordering of the $a_k$, and the weights $e^{z_k}$ of the exponential terms).

- Exact zero localization for multiclass: develop scalable algorithms to compute or approximate the nearest zero of $F$ per sample/batch without linearization, with provable error bounds and practical runtime.

- Validity of logit linearization: characterize when the first-order logit model is conservative; derive second-order corrections or certified remainder bounds that incorporate logit curvature along the step.

- Activation non-analyticities: rigorously analyze how piecewise-linear (ReLU) or other non-analytic activations affect the analyticity of $F$ along the step, the existence/locations of singularities, and the applicability of the complex-radius argument when activation patterns change along the step.

- Multi-step guarantees: extend the one-step convergence-radius constraint to multi-step dynamics with momentum/Adam; establish conditions under which occasional violations ($r > 1$) lead to irreversible collapse vs. recoverable behavior; design provably safe multi-step controllers.

- Metric and parameterization dependence: study how the Euclidean step norm interacts with reparameterizations and scale invariances (e.g., BatchNorm, LayerNorm); investigate radius definitions in alternative metrics (e.g., Fisher/natural gradient) and their invariance properties.

- Interaction with adaptive optimizers: formally connect preconditioning (Adam, Adagrad) to $\Delta_a$ and $\rho_a$; design optimizers that maximize progress subject to an $r \le 1$ constraint, including principled step-size selection with preconditioning.

- Stochastic mini-batch setting: replace worst-case (max over samples) aggregation with probabilistic guarantees (e.g., quantile or tail bounds on $\Delta_a$) to mitigate outlier domination; relate the chosen quantile to a target failure probability.

- Outlier sensitivity and robustness: develop robust estimators of the batch-maximum $\Delta_a$ (e.g., trimmed maxima, influence diagnostics) with safety guarantees; study effects on stability and generalization when outliers tighten the radius.

- Layerwise/blockwise control: investigate per-layer or blockwise radii and composition rules to reduce conservatism versus a single global bound; assess when layerwise control yields larger safe global steps.

- Computational overhead at scale: reduce the cost of one JVP per sample to estimate $\Delta_a$ via batching, subsampling with confidence bounds, sketching, low-rank structure, or amortization across steps.

- Directional worst cases: characterize the unit-norm directions that minimize $\rho_a$ (equivalently, maximize $\Delta_a$); relate them to trust-region or adversarial direction selection; provide worst-case guarantees across all directions.

- Descent guarantees inside the radius: beyond Taylor convergence, establish sufficient conditions under which $r < 1$ implies actual loss decrease (e.g., via complex-analytic remainder bounds for $\log F$).

- Beyond softmax cross-entropy: extend the singularity/radius analysis to multi-label logistic, focal loss, label smoothing, contrastive/InfoNCE losses, and regression losses; identify their partition-function analogs and zero sets.

- Sequence models: for autoregressive NLL summing many log-partition terms across time, derive how per-token radii compose; identify the effective step constraint as a function of sequence length and temporal dependencies.

- Normalization layers and state: analyze how BatchNorm/LayerNorm (train vs. eval modes, running statistics) affect the computation of $\Delta_a$ and $\rho_a$ and stability; devise controllers aware of stateful normalization dynamics.

- Regularization and auxiliary terms: study how weight decay, dropout, mixup/cutmix, or auxiliary objectives alter analyticity and the effective radius; reconcile multiple objectives with possibly different singularity structures.

- Temperature-scaling theory limits: formally prove when $\rho_a(T) = \pi T/\Delta_a$ holds under different ways of implementing temperature (e.g., scaling logits vs. last-layer weights); quantify and explain residual deviations in the fingerprint experiments.

- Data-dependent factors: relate class imbalance, label noise, and margin distributions to the distribution of per-sample ghosts and the evolution of $\rho_a$ during training; build predictive models of when small radii will emerge.

- Stronger multiclass analytic results: apply the theory of zeros of exponential polynomials to obtain sharper zero-free regions than $|t| < \pi/\Delta_a$, incorporating magnitudes and spacing.

- Practical controller design: guidelines for batch scope (mini-batch vs. dataset), update frequency of $\rho_a$, and combination with gradient clipping or line search; ablate controller choices on diverse workloads.

- Large-scale validation: evaluate the controller and the $r$-threshold on modern LLMs/ViTs and large datasets; document failure modes and performance/throughput trade-offs under realistic training regimes.

- Numerical precision effects: study interactions between mixed precision, loss scaling, and the radius controller; determine whether finite-precision artifacts mimic or mask singularity-driven failures.

- Ghost localization without linearization: explore Padé approximants, Prony/ESPRIT methods, or complex-step probing to estimate the nearest zeros of $F$ when logits are nonlinear in the step size, with accuracy/runtime analyses.

- Generalization impacts: assess whether enforcing $r \le 1$ consistently improves or harms final test performance across tasks; identify causal mechanisms (e.g., avoiding catastrophic spikes vs. restricting exploration).

- Distributed training: design and analyze mechanisms to compute/enforce $\rho_a$ constraints under data/model parallelism, gradient staleness, and asynchrony; quantify the effect on safety and throughput.

- Hybrid curvature–convergence controllers: combine curvature/Lipschitz information with the $\rho_a$ bound to reduce conservatism while retaining safety; derive joint bounds and update rules.

- Progress limits under safety: characterize the maximal safe decrease per step given an $r \le 1$ constraint; formulate and solve an optimal control problem balancing progress and safety.

- Robustness to adversarial inputs: examine whether adversarial perturbations systematically shrink $\rho_a$; evaluate whether the controller mitigates or exacerbates adversarial training instabilities.

- Reproducibility and standardization: provide standardized APIs for per-sample JVP and $\Delta_a$ estimation across frameworks; verify cross-framework consistency of $\rho_a$ and controller behavior.

Practical Applications

Immediate Applications

Below are actionable, deployable-now use cases derived from the paper’s findings and bound (ρa = π/Δa), organized by sector and accompanied by potential tools/workflows and feasibility notes.

- Step-size safety guard (“radius clip”) for deep learning training

- Sector: software (ML frameworks, MLOps), cloud/HPC training

- What: Wrap any optimizer (SGD, AdamW, Adafactor) with a post-update scaler that enforces τ ≤ ρa by computing the current update direction v = p/||p||, estimating Δa via one Jacobian–vector product (JVP) per sample (or minibatch), computing ρa = π/max_x Δa(x; v), and rescaling p by min(1, ρa/||p||).

- Tools/products/workflows: PyTorch Lightning/Accelerate callback; Keras/TF optimizer wrapper; Optax (JAX) transform; MLflow plugin logging r = τ/ρa and gating step application

- Assumptions/Dependencies: softmax cross-entropy loss; logits approximately linear over the step; forward-mode AD/JVP availability (or efficient emulation); added compute overhead (≈ one JVP per sample; can batch/approximate); bound is conservative and uses batch max over Δa.

- Per-step learning-rate auto-tuner using the normalized step r

- Sector: software (training systems), industry/academia

- What: Automatically set η each step to hit a target r∗ < 1 (e.g., 0.9): η = r∗ ρa/||v||, where v is the unscaled update direction, so the step length is τ = η||v|| = r∗ ρa; this can replace hand-designed LR schedules and improve stability/throughput.

- Tools/products/workflows: “GhostGuard LR” module that plugs into existing training loops; integration with schedulers (cosine, cyclic) as a hard cap

- Assumptions/Dependencies: same as above; r-threshold selection (e.g., 0.7–0.95) remains a practical knob but is less sensitive than LR.

- Divergence early-warning and training diagnostics via r-monitoring

- Sector: MLOps/observability, enterprise ML

- What: Log and alert on r ≳ 1 to preempt loss spikes; visualize r-distribution over time and by layer/model head; correlate with failure events to trigger mitigations (reduce LR, increase temperature, apply smaller micro-batches).

- Tools/products/workflows: dashboards in Weights & Biases/MLflow; Prometheus/Grafana metrics exporters

- Assumptions/Dependencies: computing ρa in the loop (or at check intervals); best with per-step statistics; interpretation relies on softmax CE geometry.

- Temperature-aware stability control

- Sector: all training domains; safety/reliability

- What: Use the scaling law ρa(T) = πT/Δa to transiently increase temperature T when r nears 1, then anneal back, to ride out instability spikes (e.g., at the start of fine-tuning or after LR increases).

- Tools/products/workflows: scheduler that adjusts T and LR jointly to keep r below 1

- Assumptions/Dependencies: the model supports a logit temperature; trade-off with calibration/confidence; still requires a Δa estimate.

- Bottleneck-sample identification and data triage

- Sector: data engineering/quality

- What: Identify samples with largest Δa (tightest per-sample radius) to: (i) flag label noise/outliers, (ii) prioritize curriculum or reweighting, (iii) route to targeted augmentation.

- Tools/products/workflows: batch hooks that record top-k Δa samples; dataset cleaners that surface recurring bottlenecks

- Assumptions/Dependencies: per-sample JVPs; sampling or top-2 class approximations for large outputs (e.g., language modeling).

- Robust hyperparameter search and automatic schedule capping

- Sector: AutoML, platform engineering

- What: During sweeps (LR, warmup, weight decay), enforce r ≤ 1 as a guardrail; prevents wasted runs due to collapse and reduces compute costs.

- Tools/products/workflows: scheduler wrappers in Ray/Tune, Vertex AI, SageMaker; “fail-safe” caps for aggressive schedules

- Assumptions/Dependencies: moderate overhead of ρa computation; logit linearization reasonable for candidate steps.

- Stability hardening for high-risk pipelines (e.g., BatchNorm/metrics)

- Sector: vision, speech, recommendation systems

- What: Apply radius clip during phases known to be fragile (e.g., right after LR spikes, domain shifts) to avoid corrupting batch statistics or erasing learned representations.

- Tools/products/workflows: conditional controller enabled when r spikes, or for the first N steps of new phases

- Assumptions/Dependencies: CE training; adds some extra compute during guarded phases.

- Reporting and reproducibility practices

- Sector: academia/industry publication and compliance

- What: Report r histograms, mean/percentile r over training, and controller use; improves interpretability of training stability and supports reproducibility.

- Tools/products/workflows: experiment templates and reporting checklists; CI validation that r stays below threshold

- Assumptions/Dependencies: adoption in experiment protocols; standardized logging.

- Sector-specific stability wins (training-time)

- Healthcare: safer training of medical imaging classifiers without manual LR schedules; reduces risk of catastrophic updates on scarce data

- Finance: stable fine-tuning of sequence models under regime shifts

- Robotics: safer on-policy updates in imitation/supervised stages before RL

- Assumptions/Dependencies: CE loss in the training stage; per-sample JVP overhead acceptable (or approximated); compliance with data governance (no new privacy risks).
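Several of the immediate applications above reduce to simple arithmetic on per-sample spreads $\Delta_a(x; v)$: the learning-rate auto-tuner, r-monitoring alerts, temperature-aware control and bottleneck-sample triage. The sketch below assumes those spreads have already been measured (one JVP per sample) on unscaled logits. Every function name is hypothetical and is not an existing library API. The temperature helper uses the fact that dividing logits by $T$ scales each $a_k$ by $1/T$, which gives $\rho_a(T) = \pi T/\Delta_a$.

```python
import math


def rho_a_from_spreads(spreads, temperature=1.0):
    """rho_a = pi * T / max_x Delta_a(x; v); spreads measured on unscaled logits."""
    worst = max(spreads)
    return math.pi * temperature / worst if worst > 0 else math.inf


def lr_for_target_r(direction, spreads, target_r=0.9):
    """Learning rate eta giving r = eta * ||direction|| / rho_a = target_r."""
    norm = math.sqrt(sum(g * g for g in direction))
    return target_r * rho_a_from_spreads(spreads) / norm


def r_alert(tau, spreads, threshold=1.0):
    """Early-warning flag: True when the normalized step r = tau / rho_a >= threshold."""
    return tau / rho_a_from_spreads(spreads) >= threshold


def bottleneck_samples(spreads, k=3):
    """Indices of the k samples with the largest Delta_a (tightest per-sample radius)."""
    return sorted(range(len(spreads)), key=spreads.__getitem__, reverse=True)[:k]


spreads = [0.5, 2.0, 1.0, math.pi]           # per-sample Delta_a, one JVP each
print(lr_for_target_r([3.0, 4.0], spreads))  # rho_a = 1 and ||direction|| = 5, so eta is about 0.18
print(r_alert(1.2, spreads))                 # True: r = 1.2 is past the threshold
print(rho_a_from_spreads(spreads, 2.0))      # doubling T doubles rho_a to 2.0
print(bottleneck_samples(spreads, k=2))      # [3, 1]
```

All four helpers share the worst-case (batch-maximum) aggregation. Swapping `max` for a quantile would trade the safety margin for less outlier sensitivity, which remains an open question.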

Long-Term Applications

These opportunities require further research, engineering, or scaling before broad deployment.

- Trust-region optimizers based on analytic convergence radii

- Sector: software (optimizers), academia

- What: Design “ghost-aware” optimizers that accept/reject steps using r or tighter ρ estimates (including numerical root-finding for F(t) zeros), moving from heuristic clipping to principled trust regions.

- Potential products: next-gen AdamW variants or second-order methods with radius-informed step proposals

- Dependencies: fast, low-variance estimators of ρ that scale to large models; multi-step theory linking one-step radius to cumulative stability.

- Layerwise or modulewise radius estimation and allocation

- Sector: deep learning research, large-scale training

- What: Estimate per-layer Δa and apportion a global step budget to layers with larger radii; decouple risky layers (e.g., heads) from stable backbones.

- Potential products: layerwise LR scaling driven by ρ; adapters that gate specific modules when r-local ≥ 1

- Dependencies: per-layer JVPs and instrumentation; aggregation logic for distributed training.

- Loss and architecture design to enlarge the convergence radius

- Sector: research, platform teams

- What: Explore alternatives that move “ghosts” farther from the origin (e.g., tempered/regularized softmax, label smoothing schedules, alternative normalizers); design architectures or regularizers that control Δa growth.

- Potential products: “radius-friendly” loss families; Δa-regularization penalties; training recipes that modulate Δa dynamics

- Dependencies: theoretical/empirical validation of generalization impacts; trade-offs with calibration and accuracy.

- Extensions beyond softmax cross-entropy

- Sector: academia/industry

- What: Analyze and bound radii for other objectives with partition-like structures (e.g., contrastive/InfoNCE losses, CTC, multi-label) and for nonlinearity-induced singularities (activation functions).

- Potential products: generalized “radius clip” for a broader set of losses; libraries exposing r for different tasks

- Dependencies: new theory and numerics for each loss; empirically verified conservatism and cost.

- Efficient, large-vocabulary approximations for LLMs

- Sector: LLM training, NLP

- What: Approximations for Δa that avoid full-vocabulary JVPs (e.g., top-k logits, sampled softmax, head-only estimates) while preserving safety guarantees.

- Potential products: CUDA kernels for batched JVPs; proxy metrics calibrated to ρ

- Dependencies: accuracy–cost trade-off studies; distributed reduction (max Δa across data-parallel shards).

- Federated/on-device and online learning safeguards

- Sector: mobile/edge, robotics

- What: Use r as a local safety gate for client-side updates or real-time controllers; reject or downscale risky updates before aggregation/actuation.

- Potential products: lightweight “r-safety” module in federated SDKs or control stacks

- Dependencies: ultra-low-overhead ρ proxies; partial-information settings (no full-batch Δa).

- Training orchestration and resource policy

- Sector: policy, sustainability, platform operations

- What: Policies that require stability metrics (e.g., r ≤ 1 percentile targets) in large-scale training; auto-pausing or rollback when fleets show r spikes; compute-allocation that prioritizes stable regimes.

- Potential products: governance dashboards; SLAs incorporating stability KPIs

- Dependencies: organizational adoption; standardized measurement; understanding of trade-offs between speed and stability.

- Education and curriculum

- Sector: academia/education

- What: Course modules and interactive labs demonstrating Taylor convergence limits and “ghosts of softmax”; training exercises with r-controlled schedules.

- Potential products: notebooks, visualizers (“GhostScope”) showing F(t) zeros and r-evolution

- Dependencies: didactic material and tooling; integration into ML curricula.

- Safety-aware schedules and controllers in AutoML

- Sector: AutoML, enterprise ML

- What: Multi-objective controllers that co-optimize speed and stability by targeting r bands, modulating LR, temperature, batch size, and gradient accumulation dynamically.

- Potential products: AutoML “stability knobs” exposed as high-level intents (“fast but safe”)

- Dependencies: robust multi-signal control policies; benchmarking across tasks/models.

- Multi-step theory and guarantees

- Sector: research

- What: Extend one-step radius guarantees to multi-step dynamics, including cumulative effects, cancellation, and interaction with momentum/adaptive preconditioners.

- Potential products: provable convergence regimes that incorporate analytic-radius constraints; certified-safe training protocols

- Dependencies: new theory and empirical validation; potentially tighter radii than π/Δa via numerical root-finding.
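Several long-term items above rely on tighter radius estimates via numerical root-finding for the zeros of $F(t)$. Under logit linearization, $F(t) = \sum_k e^{z_k + a_k t}$, and one brute-force approach is multi-start Newton iteration in the complex plane. This is our own sketch, not a method from the paper. The names are hypothetical, and the square search window is a heuristic, so a root outside it can be missed. It is intended for small $K$ and for measuring the gap to $\pi/\Delta_a$.

```python
import cmath
import math


def shifted_partition(t, z, a):
    """F(t) * exp(-min(a) t), its derivative, and the sum of term magnitudes.

    The factor exp(-min(a) t) never vanishes, so the zeros are unchanged, but the
    shifted sum tends to a nonzero constant as Re t -> -inf (no false roots there).
    """
    a_min = min(a)
    terms = [cmath.exp(zk + (ak - a_min) * t) for zk, ak in zip(z, a)]
    f = sum(terms)
    df = sum((ak - a_min) * term for ak, term in zip(a, terms))
    return f, df, sum(abs(term) for term in terms)


def newton(t, z, a, iters=50):
    """Run Newton's method from t; return a zero of F, or None if it fails."""
    try:
        for _ in range(iters):
            f, df, _ = shifted_partition(t, z, a)
            if df == 0:
                return None
            step = f / df
            t -= step
            if abs(step) < 1e-13:
                break
        f, _, scale = shifted_partition(t, z, a)
    except (OverflowError, ValueError, ZeroDivisionError):
        return None                        # iterate escaped to huge |Re t|
    return t if cmath.isfinite(t) and abs(f) <= 1e-9 * scale else None


def nearest_ghost(z, a, grid=25):
    """Approximate the zero of F closest to t = 0 by multi-start Newton."""
    spread = max(a) - min(a)
    if spread == 0:
        return None                        # F = e^{a t} * sum(e^z) never vanishes
    half = (4 * math.pi + max(z) - min(z)) / spread   # heuristic window half-width
    roots = []
    for i in range(grid):
        for j in range(grid):
            start = complex(-half + 2 * half * i / (grid - 1),
                            -half + 2 * half * j / (grid - 1))
            root = newton(start, z, a)
            if root is not None:
                roots.append(root)
    return min(roots, key=abs) if roots else None


g = nearest_ghost([0.0, 0.0, 0.0], [0.0, 1.0, 2.0])   # F = 1 + e^t + e^{2t}
print(abs(g), math.pi / 2)                            # about 2.094 vs. the bound about 1.571
```

For $F(t) = 1 + e^t + e^{2t}$, the zeros satisfy $e^t = e^{\pm 2\pi i/3}$, so the exact radius is $2\pi/3$. That is $4/3$ times the bound $\pi/\Delta_a = \pi/2$, one concrete instance of the multiclass bound's conservatism.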

Notes on feasibility across items:

- Core assumptions that recur: training uses softmax cross-entropy; logits are approximately linear over the proposed step; ρa is a lower bound (conservative); the maximum Δa over the set controls safety; temperature and margin (δ) affect slack.

- Key dependencies: availability and cost of JVPs (forward-mode AD or efficient approximations); distributed aggregation of Δa in data-parallel setups; engineering integration in existing training stacks.

- Known limitations: the bound is sufficient but not necessary (training may survive r > 1 without guarantees); extensions to other losses and activation-induced singularities require new analysis; overhead may be non-trivial without approximations in very large models.

Glossary

- Adam: A popular adaptive optimization algorithm that uses estimates of first and second moments of gradients to scale updates. "or a preconditioned direction for Adam"

- analytic (function): A function that equals its Taylor series in a neighborhood of a point. "A function is analytic at if it equals its Taylor series in some neighborhood:"

- analytic continuation: The extension of an analytic function to a larger domain in the complex plane. "equals the distance from the origin to the nearest singularity of 's analytic continuation to ."

- branch point: A type of complex singularity where a multi-valued function (like the logarithm) cannot be made single-valued. "creating a branch point of the logarithm."

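- Cauchy–Hadamard theorem: A formula for the radius of convergence of a power series $\sum_n c_n t^n$, namely $1/\limsup_{n\to\infty}|c_n|^{1/n}$; combined with Cauchy's integral formula, it implies that a Taylor series converges out to the nearest singularity of the function. "By Cauchy–Hadamard (see the preliminaries section), convergence holds inside the disk set by the nearest complex singularity."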

- complex extension: Viewing a real function as a function of a complex variable to analyze its singularities. "the complex extension has a pole at ."

- complex singularity: A point in the complex plane where a function fails to be analytic. "set not by real-line curvature alone but by the nearest complex singularity."

- convergence disk: The disk in the complex plane centered at the expansion point within which the Taylor series converges. "the convergence disk has radius ."

- convergence radius: The maximal distance from the expansion point for which a Taylor series converges. "Inside the convergence radius (green), all orders approximate well."

- cross-entropy: A loss function measuring the negative log-likelihood of the correct class under a predicted distribution. "Cross-entropy training works well but can fail suddenly."

- decision flip: A change in the predicted class due to parameter updates. "samples that are both near a decision flip and highly sensitive to the proposed direction tighten the radius."

- directional convergence radius: The radius of convergence of the Taylor series along a particular direction in parameter space. "The directional convergence radius along $v$ is:"

- entire (function): A function that is holomorphic over the entire complex plane. "The linear term is entire;"

- Euler's identity: The relation $e^{i\pi} + 1 = 0$, linking fundamental constants. "Euler: "

- ghosts of softmax: The paper’s term for complex zeros of the softmax partition function that induce logarithmic singularities. "has complex zeros ('ghosts of softmax') that induce logarithmic singularities"

- gradient clipping: A technique that limits the norm or magnitude of gradients to improve training stability. "gradient clipping still collapses."

- Hessian: The matrix of second derivatives of a function, describing local curvature. "with gradient and Hessian "

- holomorphic: Complex differentiable (analytic) at every point in an open set in the complex plane. "then is holomorphic there."

- Jacobian (matrix): The matrix of first-order partial derivatives of a vector-valued function. "where is the Jacobian matrix"

- Jacobian–vector product (JVP): The product of a Jacobian matrix with a vector, computable without explicitly forming the Jacobian. "This bound costs one Jacobian–vector product"

- KL divergence: A measure of dissimilarity between two probability distributions (Kullback–Leibler divergence). "A separate proof via real-variable KL divergence bounds confirms the same scaling"

- L-smoothness: A condition that the gradient is Lipschitz continuous with constant L, often used to bound step sizes. "This yields a fundamentally different constraint from $L$-smoothness."

- Lipschitz constant: The smallest constant bounding how fast a function (or its gradient) can change. "it assumes the gradient is Lipschitz with constant $L$"

- log-partition function: The logarithm of the sum of exponentials of logits; normalizes probabilities in softmax. "The second term is the log-partition function"

- logit: The raw, unnormalized score output by a classifier before applying softmax. "A neural network maps input and parameters to raw, unnormalized scores called logits:"

- logit gap: The difference between two class logits, often the top-2, reflecting classification margin. "where $\delta$ is the logit gap between classes;"

- logit linearization: Approximating logits as linear functions of the step size along a direction. "we derive closed-form expressions under logit linearization along the proposed update direction."

- logit-derivative spread: The range (max minus min) of directional derivatives of logits along a step direction. "where $\Delta_a=\max_k a_k-\min_k a_k$ is the spread of directional logit derivatives"

- logarithmic singularity: A point where a logarithmic term becomes singular (non-analytic), often due to a zero inside the log. "that induce logarithmic singularities in the loss"

- normalized step size: A dimensionless ratio comparing the actual step to the estimated safe radius. "The normalized step size $r=\tau/\rho_a$ separates safe from dangerous updates."

- partition function: The sum of exponentials of logits; for softmax, it ensures probabilities sum to one. "the softmax partition function $F=\sum_j \exp(z_j)$ has complex zeros"

- pole: A type of isolated singularity where a function grows unbounded like $1/(z-z_0)^k$. "the complex extension has a pole at ."

- preconditioned direction: A search direction transformed by a preconditioner (e.g., from an optimizer) to improve conditioning. "or a preconditioned direction for Adam"

- softmax: A function that converts logits into probabilities by exponentiating and normalizing. "For cross-entropy, the softmax partition function $F=\sum_j \exp(z_j)$"

- Taylor polynomial: A finite-degree polynomial approximation of a function based on derivatives at a point. "a local Taylor polynomial of the loss."

- Taylor series: An infinite series expansion of a function around a point using its derivatives. "The Taylor series of around converges for "

- temperature scaling: Rescaling logits by a temperature parameter to control confidence or smoothness. "Temperature scaling confirms the mechanism:"

- top-2 reduction: Focusing on the two most relevant classes (top two logits) to simplify analysis. "under a top-2 reduction with margin $\delta$"
