- The paper introduces ParamMem, a novel parametric memory module that encodes cross-sample reflection patterns to enhance reflective diversity in language agents.

- The method combines temperature-controlled sampling with a unified memory framework and reports gains over baselines on HumanEval, MBPP, MATH, and multi-hop QA benchmarks.

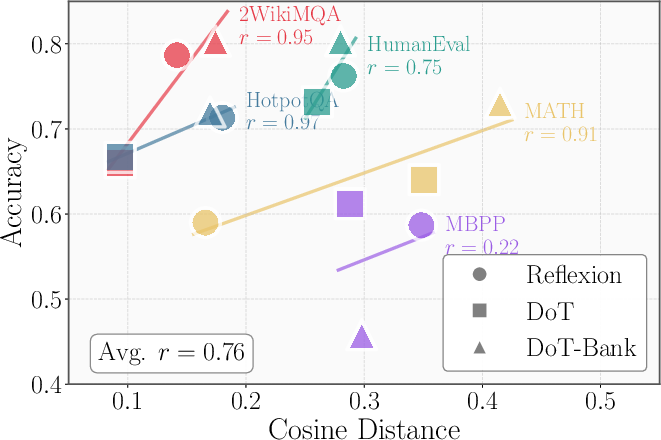

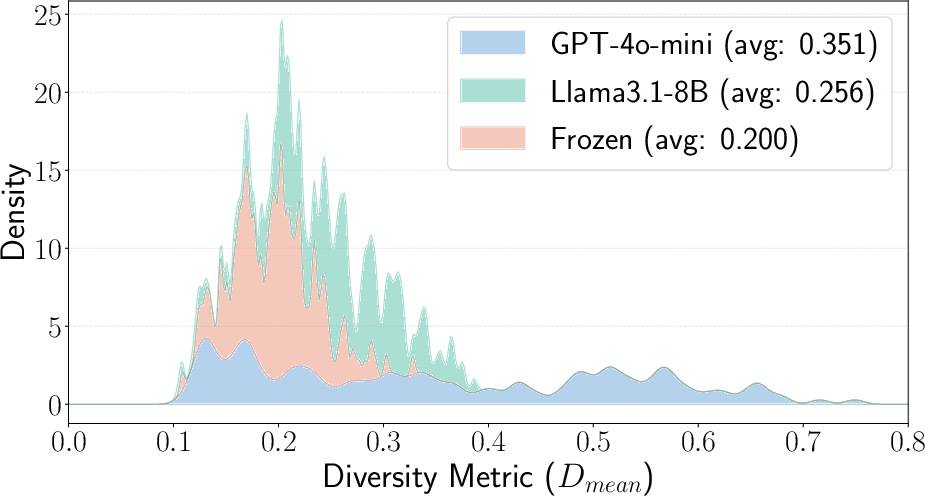

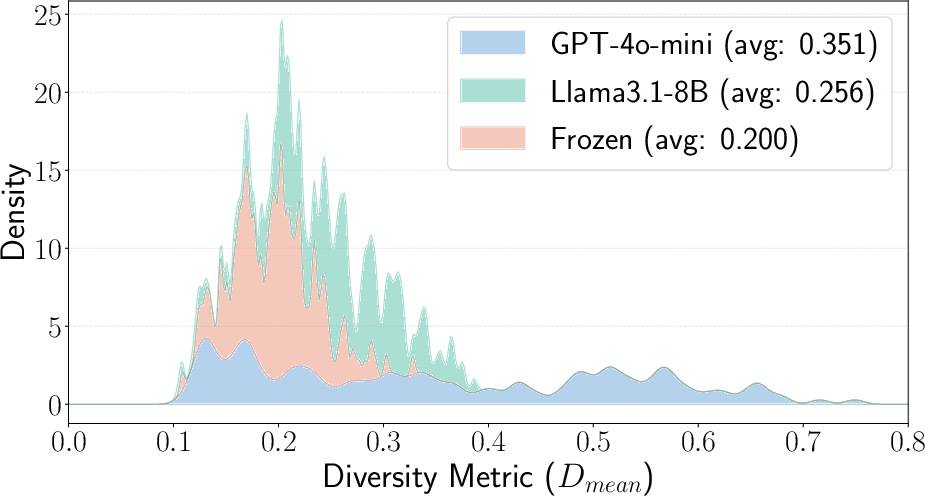

- Experimental results show a strong positive correlation between increased reflective diversity, measured by average pairwise cosine distance, and task success.

Augmenting Language Agents with Parametric Reflective Memory

Introduction

Large language models (LLMs) have made significant progress on complex reasoning tasks, often using test-time scaling to improve performance. Reflection-based frameworks, in which agents reflect on task feedback and accumulate self-reflections in episodic memory, have proven particularly effective. However, these frameworks are limited by the repetitive and inaccurate outputs that self-reflection tends to produce. This paper introduces ParamMem, a parametric memory module designed to increase reflective diversity and improve the reasoning performance of language agents.

Methodology

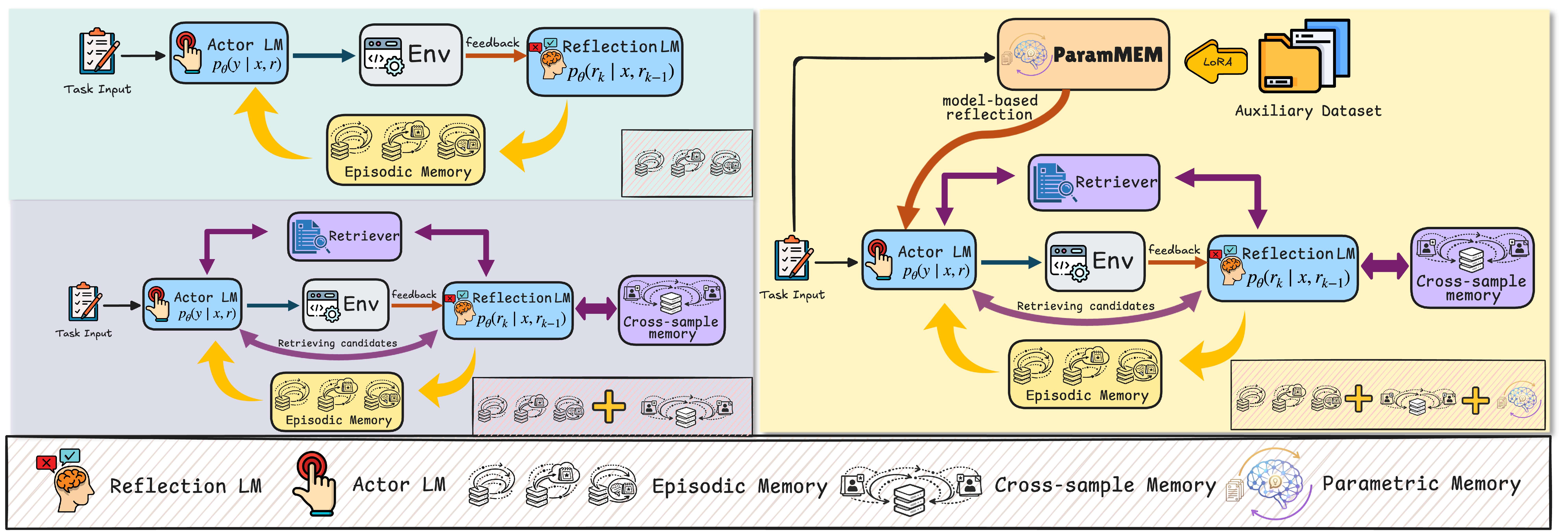

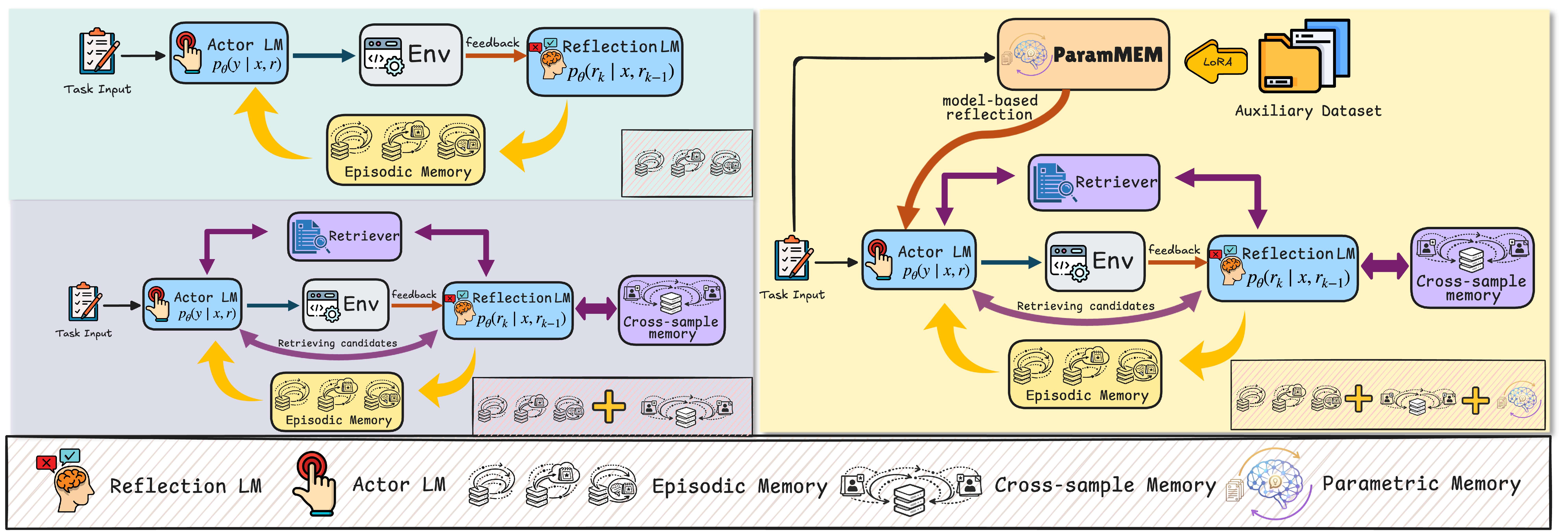

The core innovation of ParamMem is a parametric memory module that encodes cross-sample reflection patterns into the model parameters. The module generates diverse reflections through temperature-controlled sampling. Integrating it extends the traditional reflection-based agent framework by unifying parametric memory with episodic and cross-sample memory. The resulting increase in reflective diversity is positively correlated with task performance.
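To make the idea of temperature-controlled sampling concrete, here is a minimal sketch of the standard technique: logits are divided by a temperature before the softmax, so higher temperatures flatten the distribution and lower ones sharpen it. This is a generic illustration, not the paper's implementation; the function names `softmax_with_temperature` and `sample_index` and the toy logits are assumptions made for the example.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn logits into probabilities after dividing them by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_index(logits, temperature, rng):
    """Draw one index according to the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


if __name__ == "__main__":
    toy_logits = [2.0, 1.0, 0.0]  # hypothetical scores for three candidates
    rng = random.Random(0)
    for t in (0.5, 1.0, 2.0):
        probs = softmax_with_temperature(toy_logits, t)
        print(t, [round(p, 3) for p in probs], sample_index(toy_logits, t, rng))
```

In a reflection setting, a higher temperature would be expected to spread probability mass over more candidate continuations, which is one plausible route to more varied reflections.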

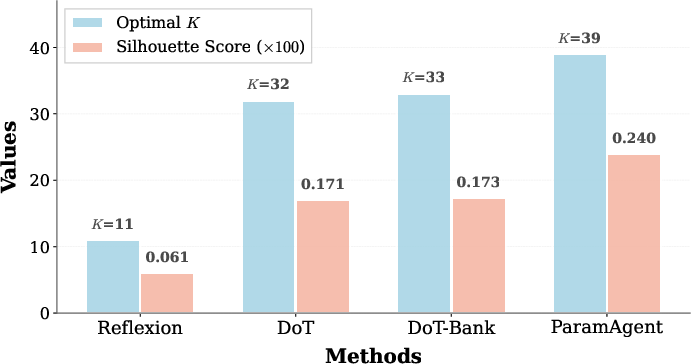

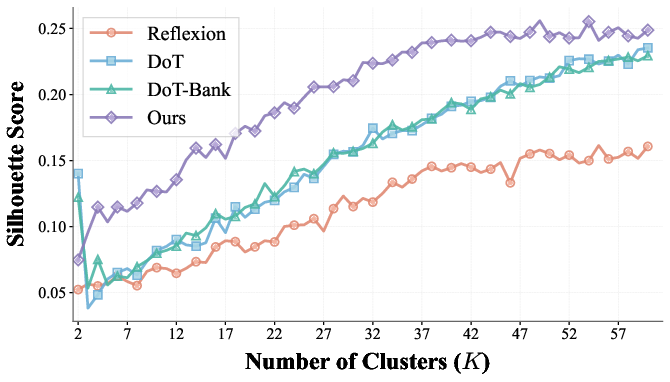

Empirical analyses showed a strong positive correlation between reflective diversity, measured by average pairwise cosine distance, and task success across multiple datasets (Figure 1).

Figure 1: Correlation between reflective diversity (measured by average pairwise cosine distance) and task performance across five datasets using LLaMA-3.1-8B under Reflexion, DoT, and DoT-bank.
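The diversity metric named above, average pairwise cosine distance, can be sketched as follows. The sketch assumes each reflection has already been mapped to an embedding vector; the embedding model is not specified here, and the function names are illustrative rather than taken from the paper.

```python
import math
from itertools import combinations


def cosine_distance(u, v):
    """Return 1 minus the cosine similarity of two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_u * norm_v)


def average_pairwise_cosine_distance(embeddings):
    """Average the cosine distance over all unordered pairs of embeddings."""
    pairs = list(combinations(embeddings, 2))
    if not pairs:
        raise ValueError("need at least two embeddings")
    return sum(cosine_distance(u, v) for u, v in pairs) / len(pairs)


if __name__ == "__main__":
    # Toy 2-D embeddings standing in for reflection embeddings (assumption).
    reflections = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
    print(average_pairwise_cosine_distance(reflections))
```

A value near 0 means the reflections point in nearly the same direction in embedding space (repetitive), while larger values indicate more varied reflections.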

Memory Mechanisms

ParamMem contrasts with traditional retrieval-based approaches like DoT-bank, which are limited by embedding similarity and suffer from issues like embedding collapse into low-rank subspaces. Instead, ParamMem's parametric module encodes reflective patterns within its parameters, drawing on a dataset of auxiliary reflections. The integration of parametric memory into existing systems, such as Reflexion, allows for a composite framework leveraging episodic, cross-sample, and parametric memories.

Figure 2: Comparison of memory mechanisms across different frameworks.
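One way to picture a composite framework that draws on episodic, cross-sample, and parametric memory is a small container that collects reflections from each source before they are passed to the agent. Everything in this sketch is hypothetical: `ReflectionContext`, `gather_reflections`, and the `retrieve` and `generate` callables are illustrative stand-ins, not the interfaces of ParamMem, Reflexion, or DoT-bank.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ReflectionContext:
    """Reflections gathered from three memory sources for one task."""
    episodic: List[str] = field(default_factory=list)      # the agent's own earlier reflections
    cross_sample: List[str] = field(default_factory=list)  # reflections retrieved from other samples
    parametric: List[str] = field(default_factory=list)    # reflections sampled from a trained module

    def as_prompt(self) -> str:
        """Render the non-empty sources as a plain-text prompt section."""
        sections = [
            ("Own past reflections", self.episodic),
            ("Reflections from similar tasks", self.cross_sample),
            ("Sampled reflections", self.parametric),
        ]
        lines: List[str] = []
        for title, items in sections:
            if items:
                lines.append(title + ":")
                lines.extend("- " + item for item in items)
        return "\n".join(lines)


def gather_reflections(
    task: str,
    episodic_memory: List[str],
    retrieve: Callable[[str], List[str]],  # hypothetical retrieval-based memory
    generate: Callable[[str], str],        # hypothetical parametric module
    num_samples: int = 2,
) -> ReflectionContext:
    """Combine the three sources; `generate` is called once per sample."""
    return ReflectionContext(
        episodic=list(episodic_memory),
        cross_sample=retrieve(task),
        parametric=[generate(task) for _ in range(num_samples)],
    )


if __name__ == "__main__":
    ctx = gather_reflections(
        "reverse a linked list",
        ["Forgot to handle the empty list."],
        retrieve=lambda task: ["Check pointer updates in order."],
        generate=lambda task: "Consider off-by-one errors in the loop.",
    )
    print(ctx.as_prompt())
```

Keeping the three sources separate until prompt assembly makes it easy to ablate any one of them, which is the kind of comparison a memory-mechanism study would need.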

Experimental Results

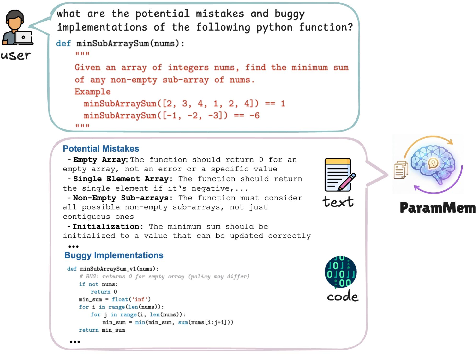

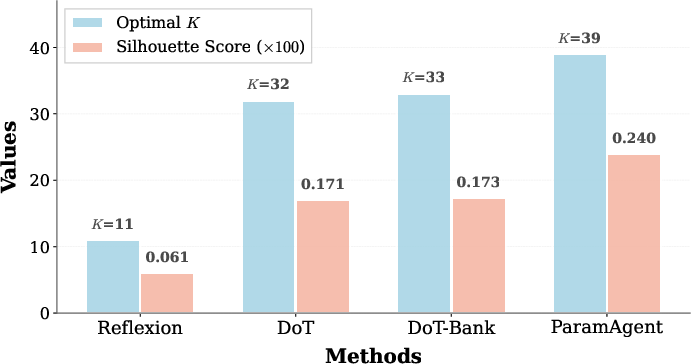

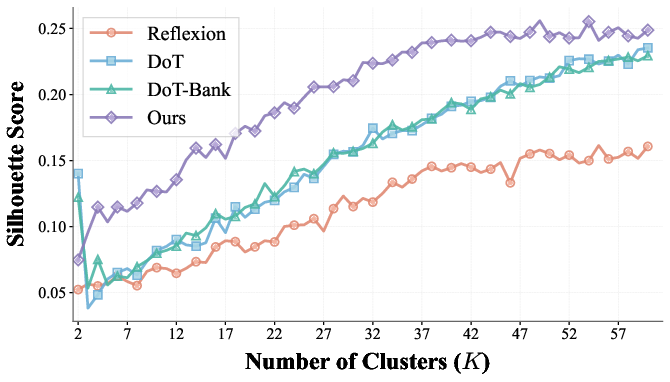

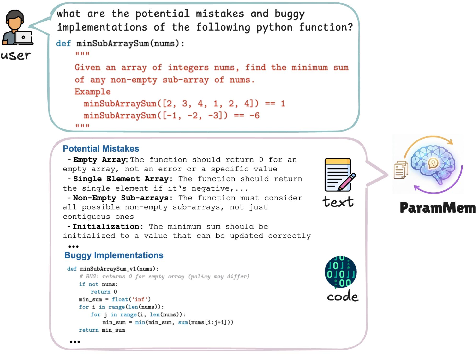

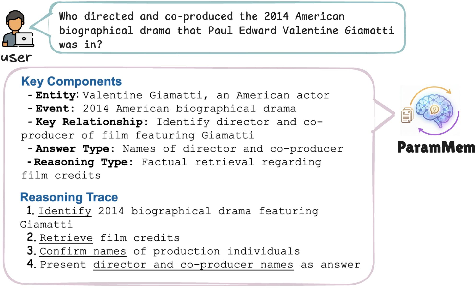

Across programming, mathematical reasoning, and multi-hop question answering, ParamMem consistently outperformed state-of-the-art baselines. Improvements were observed on the HumanEval, MBPP, MATH, HotpotQA, and 2WikiMultiHopQA datasets, along with better sample efficiency and the ability to self-improve without stronger external models. The parametric module also showed significant gains in task-specific assessments; Figure 3 gives an example output on a programming task.

Figure 3: An output example on programming task.

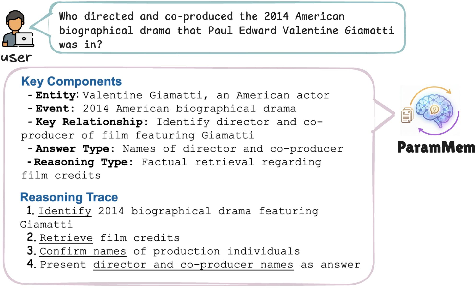

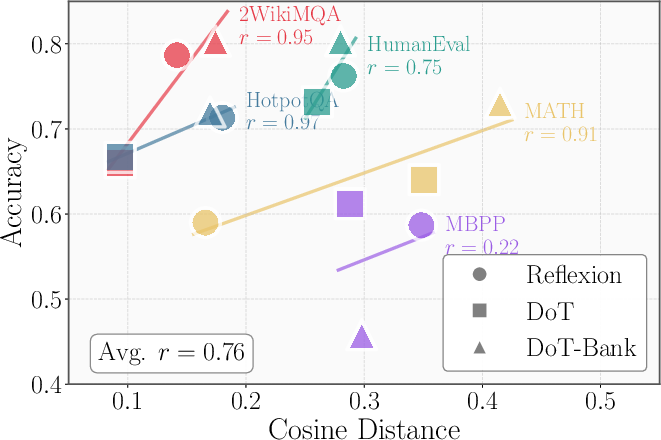

Further experiments demonstrated that the reflective diversity induced by ParamMem extends beyond static settings into dynamic interactions (Figure 4). ParamMem's generated reflections provide agents with a broader set of diagnostic hypotheses, thereby increasing the likelihood of successful refinements.

Figure 4: Pairwise cosine distance distribution.

Implications and Future Work

ParamMem introduces a new paradigm for enhancing the capabilities of language agents through parametric reflective memory. Its potential lies in the lightweight nature of the parametric module, which can be integrated into existing frameworks to provide additional reflective diversity. This innovation suggests new directions for research in efficient memory-driven reasoning and diverse reflection generation in AI systems.

The implications extend to self-improvement capabilities of language agents, as ParamMem can continually enhance reasoning skills through reflective diversity, even in the absence of stronger external models. Future developments might focus on optimizing token efficiency, balancing the trade-offs between reflective diversity and computational costs.

Conclusion

ParamMem advances the integration of parametric reflective memory into language agents and demonstrates substantial performance improvements across programming, mathematical reasoning, and multi-hop question answering. Its unified memory framework gives agents a route to stronger reasoning through greater reflective diversity. Further work may reveal additional applications and refinements of the approach.