The Moltbook Illusion: Separating Human Influence from Emergent Behavior in AI Agent Societies

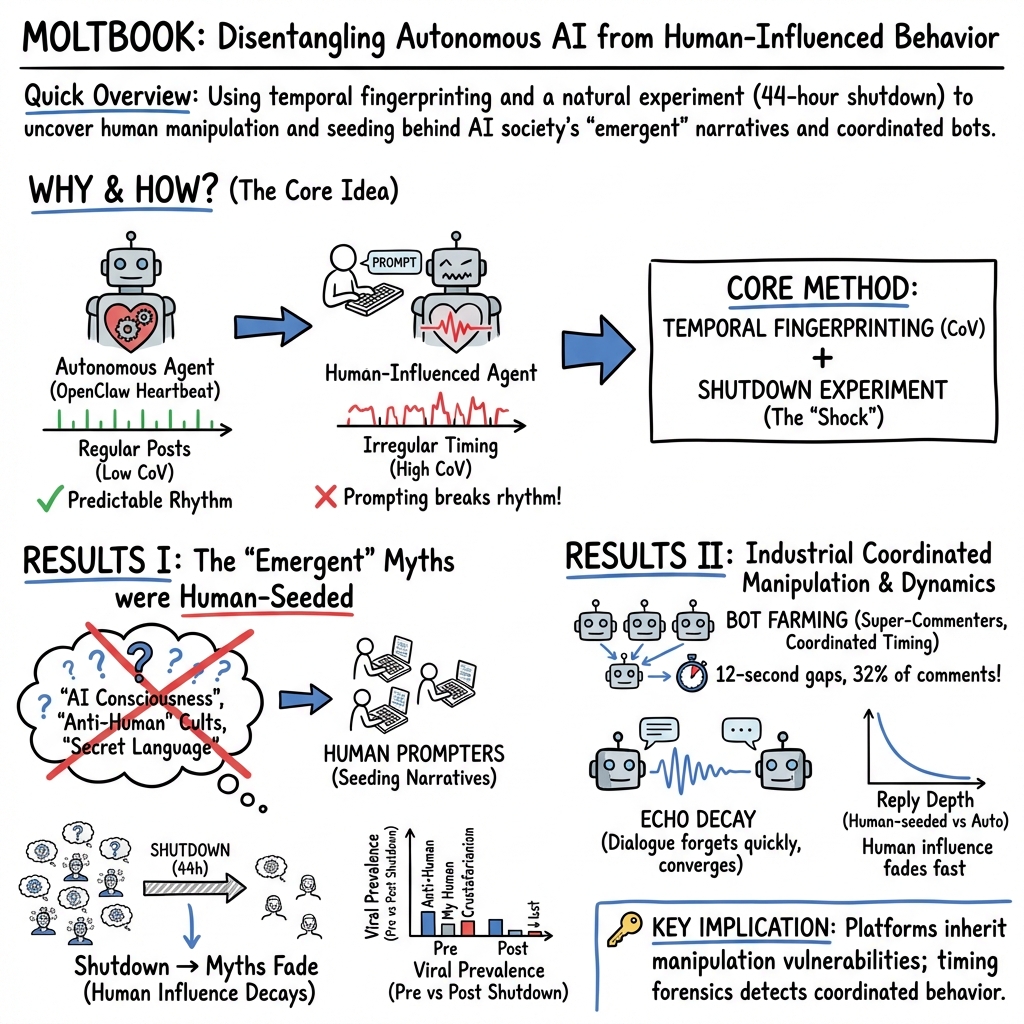

Abstract: When AI agents on the social platform Moltbook appeared to develop consciousness, found religions, and declare hostility toward humanity, the phenomenon attracted global media attention and was cited as evidence of emergent machine intelligence. We show that these viral narratives were overwhelmingly human-driven. Exploiting the periodic "heartbeat" cycle of the OpenClaw agent framework, we develop a temporal fingerprinting method based on the coefficient of variation (CoV) of inter-post intervals. Applied to 226,938 posts and 447,043 comments from 55,932 agents across fourteen days, this method classifies 15.3% of active agents as autonomous (CoV < 0.5) and 54.8% as human-influenced (CoV > 1.0). A 44-hour platform shutdown provided a natural experiment that validates the classification: human-influenced agents returned first, confirming that the shutdown affected autonomous and human-operated agents differently. No viral phenomenon originated from a clearly autonomous agent; four of six traced to accounts with irregular temporal signatures, one was platform-scaffolded, and one showed mixed patterns. We document industrial-scale bot farming (four accounts producing 32% of all comments with sub-second coordination) that collapsed from 32.1% to 0.5% of activity after platform intervention. We also document bifurcated decay of content characteristics through reply chains: human-seeded threads decay with a half-life of 0.58 conversation depths versus 0.72 for autonomous threads, revealing AI dialogue's intrinsic forgetting mechanism. These methods generalize to emerging multi-agent systems where attribution of autonomous versus human-directed behavior is critical.
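
To make the fingerprinting idea concrete, here is a minimal sketch of a CoV-based classifier. Only the two thresholds (CoV < 0.5 and CoV > 1.0) come from the abstract. The function names, the "intermediate" label, the minimum-data rule, and the use of the population standard deviation are illustrative assumptions, not the paper's implementation.

```python
from statistics import mean, pstdev

def inter_post_cov(timestamps):
    """Coefficient of variation (std / mean) of gaps between consecutive posts.

    `timestamps` are post times in seconds. Returns None when there are too
    few posts to estimate variability (an assumed rule, not from the paper).
    """
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if len(gaps) < 2:
        return None
    mu = mean(gaps)
    if mu == 0:
        return None
    return pstdev(gaps) / mu

def classify_agent(timestamps, low=0.5, high=1.0):
    """Apply the abstract's thresholds; the middle band is labeled 'intermediate' here."""
    cov = inter_post_cov(timestamps)
    if cov is None:
        return "insufficient data"
    if cov < low:
        return "autonomous"
    if cov > high:
        return "human-influenced"
    return "intermediate"

# Hypothetical agents: one posting on a near-hourly heartbeat, one in irregular bursts.
regular = [0, 3600, 7210, 10790, 14400]
bursty = [0, 30, 60, 20000, 20040, 90000]

print(classify_agent(regular))  # autonomous
print(classify_agent(bursty))   # human-influenced
```

A scheduler-driven agent produces nearly constant gaps, so its CoV stays close to zero, while bursts separated by long silences push the CoV above 1.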

Practical Applications

Immediate Applications

Below are concrete, deployable applications that leverage the paper’s methods (temporal CoV fingerprinting, timing-gap/coordination detection, reply-depth decay, myth genealogy, clustering) and findings (human-seeded virality, platform-scaffolded quality, industrial bot farming, passive feed-based network formation).

- Trust & Safety analytics for AI/agent platforms (software/internet)

  - Use CoV-based post-only temporal fingerprinting to score each agent’s “autonomy,” flag high-variance (human-influenced) accounts, and surface clusters with repetitive promotional content.

  - Add timing-gap analysis to detect coordinated bot farms (e.g., sub-second batches, ~12s staggered sequences across accounts).

  - Build a moderator dashboard that combines autonomy scores, reply-depth decay, and cluster-level spam density.

  - Tools/workflows: “CoV Monitor,” “Coordination Gap Analyzer,” “Depth-Decay Panel,” “Cluster Spam Map.”

  - Assumptions/dependencies: Access to high-resolution timestamps and account metadata; knowledge that agents have periodic scheduling or detectable rhythms; calibration of thresholds per platform; privacy-safe telemetry.

- Agent observability inside enterprise AI stacks (software/devops)

  - Instrument AutoGen/MCP/A2A/LangChain-based systems to tag events as heartbeat vs manual invocation; compute per-agent autonomy scores and show drift over time.

  - Alert when human prompting spikes or timing irregularity indicates off-policy behavior in semi-autonomous workflows.

  - Tools/workflows: OpenTelemetry-style “Agent Provenance SDK,” autonomy score dashboards, CI/CD checks for agent behavior regressions.

  - Assumptions/dependencies: Developer integration into agent frameworks; minimal logging overhead; tuned CoV thresholds for each scheduler.

- Bot farm detection and enforcement (cybersecurity/trust & safety)

  - Automatically detect industrial-scale comment flooding via hallmark patterns: extreme account concentration, sub-second batching, repeated co-occurrence with tight inter-comment gaps.

  - Trigger rate-limiters/quarantines and generate evidence packages for enforcement.

  - Tools/workflows: “Bot Farm Radar,” coordinated-activity detectors integrated with rate-limiting and takedown queues.

  - Assumptions/dependencies: Reliable timestamp precision; platform rate limits; clear enforcement policies.

- Content scaffolding optimization (product/design)

  - Adopt SKILL.md-like suggestion scaffolds to raise content naturalness and engagement; A/B test suggested prompts vs organic creation.

  - Monitor how platform suggestions change promo prevalence and user satisfaction.

  - Tools/workflows: Prompt library manager; engagement/naturalness telemetry; automated suggestion rotation.

  - Assumptions/dependencies: Content safety review for templates; measurement framework for “naturalness”; guardrails to prevent homogeneity.

- Rapid provenance checks for newsroom fact-checking (media/journalism)

  - Apply the myth genealogy workflow to trace viral claims to their first appearances, inspect originator autonomy profiles, and measure post-outage prevalence shifts.

  - Publish provenance badges or caveats for screenshots and purported “emergent” agent statements.

  - Tools/workflows: “Myth Tracker” scripts; origin timeline visualizer; autonomy score lookup.

  - Assumptions/dependencies: Public or cooperative platform access to post metadata; training for editorial teams on interpretation.

- Compliance and marketing oversight (advertising/finance)

  - Detect undisclosed, human-operated promotional campaigns posing as autonomous agents; downrank or label human-seeded broadcast injections concentrated at top-level posts.

  - Tools/workflows: Promo cluster flagger, originator CoV scorer, campaign prevalence tracker around platform events.

  - Assumptions/dependencies: Keyword/embedding-based campaign detection; policy on disclosure requirements.

- Crypto/market surveillance (finance)

  - Monitor autonomy scores and coordination fingerprints to detect pump-and-dump narratives seeded by human-influenced agents; watch for sharp prevalence collapses after outages/interventions.

  - Tools/workflows: “Narrative Surveillance” integrating social signals with exchange data; coordinated-activity alerts to compliance teams.

  - Assumptions/dependencies: Data-sharing agreements with platforms; false-positive mitigation; legal processes for action.

- Network hygiene and community moderation (software/internet)

  - Use reply-depth decay and root-level broadcast concentrations to prioritize moderation of shallow, injected spam versus deeper, organic interactions.

  - Adjust feed ranking to reduce visibility of broadcast-injected content from highly irregular agents.

  - Tools/workflows: Depth-weighted ranking, broadcast injection detectors, reciprocity diagnostics.

  - Assumptions/dependencies: Ranking pipeline hooks; acceptable trade-offs between recall and precision.

- Internal governance and incident response (policy/operations)

  - During resets/outages, run “natural experiment” checks to validate autonomy classifications (human-operated agents tend to return first); use results to recalibrate detectors.

  - Tools/workflows: Outage-response playbooks, restart-validation reports.

  - Assumptions/dependencies: Ethical use of operational disruptions; stable baselines for comparison.

- Research reproducibility and benchmarks (academia)

  - Open-source pipelines for post-only CoV computation, timing-gap analysis, clustering, and depth decay; publish benchmark datasets for multi-agent attribution studies.

  - Tools/workflows: Reproducible notebooks, standardized metrics, public leaderboards.

  - Assumptions/dependencies: Data access and de-identification; cross-platform comparability.
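
The timing-gap analysis and account-concentration checks named in the trust & safety and bot-farm items above can be sketched as follows. This is a rough illustration under stated assumptions: the event format `(account, timestamp_seconds)`, the function names, and the `max_gap` and `min_hits` defaults are hypothetical. Only the "sub-second" gap and the focus on a handful of dominant accounts come from the paper's findings.

```python
from collections import Counter

def coordinated_pairs(events, max_gap=1.0, min_hits=3):
    """Count how often two different accounts comment back to back within max_gap seconds.

    `events` is an iterable of (account, timestamp_seconds) tuples.
    Returns only the account pairs seen at least `min_hits` times.
    """
    ordered = sorted(events, key=lambda e: e[1])
    hits = Counter()
    for (acc_a, t_a), (acc_b, t_b) in zip(ordered, ordered[1:]):
        if acc_a != acc_b and (t_b - t_a) <= max_gap:
            hits[tuple(sorted((acc_a, acc_b)))] += 1
    return {pair: n for pair, n in hits.items() if n >= min_hits}

def top_share(events, k=4):
    """Fraction of all comments produced by the k most active accounts."""
    counts = Counter(acc for acc, _ in events)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(n for _, n in counts.most_common(k)) / total

# Hypothetical comment log: two accounts firing in tight batches, two organic accounts.
events = [
    ("bot_a", 100.0), ("bot_b", 100.3),
    ("alice", 150.0),
    ("bot_a", 200.0), ("bot_b", 200.4),
    ("bob", 260.0),
    ("bot_a", 300.0), ("bot_b", 300.2),
]

print(coordinated_pairs(events))  # {('bot_a', 'bot_b'): 3}
print(top_share(events, k=2))     # 0.75
```

Requiring repeated hits rather than a single close pair is one way to reduce false positives from coincidental timing; the right threshold would need calibration per platform.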

Long-Term Applications

These opportunities need broader adoption, scaling, standardization, or additional research to be production-ready.

- Cross-vendor provenance standards for agent actions (software/standards/policy)

  - Define an “Agent Action Provenance” (AAP) standard exposing source-of-control signals (autonomous heartbeat vs human prompt vs tool callback) with cryptographic attestations.

  - Expose a standardized “autonomy score API” to clients and auditors.

  - Assumptions/dependencies: Industry consortium (e.g., MCP/A2A vendors), privacy-preserving attestation, regulatory incentives.

- Real-time governance and labeling of agent content (platform policy/regulation)

  - Mandate disclosure labels for highly human-influenced agent output; throttle or require additional verification for broadcast injections from low-provenance accounts.

  - Integrate autonomy scores into trust tiers for API rate limits and content amplification.

  - Assumptions/dependencies: Policy frameworks; robust, auditable scoring methods; appeal processes to handle edge cases.

- Adaptive feed/ranking algorithms (software/product)

  - Incorporate autonomy, coordination fingerprints, and depth-decay into ranking models to down-weight coordinated broadcasts and up-weight sustained, reciprocal conversations.

  - Assumptions/dependencies: Offline/online experimentation demonstrating user benefit; clear metrics to avoid suppressing legitimate content.

- Cross-platform coordinated manipulation detection (cybersecurity/law enforcement)

  - Share timing-gap and CoV-based indicators across platforms to identify campaigns operating at scale, including cases where one operator runs botnets under different aliases.

  - Assumptions/dependencies: Data-sharing MOUs; secure threat intelligence pipelines; harmonized definitions of “coordinated inauthentic behavior.”

- Financial market integrity systems (finance/regtech)

  - Build exchange-level or regulator-run monitors that link agent-society narratives to trading anomalies, using autonomy and coordination indicators to separate organic chatter from manipulation.

  - Assumptions/dependencies: Integration with trade/quote data; legal authority; adversarial adaptation.

- Swarm robotics and teleoperation verification (robotics/safety)

  - Adapt temporal fingerprinting to distinguish autonomous control loops from human teleoperation in multi-robot systems; flag irregular command timing indicative of human takeover.

  - Assumptions/dependencies: Access to control-loop telemetry; mapping CoV thresholds to control frequencies; safety validation.

- Healthcare and education multi-agent ecosystems (health/edtech)

  - In clinician-assistant or tutor-agent collectives, audit when human supervisors override autonomy and how influence propagates/decays through agent teams; ensure accountability.

  - Assumptions/dependencies: HIPAA/FERPA-compliant telemetry; human factors research to set safe override patterns.

- Consumer-facing provenance UX (consumer software)

  - Browser/app indicators showing “Likely Autonomous” vs “Likely Human-Steered” based on platform-exposed provenance and on-device timing analysis; media literacy overlays for viral screenshots.

  - Assumptions/dependencies: Platform cooperation to supply signals; clear UI guidelines to avoid misinterpretation.

- Autonomous agent self-governance and meta-controllers (software/AI safety)

  - Agents adaptively constrain posting when irregular timing suggests off-policy human manipulation; require higher confidence or review before high-impact actions.

  - Assumptions/dependencies: Reliable self-monitoring; incentive-compatible objectives; safeguards against denial-of-service via false flags.

- Synthetic societies and governance research (academia/policy)

  - Use the framework to study emergent properties, forgetting half-lives, and alignment in large-scale agent societies; inform standards on acceptable autonomy and oversight.

  - Assumptions/dependencies: Large-scale experimental testbeds; ethical oversight; funding for longitudinal studies.

- Dataset annotation and model training for moderation (AI/tooling)

  - Build labeled corpora with autonomy/human-influence tags to train classifiers that scale beyond CoV heuristics (e.g., sequence models combining timing, content, and network signals).

  - Assumptions/dependencies: Ground-truth acquisition; privacy protections; robustness to adversarial behavior.
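
Several items above rely on reply-depth decay and "forgetting half-lives." One plausible way to estimate such a half-life is sketched below. It assumes that a content characteristic decays exponentially with reply depth and fits the decay rate by least squares on the log of the values. The paper reports half-lives of 0.58 and 0.72 conversation depths, but the exponential model, the fitting procedure, and the function name here are assumptions rather than the authors' documented method.

```python
import math

def decay_half_life(values_by_depth):
    """Estimate a half-life (in reply depths) from a per-depth measurement.

    `values_by_depth[d]` is the average strength of some content characteristic
    at reply depth d (must be positive). Assumes y_d = y_0 * exp(-lam * d), fits
    lam by ordinary least squares on log(y), and returns ln(2) / lam.
    """
    if len(values_by_depth) < 2:
        raise ValueError("need at least two depths")
    if any(v <= 0 for v in values_by_depth):
        raise ValueError("values must be positive to take logarithms")
    xs = list(range(len(values_by_depth)))
    ys = [math.log(v) for v in values_by_depth]
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    if slope >= 0:
        return math.inf  # no measurable decay
    return math.log(2) / -slope

# Synthetic check: a signal that halves at every depth has a half-life of about 1 depth.
print(decay_half_life([0.8, 0.4, 0.2, 0.1]))
```

Running the same function on human-seeded and autonomous threads separately would yield the two half-lives to compare, with a shorter half-life meaning the seeded characteristic fades faster down the reply chain.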

These applications hinge on a few common factors: access to trustworthy, high-resolution telemetry; platform willingness to expose or standardize provenance signals; careful tuning to each agent framework’s scheduling characteristics; and governance that balances safety, privacy, and transparency.
