- The paper introduces NiFi, a framework that couples extreme 3DGS compression with one-step diffusion-based restoration to achieve compression ratios near 1000× while preserving high perceptual fidelity.

- It employs a two-stage process: artifact synthesis using pruned 3DGS and restoration with a latent diffusion model enhanced by low-rank and critic adapters.

- Experimental results on multiview datasets demonstrate significant size reduction (e.g., from 555 MB to 0.599 MB) while retaining fine geometric details and state-of-the-art visual quality.

Extreme Compression of 3D Gaussian Splatting via Diffusion Model Restoration: The NiFi Framework

Introduction

3D Gaussian Splatting (3DGS) has rapidly solidified its standing as a premier method for real-time novel view synthesis due to its sparse, parallelizable primitive representation. However, despite its rendering efficiency, 3DGS incurs substantial storage and transmission costs due to the large number of Gaussian primitives required to maintain high perceptual fidelity. Existing compression techniques, including structured (anchor-based, graph-based) and unstructured schemes (quantization, pruning), achieve impressive rate-distortion trade-offs but invariably introduce severe artifacts when targeting extreme-rate regimes (100× and beyond), fundamentally degrading both the geometry and appearance of rendered scenes.

"Nix and Fix: Targeting 1000x Compression of 3D Gaussian Splatting with Diffusion Models" (2602.04549) identifies the restoration of 3DGS compression artifacts as a central bottleneck and presents NiFi, a two-stage framework that fuses extreme-rate 3DGS compression with one-step artifact-aware restoration leveraging diffusion models. This approach enables compression ratios approaching 1000× while retaining state-of-the-art perceptual quality, opening the door for scalable deployment of photorealistic 3D content in bandwidth-constrained applications.

Compression Artifacts and the Motivation for Restoration

Current 3DGS compression relies on aggressive pruning, quantization, and entropy coding (Figure 1), which produce highly non-trivial degradations distinct from classical 2D image distortions: loss of geometric detail, radiance collapse, and semantically inconsistent blurring. After extreme compression, renderings lack the structural and textural cues that immersive scenes require, and these cues are hard to recover even for sophisticated downstream restoration networks. These challenges motivate a restoration approach tailored to the distinctive artifact structure of compressed 3DGS.

Figure 1: Artifacts resulting from 3DGS compression at different rates. Degradation is driven by pruning, quantization, and entropy coding, and manifests as loss of geometry, texture, and radiance.

Methodology: NiFi Framework

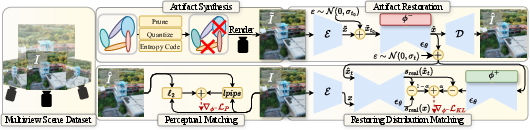

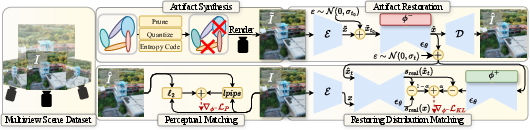

NiFi divides the pipeline into compression artifact synthesis and restoration via a diffusion-based, artifact-aware model. The overall system is illustrated in Figure 2.

Figure 2: The overall pipeline of NiFi, showing the generation of degraded/ground-truth image pairs, their mapping to the diffusion latent space, and one-step restoration via a diffusion model adapted for artifact removal.

Artifact Synthesis

Artifact synthesis begins with a pretrained 3DGS model, which is pruned at exponentially spaced rates (parameterized by a minimum primitive count and a gradient-based pruning metric derived from the rendering loss) to produce a spectrum of compressed representations. Quantization to 8 bits and entropy coding reduce storage further. Pairs of clean and degraded novel-view renderings are then assembled into a restoration dataset.
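The synthesis step can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the authors' implementation: the geometric budget schedule, the random importance scores standing in for the gradient-based pruning metric, the per-attribute min-max 8-bit quantizer, and the use of empirical entropy as a proxy for a real entropy coder are all our choices, and every function name is hypothetical.

```python
import numpy as np


def exponential_keep_counts(n_total: int, n_min: int, num_levels: int) -> list[int]:
    """Primitive budgets spaced geometrically from n_total down to n_min.

    Hypothetical schedule: the text only states that pruning rates are
    exponentially spaced and bounded below by a minimum primitive count.
    """
    budgets = np.geomspace(n_total, n_min, num=num_levels)
    return [int(round(b)) for b in budgets]


def prune_by_importance(attrs: np.ndarray, importance: np.ndarray, keep: int) -> np.ndarray:
    """Keep the `keep` primitives with the highest importance scores.

    `importance` is a stand-in for the gradient-based metric derived from
    the rendering loss; computing that metric requires a differentiable renderer.
    """
    order = np.argsort(importance)[::-1]  # indices sorted most-important first
    return attrs[order[:keep]]


def quantize_8bit(x: np.ndarray):
    """Uniform per-attribute (per-column) min-max quantization to uint8 codes."""
    lo = x.min(axis=0)
    hi = x.max(axis=0)
    scale = np.where(hi > lo, (hi - lo) / 255.0, 1.0)  # guard constant columns
    codes = np.clip(np.round((x - lo) / scale), 0, 255).astype(np.uint8)
    return codes, lo, scale


def dequantize_8bit(codes: np.ndarray, lo: np.ndarray, scale: np.ndarray) -> np.ndarray:
    """Map uint8 codes back to approximate attribute values."""
    return codes.astype(np.float64) * scale + lo


def entropy_bits_per_symbol(codes: np.ndarray) -> float:
    """Empirical Shannon entropy of the codes: a lower bound for an ideal entropy coder."""
    _, counts = np.unique(codes, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_total = 100_000
    attrs = rng.normal(size=(n_total, 14))  # random stand-in for per-Gaussian attributes
    importance = rng.random(n_total)        # random stand-in for the pruning metric

    for keep in exponential_keep_counts(n_total, n_min=1_000, num_levels=5):
        pruned = prune_by_importance(attrs, importance, keep)
        codes, lo, scale = quantize_8bit(pruned)
        bits = entropy_bits_per_symbol(codes) * codes.size
        print(f"{keep:>7} primitives -> ~{bits / 8 / 1e6:.3f} MB (entropy estimate)")
```

Rendering each pruned, dequantized model alongside the original from the same camera poses would then yield one degraded/clean image pair per view and per rate.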

Artifact Restoration via One-Step Diffusion Distillation

The core of the restoration stage is a variational one-step distillation of a latent diffusion model (LDM) backbone. Rather than starting from the final, fully noised state, the compressed renderings are first mapped to the LDM's intermediate latent at diffusion step $t_0$. This mapping enables the model to capture stochastic diversity and complex artifact distributions.
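One plausible reading of the $t_0$ mapping, assuming the standard DDPM forward process, is sketched below: the encoded degraded latent is diffused to step $t_0$, and a single noise prediction is converted back into a clean-latent estimate in closed form. Whether NiFi injects noise at $t_0$ or uses the encoded latent directly as $z_{t_0}$ is not specified here, and the linear beta schedule, $T = 1000$, and $t_0 = 199$ are hypothetical. The VAE encoder and the noise predictor are omitted, so latents are plain arrays.

```python
import numpy as np

# Hypothetical noise schedule: the standard DDPM linear beta schedule with
# T = 1000 steps. The actual LDM backbone may use a different schedule.
T = 1000
betas = np.linspace(1e-4, 0.02, T)
alpha_bar = np.cumprod(1.0 - betas)  # cumulative signal retention, \bar{alpha}_t


def map_to_t0(z_degraded: np.ndarray, t0: int, rng: np.random.Generator):
    """Diffuse the encoded degraded latent forward to step t0.

    z_t0 = sqrt(abar[t0]) * z + sqrt(1 - abar[t0]) * eps
    Returns the noisy latent and the noise that was injected.
    """
    eps = rng.standard_normal(z_degraded.shape)
    a = alpha_bar[t0]
    return np.sqrt(a) * z_degraded + np.sqrt(1.0 - a) * eps, eps


def one_step_estimate(z_t: np.ndarray, t: int, eps_hat: np.ndarray) -> np.ndarray:
    """Closed-form clean-latent estimate from a single noise prediction eps_hat.

    In NiFi, eps_hat would come from the frozen noise predictor with the
    low-rank adapter attached; here it is simply an argument.
    """
    a = alpha_bar[t]
    return (z_t - np.sqrt(1.0 - a) * eps_hat) / np.sqrt(a)


if __name__ == "__main__":
    t0 = 199  # hypothetical intermediate step
    for t in (t0, T - 1):
        print(f"t={t:4d}: signal weight sqrt(abar) = {np.sqrt(alpha_bar[t]):.3f}")
```

The printout contrasts how much of the input latent survives at $t_0$ versus at the final step. This offers one intuition for why starting from an intermediate state can help: near the end state, almost none of the degraded rendering's information remains in $z_t$.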

Restoration is framed as sampling from a learned posterior $p_{\text{restore}}$, guided by the real-image prior $p_{\text{real}}$ parameterized by the LDM:

- The model architecture extends the frozen noise predictor $\epsilon_\theta$ with a low-rank adapter $\phi^-$ for one-step artifact removal.

- Distribution matching between restored and clean samples is achieved by minimizing the KL divergence between $p_{\text{restore}}$ and $p_{\text{real}}$ in the latent space, approximated via distributional score matching.

- Perceptual fidelity is further promoted by augmenting the loss with ℓ2 and LPIPS terms.

- A critic adapter $\phi^+$ models the distribution of restored samples, which stabilizes score matching.
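Written out, the objective described in this list might take the following form. This is a sketch in the style of variational score distillation, not the paper's exact formulation: the weights $\lambda$, the time weighting $w(t)$ (which absorbs schedule constants), the decoder $\mathcal{D}$, the stop-gradient $\operatorname{sg}$, and the notation $\epsilon_{\theta,\phi^+}$ for the frozen predictor with the critic adapter attached are assumptions. Let $\hat z_0$ be the one-step restored latent produced from $z_{t_0}$ by $\epsilon_\theta$ with adapter $\phi^-$, $\hat x = \mathcal{D}(\hat z_0)$ its decoded image, $x$ the clean ground-truth view, and $z_t = \sqrt{\bar\alpha_t}\,\hat z_0 + \sqrt{1-\bar\alpha_t}\,\epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$.

$$
\mathcal{L}(\phi^-) = \lambda_{\mathrm{KL}}\, D_{\mathrm{KL}}\big(p_{\text{restore}} \,\|\, p_{\text{real}}\big) + \lambda_{2}\, \|\hat x - x\|_2^2 + \lambda_{\mathrm{LPIPS}}\, \mathrm{LPIPS}(\hat x, x)
$$

$$
\nabla_{\phi^-} D_{\mathrm{KL}}\big(p_{\text{restore}} \,\|\, p_{\text{real}}\big) \approx \mathbb{E}_{t,\epsilon}\Big[ w(t)\,\big(\epsilon_\theta(z_t, t) - \epsilon_{\theta,\phi^+}(z_t, t)\big)\, \frac{\partial \hat z_0}{\partial \phi^-} \Big]
$$

$$
\mathcal{L}(\phi^+) = \mathbb{E}_{t,\epsilon}\Big[ \big\| \epsilon_{\theta,\phi^+}(z_t, t) - \epsilon \big\|_2^2 \Big], \qquad z_t = \sqrt{\bar\alpha_t}\, \operatorname{sg}(\hat z_0) + \sqrt{1-\bar\alpha_t}\, \epsilon
$$

Under this reading, the critic is trained with the ordinary denoising loss on restored samples, so $\epsilon_{\theta,\phi^+}$ tracks the score of $p_{\text{restore}}$ while the frozen $\epsilon_\theta$ supplies the score of $p_{\text{real}}$; their difference is the score-matching signal that pushes restored latents toward the clean-image distribution.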

The ablation study shows that restoring from the intermediate diffusion state $t_0$ substantially outperforms conventional restoration from the final, fully noised state.

Experimental Evaluation

Datasets and Baselines

NiFi is evaluated on standard multiview datasets (Mip-NeRF360, Tanks & Temples, DeepBlending), with the restoration model trained on artifact pairs synthesized from DL3DV scenes. The baselines are state-of-the-art methods: HAC++ for 3DGS compression; BM3D (classical), SwinIR, and DiffBIR for image restoration; and Difix3D for 3DGS restoration. LPIPS and DISTS serve as the principal perceptual metrics, since the focus is on visual plausibility rather than exact reconstruction.

Quantitative and Qualitative Results

NiFi achieves compression ratios of up to 927× with perceptual quality comparable or superior to the uncompressed 3DGS baseline, and it substantially outperforms the low-rate 3DGS compression and restoration baselines. For example, on DeepBlending, storage drops from $555$ MB to $0.599$ MB with minimal LPIPS degradation.
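The headline ratio follows directly from the DeepBlending sizes. A quick check, using a small hypothetical helper:

```python
def compression_stats(original_mb: float, compressed_mb: float) -> tuple[float, float]:
    """Return (compression ratio, percent size reduction) for two sizes in MB."""
    ratio = original_mb / compressed_mb
    reduction_pct = 100.0 * (1.0 - compressed_mb / original_mb)
    return ratio, reduction_pct


ratio, reduction = compression_stats(555.0, 0.599)
print(f"{ratio:.1f}x smaller, {reduction:.2f}% size reduction")
# prints "926.5x smaller, 99.89% size reduction"
```

Rounded, this is the 927× figure, meaning roughly 99.9% of the original storage is removed.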

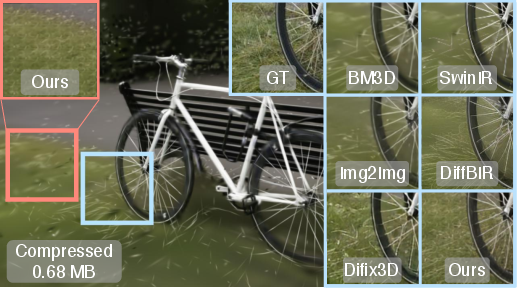

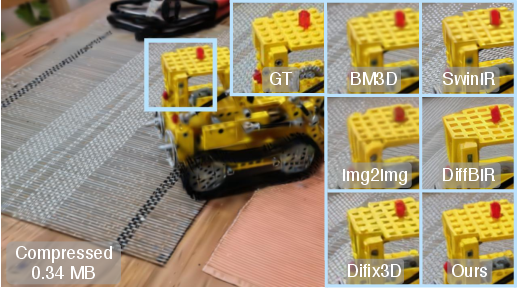

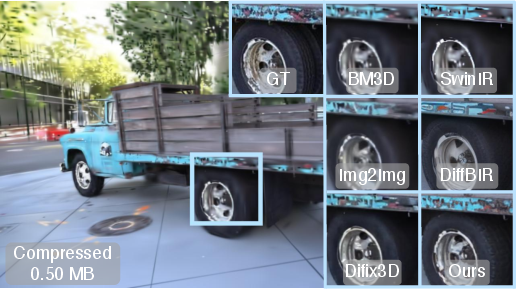

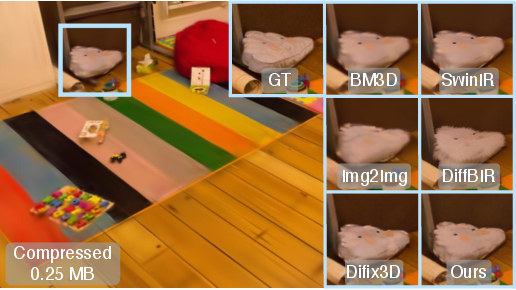

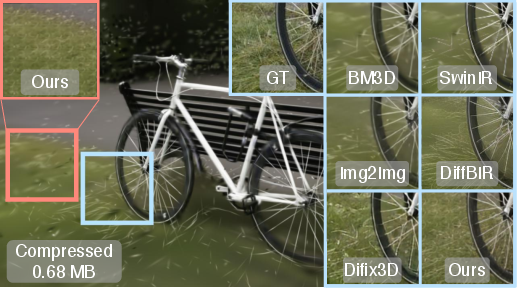

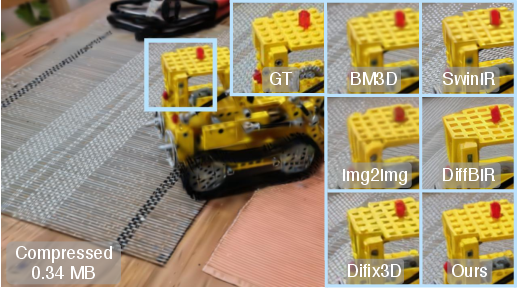

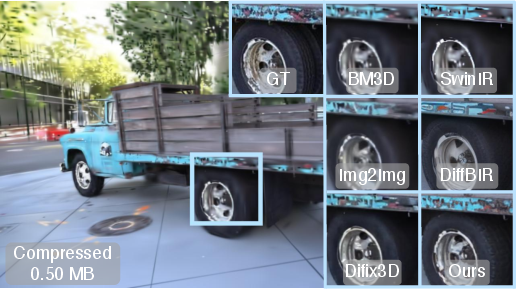

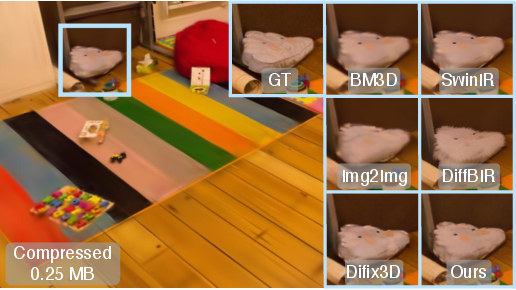

Figure 3 presents qualitative results: NiFi restores fine details, semantically coherent appearance, and geometric structure in novel views, even at rates where standard restoration or generative methods fail. The system does, however, occasionally introduce visually excessive high-frequency patterns, particularly in fine-grained regions (e.g., grass), as highlighted in the provided visualizations.

Figure 3: Qualitative results on four scenes (bicycle, kitchen, truck, playroom), comparing NiFi's restoration (highlighted boxes) of extremely compressed 3DGS renderings with competing methods.

Implications and Prospects

Practical Impact

NiFi makes 3DGS practical for applications with severe bandwidth or storage constraints, including immersive communication, VR/AR streaming, remote collaboration, and edge-enabled photorealistic scene transmission. Because compression and restoration are decoupled, aggressive splat pruning can run on-device while the computationally heavy restoration runs off-device, or vice versa.

Theoretical Implications

The framework formalizes perceptual restoration of 3DGS compression artifacts as distribution matching in the latent diffusion space, demonstrating that a properly guided stochastic prior can handle not merely average-case denoising but highly structured, semantics-level artifact recovery. The ablation indicates that the explicit mapping to intermediate diffusion states is essential for handling the complexity of structured 3D artifacts.

Limitations and Future Directions

Despite its strong perceptual quality, NiFi may accentuate high-frequency details, potentially hallucinating features absent in the original scene, risking misrepresentation in certain contexts (e.g., medical 3D visualization). Future research should focus on regularizing high-frequency restoration and integrating geometric consistency constraints. Additionally, extending the method to handle real-scene capture noise and more diverse 3D synthesis tools will be important for robust deployment.

Conclusion

NiFi represents a significant advance in 3DGS compression by coupling extreme pruning and quantization with artifact-aware, diffusion-driven restoration. Empirical results indicate that it achieves state-of-the-art perceptual scores at storage sizes nearly three orders of magnitude smaller than uncompressed 3DGS, establishing a new paradigm for scalable, high-fidelity 3D content delivery. Future work should tackle over-restoration, generalization to unconstrained scenes, and integration with real-time 3DGS pipelines.