Multiplex Thinking: Reasoning via Token-wise Branch-and-Merge

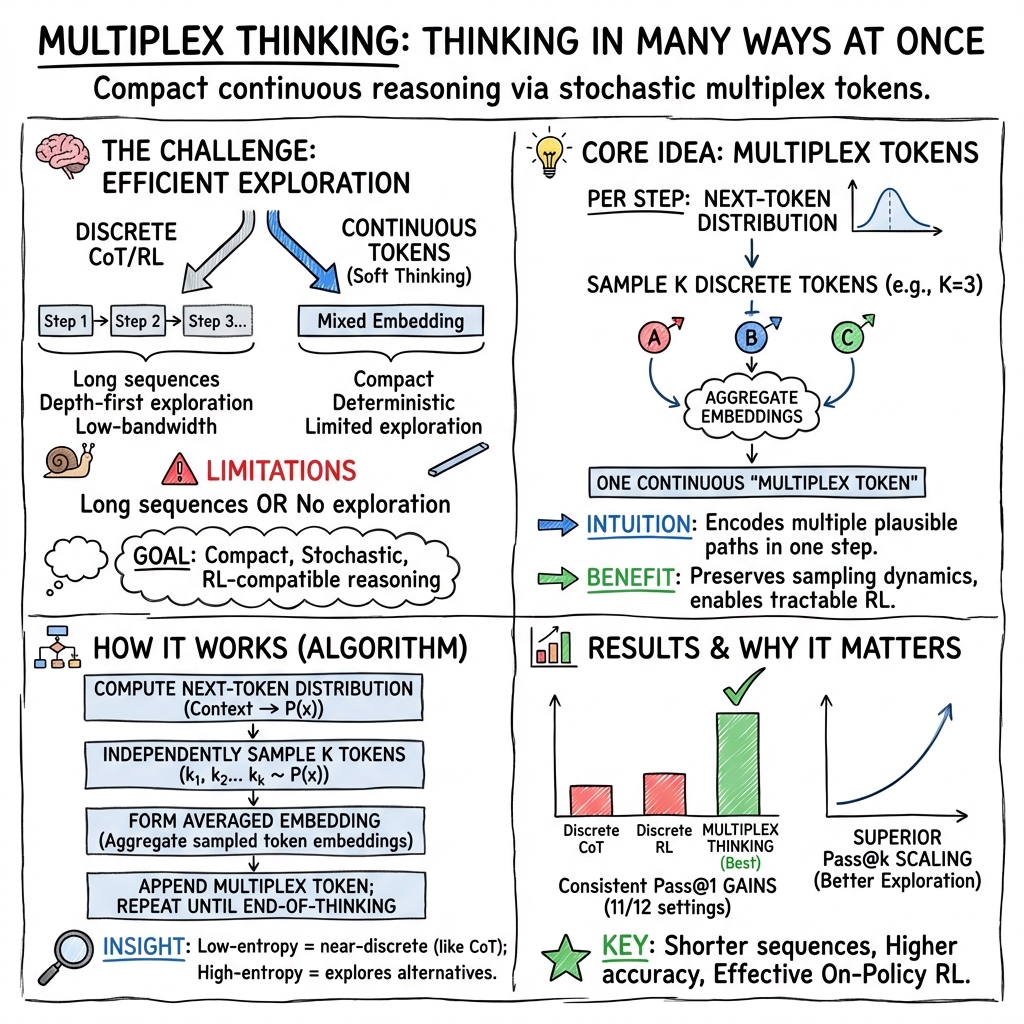

Abstract: LLMs often solve complex reasoning tasks more effectively with Chain-of-Thought (CoT), but at the cost of long, low-bandwidth token sequences. Humans, by contrast, often reason softly by maintaining a distribution over plausible next steps. Motivated by this, we propose Multiplex Thinking, a stochastic soft reasoning mechanism that, at each thinking step, samples K candidate tokens and aggregates their embeddings into a single continuous multiplex token. This preserves the vocabulary embedding prior and the sampling dynamics of standard discrete generation, while inducing a tractable probability distribution over multiplex rollouts. Consequently, multiplex trajectories can be directly optimized with on-policy reinforcement learning (RL). Importantly, Multiplex Thinking is self-adaptive: when the model is confident, the multiplex token is nearly discrete and behaves like standard CoT; when it is uncertain, it compactly represents multiple plausible next steps without increasing sequence length. Across challenging math reasoning benchmarks, Multiplex Thinking consistently outperforms strong discrete CoT and RL baselines from Pass@1 through Pass@1024, while producing shorter sequences. The code and checkpoints are available at https://github.com/GMLR-Penn/Multiplex-Thinking.


Explain it Like I'm 14

What is this paper about?

This paper introduces a new way for large language models (LLMs) to “think” through hard problems, especially math. Instead of writing out long step‑by‑step explanations one word at a time, the model creates special “multiplex tokens” that pack several possible next steps into a single token. This makes reasoning shorter, faster, and more flexible, while still letting the model explore different ideas and learn from trial and error.

What questions are the researchers trying to answer?

- Can a model represent multiple possible reasoning paths at once, inside a single token, so it doesn’t have to write long chains of thought?

- Can this “soft” token still behave like normal sampling (trying different words), so it works well with reinforcement learning (RL), which needs exploration?

- Does this approach actually improve accuracy on tough math problems, and does it keep getting better when you allow more tries?

- How wide should each multiplex token be (how many candidate words to bundle), and does bundling reduce the total length of the model’s response?

How does the method work?

Think of normal LLM reasoning like walking down a single path: at each step, the model picks one next word (a “token”). If the model later wants to try another path, it has to start over and generate another full sequence. That’s slow and costly.

Multiplex Thinking changes that:

- At each reasoning step, the model doesn’t pick just one word. It samples K candidate words independently (like picking several possible directions at once).

- Each word has an “embedding,” which you can imagine as its GPS coordinates in “word meaning space.” The model combines the K embeddings (by averaging or weighting them by confidence) into one continuous vector. This is the “multiplex token.”

- If the model is confident (its probabilities are sharp), the K samples often agree, and the multiplex token behaves like a normal single word. If the model is unsure, the samples differ, and the multiplex token compactly represents several plausible next steps without making the text longer.
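The branch-and-merge step described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: `multiplex_token` is a hypothetical name, the logits and embedding matrix are toy arrays, and the optional reweighting (scale each sampled entry by its probability, then renormalize) is an assumed form, since the paper's exact weighting formula is not reproduced in this summary.

```python
import numpy as np


def multiplex_token(logits, E, K, rng, temperature=1.0, reweight=False):
    """Build one multiplex embedding from next-token logits (toy sketch).

    logits: shape (V,); E: embedding matrix of shape (V, d).
    Returns the continuous embedding (shape (d,)) and the K sampled ids.
    """
    z = logits / temperature
    p = np.exp(z - z.max())  # numerically stable softmax
    p /= p.sum()
    # Branch: draw K token ids independently from the next-token distribution.
    ids = rng.choice(len(p), size=K, replace=True, p=p)
    # Merge: average the K one-hot vectors; repeated ids accumulate weight.
    s = np.bincount(ids, minlength=len(p)).astype(float) / K
    if reweight:
        # Assumed reweighting: scale sampled entries by their probability,
        # then renormalize so the weights still sum to 1.
        s = s * p
        s /= s.sum()
    return s @ E, ids


rng = np.random.default_rng(0)
E = rng.normal(size=(5, 3))                    # toy vocabulary of 5 tokens, d = 3
peaked = np.array([30.0, 0.0, 0.0, 0.0, 0.0])  # confident: very low entropy
flat = np.zeros(5)                             # uncertain: uniform distribution
emb_peaked, ids_peaked = multiplex_token(peaked, E, K=4, rng=rng)
emb_flat, ids_flat = multiplex_token(flat, E, K=4, rng=rng)
```

With the peaked logits all four draws land on the same token, so the multiplex embedding is just that token's ordinary embedding; with the flat logits it becomes an average of several embeddings.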

Why this helps with learning:

- The probability of a multiplex token is just the product of the probabilities of the K sampled words. That makes it easy to calculate how likely a full “multiplex reasoning trace” is.

- Because the method keeps true randomness (stochastic sampling), it works naturally with reinforcement learning (RL), where the model tries actions, gets rewards (like points for correct answers), and improves based on its own recent behavior.
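Because the K draws are independent, the log-probability of a multiplex token is the sum of K token log-probabilities, and a whole trace's log-probability is the sum over steps. The sketch below treats the K draws as an ordered tuple (so no multinomial coefficient is added) and uses hypothetical helper names; it illustrates the product rule stated above rather than reproducing the paper's code.

```python
import numpy as np


def multiplex_logprob(p, ids):
    """log P(multiplex token) = sum of log-probs of its K independent draws."""
    return float(np.sum(np.log(p[ids])))


def trajectory_logprob(step_probs, step_ids):
    """Log-probability of a whole multiplex rollout: sum over reasoning steps."""
    return sum(multiplex_logprob(p, ids) for p, ids in zip(step_probs, step_ids))


p = np.array([0.5, 0.3, 0.2])
# Drawing token 0 twice and token 1 once has probability 0.5 * 0.5 * 0.3 = 0.075.
lp = multiplex_logprob(p, np.array([0, 0, 1]))
```

In a policy-gradient update, this summed log-probability is what gets weighted by the reward signal; whether that gradient stays unbiased when the model conditions on the merged embedding is listed as an open question under Knowledge Gaps.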

Key ideas explained in everyday terms:

- Token: A piece of text the model outputs (often a word or part of a word).

- Embedding: A numeric representation of a token’s meaning (like coordinates).

- Entropy: A measure of uncertainty. High entropy means the model sees many good options; low entropy means it’s confident.

- Reinforcement Learning (RL): Training by trial and error. The model gets a reward when its answer matches the correct one and learns to do better.

- Pass@k: If you let the model try k times, Pass@k is the chance that at least one of those tries is correct.
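Pass@k is usually not measured by drawing exactly k samples once. A common approach, the unbiased estimator introduced by Chen et al. (2021) for code generation, draws n ≥ k samples per problem, counts the c correct ones, and computes the chance that a random subset of k samples contains at least one correct answer. This summary does not say which estimator the authors use (a later section mentions bootstrapped curves), so treat the function below as an illustration of the definition only.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased Pass@k estimate from n samples, c of which are correct (n >= k)."""
    if n - c < k:
        return 1.0  # every size-k subset must contain a correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


# 4 samples, 1 correct: one try succeeds 1/4 of the time,
# and two tries succeed 1 - C(3,2)/C(4,2) = 1/2 of the time.
print(pass_at_k(4, 1, 1), pass_at_k(4, 1, 2))
```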

How did they test it?

They built Multiplex Thinking on two open models (DeepSeek‑R1‑Distill‑Qwen at 1.5B and 7B sizes) and trained with a common RL method (GRPO). They evaluated on tough math datasets (like AIME 2024/2025, AMC 2023, MATH‑500, Minerva Math, and OlympiadBench) and compared against:

- Discrete Chain‑of‑Thought (CoT): standard step‑by‑step reasoning with one token per step.

- Discrete RL: same models fine‑tuned with RL but still using one token per step.

- Stochastic Soft Thinking: a recent continuous‑token baseline that adds noise to enable exploration.

They measured both Pass@1 (one try) and Pass@k up to 1024 (lots of tries), and also looked at how long the responses were.
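GRPO replaces a learned value baseline with a group baseline: several rollouts are sampled for the same problem, each gets a verifiable 0/1 reward, and each rollout's advantage is its reward normalized by the group's mean and standard deviation. The sketch below shows that standard group-normalized form (Shao et al., 2024) with a hypothetical exact-match reward. It is an assumption that the paper uses exactly this normalization, and the sketch omits the clipped surrogate loss and the KL term (reported as zero in this setup).

```python
import numpy as np


def exact_match_reward(answer, ground_truth):
    """Hypothetical verifiable reward: 1.0 if the final answer matches, else 0.0."""
    return 1.0 if answer.strip() == ground_truth.strip() else 0.0


def grpo_advantages(rewards, eps=1e-6):
    """Group-relative advantages: (r - mean) / (std + eps) within one prompt's group."""
    r = np.asarray(rewards, dtype=float)
    return (r - r.mean()) / (r.std() + eps)


answers = ["42", "41", " 42", "7"]  # final answers from 4 rollouts of one problem
rewards = [exact_match_reward(a, "42") for a in answers]
adv = grpo_advantages(rewards)      # correct rollouts get positive advantage
```

A group in which every rollout is right (or every one is wrong) gets zero advantage everywhere and therefore contributes no learning signal for that problem.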

What did they find, and why is it important?

Here are the main takeaways, summarized to highlight what matters:

- Higher accuracy on hard math: Multiplex Thinking beat strong baselines in most settings, including both model sizes and multiple datasets. It did better at Pass@1 and kept improving more than others when allowed more tries (Pass@k up to 1024).

- Shorter reasoning: Because each multiplex token can carry multiple ideas, the model’s responses were shorter on average but still more accurate. That means less text to generate and read.

- Better exploration for RL: The method kept healthy uncertainty (entropy) longer during training, which helps RL find better solutions instead of getting stuck too early on one path.

- Works even without training: An “inference‑only” version (just using multiplex tokens at test time, no extra training) already improved over standard CoT, showing the representation itself is powerful.

- Practical tuning: Using K ≥ 2 (bundling at least two candidate tokens per step) gave big gains, and increasing K further helped but with diminishing returns. In short, small K values are often enough.

- Robust design: Different ways of combining the K embeddings (simple average vs. probability‑weighted) both worked well, suggesting the core idea—packing multiple options into one token—is what drives the benefit.
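The self-adaptive behavior behind these K results can be made concrete with two exact formulas for K i.i.d. draws from a next-token distribution p: the probability that all K draws coincide (so the multiplex token is just a discrete token) is Σ_v p(v)^K, and the expected number of distinct tokens merged is Σ_v [1 - (1 - p(v))^K]. The helpers below are hypothetical illustrations of these identities, not part of the paper.

```python
import numpy as np


def collapse_probability(p, K):
    """Probability that all K i.i.d. draws pick the same token: sum_v p(v)^K."""
    p = np.asarray(p, dtype=float)
    return float(np.sum(p ** K))


def expected_distinct(p, K):
    """Expected number of distinct tokens among K i.i.d. draws."""
    p = np.asarray(p, dtype=float)
    return float(np.sum(1.0 - (1.0 - p) ** K))


confident = [0.97, 0.01, 0.01, 0.01]
uncertain = [0.25, 0.25, 0.25, 0.25]
for K in (1, 2, 4):
    print(K, collapse_probability(confident, K), expected_distinct(uncertain, K))
```

With the confident distribution, four draws still collapse to a single token about 88% of the time, while with the uniform one K = 4 merges about 2.7 distinct tokens on average; the expected count grows sublinearly in K.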

These results matter because they suggest we can get stronger reasoning without always paying the high “token cost” of long chains of thought. That could mean better performance with fewer generated tokens, both during RL training and when you run the model to solve problems.

What is the impact and what could come next?

Multiplex Thinking offers a more efficient way for LLMs to reason:

- It can make models faster and cheaper to use by shortening responses while keeping or improving accuracy.

- It fits naturally with reinforcement learning, helping models learn better strategies through trial and error.

- It can be combined with existing techniques that try multiple full solutions (like Self‑Consistency or Tree‑of‑Thought), because multiplex tokens improve the per‑step representation rather than just increasing the number of full attempts.
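Combining multiplex rollouts with Self-Consistency only changes how each candidate is generated; the aggregation step is still a vote over final answers. A minimal majority-vote sketch, assuming the final answers have already been extracted as strings (the extraction step and the first-seen tie-breaking rule are illustrative choices, not the paper's):

```python
from collections import Counter


def majority_vote(final_answers):
    """Return the most common final answer; ties go to the answer seen first."""
    counts = Counter(a.strip() for a in final_answers)
    return counts.most_common(1)[0][0]


print(majority_vote(["12", "15", "12 ", "9"]))
```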

In simple terms: this approach helps models think more like people do—keeping several promising ideas in mind at once—without writing long, slow explanations. That could make future AI systems better at math, logic, and planning, all while using less time and money.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains uncertain or unexplored in the paper and could guide future research.

- Theoretical validity of probability factorization: rigorously justify that treating a multiplex token’s log-probability as the sum/product over independently sampled constituents yields an unbiased REINFORCE gradient when the model actually conditions on a single aggregated continuous embedding.

- Credit assignment within a multiplex token: develop methods to attribute reward to individual sampled constituents in a multiplex token (e.g., per-sample advantages, counterfactual baselines) to reduce variance and improve learning stability.

- Independence assumption and diversity: test sampling without replacement or diversity-promoting strategies across the K samples to avoid duplicate picks in low-entropy regimes and assess effects on exploration and accuracy.

- Early termination policy: systematically ablate how to handle "[eot]" among the K samples (e.g., any, majority, threshold, or weighted criteria) and measure the impact on premature termination, accuracy, and sequence length.

- Adaptive K scheduling: learn to vary K per step/problem based on uncertainty, compute budget, or reward signals; analyze trade-offs versus fixed-K training and inference.

- Aggregation function design: explore nonlinear or learned aggregators (attention/MLP/gating) over sampled embeddings; compare to simple averaging/reweighting and assess robustness, on-manifold constraints, and stability.

- Information preservation and interference: quantify how well multiplex tokens preserve multimodality through transformer layers (e.g., probing/decoding sub-modes across depth), and characterize interference among superposed paths.

- Probability calibration and gradient scaling: study calibration of log-probabilities under the product-of-probability semantics, gradient magnitude growth with K, and normalization strategies to stabilize optimization.

- RL objective design: evaluate process-level/verifier rewards, hybrid process+outcome rewards, and alternative on-policy algorithms (PPO variants, KL regularization schedules, entropy bonuses) beyond GRPO with zero KL/entropy penalties.

- Sensitivity to sampling hyperparameters: perform thorough analyses over temperature, top-p, and their schedules during training and evaluation; establish robust default settings and failure modes.

- Train–test K mismatch: train with one K and run inference with another (smaller or larger) to understand generalization and budget adaptation.

- Compute and wall-clock efficiency: report end-to-end throughput, latency, GPU memory/KV-cache behavior, and cost per correct solution versus discrete baselines; separate token-count savings from actual runtime gains.

- Long-context behavior: extend length-scaling beyond 5k tokens and analyze asymptotic trade-offs between sequence length and K for very long reasoning chains.

- Domain generalization: validate on non-math tasks (code generation, commonsense, multi-hop QA, symbolic reasoning), multilingual and multimodal settings, and tool-augmented reasoning to assess breadth of applicability.

- Model scaling: test on substantially larger models (e.g., 13B–70B, MoE) to verify whether interference resolution and gains scale; measure stability and catastrophic forgetting risks during RL.

- Integration with parallel reasoning: empirically combine multiplex thinking with Self-Consistency, Best-of-N, Tree-of-Thoughts, and verifier-guided search; quantify additive gains and compute efficiency.

- Robustness and safety: evaluate hallucination, faithfulness of reasoning traces, adversarial robustness, and calibration under distribution shift; compare to discrete CoT and Soft Thinking.

- Data contamination checks: audit training data (DeepScaleR-Preview) for overlap with evaluation sets (e.g., AIME/AMC) and report decontamination procedures to ensure evaluation hygiene.

- Special-token portability: assess reliance on "[bot]"/"[eot]" across different tokenizers/models; provide strategies for models lacking these tokens and for multilingual tokenization.

- Failure analysis: characterize tasks/steps where multiplex thinking harms performance (e.g., deterministic arithmetic), and devise a dynamic fallback to discrete decoding or K=1 when appropriate.

- Training duration and data scaling laws: study effects of longer RL training, larger/cleaner datasets, and curriculum design on performance and stability.

- Hyperparameter robustness: map sensitivity to K, aggregation weights, learning rate, batch size, and reward scaling; provide principled tuning guidelines.

- Search-theoretic analysis: formalize the relationship between K and BFS-like coverage, derive bounds on discovery probability of rare solutions versus discrete DFS-like decoding.

- Sampling correlation control: investigate anti-correlation or repulsive sampling across K (e.g., determinantal point processes) to enhance coverage without increasing K.

- LM-head reweighting circularity: analyze whether reweighting by the model’s own probabilities introduces undesirable feedback loops or bias, and explore external/learned reweighting schemes.

- Tool use and external computation: test multiplex thinking when reasoning includes calls to calculators, code execution, or APIs; design mechanisms for branching/merging around discrete tool outcomes.
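The independence item above notes that i.i.d. draws can repeat the same token when entropy is low. One standard way to draw K distinct tokens is the Gumbel-top-k trick (Kool et al., 2019): add independent Gumbel noise to the logits and keep the K largest, which is equivalent to sampling K tokens sequentially without replacement. This is a swapped-in technique for illustration, not something the paper evaluates, and `gumbel_top_k` is a hypothetical helper name.

```python
import numpy as np


def gumbel_top_k(logits, K, rng):
    """Draw K distinct token ids, equivalent to sampling without replacement.

    K should not exceed the number of tokens with finite logits.
    """
    perturbed = logits + rng.gumbel(size=logits.shape)
    return np.argsort(-perturbed)[:K]


rng = np.random.default_rng(0)
logits = np.array([5.0, 1.0, 0.5, 0.0, -1.0])  # low-entropy toy distribution
probs = np.exp(logits) / np.exp(logits).sum()
iid = rng.choice(5, size=3, p=probs)            # i.i.d.: duplicates are likely
distinct = gumbel_top_k(logits, K=3, rng=rng)   # never contains duplicates
```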

Glossary

- Augmented state space: The expanded action space formed by considering composite outcomes of multiple token samples, typically of size |V|^K. "augmented state space |V|^K."

- Best-of-N (BoN): A parallel reasoning strategy that samples N solutions and selects the best according to a reward. "Best-of-N (BoN) selection using outcome rewards (Cobbe et al., 2021) or process rewards (Lightman et al., 2023),"

- Breadth-first search (BFS): A search strategy that explores multiple branches level by level; used metaphorically for decoding that maintains multiple alternatives. "decode in a more breadth-first search (BFS)-like manner (Zhu et al., 2025)."

- Catastrophic forgetting: The tendency of a model to forget previously learned information when trained on new tasks. "full-model retraining can induce catastrophic forgetting (Xu et al., 2025)."

- Chain-of-Thought (CoT): A prompting technique that makes models generate intermediate reasoning steps before the final answer. "LLMs often solve complex reasoning tasks more effectively with Chain-of-Thought (CoT),"

- Continuous latent space: A continuous representation space in which reasoning can be performed instead of discrete tokens. "Training LLMs to reason in a continuous latent space, 2025."

- Continuous reasoning tokens: Non-discrete tokens used to encode multiple potential reasoning paths compactly. "This cost motivates continuous reasoning tokens that can compactly encode a 'superposition' over multiple candidate reasoning paths"

- Depth-first search (DFS): A search strategy that commits to a single path until completion before backtracking; used metaphorically for sequential decoding. "exploring alternatives often resembles depth-first search (DFS): each sampled trace commits to a single trajectory before branching to others"

- Embedding matrix: The parameter matrix mapping vocabulary indices to dense vector representations. "we map s_i through the embedding matrix E ∈ ℝ^{|V|×d},"

- Entropy: A measure of uncertainty in a probability distribution; higher entropy indicates more spread over options. "When the logits are highly peaked (low entropy),"

- Group Relative Policy Optimization (GRPO): A reinforcement learning algorithm used to train the models. "The models are optimized using Group Relative Policy Optimization (GRPO) (Shao et al., 2024)."

- Gumbel-Softmax trick: A method to introduce stochasticity into soft selections, enabling differentiable sampling. "injects stochasticity via the Gumbel-Softmax trick to mitigate the 'greedy pitfall'"

- Hidden-state token: A continuous token formed from model hidden states rather than vocabulary embeddings. "a hidden-state token (Hao et al., 2025)"

- i.i.d.: Independent and identically distributed; describes repeated sampling from the same distribution. "the empirical distribution of K i.i.d. samples converges to the model's LM head distribution"

- Joint entropy: Entropy of a joint random variable capturing the combined uncertainty of multiple components. "analyze the entropy of c_i as a joint entropy,"

- KL penalty: A regularization term based on Kullback–Leibler divergence used in RL training to control policy shift. "zero KL penalty and entropy penalty."

- LM head: The final layer projecting hidden states to vocabulary logits and probabilities. "the model's LM head distribution π_θ(· | e(q), c_{<i})."

- LM-head reweighting: A weighting strategy that scales sampled tokens by their LM-head probabilities during aggregation. "LM-head reweighting: we set w[v] = K · [...] π_θ(v | e(q), c_{<i})"

- Logits: Unnormalized scores output by the model prior to applying softmax to obtain probabilities. "given the next-token logits,"

- Multiplex Thinking: A stochastic, sampling-based continuous reasoning mechanism that branches and merges token samples. "we propose Multiplex Thinking, a stochastic soft reasoning mechanism"

- Multiplex token: A continuous token created by aggregating K independently sampled discrete tokens’ embeddings. "aggregates their embeddings into a single continuous multiplex token."

- Multiplex width K: The number of independent token samples aggregated into a single multiplex token at each step. "the multiplex width K"

- On-policy: RL training that samples trajectories from the current policy being optimized. "directly optimized with on-policy reinforcement learning (RL)."

- One-hot vector: A sparse vector with a single 1 indicating a chosen token index and 0s elsewhere. "averaging their one-hot vectors:"

- Pass@k: The probability that at least one correct solution exists among k sampled trajectories. "Across challenging math reasoning benchmarks, multiplex thinking consistently outperforms strong discrete CoT and RL baselines from Pass@1 through Pass@1024,"

- Policy distribution: The probability distribution over actions (tokens) under the current model policy. "Determinism collapses the token-level policy distribution,"

- Policy entropy: Entropy of the model’s action distribution, indicating its level of exploratory uncertainty. "compute H_start as the average policy entropy"

- Probability mass: The total probability assigned to specific outcomes or regions in a distribution. "steering probability mass toward higher-reward reasoning trajectories."

- Process-level rewards: RL rewards that evaluate intermediate reasoning steps rather than only final outcomes. "using outcome- or process-level rewards (Guo et al., 2025),"

- Reinforcement Learning (RL): A training paradigm that optimizes model behavior via rewards from sampled trajectories. "reinforcement learning (RL) can further improve reasoning"

- Reinforcement Learning with Verifiable Rewards (RLVR): An RL framework that leverages tasks with verifiable answers to compute rewards. "Reinforcement Learning with Verifiable Rewards (RLVR) RLVR trains LLMs on tasks with verifiable answers"

- Representational misalignment: A mismatch between input embeddings and the prediction head that can degrade performance. "representational misalignment (Zhang et al., 2025)"

- Response length: The number of tokens generated in an answer or reasoning trace. "A maximum response length of 4096 tokens is enforced"

- Rollout: A complete sampled reasoning trajectory from start to answer under the policy. "multiplex trajectories can be directly optimized with on-policy reinforcement learning (RL)."

- Sampling budget: The number of trajectories sampled for evaluation or selection (e.g., Pass@1–Pass@1024). "across sampling budgets (Pass@1-Pass@1024),"

- Self-Consistency: A parallel decoding strategy that samples multiple rationales and aggregates answers for robustness. "Self-Consistency or BoN"

- Shannon entropy: The standard entropy formulation measuring uncertainty over a discrete distribution. "The entropy of this single-step sampling is given by the standard Shannon entropy:"

- Soft Thinking: A deterministic continuous-token method that aggregates embeddings using the next-token distribution. "Soft Thinking (Zhang et al., 2025) enhances LLM performance"

- Stochastic Soft Thinking: A variant of Soft Thinking that injects noise (e.g., Gumbel) to enable exploration. "Stochastic Soft Thinking: A recent strong training-free continuous reasoning baseline (Wu et al., 2025)."

- Superposition: A compact representation of multiple plausible paths simultaneously encoded in one token. "encode a 'superposition' over multiple candidate reasoning paths"

- Temperature: A sampling parameter controlling randomness by scaling logits before softmax. "the temperature of 1.0"

- Test-time compute: The computational cost incurred during inference, e.g., number of samples or tokens generated. "directly translating to reduced test-time compute."

- Top-p: Nucleus sampling parameter that restricts sampling to the smallest set of tokens whose cumulative probability ≥ p. "the top-p of 1.0"

- Tree-of-Thought (ToT): A search-based reasoning approach that branches over intermediate steps to explore solutions. "Tree-of-Thought (Yao et al., 2023)"

- Verifiable reward function: A function that assigns rewards based on whether a sampled answer matches a ground truth. "a verifiable reward function v can provide a reward"

- Vocabulary embedding prior: The inductive bias from using the model’s learned vocabulary embeddings for reasoning tokens. "preserves the vocabulary embedding prior"

- Vocabulary-space weighting: Weights applied per vocabulary index when aggregating sampled tokens into a continuous vector. "vocabulary-space weighting w_i ∈ ℝ^{|V|}"
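Two glossary entries, Shannon entropy and joint entropy, connect directly: if the K draws forming a multiplex token are i.i.d. and viewed as an ordered tuple over the augmented space of size |V|^K, their joint entropy is exactly K times the single-step entropy. The brute-force check below (hypothetical helper names, tiny vocabulary only) illustrates that identity; it is not the paper's derivation.

```python
from itertools import product

import numpy as np


def shannon_entropy(p):
    """H(p) = -sum_v p(v) log p(v), in nats, ignoring zero-probability entries."""
    p = np.asarray(p, dtype=float)
    nz = p[p > 0]
    return float(-np.sum(nz * np.log(nz)))


def joint_entropy_iid(p, K):
    """Entropy of the ordered K-tuple of i.i.d. draws, enumerated over |V|^K outcomes."""
    tuple_probs = [float(np.prod(t)) for t in product(p, repeat=K)]
    return shannon_entropy(tuple_probs)


p = [0.6, 0.3, 0.1]
h1 = shannon_entropy(p)
h3 = joint_entropy_iid(p, 3)  # equals 3 * h1 up to floating-point error
```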

Practical Applications

Immediate Applications

Below is a concise set of deployable use cases that leverage the paper’s findings on stochastic, token-efficient reasoning via multiplex tokens. Each item notes sectors, potential tools/workflows, and key assumptions or dependencies.

- Software: Production LLM inference acceleration for reasoning-heavy tasks

- What: Replace standard CoT decoding with “Multiplex Thinking-I” (inference-only) to reduce sequence length while maintaining or improving accuracy on reasoning tasks.

- Tools/Workflows: Integrate the open-source repo and checkpoints; add a “multiplex mode” to serving stacks (e.g., SGLang/vLLM-like routers), expose K as a decoding hyperparameter, optionally adapt K based on next-token entropy.

- Assumptions/Dependencies: Works best on tasks with structured reasoning and clear next-token distributions; requires minor engineering to add sampling-and-aggregation per step.

- Education: Math tutoring and homework help systems

- What: Improve correctness and reduce latency in step-by-step math assistance using multiplex tokens; present “compact” chains of thought that skip redundant verbosity.

- Tools/Workflows: Multiplex Thinking-I in tutoring apps; configurable K-width; Pass@k evaluation and entropy monitoring to adapt hint granularity.

- Assumptions/Dependencies: Math reasoning aligns with demonstrated benchmarks; verifiable answers enable easy quality control.

- Software Engineering: Algorithmic coding assistants with test-driven verification

- What: Use multiplex decoding to explore multiple plausible reasoning/code paths per token, then validate against unit tests to select the best solution with fewer full-length samples.

- Tools/Workflows: CI-integrated unit-test loops; outcome rewards from passing tests; plug-in multiplex decoder for code generation prompts.

- Assumptions/Dependencies: High-quality test coverage to provide verifiable rewards; tasks that benefit from multi-path exploration.

- Operations/Customer Support: Troubleshooting and decision trees

- What: BFS-like exploration within compact traces to reduce token and time costs for agents diagnosing routine issues (e.g., IT support flows).

- Tools/Workflows: Entropy-aware decoding that widens K at uncertain decision points; Pass@k sampling capped for cost; lightweight verifiable outcomes (e.g., whether the issue is resolved within N steps).

- Assumptions/Dependencies: Availability of checkable endpoints (resolution states) and guardrails; domain-specific scripts.

- Finance: Compliance Q&A and policy interpretation with checkable outputs

- What: Apply multiplex reasoning to tasks with verifiable criteria (e.g., rule lookup, documentation consistency checks) to reduce inference cost.

- Tools/Workflows: “Multiplex policy checker” that flags and cross-references citations; compact rationales; Pass@k scaling dashboards.

- Assumptions/Dependencies: Restricted to well-structured, verifiable questions; requires curated corpora and deterministic validation rules.

- Robotics (High-level planning in simulation)

- What: Use multiplex tokens to represent multiple candidate action sequences within a single compact plan; select plans via simulation-based verifiable rewards.

- Tools/Workflows: Sim-in-the-loop planners; K set higher on high-entropy steps; outcome rewards from plan feasibility.

- Assumptions/Dependencies: Simulators that can verify plan correctness; safe separation between language-level planning and control.

- Academia/Research: On-policy RL with multiplex rollouts on verifiable tasks

- What: Directly optimize multiplex trajectories via RLVR/GRPO to study exploration, entropy dynamics, and sample efficiency on math/logical datasets.

- Tools/Workflows: Use the provided codebase (verl + SGLang); standard RL configs; track entropy reduction ratios and response length.

- Assumptions/Dependencies: Verifiable datasets (e.g., MATH-500, AIME); compute for small-scale RL (1.5B–7B models).

- Model Evaluation: Pass@k scaling and entropy-aware assessment

- What: Adopt Pass@k (up to 1024) and entropy metrics to quantify upper-bound exploration and sustained diversity in reasoning models.

- Tools/Workflows: Bootstrapped Pass@k curves; entropy dashboards; response-length monitoring to track token efficiency.

- Assumptions/Dependencies: Logging infrastructure; comparable decoding settings across models and baselines.

- Edge and Mobile Apps: Compact reasoning on-device

- What: Deploy small distilled models with multiplex inference to cut response length and energy use in math puzzle or study apps.

- Tools/Workflows: Quantized 1.5B models; adaptive K based on entropy; strict max-length budgets with multiplex compression.

- Assumptions/Dependencies: Edge-friendly serving; tasks with short, checkable reasoning; careful battery and latency profiling.

- Parallel Reasoning Frameworks: Efficient diversification with fewer full rollouts

- What: Combine Self-Consistency/Best-of-N with multiplex tokens to diversify candidates per step without linearly scaling sequence generation.

- Tools/Workflows: “Multiplex Self-Consistency” wrapper; choose K to target early forks; majority voting on final answers.

- Assumptions/Dependencies: Non-trivial reasoning tasks; compatibility with existing sampling strategies.
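Several workflows above propose widening K at uncertain steps. The paper trains and evaluates with a fixed K (adaptive scheduling is listed as an open question under Knowledge Gaps), so any such rule is an extension. The sketch below is one hypothetical mapping from normalized next-token entropy to a width between `k_min` and `k_max`; the bounds and the linear schedule are chosen purely for illustration.

```python
import numpy as np


def adaptive_width(p, k_min=1, k_max=8):
    """Hypothetical rule: interpolate K linearly in normalized entropy H(p) / log|V|.

    Requires a vocabulary of at least 2 tokens so that log|V| > 0.
    """
    p = np.asarray(p, dtype=float)
    nz = p[p > 0]
    h_norm = float(-np.sum(nz * np.log(nz))) / np.log(len(p))
    return int(round(k_min + h_norm * (k_max - k_min)))


print(adaptive_width([0.99, 0.005, 0.005]), adaptive_width([1 / 3, 1 / 3, 1 / 3]))
```

A confident step stays at K = 1 (ordinary discrete decoding), while a maximally uncertain step gets the full width.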

Long-Term Applications

The following applications require further research, scaling, validation, or domain adaptation beyond math reasoning, as well as robust safety and compliance frameworks.

- Healthcare: Decision support on structured, verifiable subtasks

- What: Use multiplex reasoning to explore care-plan options where outcome proxies are verifiable (e.g., guideline concordance checks, order set validation).

- Tools/Workflows: “Multiplex guideline checker” with process-level rewards; audit logs capturing compact multiplex rationales.

- Assumptions/Dependencies: Strong clinical validation; human oversight; domain-specific reward shaping and safety constraints.

- Legal/Policy: Structured legal reasoning and citation verification

- What: Multiplex planning for legal argument scaffolding with rule consistency checks; compact rationales that retain forks at high-entropy decision points.

- Tools/Workflows: Benchmarks with verifiable criteria (citation accuracy, statutory alignment); entropy-aware K selection.

- Assumptions/Dependencies: Domain datasets with ground truth; risk-management and compliance reviews.

- Enterprise Planning and Forecasting (Finance/Energy/Supply Chain)

- What: Scenario exploration within single compact traces; combine multiplex tokens with simulation or digital twins to verify outcomes.

- Tools/Workflows: “Multiplex scenario planner” that couples LLM reasoning with simulation returns; RLVR for planning metrics (e.g., cost, reliability).

- Assumptions/Dependencies: High-quality simulators; stable reward functions; interpretability requirements.

- Multi-Agent & Search Systems: Tree-of-Thought plus multiplex tokens

- What: Embed multiplex at each node of ToT/graph search to represent several branch candidates without generating full-length paths per branch.

- Tools/Workflows: “Multiplex ToT” scheduler; node-level entropy thresholds; branch-and-merge visualization tools.

- Assumptions/Dependencies: Coordinated scheduling across agents; robust stopping criteria; compute orchestration.

- Base Model Training: Architectures pre-equipped for multiplex reasoning

- What: Pretrain models to natively support stochastic multiplex tokens ([bot]/[eot] conventions, aggregation layers) and optimize for token efficiency.

- Tools/Workflows: Pretraining objectives that reward compact reasoning; curriculum that scales K; calibration pipelines.

- Assumptions/Dependencies: Significant training compute; careful mitigation of representational misalignment and catastrophic forgetting.

- Adaptive Decoders: Entropy-aware K selection and confidence-to-width mapping

- What: Automatically adjust multiplex width K per step based on entropy, confidence, or uncertainty estimates to balance exploration and cost.

- Tools/Workflows: “Adaptive multiplex decoder” libraries; calibration metrics; policy entropy monitors.

- Assumptions/Dependencies: Reliable uncertainty estimates; tuning to avoid instability; domain-specific thresholds.

- Data Compression and Analytics: Compact CoT storage and retrieval

- What: Store multiplex rationales as compressed superpositions; reconstruct diverse branches on demand for audit or analytics.

- Tools/Workflows: CoT-compression schemas; replay decoders; diversity and fork-point analytics.

- Assumptions/Dependencies: Loss-aware compression; compatible decoding; privacy and governance controls.

- Edge Robotics: On-board high-level task reasoning at lower energy cost

- What: Use multiplex tokens to encode alternative plan steps without lengthy chains, enabling faster deliberation under real-time constraints.

- Tools/Workflows: Real-time planners; simulation-backed verification; entropy gating for K.

- Assumptions/Dependencies: Hardware constraints; latency guarantees; safety testing.

- Sustainable AI and Policy: Compute-efficient reasoning standards

- What: Establish best practices and benchmarks that reward token efficiency (shorter sequences) for reasoning models to reduce energy footprint.

- Tools/Workflows: Standardized Pass@k+efficiency metrics; reporting requirements; procurement criteria for public-sector deployments.

- Assumptions/Dependencies: Community consensus; measurement infrastructure; lifecycle analyses.

- Cross-Domain Benchmarks and RLVR Pipelines

- What: Extend multiplex RL to domains beyond math (e.g., structured data QA, scientific problem solving) with verifiable rewards and process-level signals.

- Tools/Workflows: Dataset creation; verifiable or process rewards; wide-scale ablations of aggregation strategies and K-widths.

- Assumptions/Dependencies: Availability of domain-specific ground truths; robust reward design; reproducible pipelines.
