- The paper demonstrates that system predictability directly affects the convergence rate of optimization algorithms like DEER and DeepPCR on parallel architectures.

- It establishes a framework using the Polyak–Łojasiewicz condition to link merit function conditioning with system dynamics via Lyapunov exponents.

- Empirical results on mean field RNNs and Langevin dynamics reveal a threshold phenomenon: when the largest Lyapunov exponent is negative, convergence is rapid, even if the system has locally unstable regions.

Predictability Enables Parallelization of Nonlinear State Space Models

This paper explores the relationship between the predictability of nonlinear dynamical systems and the conditioning of parallelizable optimization problems used to evaluate them. The primary focus is on how system dynamics influence the efficiency and convergence of algorithms such as DEER and DeepPCR, which reframe state space evaluations as optimization problems, and their applicability to modern parallel computing architectures.

Background

Parallelization and Nonlinear State Space Models

Nonlinear state space models (SSMs) are inherently sequential, which has made them hard to run efficiently on parallel hardware such as GPUs. Algorithms like DeepPCR and DEER reformulate the sequential dynamics as a parallelizable optimization problem and solve it with techniques like the Gauss-Newton method. The open question is which factors determine the conditioning of these optimization problems.
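The reformulation can be illustrated on a scalar system. The following is a minimal sketch, not the DEER or DeepPCR implementation: the function names, the zero initial guess, and the example map are assumptions made for illustration. It treats the whole trajectory as the unknown, defines residuals r_t = s_t - f(s_{t-1}), and applies a Newton-type update, which coincides with the Gauss-Newton step here because the residual Jacobian is square with a unit diagonal and therefore invertible. Each update reduces to a linear recurrence; the sketch evaluates it with a loop, whereas on parallel hardware that is the step an associative scan would replace.

```python
import math

def example_f(s):
    """Hypothetical contractive transition used only for illustration."""
    return 0.5 * math.tanh(s) + 0.3

def example_df(s):
    """Derivative of example_f."""
    return 0.5 * (1.0 - math.tanh(s) ** 2)

def sequential_rollout(f, s0, T):
    """Reference: evaluate s_t = f(s_{t-1}) one step at a time."""
    states, s = [], s0
    for _ in range(T):
        s = f(s)
        states.append(s)
    return states

def newton_rollout(f, df, s0, T, tol=1e-10, max_iters=100):
    """Solve r_t = s_t - f(s_{t-1}) = 0 for all t jointly with Newton steps."""
    guess = [0.0] * T  # assumed initial guess for the whole trajectory
    for it in range(1, max_iters + 1):
        prev = [s0] + guess[:-1]  # s_{t-1} for each t under the current guess
        # Linearize f around the current guess: f(x) ~ a_t * x + b_t.
        a = [df(p) for p in prev]
        b = [f(p) - df(p) * p for p in prev]
        # Solve the linear recurrence s_t = a_t * s_{t-1} + b_t.
        # Sequential here; a parallel scan would evaluate it in parallel.
        new, s = [], s0
        for a_t, b_t in zip(a, b):
            s = a_t * s + b_t
            new.append(s)
        err = max(abs(n - g) for n, g in zip(new, guess))
        guess = new
        if err < tol:
            return guess, it
    return guess, max_iters

reference = sequential_rollout(example_f, 1.0, 20)
solution, iterations = newton_rollout(example_f, example_df, 1.0, 20)
```

In exact arithmetic, each update fixes at least one more leading state, so the iteration needs at most about T updates. The parallel approach pays off only when far fewer are needed.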

Predictability and System Dynamics

Predictability is characterized through Lyapunov exponents, which measure the average exponential rate at which nearby trajectories converge or diverge. A system is deemed predictable if small perturbations diminish over time, and unpredictable or chaotic if they amplify. These definitions provide the basis for analyzing the convergence of parallelizable algorithms.
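For a scalar map s_t = f(s_{t-1}), the largest Lyapunov exponent can be estimated as the trajectory average of log|f'(s_t)|. The sketch below assumes this scalar setting; the function name, step counts, and the two example maps are illustrative choices, not taken from the paper. The logistic map with r = 4 is a standard chaotic example whose exponent is known to be ln 2.

```python
import math

def estimate_lle(f, df, s0, steps=5000, burn_in=500):
    """Average log|f'(s_t)| along a trajectory of s_t = f(s_{t-1})."""
    s = s0
    for _ in range(burn_in):  # discard the transient
        s = f(s)
    total = 0.0
    for _ in range(steps):
        total += math.log(abs(df(s)))
        s = f(s)
    return total / steps

# Predictable example: a contraction, so perturbations shrink at every step.
lle_contractive = estimate_lle(
    lambda s: 0.5 * math.tanh(s) + 0.3,
    lambda s: 0.5 * (1.0 - math.tanh(s) ** 2),
    s0=1.0,
)

# Chaotic example: logistic map with r = 4 (theoretical exponent ln 2).
lle_logistic = estimate_lle(
    lambda x: 4.0 * x * (1.0 - x),
    lambda x: 4.0 - 8.0 * x,
    s0=0.2,
)
```

A negative estimate corresponds to the predictable regime described above and a positive one to the chaotic regime.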

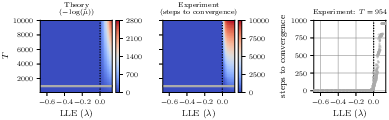

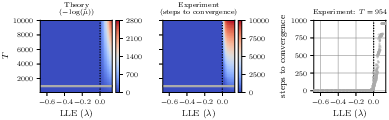

Figure 1: Threshold phenomenon in DEER convergence based on system predictability. In a family of RNNs, DEER converges quickly for predictable systems and prohibitively slowly for chaotic systems. Left (Theory): the theorem's relationship between the largest Lyapunov exponent (LLE) and DEER convergence.

Conditioning of Merit Functions

Establishing the PL Condition

The merit function derived from the state space model is shown to satisfy the Polyak–Łojasiewicz (PL) condition, which guarantees that gradient descent on a smooth objective converges to a global minimum at a linear rate. The conditioning of the merit function is tied to the system dynamics: the PL constant is governed by the smallest singular value of the Jacobian matrix of the system residuals.
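The role of the smallest singular value can be seen in a short derivation. The notation is an assumption made here for illustration (states s_t, transitions f_t, stacked residual r, residual Jacobian J, merit function written as half the squared residual norm) and may differ from the paper's. Since the true trajectory gives r = 0, the minimum value of the merit function is zero, and the PL inequality follows from bounding the gradient norm from below:

```latex
r_t(s) = s_t - f_t(s_{t-1}), \qquad
\mathcal{L}(s) = \tfrac{1}{2}\lVert r(s)\rVert_2^2, \qquad
\nabla\mathcal{L}(s) = J(s)^\top r(s), \qquad
J(s) = \frac{\partial r}{\partial s}.

\tfrac{1}{2}\lVert\nabla\mathcal{L}(s)\rVert_2^2
  = \tfrac{1}{2}\lVert J(s)^\top r(s)\rVert_2^2
  \;\ge\; \sigma_{\min}\!\big(J(s)\big)^2 \cdot \tfrac{1}{2}\lVert r(s)\rVert_2^2
  \;\ge\; \mu\,\mathcal{L}(s),
\qquad
\mu = \inf_{s}\,\sigma_{\min}\!\big(J(s)\big)^2 .
```

Under these assumptions, a larger smallest singular value gives a larger PL constant and hence a better-conditioned problem.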

Influence of Lyapunov Exponents

The conditioning of the optimization problem, and consequently the convergence rate of methods like DEER, is tightly bound to the largest Lyapunov exponent (LLE). For predictable systems (λ<0), the optimization landscape is well-conditioned, enabling rapid convergence. Conversely, unpredictable systems (λ>0) lead to flat or degenerate landscapes, resulting in slow convergence.
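A linear scalar system s_t = a * s_{t-1}, whose LLE is log|a|, makes this link easy to check numerically. The sketch below is an illustrative assumption, not the paper's experiment. For this system the residual Jacobian J has ones on the diagonal and -a on the subdiagonal, so applying its inverse is itself a rollout of the dynamics. The smallest singular value of J is estimated by power iteration on the inverse; the function names and iteration count are hypothetical.

```python
import math

def solve_J(a, v):
    """x = J^{-1} v, i.e. the forward recurrence x_t = v_t + a * x_{t-1}."""
    x, prev = [], 0.0
    for v_t in v:
        prev = v_t + a * prev
        x.append(prev)
    return x

def solve_JT(a, v):
    """y = J^{-T} v, i.e. the backward recurrence y_t = v_t + a * y_{t+1}."""
    y, nxt = [], 0.0
    for v_t in reversed(v):
        nxt = v_t + a * nxt
        y.append(nxt)
    return y[::-1]

def sigma_min(a, T, iters=200):
    """Estimate sigma_min(J) via power iteration on J^{-T} J^{-1}."""
    v = [1.0 / math.sqrt(T)] * T  # unit-norm starting vector
    top = 1.0
    for _ in range(iters):
        w = solve_JT(a, solve_J(a, v))
        top = math.sqrt(sum(x * x for x in w))  # approaches 1 / sigma_min^2
        v = [x / top for x in w]
    return 1.0 / math.sqrt(top)

predictable = sigma_min(0.5, 50)  # LLE = log 0.5 < 0
chaotic_like = sigma_min(1.5, 50)  # LLE = log 1.5 > 0
```

For |a| < 1 the smallest singular value stays above 1 - |a| regardless of the sequence length T. For |a| > 1 it is at most |a|^{-(T-1)}, so it shrinks exponentially with T, which is the flat, degenerate landscape described above.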

Empirical Validation

Mean Field RNNs and Threshold Phenomenon

Experiments with mean field RNNs show the transition from predictability to chaos. As the LLE increases through zero, the number of optimization steps required for convergence rises sharply, corroborating the theory.

Applications to Langevin Dynamics

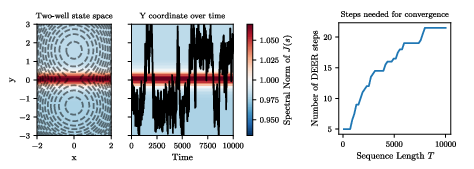

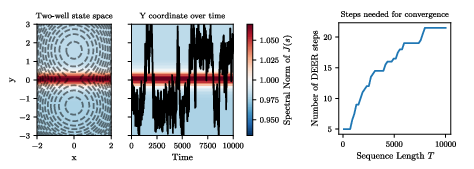

Applications to Langevin dynamics in a two-well potential system demonstrate that even with locally unstable regions, overall negative LLE ensures efficient DEER performance. This highlights the advantage of using LLE as a predictive measure for algorithm performance.

Figure 2: DEER converges quickly for Langevin dynamics in a two-well potential. Despite instability between wells, the system's negative LLE supports efficient parallel convergence.
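The coexistence of local instability and a negative LLE can be reproduced in a small simulation. The sketch below rests on assumptions that are not from the paper: a one-dimensional double-well potential U(x) = (x^2 - 1)^2, an Euler discretization with step size eps, unit temperature, and noise treated as a fixed input so that the one-step map has derivative 1 - eps * U''(x). All function names are hypothetical.

```python
import math
import random

def grad_U(x):
    """Gradient of the assumed double-well potential U(x) = (x^2 - 1)^2."""
    return 4.0 * x * (x * x - 1.0)

def hess_U(x):
    """Second derivative of U; negative between the wells (|x| < 1/sqrt(3))."""
    return 12.0 * x * x - 4.0

def langevin_lle(steps=50000, eps=0.01, x0=1.0, seed=0):
    """Simulate x_{t+1} = x_t - eps * U'(x_t) + sqrt(2 * eps) * noise_t.

    Returns the estimated LLE and the fraction of steps at which the
    one-step map is locally expanding (|1 - eps * U''(x_t)| > 1).
    """
    rng = random.Random(seed)
    x, log_sum, expanding = x0, 0.0, 0
    for _ in range(steps):
        slope = 1.0 - eps * hess_U(x)  # derivative of the one-step map at x
        log_sum += math.log(abs(slope))
        expanding += abs(slope) > 1.0
        x = x - eps * grad_U(x) + math.sqrt(2.0 * eps) * rng.gauss(0.0, 1.0)
    return log_sum / steps, expanding / steps

lle, expanding_fraction = langevin_lle()
```

In this sketch, a nonzero expanding fraction means the trajectory passes through the locally unstable region between the wells, while the sign of the averaged exponent reflects the behavior of the system as a whole.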

Conclusion

These findings offer practical guidance for parallelizing nonlinear state space models. Because predictability is the key factor, practitioners can expect parallel evaluation to pay off for systems with negative LLEs and can design their SSMs accordingly.

Overall, this study provides a framework for anticipating the computational behavior of nonlinear dynamical systems and sets the stage for future explorations into optimizing parallel algorithms across diverse scientific and engineering domains.