Untangling the Influence of Typology, Data and Model Architecture on Ranking Transfer Languages for Cross-Lingual POS Tagging

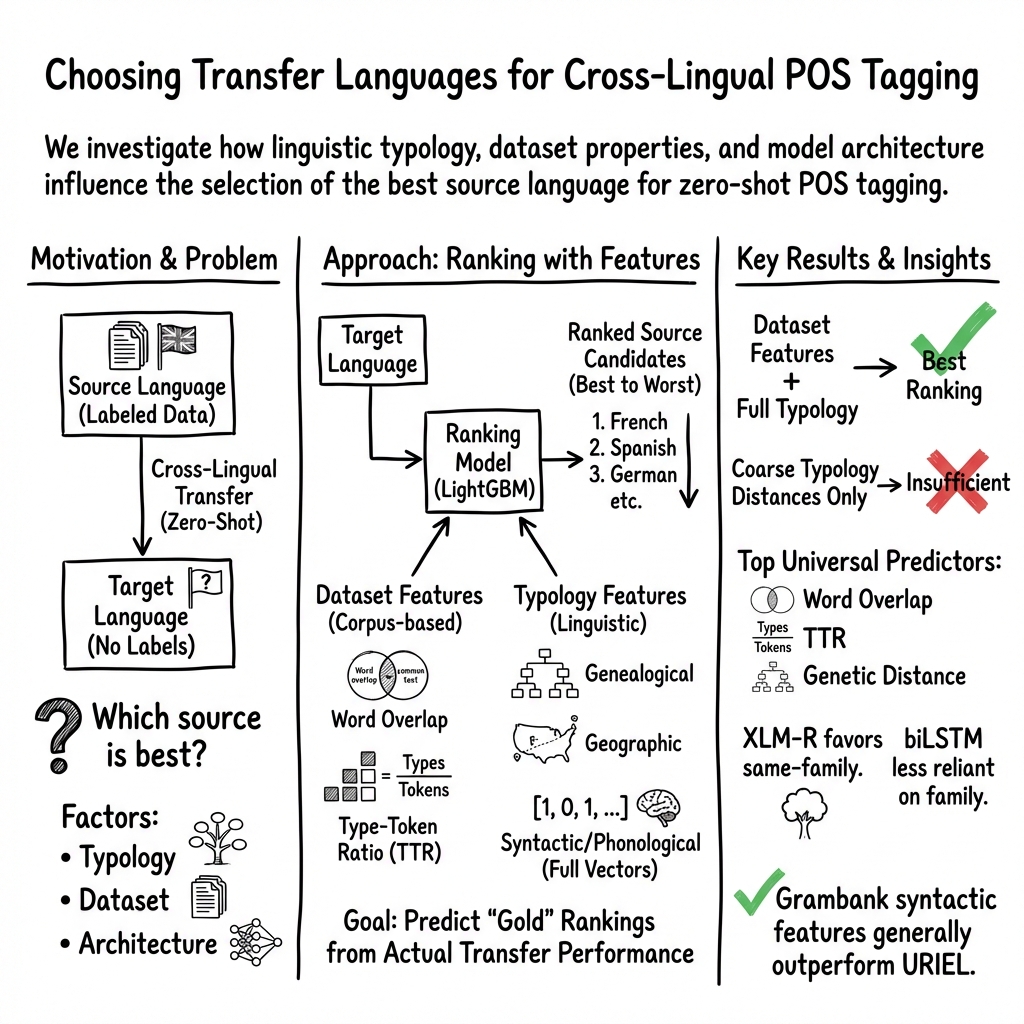

Abstract: Cross-lingual transfer learning is an invaluable tool for overcoming data scarcity, yet selecting a suitable transfer language remains a challenge. The precise roles of linguistic typology, training data, and model architecture in transfer language choice are not fully understood. We take a holistic approach, examining how both dataset-specific and fine-grained typological features influence transfer language selection for part-of-speech tagging, considering two different sources for morphosyntactic features. While previous work examines these dynamics in the context of bilingual biLSTMs, we extend our analysis to a more modern transfer learning pipeline: zero-shot prediction with pretrained multilingual models. We train a series of transfer language ranking systems and examine how different feature inputs influence ranker performance across architectures. Word overlap, type-token ratio, and genealogical distance emerge as top features across all architectures. Our findings reveal that a combination of typological and dataset-dependent features leads to the best rankings, and that good performance can be obtained with either feature group on its own.

Explain it Like I'm 14

What is this paper about?

Imagine you’re trying to learn a new language, but you don’t have a teacher for it. One trick is to learn from a different language that’s similar and use what you learn to help with the new one. This paper studies how to choose the “best helper language” so that computers can do a basic language task—part‑of‑speech (POS) tagging—well in languages with little data.

POS tagging means labeling each word in a sentence as a noun, verb, adjective, and so on. The authors test different ways to pick a good “transfer language” (the helper) for a “target language” (the one we care about), and they compare older and newer AI models.

What questions were they trying to answer?

The researchers focused on four simple questions:

- Which features (things we can measure about languages and datasets) best predict a good helper language?

- Do those important features change depending on the kind of AI model we use (older biLSTMs vs. modern multilingual transformers like XLM‑R and mBERT)?

- Is it better to use detailed, fine‑grained facts about language structure, or just broad, coarse summaries?

- Do we really need to look at properties of the actual datasets (like shared words), or can we decide using only linguistic facts about the languages?

How did they study it?

To keep things fair and clear, they built a “recommender system” that ranks possible helper languages for each target language. Then they checked how good those recommendations were.

Here’s the approach in everyday terms:

- Task: POS tagging (labeling words as noun/verb/etc.) for many languages.

- Models:

  - An older type: biLSTMs (a kind of neural network that reads text left‑to‑right and right‑to‑left).

  - Newer types: multilingual LLMs (MLMs) like XLM‑R and mBERT. These are huge models trained on many languages. They were fine‑tuned on one source language and then used “zero‑shot” on the target language (meaning: no labeled examples from the target language during fine‑tuning).

- “Gold rankings”: For each target language, they actually trained POS taggers using every possible helper language and measured performance. That gave them the true “top helpers” for that target.

- Ranker (the recommender): They trained a learning‑to‑rank system (gradient‑boosted decision trees using LambdaRank) to predict the best helper languages from different features.
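The "gold rankings" step above can be sketched as follows. This is a minimal illustration only: the accuracy numbers are invented, and the `scores` table and `gold_ranking` helper are hypothetical names, not taken from the paper.

```python
from typing import Dict, List

# Hypothetical POS-tagging accuracies, indexed as scores[target][source].
# These values are made up for illustration and are not results from the paper.
scores: Dict[str, Dict[str, float]] = {
    "es": {"it": 0.91, "pt": 0.93, "ko": 0.42},
    "ko": {"ja": 0.71, "es": 0.40, "it": 0.39},
}

def gold_ranking(target: str, scores: Dict[str, Dict[str, float]]) -> List[str]:
    """Sort candidate helper languages from best to worst for one target."""
    by_source = scores[target]
    return sorted(by_source, key=by_source.get, reverse=True)

print(gold_ranking("es", scores))
```

A ranker is then trained to reproduce these orderings from features alone, so that the expensive "train on every helper" step is not needed for a new target language.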

They tested two types of features:

- Dataset‑dependent features (what’s similar in the actual text data?)

  - Word overlap: How many words are the same between the source and target datasets.

  - Type‑token ratio (TTR): How “varied” the words are. It’s the number of unique words (types) compared to total words (tokens). They looked at each language’s TTR and the difference between them.

- Typological/linguistic features (what’s similar in the languages themselves?)

  - Genetic (genealogical) distance: How closely related the languages are on the family tree (like Spanish and Italian vs. Spanish and Korean).

  - Geographic distance: How far apart the languages are on the map.

  - Fine‑grained language features: Things about word order, sounds, and sound inventories (which vowels and consonants they use). These came from two databases:

    - URIEL (built from sources like WALS).

    - Grambank (a newer database with better coverage for some features).
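The two dataset‑dependent features above (word overlap and TTR) can be sketched in a few lines. This is a rough illustration under stated assumptions: the normalization of word overlap (shared types divided by the sum of both vocabulary sizes) and the use of an absolute TTR difference are assumptions, since the summary does not give exact formulas, and the toy sentences are invented.

```python
from typing import List

def type_token_ratio(tokens: List[str]) -> float:
    """Unique word types divided by total tokens (0.0 for an empty corpus)."""
    return len(set(tokens)) / len(tokens) if tokens else 0.0

def word_overlap(source: List[str], target: List[str]) -> float:
    """Shared types divided by the sum of both vocabulary sizes.

    The normalization is an assumption made for this sketch.
    """
    src_types, tgt_types = set(source), set(target)
    total = len(src_types) + len(tgt_types)
    return len(src_types & tgt_types) / total if total else 0.0

# Toy corpora, invented for illustration.
source = "o gato come o peixe".split()
target = "el gato come pescado".split()

ttr_source = type_token_ratio(source)
ttr_target = type_token_ratio(target)
ttr_difference = abs(ttr_source - ttr_target)  # assumed form of the "difference"
overlap = word_overlap(source, target)
print(ttr_source, ttr_target, overlap)
```

In this toy case the two corpora share the types "gato" and "come", so the overlap is 2 out of 4 + 4 vocabulary entries.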

They tried two ways to use these fine‑grained features:

- Coarse “distance” scores (a single similarity number).

- Full detailed vectors (many small features combined), using an element‑wise match to highlight what the two languages truly share.
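The contrast between a coarse distance and an element‑wise match can be illustrated with two small binary feature vectors. This is a sketch only: the vectors are invented, the function names are hypothetical, and returning 1.0 for an all‑zero vector is an assumption of this example rather than something the paper specifies.

```python
import math
from typing import List

def cosine_distance(a: List[int], b: List[int]) -> float:
    """Coarse summary: one number, 1 - cos(a, b)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    # Assumption: treat an all-zero vector as maximally distant.
    return 1.0 - dot / norm if norm else 1.0

def elementwise_and(a: List[int], b: List[int]) -> List[int]:
    """Fine-grained view: keep a 1 only where both languages have the feature."""
    return [x & y for x, y in zip(a, b)]

# Invented binary typological vectors (1 = feature present).
src = [1, 0, 1, 1, 0]
tgt = [1, 1, 0, 1, 0]

print(cosine_distance(src, tgt))   # a single similarity-style number
print(elementwise_and(src, tgt))   # which individual features are shared
```

The distance collapses everything into one value, while the element‑wise result keeps one input per feature, which lets a ranker learn that some shared features matter more than others.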

To see how well the ranker worked, they used a top‑5 scoring measure (NDCG@5), which basically checks “Did the system’s top 5 recommendations match the real top 5?” and gives higher points for getting the top slots right.
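A minimal sketch of NDCG@5 follows. It assumes the common exponential‑gain form of DCG, (2^rel - 1) / log2(position + 1), and a made‑up relevance scheme in which the best true helper gets 5 points, the next 4, and so on; the paper's exact relevance assignment may differ.

```python
import math
from typing import Dict, List

def dcg_at_k(relevances: List[int], k: int) -> float:
    """Discounted cumulative gain over the first k positions."""
    return sum((2 ** rel - 1) / math.log2(i + 2)
               for i, rel in enumerate(relevances[:k]))

def ndcg_at_k(predicted: List[str], relevance: Dict[str, int], k: int = 5) -> float:
    """DCG of the predicted order divided by the DCG of the ideal order."""
    gains = [relevance.get(lang, 0) for lang in predicted]
    ideal = sorted(relevance.values(), reverse=True)
    idcg = dcg_at_k(ideal, k)
    return dcg_at_k(gains, k) / idcg if idcg else 0.0

# Hypothetical relevance scores derived from a gold ranking.
relevance = {"pt": 5, "it": 4, "fr": 3, "ro": 2, "ca": 1, "ko": 0}

perfect = ["pt", "it", "fr", "ro", "ca", "ko"]
swapped = ["it", "pt", "fr", "ro", "ca", "ko"]
print(ndcg_at_k(perfect, relevance), ndcg_at_k(swapped, relevance))
```

A perfect ordering scores 1.0, and swapping the top two helpers lowers the score more than swapping two helpers further down, because early positions are discounted less.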

What did they find?

Here are the main takeaways and why they matter:

- Combining both worlds is best: Using both dataset features (like word overlap, TTR) and detailed typological features produced the best rankings. That means it helps to consider both “How similar are the texts?” and “How similar are the languages?”

- Either side can still work alone: You can get good results using mainly typology or mainly dataset features. So if you lack one type of information (say, no access to the target’s dataset), you can still make decent choices with the other.

- Fine beats coarse: Detailed, fine‑grained linguistic features (the full vectors) led to better rankings than using a single coarse “distance” number. This suggests the small details of how languages work really help.

- Top features were consistent: Across old and new models, the most helpful signals were:

  - Word overlap

  - Type‑token ratio (TTR)

  - Genealogical (family‑tree) distance

  This means these factors are generally useful, no matter the model architecture.

- Grambank often helped: Grambank’s linguistic features performed a bit better than URIEL’s for some models, especially biLSTMs and XLM‑R.

- A small twist with mBERT: mBERT showed slightly different behavior in some settings (for example, it wasn’t always helped by dataset features as much), but overall patterns stayed similar.

Why does this matter?

Choosing the right helper language can make a big difference, especially for languages that don’t have much labeled data. This research shows a practical way to make that choice smarter:

- It provides a data‑driven “helper language picker” that can save time and improve accuracy.

- It shows that both how similar the texts are and how related the languages are matter.

- It offers general rules that work across different kinds of AI models, which can guide researchers and engineers without endless trial‑and‑error.

- Ultimately, this helps bring better language technology (like tagging, parsing, translation) to many more languages around the world.

Key terms explained

- Part‑of‑speech (POS) tagging: Labeling each word as noun, verb, adjective, etc.

- Cross‑lingual transfer: Training on one language to help another.

- Zero‑shot: Using a model on a new language without training it on labeled examples from that language.

- Typology: The structural features of a language (like word order or sound patterns).

- Genealogical distance: How closely related two languages are (like cousins vs. distant relatives).

- Type‑token ratio (TTR): A way to measure word variety; more unique words compared to total words means a higher TTR.

Glossary

- antipodal distance: The maximum distance between two points on a sphere (points opposite each other), used to normalize geographic separation. Example: "Defined as the orthodromic distance divided by the antipodal distance between rough locations of source and target languages on the surface of the Earth."

- biLSTMs: Bidirectional Long Short-Term Memory neural networks that process sequences in both forward and backward directions. Example: "We train a suite of 378 biLSTMs using Stanza"

- cosine distance: A dissimilarity measure equal to one minus the cosine of the angle between two vectors, here used to compare feature vectors. Example: "computes the cosine distance: $1 - \cos(a,b) = d$."

- cross-lingual transfer learning: Using knowledge or models from one language to improve performance on another language. Example: "Cross-lingual transfer learning is an invaluable tool for overcoming data scarcity"

- Discounted Cumulative Gain (DCG): An information retrieval metric that measures ranking quality by accumulating gains discounted at lower ranks. Example: "the Discounted Cumulative Gain (DCG) at position p is defined as"

- element-wise and operation: A feature-comparison operation applying logical AND to corresponding elements of two binary vectors. Example: "using an element-wise and operation"

- feature importance scores: Quantitative measures of how much each feature contributes to a model’s predictions. Example: "we generate feature importance scores"

- finetune: Further train a pretrained model on a specific task or dataset to adapt it. Example: "We finetune XLM-R and M-BERT equivalently"

- Genetic Distance: A measure of genealogical separation between languages based on lineage trees. Example: "Genetic Distance: Genealogical distance derived from language descent trees described in Glottolog."

- Geographic Distance: A measure of spatial separation between languages based on their locations on Earth. Example: "Geographic Distance: Defined as the orthodromic distance divided by the antipodal distance between rough locations of source and target languages on the surface of the Earth."

- Glottolog: A database of language genealogies and classifications used to derive genetic distances. Example: "described in Glottolog."

- gold ranking-data: Ground-truth rankings used as labels for training or evaluating ranking models. Example: "We generate gold ranking-data"

- Grambank: A typological database providing structured features about languages. Example: "we experiment with switching to Grambank (CC BY 4.0)"

- held out test set: A portion of data reserved from training for unbiased evaluation. Example: "on a held out test set"

- hyperparameters: Configuration values that govern training behavior of models but are not learned from data. Example: "default Stanza hyperparameters"

- Ideal Discounted Cumulative Gain (IDCG): The DCG of a perfect ranking, used to normalize DCG into NDCG. Example: "The Ideal Discounted Cumulative Gain (IDCG) is calculated"

- k-nearest-neighbors: An algorithm used here for imputing missing values by referencing the closest data points. Example: "using k-nearest-neighbors."

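- LambdaRank: A learning-to-rank algorithm that optimizes ranking metrics using pairwise gradients weighted by how much swapping two items would change the metric; here it is used with gradient-boosted decision trees. Example: "of the LambdaRank algorithm."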

- leave-one-out cross-validation: An evaluation method that repeatedly trains on all but one item and tests on the held-out item. Example: "we evaluate our ranking models with leave-one-out cross-validation."

- LightGBM: A gradient boosting framework that efficiently trains decision tree models. Example: "using the LightGBM implementation (MIT License)"

- MissForest: A nonparametric algorithm for imputing missing data using random forests. Example: "MissForest algorithm for nonparametric missing value imputation"

- multilingual LLMs (MLMs): Pretrained models covering multiple languages used for cross-lingual tasks. Example: "pretrained multilingual LLMs (MLMs)"

- NCDG@p: (As written in the paper; the standard abbreviation is NDCG@p) The position-limited form of the normalized ranking metric used in evaluation. Example: "we use NCDG@p, a metric that considers the top-p elements"

- NDCG@5: The normalized ranking quality metric computed for the top five items. Example: "We report the average NDCG@5 across all leave-one-out models."

- Normalized Distributed Cumulative Gain (NCDG): (As written in the paper; the standard name is Normalized Discounted Cumulative Gain, NDCG) A normalized measure of ranking quality comparing DCG to the ideal DCG. Example: "we evaluate our ranking models using Normalized Distributed Cumulative Gain (NCDG)."

- orthodromic distance: The great-circle distance between two points on a sphere, measuring shortest Earth-surface paths. Example: "Defined as the orthodromic distance divided by the antipodal distance"

- part-of-speech tagging: Assigning grammatical categories (e.g., noun, verb) to words in text. Example: "for part-of-speech tagging"

- phonological: Pertaining to the sound system of a language; used here as a feature category. Example: "Syntactic, phonological and inventory features"

- (phonetic) inventory: The set of phonemes (distinct sounds) in a language; used as a typological feature set. Example: "phonological, (phonetic) inventory"

- self-attention dropout: A regularization technique that randomly drops connections in attention layers during training. Example: "We use 10% dropout between transformer layers and 10% self-attention dropout."

- Stanza: An NLP toolkit used here to train and evaluate biLSTM taggers. Example: "We train a suite of 378 biLSTMs using Stanza"

- type-token ratio: A measure of lexical diversity computed as unique types divided by total tokens. Example: "type-token ratio in the source language corpus"

- Typology-Vector: Vectorized representations of typological features (e.g., syntactic, phonological) for languages. Example: "Typology-Vector features are represented by distance measures"

- Universal Dependencies (UD): A framework and collection of treebanks for consistent morphosyntactic annotation across languages. Example: "Universal Dependencies 2.0 (UD)"

- URIEL: A typological and multilingual knowledge base providing language feature vectors and distances. Example: "Many typological analyses of crosslingual transfer rely on URIEL"

- word overlap: The proportion of word forms shared between source and target corpora, used as a dataset-dependent feature. Example: "word overlap, type-token ratio in the source language corpus"

- XLM-R: A pretrained multilingual transformer model (XLM-RoBERTa) used for cross-lingual transfer. Example: "We finetune XLM-R and M-BERT equivalently"

- M-BERT: Multilingual BERT, a pretrained transformer covering many languages. Example: "We finetune XLM-R and M-BERT equivalently"

- zero-shot cross-lingual transfer: Applying a model to a target language without using any labeled data from that language. Example: "Finetuning MLMs for zero-shot cross-lingual transfer is a useful technique to extend their reach"
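The geographic distance entry above (orthodromic distance divided by antipodal distance) reduces to the central angle between two points divided by π, since the Earth's radius cancels out. The sketch below uses the haversine formula for the central angle; the function name and the choice of haversine are assumptions of this example, not details from the paper.

```python
import math

def normalized_geo_distance(lat1: float, lon1: float,
                            lat2: float, lon2: float) -> float:
    """Great-circle distance divided by the antipodal distance, in [0, 1].

    Inputs are latitude and longitude in degrees.
    """
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    # Haversine term; clamp before asin to guard against rounding error.
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    central_angle = 2 * math.asin(min(1.0, math.sqrt(h)))
    return central_angle / math.pi

print(normalized_geo_distance(0.0, 0.0, 0.0, 90.0))  # a quarter of the way around
```

Two points on exactly opposite sides of the globe give a value of 1, and identical points give 0.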
