Choosing Transfer Languages for Cross-Lingual Learning

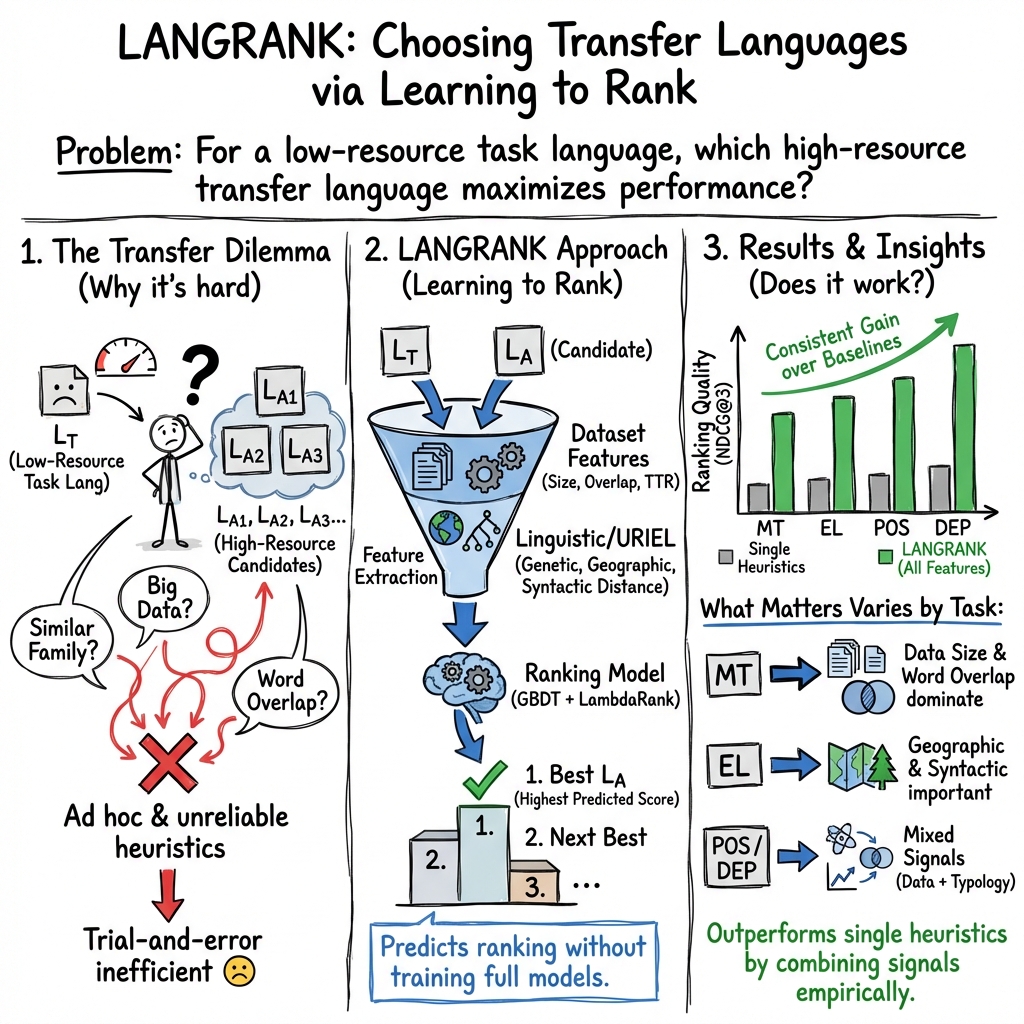

Abstract: Cross-lingual transfer, where a high-resource transfer language is used to improve the accuracy of a low-resource task language, is now an invaluable tool for improving performance of NLP on low-resource languages. However, given a particular task language, it is not clear which language to transfer from, and the standard strategy is to select languages based on ad hoc criteria, usually the intuition of the experimenter. Since a large number of features contribute to the success of cross-lingual transfer (including phylogenetic similarity, typological properties, lexical overlap, or size of available data), even the most enlightened experimenter rarely considers all these factors for the particular task at hand. In this paper, we consider this task of automatically selecting optimal transfer languages as a ranking problem, and build models that consider the aforementioned features to perform this prediction. In experiments on representative NLP tasks, we demonstrate that our model predicts good transfer languages much better than ad hoc baselines considering single features in isolation, and glean insights on what features are most informative for each of the different NLP tasks, which may inform future ad hoc selection even without use of our method. Code, data, and pre-trained models are available at https://github.com/neulab/langrank.

Explain it Like I'm 14

What is this paper about?

This paper is about helping computers work better with languages that don’t have much data (called “low‑resource languages”). The idea is to “borrow help” from another language that has more data (a “transfer language”). The big question is: Which language should we borrow from to get the best results? Instead of guessing, the authors build a system that predicts the best transfer languages for a given task and language.

What questions did the researchers ask?

They focused on three simple questions:

- If we want to improve a low-resource language on a specific task (like translation), which other language should we use to help?

- Can a computer automatically rank the best helping languages instead of relying on human hunches?

- Which language features (like similarity, location, or data size) matter most for different tasks?

How did they study it?

To make this understandable, think of picking a study buddy. You’re trying to learn math (the task), and you can choose from many classmates (transfer languages). You want the buddy who will help you improve the most. Rather than trying every buddy one by one each time, you train a system that learns which buddy usually helps best, based on useful clues (features).

Step 1: Try lots of pairs to create training examples

The authors first did a lot of experiments: for many low-resource “task” languages and many possible “transfer” languages, they trained models and recorded how well each pair worked. This created a big “scoreboard” of which pairs performed well.

Step 2: Train a ranker (a smart picker)

They then trained a ranking model (called LANGRANK) to predict, for a new task language, which transfer languages are likely to be most helpful. Technically, they used gradient boosted decision trees with a ranking method (LambdaRank). You can think of this as a set of if‑then rules arranged like a tree that learns patterns such as “If the two languages share many words and the helper has a big dataset, that’s a good match.”
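Before a ranker like this can be trained, the scoreboard from Step 1 has to be turned into relevance labels, one list per task language. The sketch below shows one way to do that; it is an illustration rather than the authors' code. The grading scheme (the best language gets `gamma_max`, the next one `gamma_max - 1`, and everything outside the top `gamma_max` gets 0) is an assumption, and the language codes and scores are made up.

```python
from typing import Dict


def relevance_labels(scores: Dict[str, float], gamma_max: int = 10) -> Dict[str, int]:
    """Convert raw transfer scores for ONE task language into graded labels.

    Assumed scheme: rank candidates by score (higher is better), give the
    best one gamma_max, the next gamma_max - 1, and so on, with 0 for any
    candidate outside the top gamma_max.
    """
    ranked = sorted(scores, key=lambda lang: scores[lang], reverse=True)
    return {lang: max(gamma_max - position, 0) for position, lang in enumerate(ranked)}


# Hypothetical scoreboard row: task-metric scores obtained with each transfer language.
scoreboard = {"tur": 11.2, "kor": 5.1, "rus": 7.4, "por": 6.0}
labels = relevance_labels(scoreboard, gamma_max=3)
print(labels)
```

Each task language yields one such labeled list, and a learning-to-rank model is then fit on the features of every task/transfer pair with these labels as targets.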

What clues (features) did they use?

They used two kinds of clues about each task/transfer language pair:

- Dataset-based clues (what’s in the data):

  - Size of the training data for each language and the size ratio (bigger helper datasets can help more).

  - Word overlap: how many words or subwords are shared between the two languages (more overlap can make learning easier).

  - Type-Token Ratio (TTR): a simple measure of how diverse the vocabulary is (helps hint at how similar the languages’ word patterns are).

- Language-based clues (from linguistics, not tied to a specific dataset):

  - Geographic distance (are the languages used near each other?).

  - Genetic (family tree) distance (are they related historically?).

  - Syntactic, phonological, and inventory distances (do they have similar grammar and sounds?).

  - A combined “featural” distance that mixes several properties.
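The dataset-based clues in the list above are simple corpus statistics. The following sketch computes them for two tiny toy corpora. The exact formulas are assumptions made for illustration (overlap normalized by the sum of the two vocabulary sizes, TTR distance as a squared relative difference) and should be checked against the paper before being relied on; tokenization here is plain whitespace splitting.

```python
from typing import List, Set


def vocabulary(sentences: List[str]) -> Set[str]:
    """Set of distinct whitespace-separated word types in a corpus."""
    return {token for sentence in sentences for token in sentence.split()}


def word_overlap(transfer: List[str], task: List[str]) -> float:
    """Shared word types, normalized by the sum of both vocabulary sizes (assumed form)."""
    v_tf, v_tk = vocabulary(transfer), vocabulary(task)
    return len(v_tf & v_tk) / (len(v_tf) + len(v_tk))


def type_token_ratio(sentences: List[str]) -> float:
    """Distinct word types divided by total tokens."""
    tokens = [token for sentence in sentences for token in sentence.split()]
    return len(set(tokens)) / len(tokens)


def ttr_distance(transfer: List[str], task: List[str]) -> float:
    """Squared relative TTR difference (assumed form); 0 means identical TTR."""
    return (1.0 - type_token_ratio(transfer) / type_token_ratio(task)) ** 2


def size_ratio(transfer: List[str], task: List[str]) -> float:
    """Transfer-corpus size over task-corpus size, counted in sentences."""
    return len(transfer) / len(task)


# Toy corpora, invented for this example.
task_corpus = ["o gato dorme", "o gato corre"]
transfer_corpus = ["el gato duerme", "el perro corre", "el gato corre"]

overlap = word_overlap(transfer_corpus, task_corpus)
d_ttr = ttr_distance(transfer_corpus, task_corpus)
ratio = size_ratio(transfer_corpus, task_corpus)
print(overlap, d_ttr, ratio)
```

Subword overlap would follow the same pattern after segmenting both corpora with a subword tokenizer.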

They tested LANGRANK on four common NLP tasks:

- Machine Translation (MT): translating into English.

- Entity Linking (EL): matching names in another language to entries in English Wikipedia.

- Part-of-Speech (POS) Tagging: labeling words as noun, verb, etc.

- Dependency Parsing (DEP): figuring out “who did what to whom” in a sentence.

What did they find, and why is it important?

The authors found that:

- Their ranking system (LANGRANK) chose good transfer languages much better than simple one-feature rules (like “pick the closest language family” or “pick the one with most shared words”).

- For different tasks, different clues matter most:

  - Machine Translation: dataset features (especially data size and word/subword overlap) are very important.

  - POS Tagging: dataset size and vocabulary diversity (TTR) matter a lot.

  - Entity Linking: language-relationship clues (like geography and syntax) matter more, because the EL data doesn’t include full sentences and has fewer helpful dataset clues.

  - Dependency Parsing: a mix of geography, language family, and word overlap works best.

- Even when they only used linguistics database info (without any dataset stats), their method still beat simple rule-based choices. This means the approach can help even before you collect data.

Why this matters:

- It saves time and effort. Instead of running expensive experiments for every possible language pair, you can try just the top few recommendations.

- It gives practical guidance: for example, in translation, start by looking for a helper language with a big dataset and lots of shared words or subwords.
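The translation rule of thumb above (prefer a big dataset and lots of shared words) can be turned into a quick manual ranking when no trained model is at hand. The sketch below averages each candidate's rank on the two features. This rank-averaging scheme is a hypothetical stand-in chosen for simplicity, not the paper's method, and the candidate statistics are invented.

```python
from typing import Dict, List


def heuristic_mt_ranking(candidates: Dict[str, Dict[str, float]]) -> List[str]:
    """Order candidate helper languages by summed rank on size and overlap.

    Larger is better for both features. Ties on the summed rank are broken
    in favor of the candidate with the better overlap rank.
    """
    def ranks(feature: str) -> Dict[str, int]:
        ordered = sorted(candidates, key=lambda lang: candidates[lang][feature], reverse=True)
        return {lang: position for position, lang in enumerate(ordered)}

    by_size = ranks("size")
    by_overlap = ranks("overlap")
    return sorted(candidates, key=lambda lang: (by_size[lang] + by_overlap[lang], by_overlap[lang]))


# Invented statistics: training sentences and word overlap with the task language.
candidates = {
    "tur": {"size": 182000, "overlap": 0.12},
    "rus": {"size": 208000, "overlap": 0.01},
    "por": {"size": 185000, "overlap": 0.03},
    "fas": {"size": 151000, "overlap": 0.02},
}
shortlist = heuristic_mt_ranking(candidates)
print(shortlist)
```

Working with ranks rather than raw values avoids having to pick weights for features on very different scales, at the cost of ignoring how large the gaps between candidates are.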

What could this change in the real world?

- Faster support for low-resource languages: Organizations can bring better language tools (like translation or text analysis) to more languages by smartly picking helper languages.

- Better planning: Before collecting data, teams can decide which languages are worth investing in as helpers.

- Smarter heuristics: Even if you don’t use the model, the learned “rules of thumb” (e.g., “for translation, prioritize size and overlap”) can improve decision-making.

In short

- Purpose: Pick the best helper language to improve NLP for low-resource languages—automatically and smartly.

- Approach: Learn to rank transfer languages using a mix of dataset clues and linguistic similarities.

- Results: The learned ranker beats simple one-feature rules across translation, entity linking, POS tagging, and parsing.

- Impact: Less trial-and-error, faster progress for many languages, and clearer guidance on what matters for each task.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, actionable list of what remains missing, uncertain, or unexplored in the paper’s approach and findings.

- Cost of supervision for training the ranker: Creating labels requires an exhaustive sweep of task–transfer pairs (training and evaluating many models), which is computationally and data expensive; how can one learn an effective ranker with far fewer transfer experiments (e.g., via active learning, bandits, or Bayesian optimization)?

- Generalization across tasks and languages: The learned rankers are trained on specific tasks (MT, EL, POS, DEP) and language sets (TED, UD v2.2, Wikipedia EL); how well do they extrapolate to unseen tasks, domains, or language families, especially when typologically distant languages are present?

- Method-specific rankings: The “best” transfer language is tied to a particular transfer paradigm per task (joint training vs. zero-shot vs. fine-tuning); how stable are rankings across different transfer methods, model architectures, and training pipelines for the same task?

- Multi-source transfer not addressed: The framework ranks single transfer languages; how to rank combinations of transfer languages, determine optimal subsets, and learn weights for multi-source transfer?

- Cold-start scenarios: Dataset-dependent features require task-language and candidate transfer corpora; what can be done when the task language (and/or transfer language) has little or no available data, beyond URIEL-only features?

- Missing/limited features for some tasks: EL lacks most dataset features (e.g., TTR, subword overlap), which hurt performance; can robust, script-agnostic character or phonetic overlap features (e.g., transliteration-based, IPA-based) improve predictions when sentence-level corpora are unavailable?

- Script and orthography mismatch: Word/subword overlap is near-zero across different scripts; how to design overlap or similarity features (transliteration, Unicode normalization, phonological mappings) that remain informative across scripts?

- Sensitivity to subword segmentation choices: Subword overlap depends on BPE/segmentation hyperparameters and joint vocabularies; how do different segmentation strategies (BPE vocab size, unigram LM, shared vs. separate vocabs) affect both overlap features and rankings?

- Limited lexical similarity proxies: TTR distance is a crude proxy for morphology/lexical variation; can richer morphology-aware or cognate/loanword similarity measures (e.g., character n-grams, morpheme overlap, cognate detection) improve ranking quality?

- Domain similarity not modeled: Overlap and size do not capture cross-language domain/topic similarity; can domain proximity (e.g., topic distributions, register, genre) be estimated and used as a feature to better predict transfer success?

- Data quality and noise: Corpus quality (noise, annotation consistency, tokenization) is not modeled; can quality metrics (e.g., label noise estimates, sentence alignment quality, treebank guidelines compatibility) better explain transfer outcomes?

- Cross-lingual embedding quality as a feature: For zero-shot settings (e.g., DEP), transfer success depends on cross-lingual embedding alignment; can alignment quality indicators (e.g., bilingual lexicon induction accuracy, hubness metrics) be incorporated?

- Annotation scheme compatibility: For UD-based tasks, differences in guidelines across treebanks influence transfer; how to quantify and include annotation compatibility as a feature?

- Directionality in MT is fixed (to English): Rankings were learned for source→English; do optimal transfer languages change when translating into non-English targets or when reversing direction?

- Dataset bias and coverage: Evaluation relies on TED talks (MT) and UD v2.2 (POS/DEP) with specific language coverage; how do conclusions change with other domains (news, web, conversational) and underrepresented families/scripts?

- Small EL task set: Only 9 task languages in EL led to overfitting and poor generalization (e.g., Telugu, Uyghur); how much task-language diversity is needed for a stable, generalizable ranker, and can multi-task rankers mitigate data scarcity?

- Handling missing features: Some dataset-dependent features are unavailable for certain languages (e.g., zero training data); what principled imputation or feature-dropping strategies maintain robust performance?

- Training signal truncated to top-10: Learning uses only top-γ_max (γ_max = 10) labels and evaluation focuses on NDCG@3; how do results change with alternative relevance signals (e.g., full pairwise orders, continuous scores) or different K?

- Uncertainty estimates: The ranker provides point predictions without uncertainty; can calibrated confidence/variance estimates or Bayesian ranking inform how many transfer languages to try?

- Alternative rankers and meta-learning: Only GBDT/LambdaRank was explored; would neural rankers, listwise objectives, meta-learning (e.g., MAML-style), or hybrid models improve generalization, especially with sparse features?

- Interpretability depth: Feature importance counts provide coarse insight; can more rigorous interpretability (e.g., SHAP values, partial dependence, counterfactual analyses) reveal actionable, causal patterns for each task?

- Feature completeness of URIEL: URIEL distances may be missing or noisy for some languages; what is the impact of missingness and can learned language embeddings (e.g., from multilingual LMs) or augmented typology improve typological features?

- Impact of multilingual pretraining: The study pre-dates massive multilingual encoders (e.g., mBERT, XLM-R); does optimal transfer-language choice change under such models, and can language-embedding similarity outperform current features?

- Budget- and cost-aware selection: The framework does not consider data acquisition cost, licensing, or annotation budgets; how to incorporate costs and constraints (e.g., maximize performance per unit cost) into the ranking?

- Robustness to hyperparameter and seed variance: Transfer performance is noisy across runs; how sensitive are rankings to training variance, and can aggregated or variance-aware labels stabilize the ranker?

- Granularity of size features: Size is measured in sentences/entities; does token count, average sentence length, or vocabulary size yield better signals than sentence counts alone?

- Fairness and coverage across families: Do rankings disproportionately favor certain families (e.g., Turkish/Hungarian as frequent fallbacks) and how to ensure equitable recommendations for typologically diverse targets?

- Active guidance for data creation: The paper suggests pre-collection guidance potential but does not operationalize it; how to use the ranker (or a URIEL-only variant) to prioritize which transfer corpora to build to maximize downstream gains across many task languages?

- Stability across resource levels: Do the learned criteria change with varying task-language resource levels (true zero-shot vs. few-shot vs. mid-resource), and should the ranker be conditioned on resource regime?

- Evaluation in realistic search loops: Beyond NDCG and top-K best score curves, how much time/compute does the ranker save in practice under realistic try-and-evaluate budgets, and what is the expected regret vs. exhaustive search?
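Several items above refer to NDCG@3, the metric used to judge a ranking. A minimal sketch of NDCG@k follows, assuming the common exponential-gain form of DCG, where an item with relevance `rel` at 1-based position `i` contributes `(2**rel - 1) / log2(i + 1)`. The relevance lists in the example are made up.

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[int], k: int) -> float:
    """Discounted cumulative gain over the first k positions (exponential gain)."""
    return sum((2 ** rel - 1) / math.log2(position + 2)
               for position, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances_in_predicted_order: Sequence[int], k: int) -> float:
    """DCG of the predicted order divided by the DCG of the ideal order.

    The input holds the TRUE relevance of every candidate, listed in the
    order the ranker placed them.
    """
    ideal_order = sorted(relevances_in_predicted_order, reverse=True)
    ideal = dcg_at_k(ideal_order, k)
    return dcg_at_k(relevances_in_predicted_order, k) / ideal if ideal > 0 else 0.0


perfect = ndcg_at_k([3, 2, 1, 0], k=3)   # ranker put the best languages first
reversed_ = ndcg_at_k([0, 1, 2, 3], k=3)  # ranker put the best languages last
print(perfect, reversed_)
```

A score of 1.0 means the top-k predicted languages are exactly the best ones in the best order; lower values mean good languages were ranked too low or missed.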

Practical Applications

Practical Applications of “Choosing Transfer Languages for Cross-Lingual Learning (LANGRANK)”

The paper introduces LANGRANK, a learning-to-rank method that predicts the best high-resource “transfer” languages to improve NLP for low-resource “task” languages, using dataset-dependent features (e.g., size, lexical overlap) and dataset-independent typological features (from URIEL). It demonstrates gains across machine translation (MT), entity linking (EL), part-of-speech tagging (POS), and dependency parsing (DEP), with interpretable feature importance that can guide heuristics even without the model.

Below are actionable applications derived from the paper’s findings, methods, and innovations.

Immediate Applications

- Industry: Rapid transfer-language selection in NLP product pipelines

- Sectors: Software, Localization, Consumer Tech (chatbots, voice assistants), E-commerce, Search

- What: Integrate LANGRANK to recommend top-K transfer languages for MT, POS, DEP, and EL to bootstrap low-resource language support.

- Workflow:

- Compute features (dataset size, word/subword overlap, TTR) for candidate donor corpora; retrieve URIEL distances via `lang2vec`.

- Run pre-trained LANGRANK (e.g., `neulab/langrank`) to rank donors.

- Train/evaluate models using only top-K donors (per Fig. 2, K≈3–5 achieves near-optimal performance).

- Tools/Products: CI/CD step or AutoML module for “Transfer Language Recommendation”; MLOps dashboards showing donor rankings and feature attributions.

- Assumptions/Dependencies: Availability of URIEL features for candidate languages; basic corpora for overlap/size stats (or use URIEL-only model); task compatibility with tested settings; domain mismatch risks.

- Cost-efficient experiment design and compute budgeting

- Sectors: Software, Cloud/AI Platforms

- What: Replace brute-force sweeps over donors with targeted trials on top-K recommended donors to cut compute and time.

- Workflow: Use NDCG@3-guided selections to cap experimentation; monitor best-of-top-K model performance as a stopping criterion.

- Assumptions: Ranking generalizes to your tasks/domains; some measured task scores for calibration.

- Data acquisition prioritization for low-resource languages

- Sectors: Academia, NGOs, Government (Language Technology, Emergency Response), Localization

- What: Use URIEL-only LANGRANK to select promising donor languages before any data is collected; focus collection on predicted high-yield donors and target corpora that increase overlap.

- Tools/Products: Donor prioritization scorecards; grant/funding proposals guided by “transfer potential.”

- Assumptions: Adequate URIEL coverage; typological distances are reliable proxies; socio-political feasibility to access corpora.

- Heuristic rules-of-thumb when models aren’t available

- Sectors: Academia, Startups, Open-source

- What: Apply feature-importance insights to design quick heuristics:

- MT: favor donors with high lexical overlap and large datasets; subword overlap matters.

- POS: a large dataset and a small TTR distance are key.

- EL: geographic and syntactic proximity dominate when dataset features are sparse.

- DEP: combine geographic/genetic proximity with word overlap.

- Assumptions: Similarity of your task/data to paper’s setups; careful with domain/script differences.

- Vendor selection and localization strategy

- Sectors: Localization, Media/Content Platforms

- What: Choose pivot/bridge languages for annotation projection and translation workflows to reduce cost and turnaround.

- Assumptions: Availability of bilingual resources and projection pipelines; alignment with target scripts.

- Rapid response for crisis language support

- Sectors: Emergency Management (e.g., LORELEI-style), Public Health

- What: Pick transfer “mentor” languages to speed deployment of EL, NER, and MT for emergent languages during incidents.

- Assumptions: Access to multilingual embeddings (for zero-shot DEP), gazetteers for EL, basic normalization tools.

- Domain-focused model bootstrapping

- Sectors: Healthcare, Finance, Customer Support

- What: Use donor ranking to spin up initial models for triage chat, KYC document parsing, or policy text translation in underserved languages.

- Dependencies: Domain corpus availability; compliance with data privacy and regulatory constraints.

- Open-source and research reproducibility

- Sectors: Academia, OSS

- What: Adopt `neulab/langrank` to standardize donor selection across studies; improve comparability and reduce experimental variance.

- Assumptions: Community adoption; maintainers update for new tasks.

- Dataset curation to maximize transferability

- Sectors: Data Providers, Crowdsourcing Platforms

- What: Curate/expand corpora to increase lexical/subword overlap with target languages (e.g., shared named entities, script-normalized text).

- Dependencies: Script harmonization (e.g., Unicode normalization, transliteration); BPE segmentation tooling.
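The recurring workflow in the applications above (rank donors, then train only on the top-K) can be sketched as a small budgeted search. Everything here is illustrative: `best_of_top_k` and `fake_train_and_score` are hypothetical names, and the scores stand in for real training runs.

```python
from typing import Callable, Dict, List, Tuple


def best_of_top_k(ranked_donors: List[str],
                  train_and_score: Callable[[str], float],
                  k: int = 3) -> Tuple[str, float]:
    """Train with only the k highest-ranked donors and keep the best result."""
    if k < 1:
        raise ValueError("k must be at least 1")
    trials = [(donor, train_and_score(donor)) for donor in ranked_donors[:k]]
    return max(trials, key=lambda trial: trial[1])


# Stand-in for an expensive training run; the scores are invented.
fake_scores: Dict[str, float] = {"tur": 10.4, "por": 12.1, "rus": 9.8, "fas": 13.0, "kor": 7.5}
calls: List[str] = []


def fake_train_and_score(donor: str) -> float:
    calls.append(donor)  # record how many training runs were spent
    return fake_scores[donor]


ranked = ["tur", "por", "rus", "fas", "kor"]  # donor order as a ranker might return it
best_donor, best_score = best_of_top_k(ranked, fake_train_and_score, k=3)
print(best_donor, best_score, "after", len(calls), "training runs")
```

In this toy run the search costs three training runs instead of five, and it misses the donor ranked fourth even though that one scores highest. That is the trade-off a top-K budget makes.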

Long-Term Applications

- Generalizing LANGRANK to new modalities and tasks

- Sectors: Speech (ASR/TTS), OCR, Multimodal AI, Information Retrieval, Summarization, QA

- What: Extend features to include phonotactic/signal features (for ASR), visual features (OCR), discourse/syntax features (summarization/QA), and domain shift metrics.

- Products: “Cross-lingual AutoML” that selects donors, schedules joint/zero-shot training, and adapts subword vocabularies.

- Dependencies: New training sweeps to learn task-specific rankers; collection of cross-modal typological analogs.

- SaaS “Transfer Language Recommender” platforms

- Sectors: AI Platforms, Localization Tech, Enterprise NLP

- What: A commercial service that ingests your corpora, computes features, ranks donors, and orchestrates top-K training with dashboards and governance controls.

- Features: API endpoints, compliance/audit trails, cost/performance trade-off simulators, fairness-aware recommendations.

- Dependencies: Continuous learning from customer data (privacy-preserving), integration with compute providers and model registries.

- Policy and funding optimization through “language hub” mapping

- Sectors: Government, NGOs, International Development, Education

- What: Identify donor “hub” languages that maximize downstream coverage; inform investments in data creation and teacher training for linguistic clusters.

- Products: Regional transfer networks; “linguistic investment portfolios.”

- Dependencies: Updated URIEL-like databases; sociolinguistic coverage; equitable prioritization guidelines.

- Active data creation and crowdsourcing orchestration

- Sectors: Crowdsourcing, Community Language Projects

- What: Rank which new annotations (language, domain) will yield maximal cross-lingual gains; route tasks to communities accordingly.

- Dependencies: Incentive structures; platform support for multilingual QA; monitoring of bias and representativeness.

- Embedded language adaptation for devices and edge AI

- Sectors: Robotics, IoT, Automotive, Consumer Devices

- What: On-device advisory services recommending donor languages for small-footprint ASR/NLU models to enable local-language interactions.

- Dependencies: Efficient feature computation on-device; offline typological vectors; model compression compatibility.

- Standards and procurement guidelines

- Sectors: Public Sector, Large Enterprises

- What: Incorporate typology-aware transfer selection as a best practice in RFPs and compliance frameworks; require disclosing donor selection methodology and impact on minority languages.

- Dependencies: Community consensus; evaluation benchmarks to validate fairness and effectiveness.

- Fairness and ethics-aware donor selection

- Sectors: Policy, Responsible AI

- What: Adjust rankings to balance performance with preservation of linguistic diversity; avoid reinforcing dominance of major “hub” languages at the expense of marginalized ones.

- Dependencies: Sociotechnical metrics; participatory design with language communities.

Cross-cutting Assumptions and Dependencies

- Coverage and quality of URIEL typological vectors; many under-documented languages may be missing or inaccurately represented.

- Availability and quality of task-specific corpora to compute dataset-dependent features (size, overlap, TTR); script differences may reduce apparent overlap unless normalized/transliterated.

- The provided models were trained on specific setups (e.g., TED MT into English, UD datasets, zero-shot settings); domain and directionality shifts may reduce ranking fidelity.

- Access to multilingual embeddings and subword segmentation tools for comparable features across languages.

- Licensing and privacy constraints for data; compute resources for training top-K donor models.

- Risk of bias toward large, well-resourced donor languages; governance mechanisms needed to ensure equitable outcomes.

By operationalizing LANGRANK (or its distilled heuristics) in the above contexts, teams can cut exploratory cost, accelerate time-to-support for low-resource languages, and make more principled, interpretable choices about cross-lingual transfer.

Glossary

- Ablation study: An experimental analysis where components or features are systematically removed to assess their impact on performance. "through an ablation study"

- Annotation projection: A transfer technique that projects annotations from a resource-rich language to a low-resource language via parallel or aligned data. "annotation projection"

- Antipodal distance: The maximum possible distance between two points on the Earth's surface (half the Earth's circumference), used for normalizing geographic distance. "divided by the antipodal distance"

- Attention-based sequence-to-sequence model: A neural architecture for sequence tasks that uses an attention mechanism to focus on relevant input parts during decoding. "We train a standard attention-based sequence-to-sequence model"

- Biaffine attentional graph-based model: A dependency parsing model that uses biaffine attention to score arcs in a graph-based formulation. "a deep biaffine attentional graph-based model"

- Bi-directional LSTM-CNNs-CRF: A sequence labeling architecture combining bidirectional LSTMs, CNNs, and a CRF layer for structured prediction. "We train a bi-directional LSTM-CNNs-CRF model"

- BLEU: A metric for evaluating machine translation quality based on n-gram precision with brevity penalty. "BLEU for MT"

- Character-level LSTM encoder: A neural encoder that processes sequences of characters (rather than words) using LSTM units. "two character-level LSTM encoders"

- Cosine distance: A distance measure derived from cosine similarity, commonly used to compare feature vectors. "The cosine distance between the phonological feature vectors"

- Cosine similarity: A measure of similarity between two vectors based on the cosine of the angle between them. "trained to maximize the cosine similarity"

- Cross-lingual transfer: Leveraging data or models from one language to improve performance in another language. "cross-lingual transfer"

- Data augmentation: Techniques for expanding training data by generating additional examples, often to improve robustness. "data augmentation"

- Dataset size ratio (Stf / Stk): The ratio of transfer-language to task-language dataset sizes, used as a predictive feature. "the ratio of the dataset size, Stf / Stk"

- Dependency parsing: The task of analyzing the syntactic structure of a sentence by identifying dependencies between words. "Dependency Parsing"

- Discounted Cumulative Gain (DCG): An information retrieval metric that accumulates relevance scores with position-based discounting. "Discounted Cumulative Gain (DCG)"

- Entity gazetteer: A curated list of named entities used as a resource for tasks like entity linking. "a bilingual entity gazetteer"

- Entity linking: The task of mapping mentions of entities in text to entries in a knowledge base. "entity linking accuracy"

- Ethnologue: A comprehensive database of world languages used as a resource for linguistic features. "Ethnologue"

- Exhaustive search: A brute-force approach that tries all candidate options to find the best one. "exhaustive search through the space of potential transfer languages"

- Featural distance (dfea): A composite distance computed from multiple linguistic feature distances (e.g., genetic, syntactic, phonological). "Featural distance (dfea)"

- Fine-tuning: Adapting a pretrained model to a specific task or dataset through additional training. "fine-tuning (Zoph et al., 2016; Neubig and Hu, 2018)"

- GBDT (Gradient Boosted Decision Trees): An ensemble learning method that builds additive decision trees via gradient boosting. "gradient boosted decision trees (GBDT; Ke et al. (2017))"

- Geographic distance (dgeo): A normalized spatial distance between languages based on their geographical locations. "Geographic distance (dgeo)"

- Genetic distance (dgen): A measure of genealogical relatedness between languages based on language family trees. "Genetic distance (dgen)"

- Glottolog: A database of language families and classifications used to derive genetic and geographic features. "Glottolog"

- Ideal Discounted Cumulative Gain (IDCG): The maximum possible DCG for a ranking, used to normalize DCG into NDCG. "Ideal Discounted Cumulative Gain (IDCG)"

- Inventory distance (dinv): A distance based on differences in phonological inventories between languages. "Inventory distance (dinv)"

- Joint training: Training a single model on concatenated data from multiple languages or sources simultaneously. "joint training"

- LambdaRank: A learning-to-rank algorithm that optimizes ranking metrics via gradient adjustments (lambdas). "the LightGBM implementation (Ke et al., 2017) of the LambdaRank algorithm"

- LAS (Labeled Attachment Accuracy): A dependency parsing metric measuring the percentage of words with correctly predicted head and label. "LAS (Labeled Attachment Accuracy) excluding punctuation."

- Learning to rank: A machine learning approach focused on ordering items by relevance rather than predicting absolute scores. "learning to rank (see, e.g., Liu et al. (2009))"

- Leave-one-out cross validation: An evaluation protocol where one instance (e.g., language) is held out for testing while the rest are used for training, repeated for all instances. "leave-one-out cross validation"

- LightGBM: A fast, gradient-boosting framework for tree-based learning. "We use the LightGBM implementation (Ke et al., 2017)"

- Machine Translation (MT): The task of automatically translating text from one language to another. "Machine Translation"

- NCRF++ toolkit: An open-source toolkit for neural sequence labeling with CRF layers. "the NCRF++ toolkit (Yang and Zhang, 2018)"

- NDCG (Normalized Discounted Cumulative Gain): A normalized ranking metric comparing DCG to its ideal value to assess ranking quality. "Normalized Discounted Cumulative Gain (NDCG)"

- Orthodromic distance: The great-circle distance between two points on a sphere, used here for geographic language distance. "The orthodromic distance between the languages on the surface of the earth"

- PHOIBLE: A database of phonological inventories used to compute phonological feature distances. "PHOIBLE"

- Phonological distance (dpho): A distance based on phonological feature differences between languages. "Phonological distance (dpho)"

- Pivot language: An intermediate language used to bridge translation or transfer between two other languages. "a 'pivot language'"

- POS Tagging (Part-of-speech tagging): Assigning part-of-speech labels (e.g., noun, verb) to words in a sentence. "POS Tagging"

- Subword overlap (osw): The degree of shared subword units between corpora for two languages. "subword overlap osw"

- Syntactic distance (dsyn): A distance based on differences in syntactic features between languages. "Syntactic distance (dsyn)"

- Typological information: Linguistic properties describing structural features of languages (e.g., word order). "including typological information and corpus statistics"

- Type-Token Ratio (TTR): A measure of lexical diversity calculated as unique word types divided by total tokens. "Type-Token Ratio (TTR)"

- Universal Dependencies: A cross-linguistic treebank project providing standardized annotations for many languages. "Universal Dependencies v2.2"

- URIEL Typological Database: A resource providing typological, geographic, and genetic language features. "URIEL Typological Database"

- WALS database: The World Atlas of Language Structures, a database of structural (phonological, grammatical) properties of languages. "WALS database"

- Word overlap (ow): The degree of shared word types between corpora for two languages. "word overlap ow"

- XNMT toolkit: A research-oriented neural machine translation toolkit used for sequence-to-sequence modeling. "the XNMT toolkit"

- Zero-shot setting: A training/evaluation scenario where the model has seen no labeled data for the target language/task. "in a zero-shot setting"

- Zero-shot transfer: Applying a model trained on one language directly to another without task-language supervision. "zero-shot transfer"
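The glossary entries for orthodromic and antipodal distance together describe a geographic distance normalized to the range 0 to 1. A minimal sketch follows, using the haversine formula on a spherical Earth. The radius constant and the choice of one coordinate pair per language are assumptions; the coordinates in the example are arbitrary points, not taken from any language database.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius; assumes a perfectly spherical Earth


def orthodromic_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in degrees (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    a = min(1.0, a)  # guard against rounding slightly above 1
    return 2 * EARTH_RADIUS_KM * math.atan2(math.sqrt(a), math.sqrt(1 - a))


def normalized_geo_distance(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance divided by the antipodal distance (half the circumference)."""
    antipodal_km = math.pi * EARTH_RADIUS_KM
    return orthodromic_km(lat1, lon1, lat2, lon2) / antipodal_km


same_place = normalized_geo_distance(41.0, 29.0, 41.0, 29.0)
opposite = normalized_geo_distance(0.0, 0.0, 0.0, 180.0)
print(same_place, opposite)
```

Dividing by the antipodal distance puts the geographic feature on the same 0-to-1 scale as the other distance features, so no single feature dominates purely because of its units.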
