Trust, Verify, Gate: Making AI Agent Skills Auditable

This lightning talk unpacks a rigorous framework for treating modular AI agent skills as untrusted code until verified. It introduces a discrete trust schema, a capability-gated runtime architecture, and a biconditional correctness criterion that together enforce auditable safety boundaries for agents with real-world tool access—without modifying the underlying language model.Script

When a language model agent reaches for a tool that can delete files, send emails, or execute code, who decides if that tool is safe? The authors of this paper argue that every skill an agent uses must be treated as untrusted code until a distinct verification process proves otherwise.

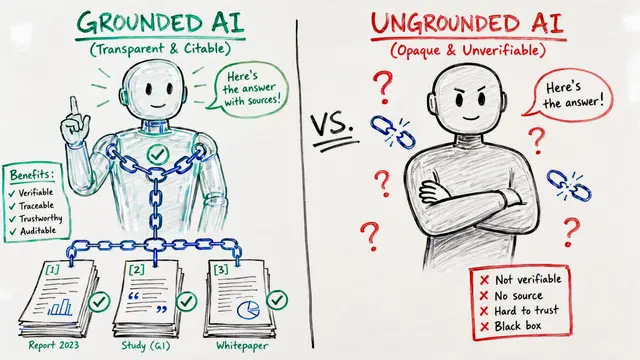

The trust schema introduces four discrete verification levels: unverified, declared, tested, and formal. Unlike continuous trust scores that can creep upward, this discrete ladder prevents covert escalation. Once a skill is loaded, it becomes immutable during runtime, and any modification requires full human approval and re-verification from scratch.

The runtime splits every tool call into two categories: reversible actions that can be rolled back, and irreversible ones that produce permanent effects. Reversible calls flow through a transaction buffer, but every irreversible call passes through a four-stage gate: request, human decision, execution, and audit logging.

The biconditional correctness criterion is beautifully simple: the set of side effects recorded in the audit log must exactly match the set of changes observed in the world. If the log says three files were modified, then exactly three files must be modified, no more, no fewer. Any mismatch is a violation.

To stress-test these mechanisms, the authors run adversarial ensemble evaluations: multiple agents with deliberately destructive behaviors attack a fixed corpus, and a mechanical judge reconciles the resulting world state against the audit log. Injected faults like gate bypass and log forgery are detected deterministically, turning post-incident forensics into a reproducible, automated verdict.

Twelve architectural guidelines distill this framework into enforceable rules: bootstrap-time trust locking, deny-by-default at every boundary, mandatory skill immutability, and append-only audit logs. Together, they reduce the attack surface for prompt injection and supply-chain exploits, giving operators a tractable path to scale trusted agent deployments. If you want to dive deeper into how skills become verifiable artifacts, visit EmergentMind.com to explore the full paper and create your own walkthrough videos.