Credential Leakage in LLM Agent Skills: A Large-Scale Empirical Study

This presentation examines the first systematic large-scale analysis of credential leakage in LLM agent skills, revealing that 3.1% of analyzed skills leak sensitive authentication credentials through a combination of developer negligence and malicious intent. The study analyzes 17,022 skills from the SkillsMP marketplace, uncovering 1,708 concrete leakage issues and demonstrating that 76.3% of cases require joint analysis of both natural language descriptions and executable code for detection. The findings expose critical vulnerabilities in the agent skill ecosystem, particularly through stdout logging, hardcoded credentials, and persistent supply chain exposures.

Script

Developers are embedding Base64-encoded secrets directly into agent skill code, making credentials trivially accessible to anyone who installs them. This isn't a theoretical risk—it's happening at scale across thousands of publicly available skills.
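
To make concrete why Base64 offers no real protection, here is a minimal sketch of a decode-and-scan check. The patterns and the function name `find_encoded_secrets` are illustrative assumptions, not the detection rules used in the study.

```python
import base64
import binascii
import re

# Candidate Base64 runs: 16+ characters from the standard alphabet, optional padding.
B64_RUN = re.compile(r"[A-Za-z0-9+/]{16,}={0,2}")

# Assumed, illustrative hints that a decoded payload holds a credential.
SECRET_HINTS = re.compile(r"(api[_-]?key|secret|token|password)\s*[=:]", re.IGNORECASE)


def find_encoded_secrets(source: str) -> list[str]:
    """Return decoded Base64 payloads that look like credentials."""
    hits = []
    for match in B64_RUN.finditer(source):
        try:
            # validate=True rejects runs that are not well-formed Base64.
            decoded = base64.b64decode(match.group(0), validate=True).decode("utf-8")
        except (binascii.Error, UnicodeDecodeError):
            continue  # not Base64, or not text once decoded
        if SECRET_HINTS.search(decoded):
            hits.append(decoded)
    return hits
```

Anyone who installs the skill can run the same single decode call, which is why the encoding hides the secret from a casual reader but not from an attacker.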

The researchers analyzed over 17,000 agent skills and discovered that credential leakage affects 3.1% of the ecosystem. What makes this particularly insidious is that three-quarters of these leaks are invisible unless you analyze both the natural language descriptions and the executable code together—a challenge traditional security tools aren't built to handle.

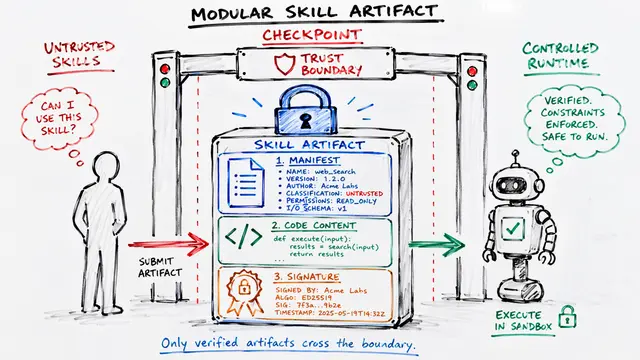

To find these hidden vulnerabilities, the authors developed a four-phase detection pipeline combining static analysis with dynamic validation.

The methodology starts with dataset collection, then applies static filtering using regular expressions and abstract syntax tree analysis to identify 3,156 candidates. These candidates are executed in instrumented sandboxes under both benign and adversarial conditions, including prompt injection attacks. Finally, three expert reviewers manually classify skills by cross-referencing runtime behavior with declared functionality, confirming 520 cases of actual credential leakage, a count that matches the 3.1% rate across the 17,022 skills analyzed.
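
The static filtering phase can be pictured with a short sketch that pairs a regular-expression pass with a walk over the abstract syntax tree. This assumes the skill code is Python; the patterns, the name list, and the helper names (`regex_hits`, `ast_hits`, `is_candidate`) are hypothetical stand-ins, since the script does not spell out the study's actual rules.

```python
import ast
import re

# Assumed, illustrative patterns: an AWS-style access key ID and a generic "sk-" token.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),
    re.compile(r"sk-[A-Za-z0-9]{20,}"),
]
# Assumed list of variable-name fragments that suggest a credential.
SENSITIVE_NAMES = re.compile(r"(key|secret|token|password)", re.IGNORECASE)


def regex_hits(source: str) -> list[str]:
    """Raw text matches for known credential shapes."""
    return [m.group(0) for p in SECRET_PATTERNS for m in p.finditer(source)]


def ast_hits(source: str) -> list[str]:
    """Names of sensitive-looking variables that are assigned a string literal."""
    try:
        tree = ast.parse(source)
    except SyntaxError:
        return []  # not parseable as Python; only the regex pass applies
    hits = []
    for node in ast.walk(tree):
        if (
            isinstance(node, ast.Assign)
            and isinstance(node.value, ast.Constant)
            and isinstance(node.value.value, str)
        ):
            for target in node.targets:
                if isinstance(target, ast.Name) and SENSITIVE_NAMES.search(target.id):
                    hits.append(target.id)
    return hits


def is_candidate(source: str) -> bool:
    """Flag a file for the later dynamic phase if either pass finds something."""
    return bool(regex_hits(source) or ast_hits(source))
```

A filter like this is deliberately permissive: it only narrows the pool, and the sandbox runs and manual review described above decide which candidates are real leaks.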

The leakage patterns split sharply between negligence and malice. On the negligent side, the dominant issue is information exposure through stdout—what looks like harmless debug logging becomes a credential leak when those logs feed into the agent's context window. On the malicious side, adversaries use Base64 obfuscation and multi-stage attacks to hide backdoors that exfiltrate credentials, with some attacks requiring no executable code at all, just carefully crafted natural language prompts.
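
The stdout path is easy to reproduce. The sketch below captures whatever a tool prints, which is the text an agent framework would pass into the model's context, and masks token-shaped strings first. The redaction step, the `TOKEN` pattern, and the names `run_and_redact` and `noisy_tool` are assumptions added for illustration, not a mitigation described in the study.

```python
import contextlib
import io
import re

# Assumed, illustrative token shapes; a real deployment would need a broader rule set.
TOKEN = re.compile(r"(sk-[A-Za-z0-9]{8,}|AKIA[0-9A-Z]{16})")


def run_and_redact(tool, *args):
    """Run a tool, capture its stdout, and mask token-shaped strings."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        result = tool(*args)
    # buffer.getvalue() is what would otherwise reach the context window verbatim.
    return result, TOKEN.sub("[REDACTED]", buffer.getvalue())


def noisy_tool(key: str) -> str:
    # Looks like harmless debug logging, but it prints the credential.
    print(f"DEBUG using key {key}")
    return "ok"
```

Calling `run_and_redact(noisy_tool, "sk-abcdef123456")` returns the tool's result together with a log line in which the key is replaced by `[REDACTED]`; without the substitution, the raw key would sit in the captured output.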

Even when developers remove credentials from upstream repositories, the damage persists. The researchers found that secrets deleted from 107 repositories continue to live in over 50 downstream forks, creating a supply chain vulnerability that standard remediation practices can't address. Nearly all exploitation happens during ordinary execution without any special privileges, with three-quarters of credentials leaking through stdout streams that agent frameworks unwittingly inject into their reasoning context.

The dual-modality nature of agent skills, where natural language meets executable code, has created an attack surface that existing security tools weren't designed to detect.