Spiking Neural Networks: Models and Applications

This presentation explores Spiking Neural Networks, computational models that process information through discrete spike events rather than continuous activations. We examine how SNNs achieve remarkable energy efficiency through event-driven computation, review key learning algorithms from probabilistic frameworks to surrogate gradients, and survey applications spanning classification, robotics, and associative memory. The talk highlights how biological principles inspire both theoretical advances and practical neuromorphic hardware implementations.

Script

Imagine a neural network that doesn't hum continuously but instead whispers in sharp, efficient bursts—only speaking when it has something urgent to say. Spiking Neural Networks transform computation by replacing constant activation with discrete spike events, unlocking extraordinary energy efficiency and biological realism.

Let's begin by understanding what makes these networks fundamentally different from their classical counterparts.

Classical networks operate with continuous, synchronized values, while Spiking Neural Networks communicate through discrete, timed events. This spike-based signaling enables temporal coding, where the precise timing of spikes carries information, fundamentally changing how computation unfolds.

These networks rest on neuron models that integrate incoming spikes into membrane potential, then fire when a threshold is crossed. The probabilistic GLM framework and deterministic integrate-and-fire variants provide mathematical rigor while preserving biological interpretability.
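To make the integrate-and-fire idea concrete, here is a minimal sketch of a discrete-time leaky integrate-and-fire neuron. The function name `simulate_lif` and the parameter values (`threshold`, `leak`, `v_reset`) are illustrative assumptions, not taken from any particular model in the talk.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Discrete-time leaky integrate-and-fire neuron (illustrative sketch).

    input_current: list of input values, one per time step.
    Returns (spikes, potentials), where spikes[t] is 1 if the neuron fired.
    All parameter values are assumptions chosen for the example.
    """
    v = v_reset
    spikes, potentials = [], []
    for current in input_current:
        # Leaky integration: the old potential decays, new input is added.
        v = leak * v + current
        if v >= threshold:
            # Threshold crossed: emit a spike and reset the potential.
            spikes.append(1)
            v = v_reset
        else:
            spikes.append(0)
        potentials.append(v)
    return spikes, potentials


# A constant input of 0.5 charges the neuron until it fires, then repeats.
spikes, potentials = simulate_lif([0.5] * 6)
print(spikes)  # [0, 0, 1, 0, 0, 1]
```

With a constant sub-threshold input, the potential builds over several steps before each spike, so stronger inputs produce spikes sooner and more often.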

With these foundations in place, how do we actually train these spike-based systems?

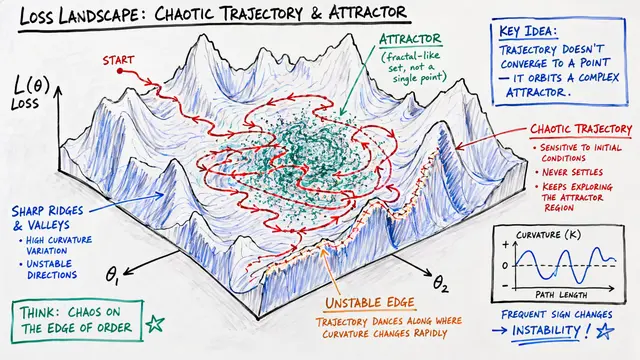

Training poses unique challenges because spikes are non-differentiable events. Researchers have developed surrogate gradient techniques that replace step functions with smooth approximations, biologically inspired plasticity rules like STDP, and conversion methods that map trained classical networks into the spiking domain, each offering different trade-offs between biological plausibility and performance.
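Two of these ideas can be sketched in a few lines. The example below pairs a hard threshold in the forward pass with a smooth "fast sigmoid" derivative as one possible surrogate, and adds a simple pair-based STDP weight update. The function names, the choice of surrogate shape, and all constants (`beta`, `a_plus`, `a_minus`, `tau`) are assumptions for illustration only.

```python
import math


def spike_forward(v, threshold=1.0):
    """Forward pass: a hard step, 1.0 if the potential reaches threshold."""
    return 1.0 if v >= threshold else 0.0


def surrogate_grad(v, threshold=1.0, beta=10.0):
    """Backward pass stand-in: derivative of a fast sigmoid.

    The true derivative of the step is zero almost everywhere, so this
    smooth bump (peaking at the threshold) is used in its place.
    """
    return 1.0 / (1.0 + beta * abs(v - threshold)) ** 2


def stdp_dw(dt, a_plus=0.01, a_minus=0.012, tau=20.0):
    """Pair-based STDP weight change for dt = t_post - t_pre (in ms).

    Pre-before-post (dt > 0) strengthens the synapse; post-before-pre
    (dt < 0) weakens it. Both effects decay exponentially with |dt|.
    """
    if dt > 0:
        return a_plus * math.exp(-dt / tau)
    if dt < 0:
        return -a_minus * math.exp(dt / tau)
    return 0.0


# The surrogate is largest near the threshold and fades away from it.
print(surrogate_grad(1.0), surrogate_grad(0.5))
# Causal spike pairs potentiate, anti-causal pairs depress.
print(stdp_dw(5.0), stdp_dw(-5.0))
```

The surrogate only changes how gradients are computed; the forward pass still emits binary spikes, while the STDP rule needs no gradient at all because it relies only on local spike timing.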

Connecting learning to function, SNNs encode information through spike rates or precise timing, supporting diverse applications. They've demonstrated competitive accuracy on image classification, excel at temporal sequence tasks, and implement associative memories with attractor dynamics—where partial patterns trigger complete recall through recurrent connections.
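The difference between rate and timing codes can be illustrated with a small sketch. Here an input intensity in the range 0 to 1 is turned into a spike train in two ways: by firing probability per step (rate coding) or by the time of a single spike (latency coding). The function names and the linear latency mapping are assumptions made for this example.

```python
import random


def rate_encode(intensity, n_steps, rng):
    """Rate coding: at each step, spike with probability `intensity`."""
    return [1 if rng.random() < intensity else 0 for _ in range(n_steps)]


def latency_encode(intensity, n_steps):
    """Latency coding: one spike, earlier for stronger inputs.

    Assumes intensity lies in [0, 1] and maps it linearly to a time step.
    """
    t = round((1.0 - intensity) * (n_steps - 1))
    train = [0] * n_steps
    train[t] = 1
    return train


rng = random.Random(0)  # fixed seed so the sketch is repeatable
bright = rate_encode(0.8, 1000, rng)
dim = rate_encode(0.2, 1000, rng)
print(sum(bright), sum(dim))  # the brighter input produces more spikes

print(latency_encode(1.0, 10))  # strongest input spikes at the first step
print(latency_encode(0.0, 10))  # weakest input spikes at the last step
```

Rate coding needs many steps to convey a value reliably, whereas latency coding conveys it with a single spike, which is one reason timing-based codes are attractive for sparse, low-energy operation.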

These computational properties translate directly into remarkable hardware advantages.

The payoff comes in hardware realization, where event-driven operation means computation occurs only when spikes arrive. Custom neuromorphic chips achieve 6- to 8-fold energy savings over classical architectures, and FPGA implementations deliver real-time inference measured in fractions of a millisecond. Meanwhile, sparse evolutionary learning maintains accuracy at just 10 percent connection density.
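Why event-driven operation saves work can be seen in a toy comparison. The sketch below computes the same layer update two ways: a dense pass that touches every weight, and an event-driven pass that touches only the weights of inputs that spiked. The operation counts are a rough proxy for effort, not a measurement of energy on any real chip, and the function names, weights, and spike pattern are made up for illustration.

```python
def dense_step(weights, spikes):
    """Dense update: every weight is multiplied, spike or not."""
    n_out = len(weights[0])
    out = [0.0] * n_out
    ops = 0
    for i, s in enumerate(spikes):
        for j in range(n_out):
            out[j] += weights[i][j] * s
            ops += 1
    return out, ops


def event_driven_step(weights, spikes):
    """Event-driven update: only inputs that spiked trigger any work."""
    n_out = len(weights[0])
    out = [0.0] * n_out
    ops = 0
    for i, s in enumerate(spikes):
        if s == 0:
            continue  # silent input: no computation at all
        for j in range(n_out):
            out[j] += weights[i][j]  # a spike is 1, so just accumulate
            ops += 1
    return out, ops


# Hypothetical layer: 10 inputs, 3 outputs, only 2 inputs spike.
weights = [[0.1 * (i + j) for j in range(3)] for i in range(10)]
spikes = [0, 0, 1, 0, 0, 0, 0, 1, 0, 0]

dense_out, dense_ops = dense_step(weights, spikes)
event_out, event_ops = event_driven_step(weights, spikes)
print(dense_ops, event_ops)  # 30 6
```

Both passes produce the same output, but the event-driven one scales with the number of spikes rather than the number of inputs, and it replaces multiplications with plain additions.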

Yet challenges remain in this maturing field. Recurrent connections enhance robustness against adversarial attacks, but a fundamental trade-off persists between biologically local learning rules and accuracy on complex benchmarks. Scaling these architectures to the depth of modern deep networks and developing universally efficient encoding schemes remain active frontiers, requiring coordinated algorithmic and hardware innovation.

Spiking Neural Networks bridge neuroscience and engineering, offering a computational paradigm where efficiency emerges from embracing the discrete, temporal nature of neural signaling. To explore the latest research and dive deeper into neuromorphic intelligence, visit EmergentMind.com.