SoftMatcha 2: Searching Trillions of Tokens in Milliseconds

This presentation explores SoftMatcha 2, a breakthrough algorithm for semantically-flexible pattern matching across trillion-scale text corpora. The authors address critical scalability challenges in corpus search by introducing disk-aware staged suffix arrays and dynamic corpus-aware pruning. Through theoretical analysis and extensive empirical validation across seven languages and datasets up to 1.4 trillion tokens, they demonstrate sub-second search latency while handling word substitutions, insertions, and deletions—capabilities that enable practical applications like benchmark contamination detection and training data analysis for large language models.Script

Imagine trying to find a needle in a haystack, except the haystack contains a trillion pieces of straw, and you're not entirely sure what the needle looks like. That's the challenge researchers face when searching massive language model training corpora for semantically similar patterns.

Building on that challenge, the authors identify fundamental limitations in how we search these massive datasets. Current approaches either match text exactly or struggle with semantic flexibility, leaving researchers unable to efficiently find patterns that are similar but not identical across trillion-token corpora.

The solution they propose reimagines corpus search from the ground up.

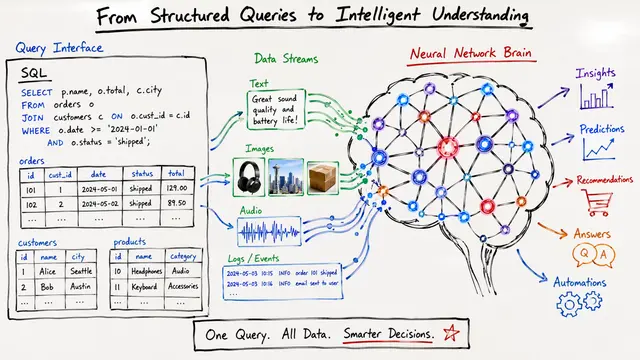

At its heart, SoftMatcha 2 reformulates search as finding token sequences with high semantic similarity using cosine distances between embeddings. This allows the algorithm to recognize that "olympics gold medalist" and "olympics silver medalist" are meaningfully related, capturing the kinds of variations that matter for understanding training data contamination and model behavior.

This visualization reveals how the algorithm balances exploration and efficiency. Starting from the query, it expands the search space to consider semantically similar alternatives, but the key innovation is in how aggressively it prunes unlikely candidates. Without this iterative pruning shown in blue versus the full gray-striped zone, the search space would explode exponentially, making trillion-scale search impossible.

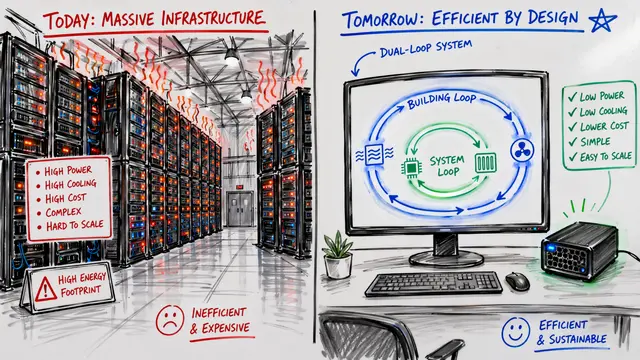

Now, what makes this work at scale comes down to two architectural innovations working in concert. The disk-aware suffix array solves the storage problem by keeping only a sparse index in memory while maintaining fast access to the full structure on disk. Meanwhile, dynamic pruning leverages the statistical reality that most word combinations simply don't occur in natural language, allowing the algorithm to safely discard vast portions of the search space based on corpus statistics.

The empirical results validate the theoretical foundations impressively. Across diverse corpora spanning multiple languages and reaching 1.4 trillion tokens, the system maintains consistently low latency. This isn't just incrementally better; it represents a 33-fold speedup over existing tools for exact search, while adding semantic flexibility that those tools simply cannot provide.

Perhaps the most compelling validation comes from applying this to a practical problem: detecting contamination in benchmark datasets. This breakdown shows how many test problems were flagged as potentially contaminated, with the critical insight being those samples shown beyond exact matches. The authors validated that 81% of these newly detected cases represent true contamination through semantic paraphrase or template leakage, precisely the kinds of subtle data issues that exact matching misses but that can significantly compromise evaluation integrity.

The authors are transparent about current limitations. The reliance on word-level embeddings means certain semantic equivalences remain out of reach, and the algorithmic guarantees rest on assumptions about corpus statistics that may not hold universally. Nevertheless, these constraints point toward clear research directions, particularly in incorporating more sophisticated compositional models while maintaining the efficiency that makes trillion-scale search tractable.

SoftMatcha 2 fundamentally changes what's computationally possible for corpus analysis at the scale of modern language model training, transforming semantic search from a theoretical ideal into a practical tool for understanding and auditing the data foundations of AI systems. Visit EmergentMind.com to explore more cutting-edge research.