SoftMatcha 2: A Fast and Soft Pattern Matcher for Trillion-Scale Corpora

This presentation explores SoftMatcha 2, a breakthrough algorithm for semantically flexible corpus search at trillion scale. The authors tackle a critical challenge: traditional exact-match methods fail to capture semantic variations, while naive semantic search explodes in complexity. SoftMatcha 2 combines disk-aware staged suffix arrays with dynamic corpus-aware pruning to achieve sub-second soft pattern matching across datasets exceeding 1.4 trillion tokens. We'll examine how the algorithm exploits natural language statistics to suppress exponential candidate growth, its impressive empirical results across seven languages, and its practical application to benchmark contamination detection, where it reveals hidden data leakage missed by exact matching.

Script

Imagine searching for a phrase across a trillion tokens in under a second, while capturing not just exact matches but semantic variations, synonyms, and paraphrases. Traditional exact-match algorithms break down at this scale, and naive semantic search creates an exponential explosion of candidates that's computationally intractable.

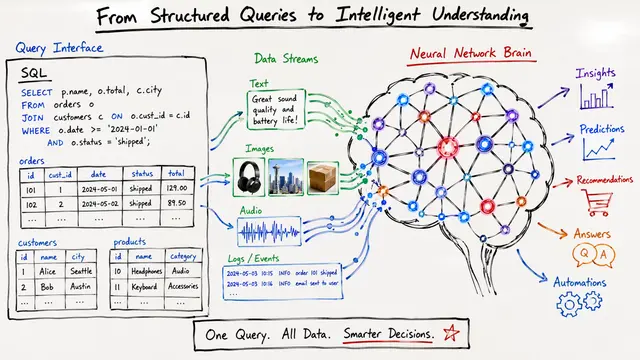

Let's start by understanding why existing search methods fall short at trillion scale.

Building on that challenge, traditional exact matching tools like suffix arrays completely miss semantically similar phrases. Meanwhile, inverted indexes struggle with the sheer scale of modern language model training corpora, and attempting to relax search semantically without careful design creates an exponential explosion of candidate matches that's impossible to process efficiently.

The authors introduce a clever solution that exploits fundamental statistical properties of language.

The algorithm introduces two synergistic innovations. First, a disk-aware staged suffix array architecture uses a sparse RAM-resident index to localize searches, ensuring each lookup requires only a single random disk access. Second, dynamic corpus-aware pruning exploits Zipfian power-law distributions in natural language, iteratively filtering candidate expansions against actual corpus occurrences to suppress exponential growth.
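To make the first idea concrete, here is a minimal in-memory sketch of a two-level lookup: a small sorted list of block-leading keys stands in for the RAM-resident sparse index, and a list of blocks stands in for the on-disk suffix array. The class name `StagedSuffixIndex`, the `max_len` and `block_size` parameters, and the read counter are all assumptions made for illustration. This is not the authors' implementation, and it only answers whether a pattern occurs, not where or how often.

```python
from bisect import bisect_left


class StagedSuffixIndex:
    """Toy two-level suffix index (hypothetical names, in-memory simulation)."""

    def __init__(self, tokens, max_len=4, block_size=4):
        # Sort every suffix, truncated to max_len tokens, as a tuple of tokens.
        suffixes = sorted(tuple(tokens[i:i + max_len]) for i in range(len(tokens)))
        self.max_len = max_len
        # "Disk": the full sorted array, cut into fixed-size blocks.
        self.disk_blocks = [suffixes[i:i + block_size]
                            for i in range(0, len(suffixes), block_size)]
        # "RAM": only the first key of each block (the sparse index).
        self.ram_keys = [block[0] for block in self.disk_blocks]
        self.disk_reads = 0

    def _read_block(self, block_id):
        # Stand-in for one random disk access.
        self.disk_reads += 1
        return self.disk_blocks[block_id]

    def contains(self, pattern):
        pattern = tuple(pattern)
        if not 0 < len(pattern) <= self.max_len:
            raise ValueError("pattern length must be between 1 and max_len")
        m = len(pattern)
        # First block whose leading key is >= pattern, found in RAM only.
        j = bisect_left(self.ram_keys, pattern)
        if j < len(self.ram_keys) and self.ram_keys[j][:m] == pattern:
            return True  # the block's leading key already matches
        if j == 0:
            return False  # pattern sorts before everything in the corpus
        # Any match must sit inside block j - 1, so one block read suffices.
        block = self._read_block(j - 1)
        i = bisect_left(block, pattern)
        return i < len(block) and block[i][:m] == pattern


corpus = "she won an olympic gold medal and he won an olympics silver medal".split()
index = StagedSuffixIndex(corpus)
print(index.contains(["gold", "medal"]), index.contains(["bronze", "medal"]))
```

The point of the sketch is that the binary search over `ram_keys` narrows the query to one block before any block is read, which is the property the single-random-access claim relies on. A real index would store byte offsets and read from disk rather than hold Python tuples in memory.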

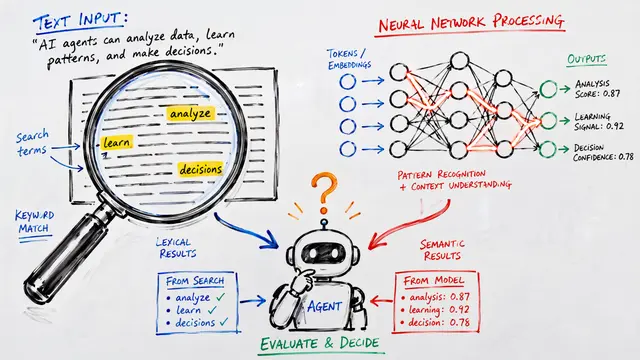

This visualization illustrates the pruning mechanism in action. When searching for "olympics gold medal," naive semantic expansion would require exploring the entire gray-striped zone plus the blue zone. The dynamic pruning strategy eliminates the gray regions by checking corpus occurrence at each expansion step, dramatically constraining the search space while preserving semantically relevant matches.
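The pruning step can be sketched in a few lines, under loud assumptions: a hand-written table of similar words replaces embedding-based similarity, and a Python set of corpus n-grams replaces the suffix-array existence check. The function names `build_ngram_set` and `soft_match` are hypothetical, and the toy corpus is invented for illustration.

```python
def build_ngram_set(tokens, max_len):
    """All n-grams up to max_len; a stand-in for a suffix-array existence check."""
    return {tuple(tokens[i:i + n])
            for n in range(1, max_len + 1)
            for i in range(len(tokens) - n + 1)}


def soft_match(query, similar, corpus_ngrams):
    """Expand the query one token at a time, dropping any candidate prefix
    that never occurs in the corpus before it can be expanded further."""
    candidates = [()]
    checked = 0
    for token in query:
        survivors = []
        for prefix in candidates:
            for alternative in similar.get(token, [token]):
                candidate = prefix + (alternative,)
                checked += 1
                if candidate in corpus_ngrams:  # corpus-aware pruning
                    survivors.append(candidate)
        candidates = survivors
    return candidates, checked


corpus = "she won an olympic gold medal and he won an olympics silver medal".split()
similar = {  # hypothetical similarity table, not learned embeddings
    "olympics": ["olympics", "olympic", "games"],
    "gold": ["gold", "silver", "bronze"],
    "medal": ["medal", "medals", "trophy"],
}
matches, checked = soft_match(["olympics", "gold", "medal"], similar,
                              build_ngram_set(corpus, 3))
print(matches, checked)
```

On this toy input, naive expansion would enumerate 3 × 3 × 3 = 27 full-length candidates, while the pruned search checks 15 shorter candidates and keeps two. The gap widens quickly with longer queries and larger similarity sets, because a prefix that is absent from the corpus is never extended.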

The empirical validation demonstrates remarkable efficiency at unprecedented scale.

Connecting these innovations to real-world performance, the authors evaluated SoftMatcha 2 on diverse corpora spanning up to 1.4 trillion tokens. They achieved 95th percentile soft-search latencies under 0.3 seconds, with exact search running 33 times faster than the infini-gram baseline. Critically, latency remained nearly constant even as corpus size increased by orders of magnitude.

Beyond raw performance, the authors applied SoftMatcha 2 to benchmark contamination detection, uncovering dirty test samples overlooked by exact matching. Of the newly identified samples, 81 percent were validated as true contamination involving semantic paraphrases or template variations like number substitutions, demonstrating that soft matching is essential for maintaining benchmark integrity in the age of trillion-token training corpora.
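A tiny example shows why exact matching misses a number-substituted template. Note the swap: this sketch masks numbers with a regular expression, which is a much cruder technique than the soft matching described here, and the function names and sample strings are invented for illustration.

```python
import re


def normalize_numbers(text):
    """Lowercase the text and replace every number with a placeholder."""
    return re.sub(r"\d+(?:\.\d+)?", "<num>", text.lower())


def exact_hit(problem, corpus_docs):
    """Case-insensitive exact substring check."""
    return any(problem.lower() in doc.lower() for doc in corpus_docs)


def template_hit(problem, corpus_docs):
    """Substring check after masking numbers on both sides."""
    key = normalize_numbers(problem)
    return any(key in normalize_numbers(doc) for doc in corpus_docs)


# Invented benchmark item and training document (not from the paper).
problem = "If Tom has 12 apples and eats 5, how many are left?"
docs = ["Practice: if Tom has 30 apples and eats 7, how many are left? Answer: 23."]
print(exact_hit(problem, docs), template_hit(problem, docs))
```

The exact check reports no overlap, while the masked check flags the shared template. Paraphrases with different wording would still slip past this regex approach, which is where similarity-based matching becomes necessary.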

This breakdown reveals the contamination detection results across multiple benchmark datasets. The stacked bars show how many dirty problems were flagged in each one. The critical insight is the proportion of samples detected only by soft search and invisible to exact matching, which validates the necessity of semantic flexibility for rigorous data curation.

SoftMatcha 2 establishes a new frontier for semantic corpus search, proving that trillion-scale soft matching is not only possible but practical, with profound implications for training data analysis, contamination detection, and understanding the behaviors of modern language models. To dive deeper into this work and explore other cutting-edge research, visit EmergentMind.com.