Secure Linear Alignment of Large Language Models

This presentation explores how independently trained large language models can be aligned using simple linear transformations to enable privacy-preserving inference and cross-model text generation. The work introduces HELIX, a protocol that achieves sub-second encrypted inference by exploiting the surprising geometric compatibility of modern language model representations, while maintaining strong cryptographic guarantees for client privacy.

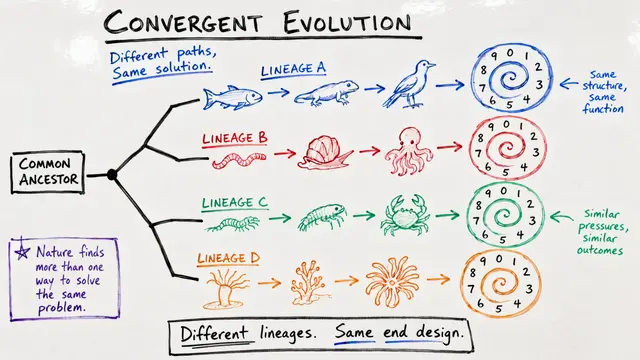

Modern language models, despite being trained independently by different organizations, are converging toward a shared geometric structure. This paper reveals that a simple linear transformation can align their hidden representations well enough to enable not just classification, but actual text generation across models, all while keeping queries encrypted.
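To make the idea of a linear alignment concrete, here is a minimal sketch that fits a map between two sets of hidden representations with ordinary least squares. The fitting method, the function name `fit_linear_map`, and the synthetic data are all assumptions for illustration; the source only says the transformation is linear, not how it is estimated.

```python
import numpy as np

def fit_linear_map(src, tgt):
    """Least-squares W such that src @ W approximates tgt.

    src: (n_samples, d_src) hidden states from model A
    tgt: (n_samples, d_tgt) hidden states from model B on the same inputs
    """
    W, *_ = np.linalg.lstsq(src, tgt, rcond=None)
    return W

# Synthetic stand-ins for real embeddings (hypothetical data, not from the paper).
rng = np.random.default_rng(0)
src = rng.normal(size=(500, 16))                 # "model A" embeddings
true_map = rng.normal(size=(16, 12))             # hidden linear relation
tgt = src @ true_map + 0.01 * rng.normal(size=(500, 12))  # "model B" embeddings

W = fit_linear_map(src, tgt)
rel_err = np.linalg.norm(src @ W - tgt) / np.linalg.norm(tgt)
print(f"relative alignment error: {rel_err:.4f}")
```

On real models the two embedding matrices would come from running both models on the same public text, and the residual error would indicate how much of the relationship a linear map fails to capture.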

The authors quantified this alignment rigorously. Using centered kernel alignment and other similarity measures across dozens of model pairs, they found robust shared structure, with scores consistently above 0.6 and often approaching 0.9. This isn't random overlap; it's evidence of a common latent geometry emerging from frontier-scale training.
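For readers who want to see what such a score measures, the sketch below computes linear centered kernel alignment between two representation matrices. The choice of the linear kernel is an assumption, since the source does not say which CKA variant was used, and the random matrices are placeholders rather than real model activations.

```python
import numpy as np

def linear_cka(X, Y):
    """Linear CKA between X (n, d1) and Y (n, d2); rows are the same inputs."""
    X = X - X.mean(axis=0)          # center each feature
    Y = Y - Y.mean(axis=0)
    cross = np.linalg.norm(Y.T @ X, "fro") ** 2
    norm_x = np.linalg.norm(X.T @ X, "fro")
    norm_y = np.linalg.norm(Y.T @ Y, "fro")
    return cross / (norm_x * norm_y)

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 32))
Q, _ = np.linalg.qr(rng.normal(size=(32, 32)))   # random orthogonal matrix
rotated = X @ Q                                  # same geometry, different basis
unrelated = rng.normal(size=(200, 32))           # independent representation

print("rotated copy:", linear_cka(X, rotated))
print("unrelated:   ", linear_cka(X, unrelated))
```

A rotated copy scores essentially 1 because linear CKA is invariant to orthogonal transformations, while independent random features score far lower. That contrast is what makes scores of 0.6 to 0.9 between separately trained models meaningful.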

That discovery enabled a practical privacy-preserving system.

HELIX implements two-party secure computation. The client encrypts embeddings from its private model and sends them to the provider, which computes an alignment map under encryption and then performs inference on the encrypted representations. The provider never sees plaintext queries or embeddings. Crucially, only the lightweight alignment and classification steps require encryption, not the full transformer stack, yielding sub-second latency and communication under 1 megabyte per query. Competing systems that encrypt entire transformers take tens of seconds and require gigabytes of communication.
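Why linear steps are cheap to run under encryption can be illustrated with a toy example. The sketch below uses a textbook Paillier scheme, which is additively homomorphic, to let a provider apply a plaintext integer matrix to an encrypted vector. This is a swapped-in illustration, not HELIX's actual cryptosystem (the source does not name one), the parameters are deliberately insecure, and every name here is hypothetical. It covers only applying a linear map to ciphertexts, not the full protocol.

```python
import math
import secrets

# Toy Paillier keypair. INSECURE: small, well-known Mersenne primes are used
# only so the example runs instantly. Not the scheme used by HELIX.
P, Q = 2**31 - 1, 2**61 - 1
N = P * Q                                            # public key
N2 = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)    # private: lcm(p-1, q-1)
MU = pow(LAM, -1, N)                                 # private: valid for g = N + 1

def encrypt(m):
    """Encrypt a signed integer using only the public key N."""
    r = secrets.randbelow(N - 1) + 1
    while math.gcd(r, N) != 1:
        r = secrets.randbelow(N - 1) + 1
    return (1 + (m % N) * N) * pow(r, N, N2) % N2

def decrypt(c):
    """Client side: recover a signed integer from a ciphertext."""
    m = (pow(c, LAM, N2) - 1) // N * MU % N
    return m - N if m > N // 2 else m

def encrypted_linear_map(W, enc_x):
    """Provider side: compute Enc(W @ x) from Enc(x) and integer weights W."""
    out = []
    for row in W:
        acc = encrypt(0)                             # fresh encryption of zero
        for w, c in zip(row, enc_x):
            # Multiplying ciphertexts adds plaintexts; exponentiation scales them.
            acc = acc * pow(c, w % N, N2) % N2
        out.append(acc)
    return out

SCALE = 1000                                         # fixed-point encoding of floats
x = [0.25, -1.5, 2.0]                                # client's private embedding
W = [[2, -1, 0], [1, 3, -2]]                         # provider's integer weights

enc_x = [encrypt(round(v * SCALE)) for v in x]       # client encrypts
enc_y = encrypted_linear_map(W, enc_x)               # provider never sees x
y = [decrypt(c) / SCALE for c in enc_y]              # client decrypts
print(y)  # [2.0, -8.25]
```

The provider performs only ciphertext multiplications and exponentiations, which is far less work than evaluating attention and nonlinearities under encryption. That is the intuition behind encrypting just the alignment and classification steps.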

Text generation across models is the headline result, but it's conditional. Across 34 model pairs, success required two things: vocabulary compatibility, measured by exact token match rate, which tracks generation quality with a Pearson correlation of 0.898, and sufficient model scale on both sides. When tokenizers diverge or the source model is too small, generation collapses. When conditions align, a linear map trained on public data lets one model's activations be decoded by another's head with surprising fluency.
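One plausible reading of exact token match rate is the fraction of one tokenizer's vocabulary entries that appear verbatim in the other's. The sketch below implements that reading. The definition, the function name, and the toy vocabularies are assumptions, since the source does not spell out the exact formula.

```python
def exact_token_match_rate(vocab_a, vocab_b):
    """Fraction of distinct tokens in vocab_a that also occur verbatim in vocab_b.

    Assumed definition for illustration; the paper's metric may differ
    (for example, it could be symmetric or weighted by token frequency).
    """
    a, b = set(vocab_a), set(vocab_b)
    if not a:
        return 0.0
    return len(a & b) / len(a)

# Toy vocabularies with different word-boundary conventions (hypothetical).
vocab_a = ["the", "ing", "▁cat", "##s"]
vocab_b = ["the", "ing", "Ġcat", "s"]

rate = exact_token_match_rate(vocab_a, vocab_b)
print(rate)  # 0.5
```

Tokens such as `▁cat` and `Ġcat` encode the same word but fail an exact match, which is how tokenizers built on different conventions end up with low compatibility even when their underlying vocabularies look similar.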

The protocol assumes semi-honest adversaries and prioritizes client privacy. Encrypted embeddings and outputs are all the provider ever sees. The alignment map retained by the client reveals only coarse geometric structure, with membership inference attacks bounded theoretically and empirically at negligible advantage. Compared to encrypting entire transformers, HELIX achieves orders-of-magnitude efficiency gains without sacrificing cryptographic security for the query.

Linear maps unlock modular, privacy-preserving AI: independently trained models can collaborate without sharing parameters or data, and in some cases, even generate text across boundaries once thought impassable.