Geometry Informed Tokenization of Molecules for Language Model Generation

This presentation explores Geo2Seq, a framework that transforms 3D molecular structures into discrete sequences that language models can process. By converting molecular geometries into rotation- and translation-invariant representations, the approach enables language models to generate chemically valid 3D molecules with control over quantum properties, outperforming diffusion-based methods in both validity and efficiency.

Script

Can you teach a language model to speak the language of molecules in three dimensions? Most language models excel at processing text, but molecular structures exist in 3D space with complex geometries that seem fundamentally incompatible with sequential processing.

Building on that tension, let's examine why existing approaches fall short.

The core challenge is that 3D molecular structures don't naturally fit the sequential format that language models require. Diffusion models have made progress on 3D generation, but they cannot draw on the capabilities of modern language models.

To bridge this gap, the authors introduce Geo2Seq.

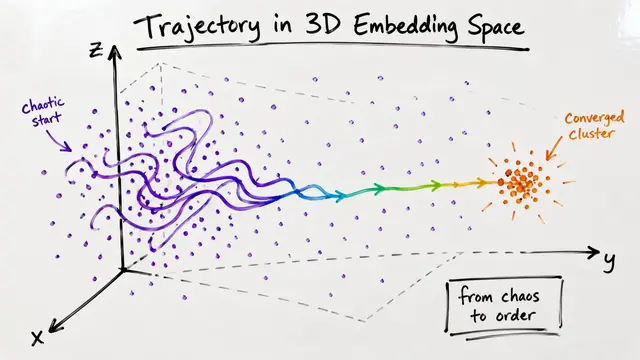

Geo2Seq solves this through two key innovations. First, it puts the atoms of each molecule into a canonical order, so the same molecule always produces the same sequence. Second, it expresses atomic positions as spherical coordinates that remain unchanged no matter how you rotate or move the molecule. The result is a faithful 1D sequence that language models can process.

This diagram illustrates the elegance of the approach: molecules are systematically ordered, each atom is represented by its type and spherical coordinates, and the entire structure gets concatenated into a single sequence. The spherical representation is the key to maintaining geometric integrity while achieving the invariance properties essential for reliable generation.
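To make the idea concrete, here is a minimal Python sketch of the two steps just described. It is an illustration under stated assumptions, not the authors' implementation. It assumes the atoms already arrive in canonical order, it builds a reference frame from the first atoms of the molecule (an assumed construction chosen for this example), and it rounds coordinates to two decimals to form tokens. The function names `local_frame`, `to_spherical`, and `to_sequence` are hypothetical.

```python
import numpy as np


def local_frame(pos):
    """Orthonormal frame built from the first atoms (assumed construction)."""
    origin = pos[0]
    x = pos[1] - origin
    x = x / np.linalg.norm(x)
    for p in pos[2:]:
        z = np.cross(x, p - origin)
        norm = np.linalg.norm(z)
        if norm > 1e-8:  # first atom not collinear with atoms 0 and 1
            z = z / norm
            break
    else:
        raise ValueError("all atoms are collinear; this sketch needs a 3D frame")
    y = np.cross(z, x)
    return origin, np.stack([x, y, z])  # rows are the frame axes


def to_spherical(pos):
    """Return (d, theta, phi) per atom, measured in the molecule's own frame."""
    pos = np.asarray(pos, dtype=float)
    origin, axes = local_frame(pos)
    local = (pos - origin) @ axes.T  # Cartesian coordinates in the local frame
    d = np.linalg.norm(local, axis=1)
    safe_d = np.where(d > 0, d, 1.0)  # avoid dividing by zero for the origin atom
    theta = np.where(d > 0, np.arccos(np.clip(local[:, 2] / safe_d, -1.0, 1.0)), 0.0)
    phi = np.where(d > 0, np.arctan2(local[:, 1], local[:, 0]), 0.0)
    return np.stack([d, theta, phi], axis=1)


def to_sequence(symbols, pos, decimals=2):
    """Concatenate atom type and rounded spherical coordinates into one string."""
    sph = np.round(to_spherical(pos), decimals) + 0.0  # "+ 0.0" turns -0.0 into 0.0
    tokens = []
    for symbol, (d, theta, phi) in zip(symbols, sph):
        tokens.append(symbol)
        tokens.extend(f"{v:.{decimals}f}" for v in (d, theta, phi))
    return " ".join(tokens)


if __name__ == "__main__":
    # Made-up coordinates for illustration only, not taken from any dataset.
    symbols = ["C", "O", "H", "H"]
    pos = np.array([[0.0, 0.0, 0.0],
                    [1.2, 0.3, -0.1],
                    [-0.4, 0.9, 0.2],
                    [-0.5, -0.6, 0.7]])
    print(to_sequence(symbols, pos))
```

Because every coordinate is measured relative to a frame defined by the molecule itself, rotating or translating the input leaves the printed sequence unchanged. The rounding step is what makes the vocabulary discrete, and the number of decimals trades precision against vocabulary size.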

So how well does this actually work in practice?

When tested on standard benchmarks, Geo2Seq coupled with the Mamba language model demonstrated impressive results. On QM9, it achieved better atom stability and molecular validity than competing methods, and this performance carried over to the more challenging GEOM-DRUGS dataset as well.

Perhaps most exciting is the controllable generation capability: the framework can generate molecules targeting specific quantum properties like polarizability, and it does so more effectively than diffusion approaches. This opens new doors for rational drug design and materials discovery.

While promising, the authors acknowledge that Geo2Seq is still in early stages. Broader validation across different molecular datasets and exploration of various language model architectures will help establish its full potential and boundaries.

Geo2Seq demonstrates that language models can indeed learn to speak the language of 3D molecules when given the right vocabulary. Visit EmergentMind.com to dive deeper into this bridge between natural language processing and molecular design.