Natural Gradient via Optimal Transport

This presentation explores a novel mathematical framework that connects optimal transport theory with information geometry to create a new approach for optimization in statistical machine learning. The authors define a Riemannian metric using the Wasserstein distance on discrete probability spaces, enabling gradient descent that respects the geometric structure of optimal transport. By incorporating graph structures that represent prior knowledge about sample spaces, this work offers an alternative to classical Fisher-Rao natural gradients with distinct geometric properties and optimization paths.Script

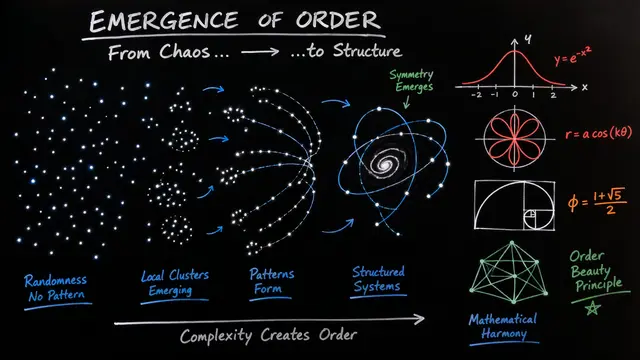

What if we could make gradient descent smarter by teaching it the geometry of how probability distributions move? This paper bridges two powerful mathematical frameworks, optimal transport and information geometry, to create a new way of optimizing statistical models.

Let's start with the challenge that motivated this work.

Building on that foundation, the authors ask how Wasserstein gradient descent, which captures meaningful distances through optimal transport, can be brought into the parameter spaces where we actually optimize models. The promise is an optimization method that respects both statistical structure and geometric intuition.

Here's how they construct this new geometric framework.

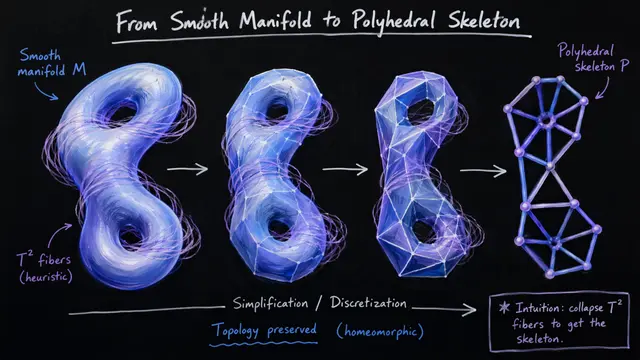

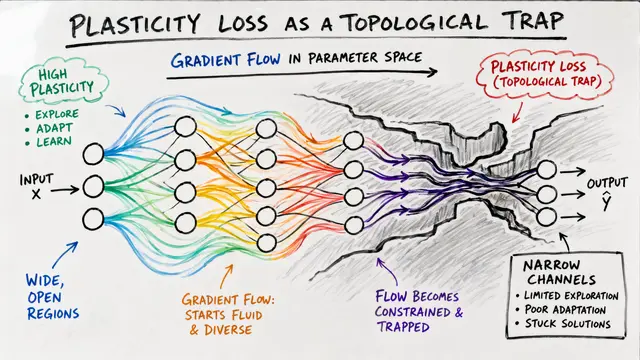

The key innovation is defining a Riemannian metric tensor directly from optimal transport. They represent discrete sample spaces as weighted graphs, where edge weights encode prior knowledge about how samples relate, then pull this geometric structure back through the parameterization to create gradient flows that follow geodesics in Wasserstein space.

This comparison highlights the trade-offs. While the Fisher-Rao metric has been the standard in natural gradient methods and offers computational efficiency, the Wasserstein approach brings in the ground metric, that prior knowledge about sample space structure, creating geometrically distinct optimization paths at the cost of additional computation.

Turning to implementation, the authors leverage graph theory machinery. The weighted Laplacian matrix becomes the key computational object, defining how gradients and divergences work on discrete spaces, while displacement convexity provides theoretical guarantees that the resulting optimization problems are well-behaved.

Now let's examine what the experiments reveal.

In experiments on hierarchical models with binary variables, the researchers found that graph structure matters deeply. The Wasserstein gradient takes qualitatively different paths compared to Fisher gradients, with the specific graph topology determining how probability mass flows during optimization.

The implications are significant for structured optimization problems where you have meaningful prior knowledge about how samples relate. However, computational cost remains a barrier, and the authors acknowledge that practical algorithms and adaptive learning rate strategies need further development before this approach can scale to large real-world problems.

This work opens a new geometric perspective on gradient-based optimization, showing how optimal transport can reshape the paths we take through parameter space. Visit EmergentMind.com to explore more cutting-edge research at the intersection of geometry and machine learning.