To Use or not to Use Muon: How Simplicity Bias in Optimizers Matters

This presentation examines the critical trade-offs between speed and inductive bias in optimizer design, focusing on Muon, a fast optimizer that eliminates the simplicity bias inherent to SGD. Through formal analysis in deep linear networks and controlled empirical studies, we reveal how Muon's spectral orthogonalization accelerates convergence but sacrifices structural generalization, representation sharing, and robustness to spurious correlations. The findings challenge the assumption that faster optimizers universally improve outcomes and demonstrate that optimizer-induced inductive biases fundamentally shape solution quality beyond wall-clock performance.

Faster training sounds like an obvious win. But what if the optimizer that gets you to convergence first is quietly dismantling the very structure your model needs to generalize? Muon promises speed, but the cost may be hidden in what it forgets to learn.

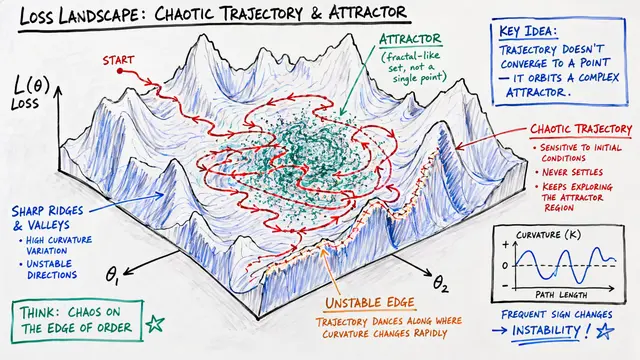

The authors identify a fundamental divergence in how optimizers shape learning. SGD incrementally builds representations, learning dominant patterns before subtle ones. Muon's spectral design abandons this sequential structure entirely, learning everything at once. The question becomes whether this acceleration comes at a hidden cost.

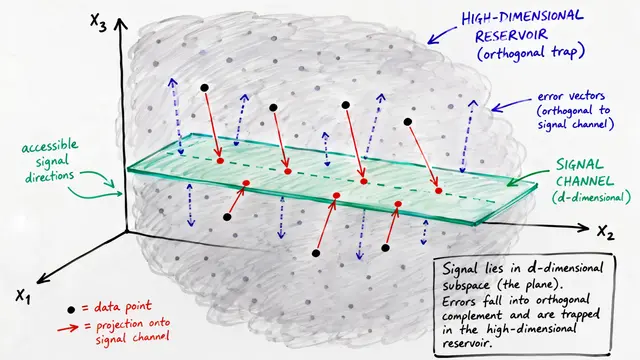

To understand this trade-off, the researchers turn to deep linear networks where the dynamics can be analyzed exactly.

The analysis of deep linear networks reveals the core mechanism. Under SGD, singular values are learned in order from largest to smallest, so the solution gains rank one step at a time: the dominant structure emerges first, then finer details. Muon and its theoretical abstraction, Spectral GD, instead learn all singular directions at once. Convergence is faster, but the implicit complexity control vanishes. The learning trajectory no longer respects the natural hierarchy of feature importance.
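To make the contrast concrete, here is a minimal sketch of a two-layer linear network trained toward a diagonal target, once with plain gradient descent and once with a spectral step. Everything in it is an illustrative assumption rather than the authors' setup: the target singular values, the aligned initialization `eps * I`, the learning rate, and the helper names `polar_factor`, `train`, and `snapshot_when_top_mode_half_learned`. The spectral step uses an exact SVD as a stand-in for the approximate orthogonalization Muon performs in practice, and it omits momentum.

```python
import numpy as np

def polar_factor(G):
    """Return U @ Vt from the SVD of G, i.e. G with every singular value set to 1."""
    U, _, Vt = np.linalg.svd(G, full_matrices=False)
    return U @ Vt

def train(spectral, target_svals=(5.0, 2.0, 0.5), lr=0.01, eps=0.01, steps=400):
    """Fit W2 @ W1 to diag(target_svals); return per-step learned fraction of each mode."""
    M = np.diag(target_svals)
    d = len(target_svals)
    # Assumption: small initialization aligned with the target's singular basis.
    W1 = eps * np.eye(d)
    W2 = eps * np.eye(d)
    history = []
    for _ in range(steps):
        E = W2 @ W1 - M          # residual of the loss 0.5 * ||W2 W1 - M||_F^2
        G1 = W2.T @ E            # gradient with respect to W1
        G2 = E @ W1.T            # gradient with respect to W2
        if spectral:
            # Spectral step: discard gradient magnitudes, keep only directions.
            G1, G2 = polar_factor(G1), polar_factor(G2)
        W1 = W1 - lr * G1
        W2 = W2 - lr * G2
        history.append(np.diag(W2 @ W1) / np.asarray(target_svals))
    return np.array(history)

def snapshot_when_top_mode_half_learned(history):
    """First step at which the largest mode reaches 50% of its target, plus all fractions."""
    step = int(np.argmax(history[:, 0] >= 0.5))
    return step, history[step]

if __name__ == "__main__":
    for name, spectral in [("gradient descent", False), ("spectral step", True)]:
        step, fractions = snapshot_when_top_mode_half_learned(train(spectral))
        print(name, "step", step, "learned fractions", np.round(fractions, 3))
```

With these settings, plain gradient descent should show the two smaller modes still close to zero at the moment the largest mode is half learned, while the spectral variant should show them essentially complete. That is the sequential versus simultaneous pattern described above, in the simplest case where it can be checked by hand.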

These theoretical predictions translate into measurable differences in real learning scenarios.

In a controlled routing task across 7 input and output domains, SGD discovers a compact, shared latent representation that generalizes to domain pairs never seen during training. Muon converges faster but memorizes each individual pair instead of abstracting the underlying structure. The learned representations under Muon exhibit high effective rank—a hallmark of memorization rather than principled compression. This directly contradicts the intuition that richer spectral distributions guarantee better generalization.
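The script does not say which definition of effective rank is used. A common choice, assumed here, is the exponential of the Shannon entropy of the normalized singular values. The function name `effective_rank` and the example matrices are illustrative only.

```python
import numpy as np

def effective_rank(matrix):
    """Entropy-based effective rank: exp(H(p)), where p is the normalized singular values.

    Assumption: this is one standard definition; the source may use a different one.
    """
    svals = np.linalg.svd(matrix, compute_uv=False)
    p = svals / svals.sum()
    p = p[p > 0]                      # treat 0 * log(0) as 0
    entropy = -np.sum(p * np.log(p))
    return float(np.exp(entropy))

if __name__ == "__main__":
    shared = np.outer(np.ones(7), np.arange(1.0, 8.0))   # one shared direction
    per_pair = np.eye(7)                                  # a separate direction per item
    print(effective_rank(shared), effective_rank(per_pair))
```

Under this definition, a representation that reuses one direction for everything scores near 1, while one that dedicates a separate direction to each item scores near the full dimension, which is the sense in which high effective rank signals memorization in the routing task.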

The authors test this on MNIST with class-specific spurious pixels added. SGD prioritizes the canonical digit features, turning to the spurious signal only late in training. Muon and Adam begin exploiting both signals immediately. Under moderate spurious correlation strength, SGD achieves higher peak accuracy on non-spurious test data, demonstrating stronger robustness. When spurious features dominate, however, the preferred solution flips: SGD's bias becomes a liability, while Muon's concurrent learning remains relatively stable.
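One way such a dataset could be built is sketched below. The details are assumptions, not the authors' recipe: the spurious cue is a single bright pixel in the top row whose column encodes a class, `strength` is the probability that the cue matches the true label, and the function name `add_spurious_pixels` is hypothetical. Random arrays stand in for MNIST images so the example runs without a download.

```python
import numpy as np

def add_spurious_pixels(images, labels, strength, rng, num_classes=10):
    """Return a copy of `images` with one class-coded pixel set in the top row.

    With probability `strength` the pixel column equals the true label;
    otherwise the column is drawn uniformly at random (assumed scheme).
    """
    images = images.copy()
    n = len(labels)
    aligned = rng.random(n) < strength
    random_cols = rng.integers(0, num_classes, size=n)
    cols = np.where(aligned, labels, random_cols)
    images[:, 0, :num_classes] = 0.0          # clear the region that carries the cue
    images[np.arange(n), 0, cols] = 1.0       # write the cue pixel
    return images, cols

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    fake_images = rng.random((1000, 28, 28))  # stand-in for MNIST digits
    fake_labels = rng.integers(0, 10, size=1000)
    _, cols = add_spurious_pixels(fake_images, fake_labels, strength=0.8, rng=rng)
    print("fraction of cues matching the label:", np.mean(cols == fake_labels))
```

Evaluating on a test set built with `strength=0.0`, where the cue carries no label information, is one way to measure how much a trained model relied on the shortcut.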

These results demand a shift in how we evaluate optimizers. Faster convergence on in-distribution metrics does not guarantee the inductive properties we actually need. For tasks that require discovering shared principles, resisting shortcuts, or generalizing beyond training conditions, simplicity bias is not a bug—it is a feature. The choice between SGD and Muon is not about efficiency, but about alignment between optimizer dynamics and task structure.

The path forward lies in optimizers that can flexibly modulate their inductive bias. Rather than choosing between speed and structure, future designs might selectively orthogonalize updates or inject staged complexity control. Meta-learning could dynamically switch between Muon and SGD-like dynamics based on dataset characteristics. Explicit regularization might restore simplicity bias to fast optimizers without forfeiting their convergence advantages.

Muon teaches us that the fastest path to low training loss is not always the right path to the solution we need. The choice of optimizer writes the rules of what can be learned, not just how quickly.